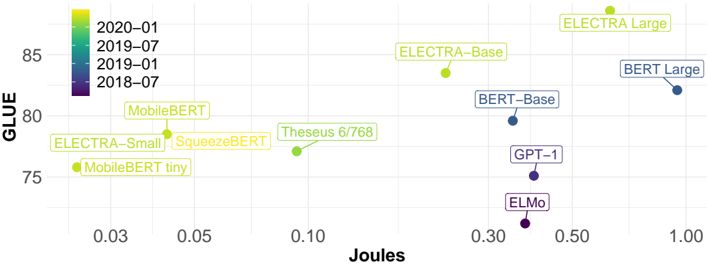

## Scatter Plot: GLUE Score vs. Joules for Various Language Models

### Overview

The image is a scatter plot comparing the GLUE (General Language Understanding Evaluation) score of various language models against their energy consumption in Joules. The plot visualizes the trade-off between model performance and energy efficiency. The data points are color-coded by the year and month of the model's release, ranging from 2018-07 to 2020-01.

### Components/Axes

* **X-axis:** Energy consumption in Joules, spanning 0.03 to 1.00 J, with tick labels at 0.03, 0.05, 0.10, 0.30, 0.50, and 1.00.

* **Y-axis:** GLUE (General Language Understanding Evaluation) score, with tick labels at 75, 80, and 85. The approximate readings for ELMo (74) and ELECTRA Large (87) fall just outside this labeled span.

* **Legend:** Located at the top-left corner, the legend indicates the color-coding scheme for the data points based on the year and month of the model's release:
    * 2020-01: Light Green
    * 2019-07: Green
    * 2019-01: Blue
    * 2018-07: Purple

### Detailed Analysis

Here's a breakdown of the data points, their approximate coordinates, and their corresponding release dates based on color:

| Model | Energy (J) | GLUE score | Color | Release date |
| --- | --- | --- | --- | --- |
| MobileBERT tiny | 0.03 | 75 | Light Green | 2020-01 |
| ELECTRA-Small | 0.03 | 78 | Light Green | 2020-01 |
| SqueezeBERT | 0.05 | 78 | Light Green | 2020-01 |
| MobileBERT | 0.05 | 80 | Light Green | 2020-01 |
| Theseus 6/768 | 0.10 | 77 | Green | 2019-07 |
| ELECTRA-Base | 0.30 | 84 | Light Green | 2020-01 |
| BERT-Base | 0.30 | 80 | Blue | 2019-01 |
| GPT-1 | 0.30 | 77 | Purple | 2018-07 |
| ELMo | 0.30 | 74 | Purple | 2018-07 |
| ELECTRA Large | 0.50 | 87 | Light Green | 2020-01 |
| BERT Large | 1.00 | 82 | Blue | 2019-01 |

**Trend Verification:**

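* The most recent models (2020-01, Light Green) span the widest range on both axes, from MobileBERT tiny (75 at 0.03 J) to ELECTRA Large (87 at 0.50 J), and include the highest-scoring model overall.

* The earliest models (2018-07, Purple), GPT-1 and ELMo, have the lowest GLUE scores (77 and 74) even though they sit at 0.30 J, six to ten times the energy of the smallest 2020-01 models.

* GLUE score generally rises with energy consumption, but with clear exceptions: GPT-1 and ELMo (0.30 J) score below MobileBERT (80 at 0.05 J), and BERT Large, the most energy-hungry model (1.00 J), scores below both ELECTRA-Base and ELECTRA Large.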
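
The trend claims above can be checked numerically. The sketch below is an illustrative assumption, not the plot's source data: it hard-codes the approximate readings listed above as a `POINTS` dictionary, groups them by release cohort, and computes Spearman's rank correlation between energy and GLUE score, assigning tied values their average rank. `POINTS`, `average_ranks`, `spearman`, and `cohort_summary` are hypothetical names written for this example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical data: approximate (joules, GLUE, release cohort) readings
# transcribed from the scatter plot, not published measurements.
POINTS = {
    "MobileBERT tiny": (0.03, 75, "2020-01"),
    "ELECTRA-Small": (0.03, 78, "2020-01"),
    "SqueezeBERT": (0.05, 78, "2020-01"),
    "MobileBERT": (0.05, 80, "2020-01"),
    "Theseus 6/768": (0.10, 77, "2019-07"),
    "ELECTRA-Base": (0.30, 84, "2020-01"),
    "BERT-Base": (0.30, 80, "2019-01"),
    "GPT-1": (0.30, 77, "2018-07"),
    "ELMo": (0.30, 74, "2018-07"),
    "ELECTRA Large": (0.50, 87, "2020-01"),
    "BERT Large": (1.00, 82, "2019-01"),
}


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(xs, ys):
    """Spearman's rho, computed as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


def cohort_summary(points):
    """Map each cohort to (count, mean GLUE, min joules, max joules)."""
    groups = defaultdict(list)
    for joules, glue, cohort in points.values():
        groups[cohort].append((joules, glue))
    return {
        cohort: (len(rows), mean(g for _, g in rows),
                 min(j for j, _ in rows), max(j for j, _ in rows))
        for cohort, rows in sorted(groups.items())
    }


if __name__ == "__main__":
    for cohort, (n, mean_glue, lo, hi) in cohort_summary(POINTS).items():
        print(f"{cohort}: n={n}, mean GLUE={mean_glue:.1f}, energy {lo:.2f}-{hi:.2f} J")
    energies = [p[0] for p in POINTS.values()]
    glues = [p[1] for p in POINTS.values()]
    print(f"Spearman rho (energy vs GLUE): {spearman(energies, glues):.2f}")
```

With these readings the 2018-07 cohort averages 75.5 against about 80.3 for 2020-01, while the two 2019-01 BERT models average 81.0, so cohort means do not rise strictly with release date. Rho comes out near 0.49: a positive but moderate association, consistent with a general upward trend that has exceptions. A rank correlation is used because it depends only on the ordering of values, so the result is the same whether energy is read on a linear or a logarithmic scale.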

### Key Observations

* **Energy Efficiency:** MobileBERT tiny, ELECTRA-Small, and SqueezeBERT (0.03 to 0.05 J) score 75 to 78, comparable to or above GPT-1 (77) and ELMo (74) at 0.30 J. MobileBERT reaches 80, matching BERT-Base at about one sixth of its energy.

* **Performance Leaders:** ELECTRA Large achieves the highest GLUE score (about 87) at a moderate 0.50 J, half the energy of BERT Large (1.00 J, about 82).

* **Trade-off:** Within a model family, higher GLUE scores cost more energy: ELECTRA-Small, ELECTRA-Base, and ELECTRA Large score about 78, 84, and 87 at 0.03, 0.30, and 0.50 J. Some models prioritize energy efficiency, while others prioritize performance.

* **Temporal Trend:** At matched energy, newer models score higher: at 0.30 J, ELMo and GPT-1 (2018-07) reach 74 and 77, BERT-Base (2019-01) reaches 80, and ELECTRA-Base (2020-01) reaches 84. This suggests advances in language model architectures and training techniques.
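
One way to make the trade-off concrete is a Pareto frontier: the models that no other model beats on both axes at once (lower or equal energy and higher or equal GLUE, strictly better on at least one). The minimal sketch below assumes the approximate readings listed above; it sorts by energy and keeps each model that improves on the best score seen so far. `POINTS` and `pareto_frontier` are hypothetical names, and exact duplicate points would keep only the first.

```python
# Hypothetical data: approximate (joules, GLUE) readings from the scatter plot.
POINTS = {
    "MobileBERT tiny": (0.03, 75),
    "ELECTRA-Small": (0.03, 78),
    "SqueezeBERT": (0.05, 78),
    "MobileBERT": (0.05, 80),
    "Theseus 6/768": (0.10, 77),
    "ELECTRA-Base": (0.30, 84),
    "BERT-Base": (0.30, 80),
    "GPT-1": (0.30, 77),
    "ELMo": (0.30, 74),
    "ELECTRA Large": (0.50, 87),
    "BERT Large": (1.00, 82),
}


def pareto_frontier(points):
    """Return names of models not dominated on (lower joules, higher GLUE)."""
    # Sort by energy ascending; at equal energy, the higher score comes first.
    ordered = sorted(points.items(), key=lambda kv: (kv[1][0], -kv[1][1]))
    frontier, best_glue = [], float("-inf")
    for name, (_joules, glue) in ordered:
        # A model survives only if it beats every cheaper-or-equal model.
        if glue > best_glue:
            frontier.append(name)
            best_glue = glue
    return frontier


if __name__ == "__main__":
    for name in pareto_frontier(POINTS):
        joules, glue = POINTS[name]
        print(f"{name}: {joules:.2f} J, GLUE {glue}")
```

For these readings the frontier is ELECTRA-Small, MobileBERT, ELECTRA-Base, and ELECTRA Large. Every other model is matched or beaten on both axes by one of them; for instance, SqueezeBERT ties ELECTRA-Small's score of 78 while using more energy.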

### Interpretation

The scatter plot traces the evolution of language models from 2018-07 to 2020-01 and shows progress on both axes. Newer models reach higher GLUE scores at the same or lower energy: ELECTRA-Base scores about 84 at the same 0.30 J as BERT-Base (80), and ELECTRA Large scores about 87 at half the energy of BERT Large (82). Higher scores still cost energy within a cohort, however: among the 2020-01 models, the top scorers (ELECTRA-Base and ELECTRA Large) are also the most energy-intensive.

The plot highlights the importance of considering both performance and energy efficiency when selecting a language model for a specific application. Models like MobileBERT tiny and ELECTRA-Small offer a good balance between performance and energy consumption, making them suitable for resource-constrained environments. On the other hand, models like ELECTRA Large prioritize performance and may be preferred for applications where accuracy is paramount, even if it means higher energy consumption.

The plot also shows that no single model is best on both axes, so the optimal choice depends on the application's accuracy target and energy budget. It narrows that choice as well: some models are beaten on both axes by another (for example, ELECTRA-Base scores 84 at 0.30 J, while BERT Large scores 82 at 1.00 J), so they are rarely the right pick for any budget.
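
The choice described above can be framed as two simple queries over the same approximate readings: the highest-scoring model within an energy budget, and the cheapest model that meets a GLUE target. The sketch below assumes those readings are accurate enough to rank models; `POINTS`, `best_under_budget`, and `cheapest_for_target` are hypothetical names, and ties are broken by model name purely for determinism.

```python
from typing import Optional

# Hypothetical data: approximate (joules, GLUE) readings from the scatter plot.
POINTS = {
    "MobileBERT tiny": (0.03, 75),
    "ELECTRA-Small": (0.03, 78),
    "SqueezeBERT": (0.05, 78),
    "MobileBERT": (0.05, 80),
    "Theseus 6/768": (0.10, 77),
    "ELECTRA-Base": (0.30, 84),
    "BERT-Base": (0.30, 80),
    "GPT-1": (0.30, 77),
    "ELMo": (0.30, 74),
    "ELECTRA Large": (0.50, 87),
    "BERT Large": (1.00, 82),
}


def best_under_budget(points, budget_joules: float) -> Optional[str]:
    """Return the highest-GLUE model using at most `budget_joules`, or None."""
    affordable = [(glue, name) for name, (joules, glue) in points.items()
                  if joules <= budget_joules]
    # max() compares GLUE first; equal scores fall back to the model name.
    return max(affordable)[1] if affordable else None


def cheapest_for_target(points, min_glue: float) -> Optional[str]:
    """Return the lowest-energy model scoring at least `min_glue`, or None."""
    eligible = [(joules, name) for name, (joules, glue) in points.items()
                if glue >= min_glue]
    # min() compares energy first; equal energies fall back to the model name.
    return min(eligible)[1] if eligible else None


if __name__ == "__main__":
    for budget in (0.05, 0.30, 1.00):
        print(f"budget {budget:.2f} J -> {best_under_budget(POINTS, budget)}")
    for target in (80, 85):
        print(f"GLUE >= {target} -> {cheapest_for_target(POINTS, target)}")
```

For example, a 0.05 J budget selects MobileBERT (80), a 0.30 J budget selects ELECTRA-Base (84), and a GLUE target of 85 requires ELECTRA Large at 0.50 J.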