## Calibration Plot: Chameleon+ Model

### Overview

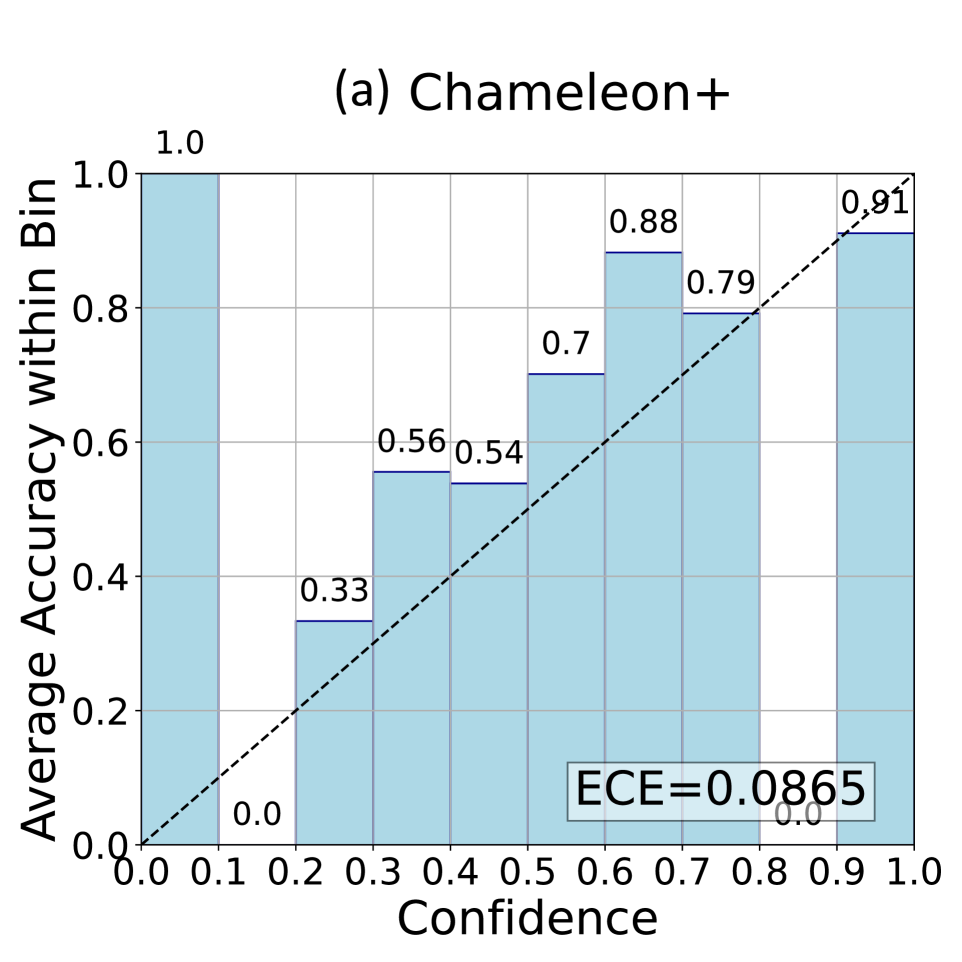

The image displays a calibration plot (also known as a reliability diagram) for a model or system named "Chameleon+". This type of chart evaluates how well a model's predicted confidence scores align with its actual accuracy. The plot consists of a bar chart overlaid with a diagonal reference line and a text box displaying the Expected Calibration Error (ECE).

### Components/Axes

* **Title:** "(a) Chameleon+" is centered at the top of the chart.

* **X-Axis:**

    * **Label:** "Confidence" (centered below the axis).

    * **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at every 0.1 interval (0.0, 0.1, 0.2, ..., 1.0).

* **Y-Axis:**

    * **Label:** "Average Accuracy within Bin" (rotated 90 degrees, positioned to the left of the axis).

    * **Scale:** Linear scale from 0.0 to 1.0, with major tick marks at every 0.2 interval (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **Data Series (Bars):** Ten light blue vertical bars, each representing a "bin" of confidence scores. The width of each bar spans a 0.1 confidence interval (e.g., 0.0-0.1, 0.1-0.2, etc.).

* **Reference Line:** A black dashed diagonal line running from the origin (0.0, 0.0) to the top-right corner (1.0, 1.0). This represents perfect calibration, where confidence equals accuracy.

* **Legend/Annotation:** A rectangular text box located in the bottom-right quadrant of the chart area (approximately spanning x=0.6 to 0.9, y=0.05 to 0.15). It contains the text "ECE=0.0865".

### Detailed Analysis

The chart plots the average accuracy of the model for predictions falling within specific confidence bins. The numerical value above each bar indicates the precise average accuracy for that bin.

**Data Points (Confidence Bin → Average Accuracy):**

1. **Bin 0.0-0.1:** Accuracy = 1.0

2. **Bin 0.1-0.2:** Accuracy = 0.0

3. **Bin 0.2-0.3:** Accuracy = 0.33

4. **Bin 0.3-0.4:** Accuracy = 0.56

5. **Bin 0.4-0.5:** Accuracy = 0.54

6. **Bin 0.5-0.6:** Accuracy = 0.7

7. **Bin 0.6-0.7:** Accuracy = 0.88

8. **Bin 0.7-0.8:** Accuracy = 0.79

9. **Bin 0.8-0.9:** Accuracy = 0.91

10. **Bin 0.9-1.0:** Accuracy = 0.91 (The bar height matches the previous bin, and the label is partially obscured by the reference line but reads 0.91).

**Trend Verification:**

* **General Trend:** The bars show a rough, non-monotonic upward trend. As confidence increases from 0.2 to 1.0, accuracy generally increases, but with notable fluctuations.

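* **Deviations from Perfect Calibration (Dashed Line):** Per-bin mean confidence is not shown in the plot, so the comparisons below use each bin's midpoint as an approximation.

    * **Under-confidence:** Accuracy exceeds confidence in most bins: 0.0-0.1 (accuracy 1.0 > confidence ~0.05), 0.2-0.3 (0.33 > ~0.25), 0.3-0.4 (0.56 > ~0.35), 0.4-0.5 (0.54 > ~0.45), 0.5-0.6 (0.7 > ~0.55), 0.6-0.7 (0.88 > ~0.65), and 0.8-0.9 (0.91 > ~0.85).

    * **Over-confidence:** The model is clearly over-confident only in the 0.1-0.2 bin (accuracy 0.0 < confidence ~0.15), and marginally in the 0.9-1.0 bin (accuracy 0.91 < confidence ~0.95).

    * **Near Calibration:** The 0.7-0.8 bin (accuracy 0.79 vs. confidence ~0.75) and the 0.9-1.0 bin (accuracy 0.91 vs. confidence ~0.95) lie closest to the diagonal, each within about 0.04.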
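The per-bin comparison against the diagonal can be sketched in a few lines of Python. This is an illustrative check, not part of the original figure: the accuracies are the bar labels listed earlier, each bin's midpoint is an assumed stand-in for its mean confidence (the plot does not report that value), and the `tolerance` threshold and function names are hypothetical choices.

```python
# Per-bin accuracies as read from the bar labels; bin i covers [i/10, (i+1)/10).
ACCURACIES = [1.0, 0.0, 0.33, 0.56, 0.54, 0.7, 0.88, 0.79, 0.91, 0.91]


def classify_bins(accs, tolerance=0.05):
    """Label each bin, using its midpoint as an ASSUMED proxy for mean confidence."""
    rows = []
    n = len(accs)
    for i, acc in enumerate(accs):
        midpoint = (i + 0.5) / n
        gap = acc - midpoint  # positive: accuracy exceeds confidence
        if abs(gap) <= tolerance:
            label = "near-calibrated"
        elif gap > 0:
            label = "under-confident"
        else:
            label = "over-confident"
        rows.append((round(midpoint, 2), acc, round(gap, 2), label))
    return rows


def find_dips(accs):
    """Return indices of bins whose accuracy is lower than the preceding bin's."""
    return [i for i in range(1, len(accs)) if accs[i] < accs[i - 1]]


rows = classify_bins(ACCURACIES)
for midpoint, acc, gap, label in rows:
    print(f"{midpoint:.2f}  acc={acc:.2f}  gap={gap:+.2f}  {label}")

# Unweighted mean of the absolute gaps (every bin counted equally).
mean_abs_gap = sum(abs(gap) for _, _, gap, _ in rows) / len(rows)
print(f"unweighted mean |gap| = {mean_abs_gap:.2f}")
print("dips at bins:", find_dips(ACCURACIES))
```

Under the midpoint assumption, the unweighted mean gap comes out at about 0.20, well above the reported ECE of 0.0865. Since ECE weights each bin by its sample share, this difference suggests that most predictions fall in the better-calibrated bins, although the figure itself does not show the bin counts.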

### Key Observations

1. **Extreme Outliers:** The first two bins show extreme behavior: the 0.0-0.1 bin has perfect accuracy (1.0), while the 0.1-0.2 bin has zero accuracy (0.0). Accuracies of exactly 1.0 and 0.0 are typical of bins that contain very few predictions, and the low overall ECE (0.0865) is consistent with these bins carrying little weight. The plot does not show per-bin sample counts, so this cannot be confirmed from the figure alone.

2. **Non-Monotonicity:** Accuracy does not increase smoothly with confidence. Beyond the drop from 1.0 to 0.0 between the first two bins, there are dips at the 0.4-0.5 bin (accuracy drops from 0.56 to 0.54) and the 0.7-0.8 bin (accuracy drops from 0.88 to 0.79).

3. **High-End Plateau:** The model's accuracy plateaus at 0.91 for the two highest confidence bins (0.8-0.9 and 0.9-1.0), indicating it does not achieve perfect accuracy even when most confident.

4. **Calibration Error:** The Expected Calibration Error (ECE) is reported as 0.0865. ECE is a scalar metric: the absolute difference between average confidence and accuracy is computed for each bin, and these differences are averaged with each bin weighted by its share of the samples. A value of 0 corresponds to perfect calibration.
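As a rough illustration of the ECE definition, the sketch below computes it from raw predictions using equal-width bins. It is an assumption-laden example, not the procedure used to produce the figure: the function name, the bin-edge convention (left-closed bins, with a confidence of exactly 1.0 placed in the last bin), and the toy data are all hypothetical. The reported value of 0.0865 cannot be reproduced from the figure alone, because the per-bin sample counts are not shown.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE with equal-width bins: sum over bins of (n_b / N) * |acc_b - conf_b|."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    total = len(confidences)
    if total == 0:
        raise ValueError("at least one prediction is required")

    # Per bin: [sum of confidences, number of correct predictions, sample count].
    bins = [[0.0, 0, 0] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes to the last bin
        bins[idx][0] += conf
        bins[idx][1] += int(hit)
        bins[idx][2] += 1

    ece = 0.0
    for conf_sum, hits, count in bins:
        if count == 0:
            continue  # empty bins contribute nothing
        avg_conf = conf_sum / count
        accuracy = hits / count
        ece += (count / total) * abs(accuracy - avg_conf)
    return ece


# Hypothetical toy data: four predictions at 0.95 confidence, two at 0.55.
toy_confidences = [0.95, 0.95, 0.95, 0.95, 0.55, 0.55]
toy_correct = [1, 1, 1, 0, 1, 0]
toy_ece = expected_calibration_error(toy_confidences, toy_correct)
print(f"toy ECE = {toy_ece:.4f}")
```

In the toy data, the 0.9-1.0 bin has accuracy 0.75 against confidence 0.95 (gap 0.20, weight 4/6) and the 0.5-0.6 bin has accuracy 0.50 against confidence 0.55 (gap 0.05, weight 2/6), which shows how a heavily populated bin dominates the final value.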

### Interpretation

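This calibration plot provides a diagnostic view of the Chameleon+ model's predictive reliability. The data suggest that the model is **not perfectly calibrated**: an ECE of 0.0865 means that confidence and accuracy differ by roughly 8.7 percentage points on a sample-weighted average, so its confidence scores are only approximate proxies for the true probability of being correct.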

* **What the data demonstrates:** Most bars sit above the diagonal, so the model is predominantly under-confident; the one clear exception is the 0.1-0.2 bin, where it is over-confident. The large deviations in the lowest confidence bins (0.0-0.2) are particularly noteworthy and could indicate issues with how the model generates or is trained on low-confidence predictions, or simply that these bins contain very few samples. The general upward trend, despite fluctuations, shows that higher confidence is *somewhat* associated with higher accuracy, which is a necessary but insufficient condition for good calibration.

* **Relationship between elements:** The bars show the empirical reality (actual accuracy), while the dashed line shows the ideal (perfect calibration). The gap between them visualizes the miscalibration. The ECE value quantifies this gap into a single number for model comparison.

* **Implications:** For applications where confidence scores drive decisions (e.g., selective prediction, risk assessment), this model's outputs should be interpreted with caution or recalibrated using techniques such as temperature scaling or isotonic regression. The 0.1-0.2 confidence range warrants a closer look: every prediction in that bin was wrong, although the figure does not show how many predictions the bin contains. The plateau at 0.91 accuracy suggests that, on this evaluation set, even the model's most confident predictions are wrong about 9% of the time.
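To make the recalibration option more concrete, here is a minimal sketch of temperature scaling in plain Python. It rests on several assumptions that the figure does not confirm: that the model exposes per-class logits, that a held-out validation set with labels is available, and that a simple grid search is an acceptable stand-in for the gradient-based optimizer usually used to fit the temperature. All function names and the toy data are hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Softmax over logits divided by a temperature (T < 1 sharpens, T > 1 flattens)."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    norm = sum(exps)
    return [e / norm for e in exps]


def mean_nll(logit_rows, labels, temperature):
    """Average negative log-likelihood of the true labels at a given temperature."""
    total = 0.0
    for logits, label in zip(logit_rows, labels):
        total -= math.log(softmax(logits, temperature)[label])
    return total / len(labels)


def fit_temperature(logit_rows, labels, candidates=None):
    """Pick the candidate temperature with the lowest validation NLL (grid search)."""
    if candidates is None:
        candidates = [round(0.25 + 0.05 * k, 2) for k in range(96)]  # 0.25 .. 5.00
    return min(candidates, key=lambda t: mean_nll(logit_rows, labels, t))


# Hypothetical under-confident validation set: the model assigns about 0.73 to
# class 0 on every example, yet class 0 is correct 9 times out of 10.
toy_logits = [[1.0, 0.0] for _ in range(10)]
toy_labels = [0] * 9 + [1]

fitted_t = fit_temperature(toy_logits, toy_labels)
before = softmax(toy_logits[0])[0]
after = softmax(toy_logits[0], fitted_t)[0]
print(f"T = {fitted_t:.2f}, confidence {before:.2f} -> {after:.2f}")
```

Because a single temperature rescales all confidences in the same direction, it can correct a uniform bias such as the under-confidence in the toy data, but it cannot repair bin-specific irregularities like the non-monotonic dips; isotonic regression is the more flexible of the two options named above.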