## Dual-Axis Line Chart: Model Training Metrics (R² Value vs. Information Gain)

### Overview

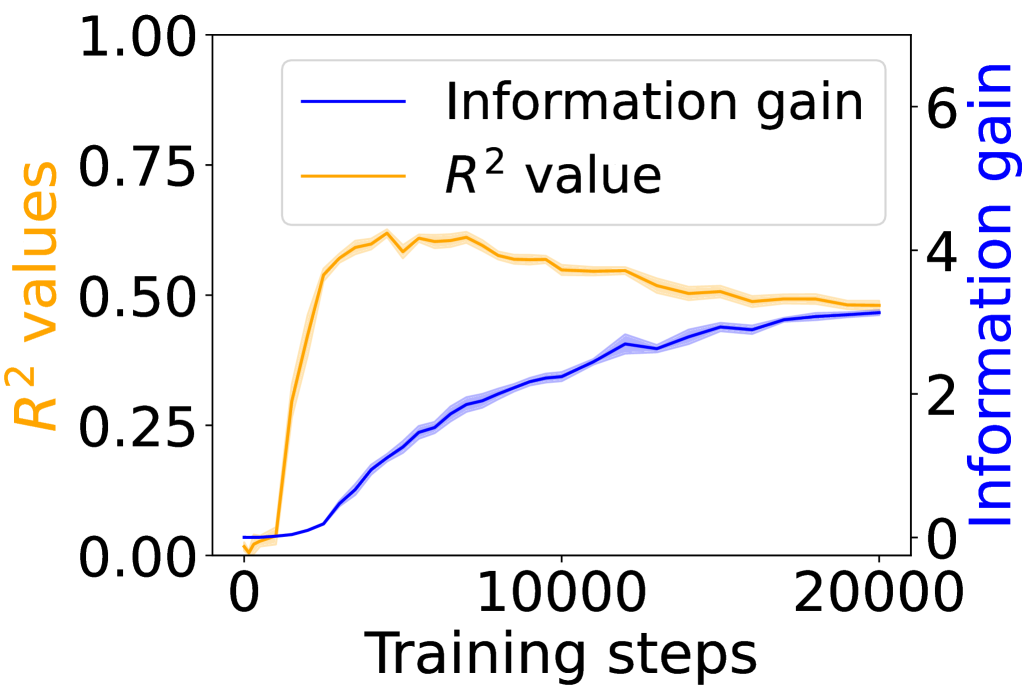

This image is a dual-axis line chart that plots two metrics against the number of training steps for a machine learning model. It contrasts the trends of the model's explanatory power (R² value) and the information it gains during training.

### Components/Axes

* **X-Axis (Bottom):** Labeled "Training steps". The scale runs from 0 to 20,000, with major tick marks at 0, 10,000, and 20,000.

* **Primary Y-Axis (Left):** Labeled "R² values" in orange text. The scale runs from 0.00 to 1.00, with major tick marks at 0.00, 0.25, 0.50, 0.75, and 1.00.

* **Secondary Y-Axis (Right):** Labeled "Information gain" in blue text. The scale runs from 0 to 6, with major tick marks at 0, 2, 4, and 6.

* **Legend:** Positioned in the top-left quadrant of the chart area. It contains two entries:

  * A blue line labeled "Information gain".

  * An orange line labeled "R² value".

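* **Data Series:**

  1. **R² value (Orange Line):** This line represents the coefficient of determination, R² = 1 − SS_res / SS_tot, i.e. the fraction of the variance in the target that the model's predictions explain. The chart does not state whether R² is computed on training or held-out data.

  2. **Information gain (Blue Line):** This line represents the reduction in uncertainty (entropy) achieved by the model, tracked as training progresses. The chart does not specify the exact definition or units of this metric.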
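For reference, both metrics can be computed as below. This is a minimal Python sketch under stated assumptions: the chart does not say how its "Information gain" is defined, so the entropy-reduction formula H(Y) − H(Y | G) over discrete labels is an assumption, and the function names `r_squared`, `entropy`, and `information_gain` are hypothetical.

```python
import math
from collections import Counter


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    ss_tot = sum((y - mean_y) ** 2 for y in y_true)
    return 1.0 - ss_res / ss_tot


def entropy(labels):
    """Shannon entropy, in bits, of a sequence of discrete labels."""
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in Counter(labels).values())


def information_gain(labels, groups):
    """Assumed definition: H(Y) - H(Y | G), the entropy removed by grouping labels."""
    n = len(labels)
    by_group = {}
    for y, g in zip(labels, groups):
        by_group.setdefault(g, []).append(y)
    # Conditional entropy: size-weighted average entropy inside each group.
    conditional = sum(len(ys) / n * entropy(ys) for ys in by_group.values())
    return entropy(labels) - conditional


# Predicting the mean for every point explains none of the variance.
print(r_squared([1.0, 2.0, 3.0, 4.0], [2.5] * 4))           # 0.0
# A grouping that perfectly separates two equally likely labels removes 1 bit.
print(information_gain(["a", "a", "b", "b"], [0, 0, 1, 1]))  # 1.0
```

Note that `r_squared` has no lower bound: predictions worse than the mean produce a negative value, whereas `information_gain` is measured in bits and is capped only by the entropy of the labels.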

### Detailed Analysis

**Trend Verification & Data Points:**

* **R² Value (Orange Line):**

  * **Visual Trend:** The line starts near 0, rises very steeply in the initial phase, peaks, and then begins a gradual, steady decline.

  * **Approximate Data Points:**

    * At ~0 steps: R² ≈ 0.00

    * At ~2,500 steps: R² ≈ 0.55 (steep ascent)

    * At ~5,000 steps: R² ≈ 0.60 (approaching peak)

    * At ~7,500 steps: R² ≈ 0.62 (peak region, with minor fluctuations)

    * At ~10,000 steps: R² ≈ 0.58

    * At ~15,000 steps: R² ≈ 0.52

    * At ~20,000 steps: R² ≈ 0.50

* **Information Gain (Blue Line):**

  * **Visual Trend:** The line starts near 0 and rises monotonically throughout the training steps shown, but its rate of increase slows markedly: roughly +1.5 over the first 5,000 steps versus only about +0.2 over the last 5,000.

  * **Approximate Data Points:**

    * At ~0 steps: Information Gain ≈ 0.0

    * At ~5,000 steps: Information Gain ≈ 1.5

    * At ~10,000 steps: Information Gain ≈ 2.5

    * At ~15,000 steps: Information Gain ≈ 3.0

    * At ~20,000 steps: Information Gain ≈ 3.2
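The trends above can be checked against the approximate readings. This Python sketch only re-tabulates the values estimated from the chart (visual estimates, not the underlying training logs) and computes the R² peak and the Information Gain added in each 5,000-step window.

```python
# Approximate readings estimated from the chart (training step -> value).
r2_points = {0: 0.00, 2_500: 0.55, 5_000: 0.60, 7_500: 0.62,
             10_000: 0.58, 15_000: 0.52, 20_000: 0.50}
ig_points = {0: 0.0, 5_000: 1.5, 10_000: 2.5, 15_000: 3.0, 20_000: 3.2}

# Step with the highest R², and how far R² falls from that peak by the last step.
peak_step = max(r2_points, key=r2_points.get)
drop_from_peak = round(r2_points[peak_step] - r2_points[20_000], 2)

# Information Gain added in each consecutive 5,000-step window.
ig_steps = sorted(ig_points)
ig_increments = [round(ig_points[b] - ig_points[a], 2)
                 for a, b in zip(ig_steps, ig_steps[1:])]

print(peak_step, drop_from_peak)  # 7500 0.12
print(ig_increments)              # [1.5, 1.0, 0.5, 0.2]
```

The shrinking increments show why the blue line is monotonic but decelerating: each window adds less than the one before.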

**Spatial Grounding:** The legend is clearly placed in the top-left, away from the data lines. The orange R² line is consistently plotted against the left axis, and the blue Information Gain line is consistently plotted against the right axis, as confirmed by the axis label colors matching the line colors.

### Key Observations

1. **Divergent Trends:** The two metrics show a clear divergence after the initial training phase. While Information Gain keeps increasing (though at a decreasing rate), the R² value peaks early (around 5,000-7,500 steps) and then begins to degrade.

2. **Peak Performance:** The highest R² (the best fit on whichever data R² is evaluated on) occurs relatively early in the training process shown, around 7,500 steps.

3. **Continuous Learning:** The model continues to gain information (reduce uncertainty) even as R² declines, suggesting it is learning more complex patterns or potentially starting to overfit. The overfitting reading requires R² to be measured on held-out data, since memorizing the training set would normally raise, not lower, training R².

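4. **Scale Difference:** R² is bounded above by 1 (it becomes negative only when predictions are worse than always predicting the mean) and stays within [0, 1] in this plot, while the Information Gain metric is not confined to [0, 1] and reaches about 3.2 by the end of the plotted steps.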
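The divergence in observation 1 can be located mechanically by comparing the two series at the steps where both have an approximate reading. This is a sketch using the chart estimates only; an interval counts as diverging when R² falls while Information Gain rises.

```python
# Approximate readings at the steps where both series were estimated.
r2 = {0: 0.00, 5_000: 0.60, 10_000: 0.58, 15_000: 0.52, 20_000: 0.50}
ig = {0: 0.0, 5_000: 1.5, 10_000: 2.5, 15_000: 3.0, 20_000: 3.2}

# Shared steps in increasing order.
steps = sorted(r2.keys() & ig.keys())

# Intervals where R² decreases while Information Gain increases.
diverging = [
    (start, end)
    for start, end in zip(steps, steps[1:])
    if r2[end] < r2[start] and ig[end] > ig[start]
]
print(diverging)  # [(5000, 10000), (10000, 15000), (15000, 20000)]
```

Every interval after 5,000 steps qualifies, which matches the visual impression that the curves part ways once the R² peak region is reached.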

### Interpretation

This chart likely illustrates a common phenomenon in machine learning training dynamics. The initial rapid rise in R² indicates the model is quickly learning the dominant patterns in the data. The subsequent peak and decline in R², while Information Gain continues to rise, suggests a few possibilities:

* **Overfitting:** The model may be starting to memorize noise or specific examples in the training data rather than generalizing. This would increase its "information" about the training set (hence rising Information Gain) while reducing its ability to explain variance on unseen data (lower R²). This explanation holds only if R² is measured on held-out data; overfitting typically raises R² on the training set itself.

* **Learning Complexity:** The model might be moving from learning simple, dominant patterns that account for most of the variance (which boost R² quickly) to learning more subtle, complex features. These features add information but may do little to reduce, or may even increase, the squared error on which R² is based.

* **Metric Sensitivity:** It highlights that different metrics capture different aspects of model performance. R² measures goodness-of-fit, while Information Gain measures knowledge acquisition. A model can become more "knowledgeable" without necessarily becoming a better predictor in the R² sense.

The key takeaway is that monitoring multiple metrics is crucial. Relying solely on R² might lead to early stopping at the peak (~7,500 steps), while the Information Gain metric suggests the model is still actively learning beyond that point. The practitioner must decide based on the goal: optimal predictive fit (consider stopping earlier) versus maximal information extraction (continue training).
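The early-stopping trade-off can be sketched with a patience rule, a common convention that is an assumption here rather than something the chart specifies: training stops once `patience` consecutive evaluations fail to beat the best R² seen so far. `early_stopping` is a hypothetical helper, and applying it to R² is only meaningful if R² is measured on held-out data.

```python
def early_stopping(steps, scores, patience):
    """Return (best_step, stop_step) for a higher-is-better metric.

    Hypothetical helper: stops once `patience` consecutive evaluations
    fail to improve on the best score seen so far.
    """
    best_step, best_score = steps[0], scores[0]
    waited = 0
    for step, score in zip(steps[1:], scores[1:]):
        if score > best_score:
            best_step, best_score = step, score
            waited = 0
        else:
            waited += 1
            if waited >= patience:
                return best_step, step
    # Patience never ran out: training reached the last evaluation.
    return best_step, steps[-1]


# Approximate R² readings from the chart.
steps = [0, 2_500, 5_000, 7_500, 10_000, 15_000, 20_000]
scores = [0.00, 0.55, 0.60, 0.62, 0.58, 0.52, 0.50]

print(early_stopping(steps, scores, patience=2))  # (7500, 15000)
```

Because the readings are unevenly spaced (2,500 steps early, 5,000 later), `patience` counts evaluations rather than training steps; in practice the checkpoint saved at `best_step` would be restored after stopping.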