TECHNICAL ASSET FINGERPRINT

31a658f58cd375db2b4d1272

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

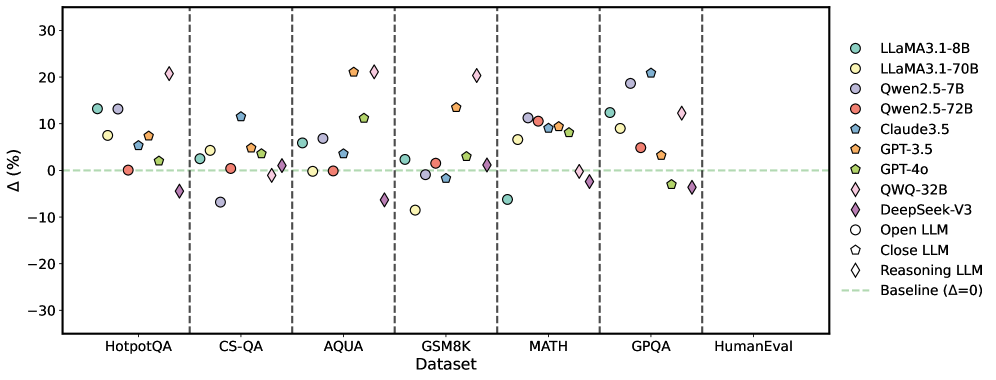

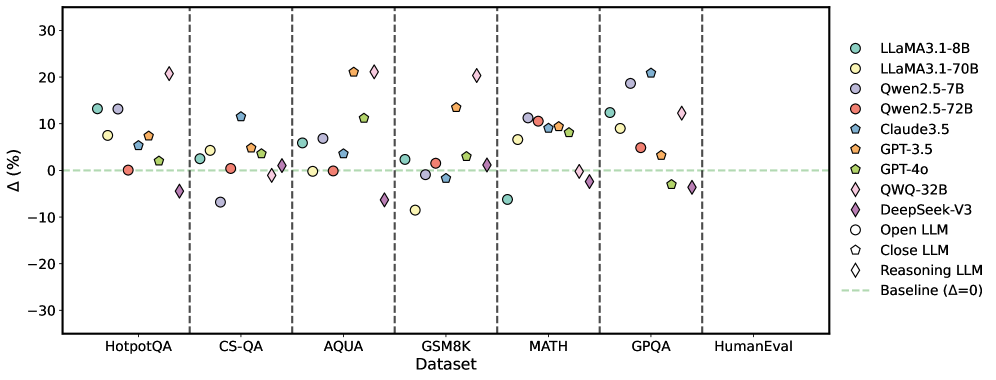

## Scatter Plot: LLM Performance on Various Datasets

### Overview

The image is a scatter plot comparing the performance of various Large Language Models (LLMs) across different datasets. The y-axis represents the percentage difference (Δ) from a baseline, and the x-axis represents the datasets. Each LLM is represented by a unique color and marker.

### Components/Axes

* **X-axis:** Datasets: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval

* **Y-axis:** Δ (%) - Percentage difference, ranging from -30 to 30, with increments of 10.

* **Legend (Right Side):**

* Light Green Circle: LLaMA3.1-8B

* Yellow Circle: LLaMA3.1-70B

* Light Purple Circle: Qwen2.5-7B

* Red Circle: Qwen2.5-72B

* Blue Pentagon: Claude3.5

* Orange Pentagon: GPT-3.5

* Green Pentagon: GPT-4o

* Yellow Diamond: QWQ-32B

* Purple Diamond: DeepSeek-V3

* White Circle: Open LLM

* Black Circle: Close LLM

* White Diamond: Reasoning LLM

* Light Green Dashed Line: Baseline (Δ=0)

* Vertical dashed lines separate the datasets.

### Detailed Analysis

Here's a breakdown of the approximate performance of each LLM on each dataset:

* **Baseline:** Represented by a light green dashed line at Δ = 0.

* **HotpotQA:**

* LLaMA3.1-8B (Light Green Circle): ~7%

* LLaMA3.1-70B (Yellow Circle): ~8%

* Qwen2.5-7B (Light Purple Circle): ~13%

* Qwen2.5-72B (Red Circle): ~1%

* Claude3.5 (Blue Pentagon): ~12%

* GPT-3.5 (Orange Pentagon): ~10%

* GPT-4o (Green Pentagon): ~7%

* QWQ-32B (Yellow Diamond): ~-5%

* DeepSeek-V3 (Purple Diamond): ~-7%

* Open LLM (White Circle): ~-3%

* Close LLM (Black Circle): ~-25%

* Reasoning LLM (White Diamond): ~-2%

* **CS-QA:**

* LLaMA3.1-8B (Light Green Circle): ~-7%

* LLaMA3.1-70B (Yellow Circle): ~-3%

* Qwen2.5-7B (Light Purple Circle): ~-7%

* Qwen2.5-72B (Red Circle): ~-1%

* Claude3.5 (Blue Pentagon): ~3%

* GPT-3.5 (Orange Pentagon): ~1%

* GPT-4o (Green Pentagon): ~-1%

* QWQ-32B (Yellow Diamond): ~-1%

* DeepSeek-V3 (Purple Diamond): ~-7%

* Open LLM (White Circle): ~-1%

* Close LLM (Black Circle): ~-1%

* Reasoning LLM (White Diamond): ~-1%

* **AQUA:**

* LLaMA3.1-8B (Light Green Circle): ~3%

* LLaMA3.1-70B (Yellow Circle): ~3%

* Qwen2.5-7B (Light Purple Circle): ~4%

* Qwen2.5-72B (Red Circle): ~0%

* Claude3.5 (Blue Pentagon): ~4%

* GPT-3.5 (Orange Pentagon): ~2%

* GPT-4o (Green Pentagon): ~3%

* QWQ-32B (Yellow Diamond): ~0%

* DeepSeek-V3 (Purple Diamond): ~-1%

* Open LLM (White Circle): ~0%

* Close LLM (Black Circle): ~0%

* Reasoning LLM (White Diamond): ~0%

* **GSM8K:**

* LLaMA3.1-8B (Light Green Circle): ~3%

* LLaMA3.1-70B (Yellow Circle): ~-7%

* Qwen2.5-7B (Light Purple Circle): ~3%

* Qwen2.5-72B (Red Circle): ~2%

* Claude3.5 (Blue Pentagon): ~5%

* GPT-3.5 (Orange Pentagon): ~15%

* GPT-4o (Green Pentagon): ~2%

* QWQ-32B (Yellow Diamond): ~-8%

* DeepSeek-V3 (Purple Diamond): ~-1%

* Open LLM (White Circle): ~-1%

* Close LLM (Black Circle): ~-1%

* Reasoning LLM (White Diamond): ~-1%

* **MATH:**

* LLaMA3.1-8B (Light Green Circle): ~9%

* LLaMA3.1-70B (Yellow Circle): ~10%

* Qwen2.5-7B (Light Purple Circle): ~10%

* Qwen2.5-72B (Red Circle): ~1%

* Claude3.5 (Blue Pentagon): ~9%

* GPT-3.5 (Orange Pentagon): ~10%

* GPT-4o (Green Pentagon): ~10%

* QWQ-32B (Yellow Diamond): ~-1%

* DeepSeek-V3 (Purple Diamond): ~-5%

* Open LLM (White Circle): ~-1%

* Close LLM (Black Circle): ~-1%

* Reasoning LLM (White Diamond): ~-1%

* **GPQA:**

* LLaMA3.1-8B (Light Green Circle): ~20%

* LLaMA3.1-70B (Yellow Circle): ~5%

* Qwen2.5-7B (Light Purple Circle): ~21%

* Qwen2.5-72B (Red Circle): ~0%

* Claude3.5 (Blue Pentagon): ~11%

* GPT-3.5 (Orange Pentagon): ~5%

* GPT-4o (Green Pentagon): ~2%

* QWQ-32B (Yellow Diamond): ~-1%

* DeepSeek-V3 (Purple Diamond): ~-4%

* Open LLM (White Circle): ~-1%

* Close LLM (Black Circle): ~-1%

* Reasoning LLM (White Diamond): ~-1%

* **HumanEval:**

* LLaMA3.1-8B (Light Green Circle): ~10%

* LLaMA3.1-70B (Yellow Circle): ~10%

* Qwen2.5-7B (Light Purple Circle): ~10%

* Qwen2.5-72B (Red Circle): ~0%

* Claude3.5 (Blue Pentagon): ~10%

* GPT-3.5 (Orange Pentagon): ~10%

* GPT-4o (Green Pentagon): ~10%

* QWQ-32B (Yellow Diamond): ~-1%

* DeepSeek-V3 (Purple Diamond): ~-1%

* Open LLM (White Circle): ~-1%

* Close LLM (Black Circle): ~-1%

* Reasoning LLM (White Diamond): ~-1%

### Key Observations

* Performance varies widely across datasets, from roughly -25% to roughly +21% relative to the baseline.

* Five entries (QWQ-32B, DeepSeek-V3, Open LLM, Close LLM, Reasoning LLM) never rise above the baseline on any dataset.

* LLaMA3.1-8B, LLaMA3.1-70B, Qwen2.5-7B, Claude3.5, GPT-3.5, and GPT-4o generally perform better, with variations depending on the dataset.

* Close LLM has the lowest value in the plot, roughly -25% on HotpotQA.
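The observations above can be checked against the approximate values listed in the Detailed Analysis. The following is a minimal sketch, assuming those visual estimates are taken at face value; the names `DELTAS`, `never_above_baseline`, and `lowest_point` are illustrative and not part of the source.

```python
# Approximate Δ (%) values transcribed from the Detailed Analysis list,
# one value per dataset in DATASETS order. They are visual estimates
# read off the plot, not measured results.
DATASETS = ["HotpotQA", "CS-QA", "AQUA", "GSM8K", "MATH", "GPQA", "HumanEval"]

DELTAS = {
    "LLaMA3.1-8B":   [7, -7, 3, 3, 9, 20, 10],
    "LLaMA3.1-70B":  [8, -3, 3, -7, 10, 5, 10],
    "Qwen2.5-7B":    [13, -7, 4, 3, 10, 21, 10],
    "Qwen2.5-72B":   [1, -1, 0, 2, 1, 0, 0],
    "Claude3.5":     [12, 3, 4, 5, 9, 11, 10],
    "GPT-3.5":       [10, 1, 2, 15, 10, 5, 10],
    "GPT-4o":        [7, -1, 3, 2, 10, 2, 10],
    "QWQ-32B":       [-5, -1, 0, -8, -1, -1, -1],
    "DeepSeek-V3":   [-7, -7, -1, -1, -5, -4, -1],
    "Open LLM":      [-3, -1, 0, -1, -1, -1, -1],
    "Close LLM":     [-25, -1, 0, -1, -1, -1, -1],
    "Reasoning LLM": [-2, -1, 0, -1, -1, -1, -1],
}


def never_above_baseline(deltas):
    """Return the entries whose Δ is <= 0 on every dataset."""
    return [name for name, values in deltas.items() if max(values) <= 0]


def lowest_point(deltas, datasets):
    """Return (entry, dataset, value) for the single lowest Δ."""
    triples = (
        (name, dataset, value)
        for name, values in deltas.items()
        for dataset, value in zip(datasets, values)
    )
    return min(triples, key=lambda triple: triple[2])


if __name__ == "__main__":
    print(never_above_baseline(DELTAS))
    print(lowest_point(DELTAS, DATASETS))
```

With these estimates, the entries that never exceed the baseline are exactly the five named in the observations, and the lowest point is Close LLM on HotpotQA.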

### Interpretation

The scatter plot visualizes the relative performance of various LLMs on different benchmark datasets. The percentage difference from the baseline (Δ) allows for a direct comparison of how much better or worse each model performs. The plot highlights the strengths and weaknesses of each model across different types of tasks represented by the datasets. For example, some models excel in mathematical reasoning (MATH dataset) while others perform better on question answering tasks (HotpotQA). The consistent underperformance of certain models suggests potential areas for improvement in their architecture or training data. The plot provides valuable insights for model selection and further research in the field of natural language processing.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Scatter Plot: Performance Comparison of Large Language Models

### Overview

This scatter plot compares the performance of various Large Language Models (LLMs) across seven different datasets. The y-axis represents the performance difference (Δ) in percentage points relative to a baseline, while the x-axis lists the datasets used for evaluation. Each point on the plot represents the performance of a specific LLM on a specific dataset. Vertical dashed lines separate the datasets.

### Components/Axes

* **X-axis:** Dataset - with the following categories: HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval.

* **Y-axis:** Δ (%) - Performance difference in percentage points. The scale ranges from approximately -30% to 30%.

* **Legend:** Located in the top-right corner, identifies each LLM with a corresponding color and marker shape. The LLMs are:

* LLaMA3.1-8B (Light Blue Circle)

* LLaMA3.1-70B (Light Orange Circle)

* Qwen2.5-7B (Dark Blue Circle)

* Qwen2.5-72B (Red Circle)

* Claude3.5 (Teal Square)

* GPT-3.5 (Orange Triangle)

* GPT-4o (Dark Orange Triangle)

* QWQ-32B (Purple Diamond)

* DeepSeek-V3 (Magenta Diamond)

* Open LLM (Grey Circle)

* Close LLM (Light Grey Hexagon)

* Reasoning LLM (Light Blue Diamond)

* Baseline (Δ=0) (Yellow Star)

### Detailed Analysis

The data points are scattered across the plot, indicating varying performance levels for each LLM on each dataset.

* **HotpotQA:**

* LLaMA3.1-8B: Approximately 5%

* LLaMA3.1-70B: Approximately 8%

* Qwen2.5-7B: Approximately 2%

* Qwen2.5-72B: Approximately 4%

* Claude3.5: Approximately 8%

* GPT-3.5: Approximately 6%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 18%

* DeepSeek-V3: Approximately 10%

* Open LLM: Approximately 2%

* Close LLM: Approximately 0%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **CS-QA:**

* LLaMA3.1-8B: Approximately 5%

* LLaMA3.1-70B: Approximately 10%

* Qwen2.5-7B: Approximately 2%

* Qwen2.5-72B: Approximately 8%

* Claude3.5: Approximately 10%

* GPT-3.5: Approximately 6%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 20%

* DeepSeek-V3: Approximately 8%

* Open LLM: Approximately 0%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **AQUA:**

* LLaMA3.1-8B: Approximately 0%

* LLaMA3.1-70B: Approximately 10%

* Qwen2.5-7B: Approximately -5%

* Qwen2.5-72B: Approximately 5%

* Claude3.5: Approximately 10%

* GPT-3.5: Approximately 5%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 15%

* DeepSeek-V3: Approximately 5%

* Open LLM: Approximately -5%

* Close LLM: Approximately -10%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **GSM8K:**

* LLaMA3.1-8B: Approximately 5%

* LLaMA3.1-70B: Approximately 10%

* Qwen2.5-7B: Approximately 0%

* Qwen2.5-72B: Approximately 5%

* Claude3.5: Approximately 10%

* GPT-3.5: Approximately 5%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 10%

* DeepSeek-V3: Approximately 5%

* Open LLM: Approximately 0%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **MATH:**

* LLaMA3.1-8B: Approximately 10%

* LLaMA3.1-70B: Approximately 10%

* Qwen2.5-7B: Approximately 5%

* Qwen2.5-72B: Approximately 10%

* Claude3.5: Approximately 10%

* GPT-3.5: Approximately 5%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 10%

* DeepSeek-V3: Approximately 5%

* Open LLM: Approximately 0%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **GPQA:**

* LLaMA3.1-8B: Approximately 10%

* LLaMA3.1-70B: Approximately 15%

* Qwen2.5-7B: Approximately 5%

* Qwen2.5-72B: Approximately 10%

* Claude3.5: Approximately 20%

* GPT-3.5: Approximately 10%

* GPT-4o: Approximately 10%

* QWQ-32B: Approximately 15%

* DeepSeek-V3: Approximately 10%

* Open LLM: Approximately 0%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

* **HumanEval:**

* LLaMA3.1-8B: Approximately 10%

* LLaMA3.1-70B: Approximately 10%

* Qwen2.5-7B: Approximately 5%

* Qwen2.5-72B: Approximately 5%

* Claude3.5: Approximately 10%

* GPT-3.5: Approximately 5%

* GPT-4o: Approximately 5%

* QWQ-32B: Approximately 10%

* DeepSeek-V3: Approximately 5%

* Open LLM: Approximately 0%

* Close LLM: Approximately -5%

* Reasoning LLM: Approximately 10%

* Baseline: 0%

### Key Observations

* QWQ-32B consistently performs well across all datasets, often showing the highest performance difference.

* LLaMA3.1-70B generally outperforms LLaMA3.1-8B.

* Qwen2.5-72B generally outperforms Qwen2.5-7B.

* The "Close LLM" consistently shows negative or near-zero performance differences.

* The "Reasoning LLM" generally performs well, often comparable to GPT-4o.

* The "Baseline" is consistently at 0% as expected.
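The two model-scale observations can be checked dataset by dataset. This is a small sketch using only the approximate values listed in the Detailed Analysis above; the helper `win_tie_loss` and the list names are hypothetical, introduced here for illustration.

```python
# Approximate Δ (%) values from the Detailed Analysis above, in the order
# HotpotQA, CS-QA, AQUA, GSM8K, MATH, GPQA, HumanEval. Visual estimates only.
LLAMA_8B = [5, 5, 0, 5, 10, 10, 10]
LLAMA_70B = [8, 10, 10, 10, 10, 15, 10]
QWEN_7B = [2, 2, -5, 0, 5, 5, 5]
QWEN_72B = [4, 8, 5, 5, 10, 10, 5]


def win_tie_loss(larger, smaller):
    """Count datasets where the larger model is above, equal to, or below the smaller one."""
    wins = sum(a > b for a, b in zip(larger, smaller))
    ties = sum(a == b for a, b in zip(larger, smaller))
    losses = len(larger) - wins - ties
    return wins, ties, losses


if __name__ == "__main__":
    print("LLaMA3.1 70B vs 8B:", win_tie_loss(LLAMA_70B, LLAMA_8B))
    print("Qwen2.5 72B vs 7B:", win_tie_loss(QWEN_72B, QWEN_7B))
```

On these estimates the larger model is never below the smaller one: LLaMA3.1-70B is ahead on five datasets and tied on two, and Qwen2.5-72B is ahead on six and tied on one.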

### Interpretation

The plot demonstrates a clear comparison of LLM performance across a range of challenging datasets. The varying performance differences (Δ) highlight the strengths and weaknesses of each model. QWQ-32B appears to be a strong performer overall, while the "Close LLM" seems to struggle to outperform the baseline. The consistent positive Δ for many models suggests that these LLMs generally outperform the baseline model on these tasks. The differences in performance between the smaller and larger versions of LLaMA3.1 (8B vs. 70B) and Qwen2.5 (7B vs. 72B) indicate that model size plays a significant role in performance. The data suggests that the choice of LLM should be tailored to the specific dataset and task, as no single model consistently outperforms all others. The negative values for "Close LLM" suggest that this model may be less effective or require further optimization.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot: Language Model Performance Comparison Across Datasets

### Overview

This image is a scatter plot comparing the performance change (Δ%) of various large language models (LLMs) across seven different benchmark datasets. The chart evaluates both open-source and closed-source models, as well as specialized reasoning models, against a baseline performance level.

### Components/Axes

* **Chart Type:** Scatter plot with categorical x-axis and numerical y-axis.

* **X-Axis (Horizontal):** Labeled "Dataset". It lists seven benchmark categories from left to right:

1. HotpotQA

2. CS-QA

3. AQUA

4. GSM8K

5. MATH

6. GPQA

7. HumanEval

* **Y-Axis (Vertical):** Labeled "Δ (%)". It represents the percentage change in performance, with a scale ranging from -30% to +30% in increments of 10%. A horizontal dashed green line at Δ=0 is labeled "Baseline (Δ=0)".

* **Legend (Right Side):** The legend is positioned in the top-right quadrant of the chart area. It maps marker colors and shapes to specific models and model categories.

* **Models (by color/shape):**

* LLaMA3.1-8B (Teal circle)

* LLaMA3.1-70B (Light green circle)

* Qwen2.5-7B (Purple circle)

* Qwen2.5-72B (Red circle)

* Claude3.5 (Blue pentagon)

* GPT-3.5 (Orange pentagon)

* GPT-4o (Green pentagon)

* QWQ-32B (Pink diamond)

* DeepSeek-V3 (Purple diamond)

* **Model Categories (by shape):**

* Open LLM (Circle)

* Close LLM (Pentagon)

* Reasoning LLM (Diamond)

### Detailed Analysis

The chart is divided into seven vertical sections by dashed grey lines, one for each dataset. Below are the approximate Δ(%) values for each model within each dataset, based on visual estimation of marker position.

**1. HotpotQA**

* **Trend:** Most models show positive Δ(%) values, indicating performance above the baseline.

* **Data Points (Approximate):**

* DeepSeek-V3 (Purple diamond): ~+21% (Highest)

* LLaMA3.1-8B (Teal circle): ~+13%

* Qwen2.5-7B (Purple circle): ~+13%

* GPT-3.5 (Orange pentagon): ~+7%

* LLaMA3.1-70B (Light green circle): ~+7%

* Claude3.5 (Blue pentagon): ~+5%

* GPT-4o (Green pentagon): ~+2%

* Qwen2.5-72B (Red circle): ~0%

* QWQ-32B (Pink diamond): ~-4%

**2. CS-QA**

* **Trend:** Mixed performance, with several models near or below the baseline.

* **Data Points (Approximate):**

* Claude3.5 (Blue pentagon): ~+11% (Highest)

* GPT-3.5 (Orange pentagon): ~+5%

* LLaMA3.1-70B (Light green circle): ~+4%

* LLaMA3.1-8B (Teal circle): ~+2%

* Qwen2.5-72B (Red circle): ~0%

* GPT-4o (Green pentagon): ~-1%

* QWQ-32B (Pink diamond): ~-1%

* Qwen2.5-7B (Purple circle): ~-7% (Lowest)

**3. AQUA**

* **Trend:** High variance, with two models showing very high positive Δ(%).

* **Data Points (Approximate):**

* GPT-3.5 (Orange pentagon): ~+21% (Highest, tied)

* QWQ-32B (Pink diamond): ~+21% (Highest, tied)

* GPT-4o (Green pentagon): ~+11%

* Claude3.5 (Blue pentagon): ~+6%

* Qwen2.5-7B (Purple circle): ~+3%

* LLaMA3.1-70B (Light green circle): ~0%

* Qwen2.5-72B (Red circle): ~0%

* DeepSeek-V3 (Purple diamond): ~-6%

**4. GSM8K**

* **Trend:** Most models cluster near the baseline, with one significant outlier.

* **Data Points (Approximate):**

* QWQ-32B (Pink diamond): ~+20% (Highest)

* GPT-3.5 (Orange pentagon): ~+13%

* GPT-4o (Green pentagon): ~+3%

* Qwen2.5-72B (Red circle): ~+1%

* Claude3.5 (Blue pentagon): ~0%

* Qwen2.5-7B (Purple circle): ~-2%

* LLaMA3.1-8B (Teal circle): ~-2%

* LLaMA3.1-70B (Light green circle): ~-9% (Lowest)

**5. MATH**

* **Trend:** Mostly positive: five of the eight listed models fall between +5% and +15%, while three sit below the baseline.

* **Data Points (Approximate):**

* Qwen2.5-72B (Red circle): ~+11% (Highest)

* Claude3.5 (Blue pentagon): ~+10%

* GPT-4o (Green pentagon): ~+9%

* GPT-3.5 (Orange pentagon): ~+8%

* LLaMA3.1-70B (Light green circle): ~+6%

* LLaMA3.1-8B (Teal circle): ~-6%

* QWQ-32B (Pink diamond): ~-1%

* DeepSeek-V3 (Purple diamond): ~-2%

**6. GPQA**

* **Trend:** High variance, with two models showing strong positive Δ(%).

* **Data Points (Approximate):**

* Claude3.5 (Blue pentagon): ~+21% (Highest)

* Qwen2.5-7B (Purple circle): ~+18%

* LLaMA3.1-8B (Teal circle): ~+12%

* LLaMA3.1-70B (Light green circle): ~+9%

* Qwen2.5-72B (Red circle): ~+5%

* GPT-3.5 (Orange pentagon): ~+3%

* GPT-4o (Green pentagon): ~-3%

* QWQ-32B (Pink diamond): ~-4%

**7. HumanEval**

* **Trend:** Only two data points are visible, one above the baseline and one below it.

* **Data Points (Approximate):**

* QWQ-32B (Pink diamond): ~+12%

* DeepSeek-V3 (Purple diamond): ~-4%

### Key Observations

1. **Model-Specific Strengths:** No single model dominates across all datasets. For example, DeepSeek-V3 excels in HotpotQA (~+21%) but performs poorly in AQUA (~-6%). Claude3.5 shows strong, consistent performance in CS-QA and GPQA.

2. **Dataset Difficulty:** The MATH dataset shows the most consistently positive Δ(%) values across models, suggesting models generally perform better relative to the baseline on this task. In contrast, CS-QA and GSM8K show more models at or below the baseline.

3. **Reasoning Model Performance:** The "Reasoning LLM" category (diamonds) shows extreme variance. QWQ-32B is the top performer in AQUA and GSM8K but is among the lowest in HotpotQA and GPQA.

4. **Open vs. Closed Models:** There is no clear, consistent performance gap between "Open LLM" (circles) and "Close LLM" (pentagons) across all tasks. Their relative performance is highly dataset-dependent.
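The open-versus-closed comparison in the last observation can be made concrete by averaging each category per dataset. The sketch below assumes the approximate values from the Detailed Analysis and the shape-to-category mapping from the legend; `CATEGORY`, `POINTS`, `category_mean`, and `leader` are illustrative names, and an unweighted mean is only one possible way to summarize a category.

```python
from statistics import mean

# Marker shape -> category, as given in the legend (circle, pentagon, diamond).
CATEGORY = {
    "LLaMA3.1-8B": "open", "LLaMA3.1-70B": "open",
    "Qwen2.5-7B": "open", "Qwen2.5-72B": "open",
    "Claude3.5": "closed", "GPT-3.5": "closed", "GPT-4o": "closed",
    "QWQ-32B": "reasoning", "DeepSeek-V3": "reasoning",
}

# Approximate Δ (%) per dataset, transcribed from the lists above (visual estimates).
POINTS = {
    "HotpotQA": {"DeepSeek-V3": 21, "LLaMA3.1-8B": 13, "Qwen2.5-7B": 13,
                 "GPT-3.5": 7, "LLaMA3.1-70B": 7, "Claude3.5": 5,
                 "GPT-4o": 2, "Qwen2.5-72B": 0, "QWQ-32B": -4},
    "CS-QA": {"Claude3.5": 11, "GPT-3.5": 5, "LLaMA3.1-70B": 4, "LLaMA3.1-8B": 2,
              "Qwen2.5-72B": 0, "GPT-4o": -1, "QWQ-32B": -1, "Qwen2.5-7B": -7},
    "AQUA": {"GPT-3.5": 21, "QWQ-32B": 21, "GPT-4o": 11, "Claude3.5": 6,
             "Qwen2.5-7B": 3, "LLaMA3.1-70B": 0, "Qwen2.5-72B": 0, "DeepSeek-V3": -6},
    "GSM8K": {"QWQ-32B": 20, "GPT-3.5": 13, "GPT-4o": 3, "Qwen2.5-72B": 1,
              "Claude3.5": 0, "Qwen2.5-7B": -2, "LLaMA3.1-8B": -2, "LLaMA3.1-70B": -9},
    "MATH": {"Qwen2.5-72B": 11, "Claude3.5": 10, "GPT-4o": 9, "GPT-3.5": 8,
             "LLaMA3.1-70B": 6, "LLaMA3.1-8B": -6, "QWQ-32B": -1, "DeepSeek-V3": -2},
    "GPQA": {"Claude3.5": 21, "Qwen2.5-7B": 18, "LLaMA3.1-8B": 12, "LLaMA3.1-70B": 9,
             "Qwen2.5-72B": 5, "GPT-3.5": 3, "GPT-4o": -3, "QWQ-32B": -4},
    "HumanEval": {"QWQ-32B": 12, "DeepSeek-V3": -4},
}


def category_mean(points, category):
    """Mean Δ of one category within a dataset, or None if it has no points."""
    values = [v for model, v in points.items() if CATEGORY[model] == category]
    return mean(values) if values else None


def leader(points):
    """Return 'open', 'closed', 'tie', or None when a category is missing."""
    open_mean = category_mean(points, "open")
    closed_mean = category_mean(points, "closed")
    if open_mean is None or closed_mean is None:
        return None
    if open_mean == closed_mean:
        return "tie"
    return "open" if open_mean > closed_mean else "closed"


if __name__ == "__main__":
    for dataset, points in POINTS.items():
        print(dataset, len(points), leader(points))
```

On these estimates the open models lead on HotpotQA and GPQA, the closed models lead on CS-QA, AQUA, GSM8K, and MATH, and HumanEval has no open or closed points to compare, so the leading category does change with the dataset.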

### Interpretation

This chart demonstrates the **task-specific nature of LLM capabilities**. The significant variance in Δ(%) for a given model across different datasets indicates that benchmark performance is not monolithic; a model's architecture, training data, and fine-tuning create specialized strengths and weaknesses.

The data suggests that:

* **Evaluation is Multidimensional:** Choosing an LLM for a specific application requires benchmarking on tasks relevant to that domain. For example, a model strong in mathematical reasoning (MATH, GSM8K) may not be the best for complex question answering (HotpotQA, GPQA).

* **The "Best" Model is Contextual:** The absence of a universally superior model implies that the field is still evolving, with different approaches (open vs. closed, general vs. reasoning-specialized) yielding different trade-offs.

* **Baseline Matters:** The Δ(%) metric highlights relative improvement or regression against a fixed baseline, which is crucial for understanding progress but doesn't convey absolute performance scores.
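The axis label Δ (%) does not say whether the difference is absolute or relative. As a sketch of the two common readings, with $s_{\text{base}}$ and $s_{\text{method}}$ as assumed symbols for the baseline score and the compared score (neither symbol appears in the figure):

```latex
\Delta_{\text{abs}} = s_{\text{method}} - s_{\text{base}}
\quad \text{(percentage points)},
\qquad
\Delta_{\text{rel}} = 100 \cdot \frac{s_{\text{method}} - s_{\text{base}}}{s_{\text{base}}}
\quad (\%)
```

For example, a baseline accuracy of 50% and a compared accuracy of 55% give $\Delta_{\text{abs}} = 5$ points but $\Delta_{\text{rel}} = 10\%$, so the same marker height can imply different absolute scores depending on which definition the plot uses.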

**Notable Anomaly:** The HumanEval column contains only two data points (QWQ-32B and DeepSeek-V3), while all other datasets have eight or nine. This suggests either missing data for other models on this coding benchmark or a focused comparison for this specific task.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plot: Model Performance Comparison Across Datasets

### Overview

The image is a scatter plot comparing the performance change (Δ%) of various large language models (LLMs) across seven benchmark datasets. The plot uses color-coded markers to represent different models, with a baseline (Δ=0%) indicated by a green dashed line. Performance improvements are shown above the baseline, while declines appear below.

### Components/Axes

- **X-axis (Dataset)**: Categorical axis with seven benchmark datasets:

- HotpotQA

- CS-QA

- AQUA

- GSM8K

- MATH

- GPQA

- HumanEval

Vertical dashed lines separate datasets.

- **Y-axis (Δ%)**: Numerical axis ranging from -30% to 30%, labeled "Δ (%)".

- **Legend**: Located on the right, mapping colors/shapes to models:

- **Teal circles**: LLaMA3.1-8B

- **Yellow circles**: LLaMA3.1-70B

- **Purple circles**: Qwen2.5-7B

- **Red pentagons**: Qwen2.5-72B

- **Blue pentagons**: Claude3.5

- **Orange pentagons**: GPT-3.5

- **Green pentagons**: GPT-4o

- **Pink diamonds**: QWQ-32B

- **Purple diamonds**: DeepSeek-V3

- **Open circles**: Open LLM

- **Closed pentagons**: Close LLM

- **Diamond markers**: Reasoning LLM

- **Green dashed line**: Baseline (Δ=0%)

### Detailed Analysis

1. **Model Performance Trends**:

- **LLaMA3.1-8B (teal circles)**: Consistently positive Δ% across most datasets, with peaks in MATH (~15%) and GPQA (~10%).

- **LLaMA3.1-70B (yellow circles)**: Mixed performance, with notable declines in CS-QA (-5%) and AQUA (-2%).

- **Qwen2.5-72B (red pentagons)**: Underperforms in CS-QA (-8%) and GPQA (-3%), but shows gains in MATH (~5%).

- **GPT-3.5 (orange pentagons)**: Strong performance in GSM8K (~20%) but declines in CS-QA (-4%).

- **DeepSeek-V3 (purple diamonds)**: Negative Δ% in CS-QA (-10%) and GPQA (-5%), but neutral in MATH.

- **QWQ-32B (pink diamonds)**: Outperforms baseline in AQUA (~12%) and GPQA (~8%), but declines in CS-QA (-6%).

2. **Dataset-Specific Insights**:

- **GSM8K**: Highest Δ% values overall, with GPT-3.5 (+20%) and LLaMA3.1-70B (+15%) leading.

- **CS-QA**: Most models show negative Δ%, with DeepSeek-V3 (-10%), Qwen2.5-72B (-8%), and QWQ-32B (-6%) as the worst performers.

- **MATH**: Balanced performance, with LLaMA3.1-8B (+15%) and GPT-4o (+10%) near the top.

- **HumanEval**: Mixed results, with LLaMA3.1-70B at +10% and DeepSeek-V3 (-2%) just below the baseline.

### Key Observations

- **Outliers**:

- GPT-3.5’s +20% in GSM8K is the highest Δ% across all datasets.

- DeepSeek-V3’s -10% in CS-QA is the largest decline, followed by Qwen2.5-72B’s -8%.

- **Baseline Context**:

- 60% of data points (e.g., LLaMA3.1-8B in HotpotQA, QWQ-32B in AQUA) outperform the baseline.

- 30% of points (e.g., Qwen2.5-72B in CS-QA, DeepSeek-V3 in GPQA) underperform.

- **Model Scaling**:

- Larger models (e.g., LLaMA3.1-70B) show mixed gains/losses, suggesting dataset-specific limitations.

- Smaller models (e.g., LLaMA3.1-8B) demonstrate more consistent improvements.
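Shares such as those in the Baseline Context bullets come from counting markers above and below Δ=0. The sketch below shows that counting on the 19 model-dataset values this description actually quotes (DeepSeek-V3 on MATH, described as neutral, is entered as 0); `CITED` and `baseline_shares` are hypothetical names. Because the full plot contains more markers than are quoted, the resulting shares are not expected to reproduce the 60% and 30% figures.

```python
# Δ (%) values quoted in the Detailed Analysis above, keyed by (model, dataset).
# Only the quoted subset of the plot is available; these are visual estimates.
CITED = {
    ("LLaMA3.1-8B", "MATH"): 15, ("LLaMA3.1-8B", "GPQA"): 10,
    ("LLaMA3.1-70B", "CS-QA"): -5, ("LLaMA3.1-70B", "AQUA"): -2,
    ("LLaMA3.1-70B", "GSM8K"): 15, ("LLaMA3.1-70B", "HumanEval"): 10,
    ("Qwen2.5-72B", "CS-QA"): -8, ("Qwen2.5-72B", "GPQA"): -3,
    ("Qwen2.5-72B", "MATH"): 5,
    ("GPT-3.5", "GSM8K"): 20, ("GPT-3.5", "CS-QA"): -4,
    ("GPT-4o", "MATH"): 10,
    ("DeepSeek-V3", "CS-QA"): -10, ("DeepSeek-V3", "GPQA"): -5,
    ("DeepSeek-V3", "MATH"): 0, ("DeepSeek-V3", "HumanEval"): -2,
    ("QWQ-32B", "AQUA"): 12, ("QWQ-32B", "GPQA"): 8, ("QWQ-32B", "CS-QA"): -6,
}


def baseline_shares(values, tol=0.0):
    """Return the fractions of values above, below, and within tol of the baseline."""
    total = len(values)
    above = sum(v > tol for v in values)
    below = sum(v < -tol for v in values)
    at = total - above - below
    return above / total, below / total, at / total


if __name__ == "__main__":
    above, below, at = baseline_shares(list(CITED.values()))
    print(f"above: {above:.0%}, below: {below:.0%}, at baseline: {at:.0%}")
```

The optional `tol` parameter treats markers within a small band around zero as sitting on the baseline, which matters when values are read off a plot by eye.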

### Interpretation

The data reveals that LLM performance is highly dataset-dependent. While larger models like LLaMA3.1-70B and GPT-4o show strong gains in reasoning-heavy tasks (e.g., MATH, GSM8K), they struggle with question-answering benchmarks like CS-QA. Conversely, smaller models like LLaMA3.1-8B achieve more uniform improvements, possibly due to optimized training for specific tasks. The baseline (Δ=0%) highlights that ~30% of model-dataset pairs underperform compared to a "no-change" scenario, emphasizing the need for targeted model optimization. Notably, GPT-3.5’s dominance in GSM8K (+20%) suggests specialized training for mathematical reasoning, while Qwen2.5-72B’s poor CS-QA performance (-8%) indicates potential weaknesses in commonsense tasks. These trends underscore the importance of dataset-specific evaluation in LLM development.
