TECHNICAL ASSET FINGERPRINT

322845a20e88f3bec2216edd

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Line Charts: Model Performance vs. Noise Ratio

### Overview

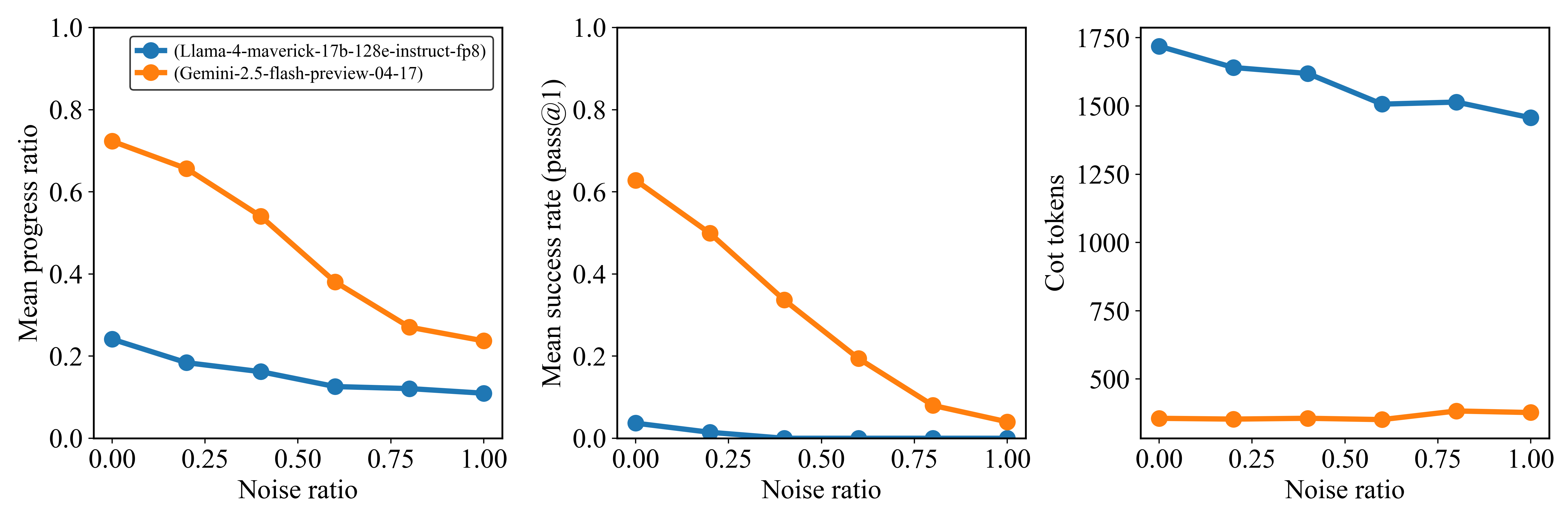

The image presents three line charts comparing the performance of two language models, "Llama-4-maverick-17b-128e-instruct-fp8" and "Gemini-2.5-flash-preview-04-17", across varying levels of noise. The charts depict "Mean progress ratio", "Mean success rate (pass@1)", and "Cot tokens" as a function of "Noise ratio".

### Components/Axes

**General Chart Elements:**

* **X-axis:** Noise ratio, ranging from 0.00 to 1.00 in increments of 0.25.

* **Legend (Top-Left of First Chart):**

* Blue: (Llama-4-maverick-17b-128e-instruct-fp8)

* Orange: (Gemini-2.5-flash-preview-04-17)

**Chart 1: Mean Progress Ratio**

* **Y-axis:** Mean progress ratio, ranging from 0.0 to 1.0.

**Chart 2: Mean Success Rate (pass@1)**

* **Y-axis:** Mean success rate (pass@1), ranging from 0.0 to 1.0.

**Chart 3: Cot tokens**

* **Y-axis:** Cot tokens, ranging from 0 to 1750 in increments of 250.

### Detailed Analysis

**Chart 1: Mean Progress Ratio**

* **(Llama-4-maverick-17b-128e-instruct-fp8) (Blue):** The line slopes downward slightly.

* Noise Ratio 0.00: Mean progress ratio ~0.24

* Noise Ratio 0.25: Mean progress ratio ~0.18

* Noise Ratio 0.50: Mean progress ratio ~0.16

* Noise Ratio 0.75: Mean progress ratio ~0.13

* Noise Ratio 1.00: Mean progress ratio ~0.12

* **(Gemini-2.5-flash-preview-04-17) (Orange):** The line slopes downward significantly.

* Noise Ratio 0.00: Mean progress ratio ~0.72

* Noise Ratio 0.25: Mean progress ratio ~0.58

* Noise Ratio 0.50: Mean progress ratio ~0.40

* Noise Ratio 0.75: Mean progress ratio ~0.28

* Noise Ratio 1.00: Mean progress ratio ~0.24

**Chart 2: Mean Success Rate (pass@1)**

* **(Llama-4-maverick-17b-128e-instruct-fp8) (Blue):** The line remains near zero.

* Noise Ratio 0.00: Mean success rate ~0.04

* Noise Ratio 0.25: Mean success rate ~0.01

* Noise Ratio 0.50: Mean success rate ~0.00

* Noise Ratio 0.75: Mean success rate ~0.00

* Noise Ratio 1.00: Mean success rate ~0.00

* **(Gemini-2.5-flash-preview-04-17) (Orange):** The line slopes downward significantly.

* Noise Ratio 0.00: Mean success rate ~0.62

* Noise Ratio 0.25: Mean success rate ~0.50

* Noise Ratio 0.50: Mean success rate ~0.30

* Noise Ratio 0.75: Mean success rate ~0.08

* Noise Ratio 1.00: Mean success rate ~0.04

**Chart 3: Cot tokens**

* **(Llama-4-maverick-17b-128e-instruct-fp8) (Blue):** The line slopes downward slightly.

* Noise Ratio 0.00: Cot tokens ~1700

* Noise Ratio 0.25: Cot tokens ~1630

* Noise Ratio 0.50: Cot tokens ~1520

* Noise Ratio 0.75: Cot tokens ~1500

* Noise Ratio 1.00: Cot tokens ~1450

* **(Gemini-2.5-flash-preview-04-17) (Orange):** The line remains relatively constant.

* Noise Ratio 0.00: Cot tokens ~370

* Noise Ratio 0.25: Cot tokens ~370

* Noise Ratio 0.50: Cot tokens ~370

* Noise Ratio 0.75: Cot tokens ~380

* Noise Ratio 1.00: Cot tokens ~390

### Key Observations

* The "Gemini-2.5-flash-preview-04-17" model exhibits a significantly higher mean progress ratio and mean success rate compared to the "Llama-4-maverick-17b-128e-instruct-fp8" model at lower noise ratios.

* The performance of "Gemini-2.5-flash-preview-04-17" degrades substantially as the noise ratio increases in both "Mean progress ratio" and "Mean success rate (pass@1)".

* The "Llama-4-maverick-17b-128e-instruct-fp8" model stays low on both metrics at every noise ratio: its mean progress ratio falls from ~0.24 to ~0.12, and its mean success rate is already near zero (~0.04) without noise.

* The number of "Cot tokens" for "Gemini-2.5-flash-preview-04-17" remains relatively constant regardless of the noise ratio, while "Cot tokens" for "Llama-4-maverick-17b-128e-instruct-fp8" decreases slightly as noise increases.

### Interpretation

The data suggests that, in absolute terms, the "Gemini-2.5-flash-preview-04-17" model is more sensitive to noise than the "Llama-4-maverick-17b-128e-instruct-fp8" model. "Gemini-2.5-flash-preview-04-17" performs better in low-noise environments, but its performance declines rapidly as noise increases. The "Llama-4-maverick-17b-128e-instruct-fp8" model changes less, but it starts so close to the floor (success rate ~0.04 at zero noise) that it has little room to fall, so its flatter curves are weak evidence of robustness. The "Cot tokens" metric presumably counts chain-of-thought tokens, a proxy for the length of the model's reasoning process. The relatively stable "Cot tokens" for "Gemini-2.5-flash-preview-04-17" suggests that the model keeps a consistent reasoning length even as its performance degrades due to noise. The slight decrease in "Cot tokens" for "Llama-4-maverick-17b-128e-instruct-fp8" may indicate shorter reasoning under noisy conditions.
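The comparison of noise sensitivity can be made concrete with a small calculation. The sketch below uses only the approximate values listed in the Detailed Analysis of this description; the helper names `absolute_drop` and `relative_drop` are illustrative and do not come from the chart or its source paper.

```python
# Approximate values read off the chart, copied from the Detailed Analysis above.
NOISE = [0.00, 0.25, 0.50, 0.75, 1.00]
PROGRESS = {
    "llama": [0.24, 0.18, 0.16, 0.13, 0.12],
    "gemini": [0.72, 0.58, 0.40, 0.28, 0.24],
}
SUCCESS = {
    "llama": [0.04, 0.01, 0.00, 0.00, 0.00],
    "gemini": [0.62, 0.50, 0.30, 0.08, 0.04],
}


def absolute_drop(values):
    """Score lost between noise ratio 0.00 and noise ratio 1.00."""
    return values[0] - values[-1]


def relative_drop(values):
    """Fraction of the zero-noise score lost at noise ratio 1.00.

    Returns None when the zero-noise score is 0, where the ratio is undefined.
    """
    if values[0] == 0:
        return None
    return absolute_drop(values) / values[0]


if __name__ == "__main__":
    for metric, table in (("progress", PROGRESS), ("success", SUCCESS)):
        for model, values in table.items():
            print(
                f"{metric:8s} {model:6s} "
                f"abs={absolute_drop(values):.2f} rel={relative_drop(values):.0%}"
            )
```

With these readings, Llama loses roughly half of its zero-noise progress ratio and Gemini roughly two thirds, so Gemini's larger absolute drop is also a larger relative drop. On success rate both models lose nearly all of their zero-noise score.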


EXPERT: gemini-2.5-flash-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash

INTEL_VERIFIED

## Chart Type: Performance and Token Usage of Language Models Under Varying Noise Ratios

### Overview

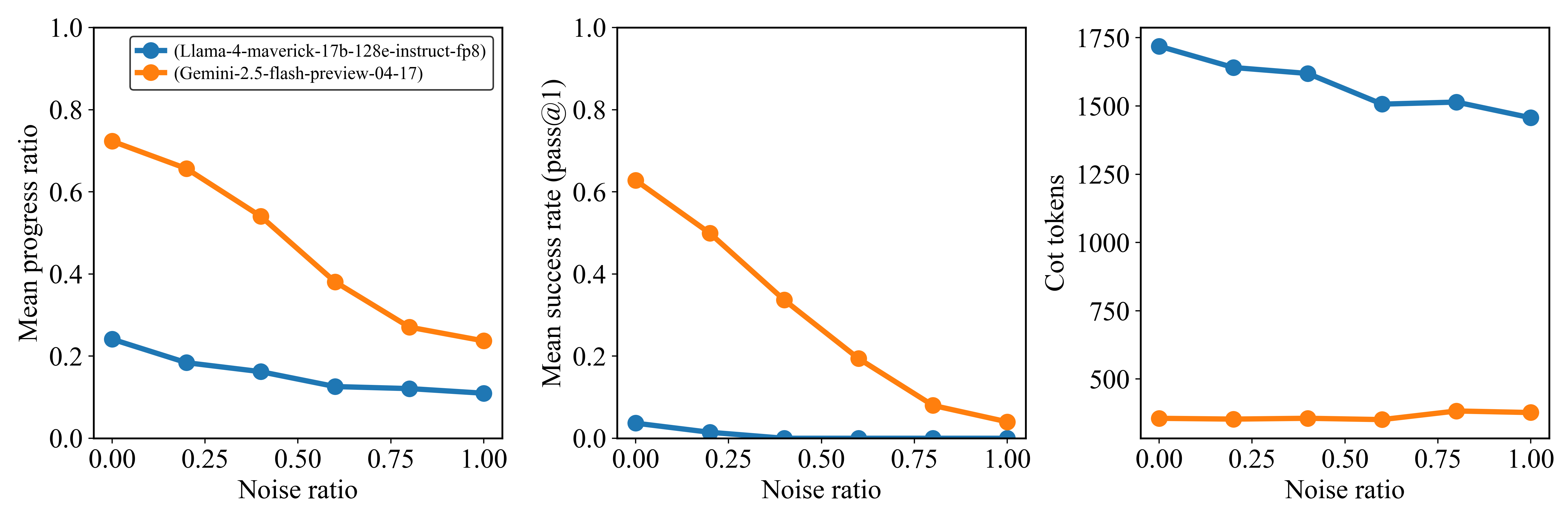

This image presents three line charts arranged horizontally, comparing the performance and token usage of two language models, "Llama-4-maverick-17b-128e-instruct-fp8" and "Gemini-2.5-flash-preview-04-17", across different "Noise ratio" values. The charts illustrate how "Mean progress ratio", "Mean success rate (pass@1)", and "Cot tokens" change as the noise ratio increases from 0.00 to 1.00.

### Components/Axes

The image consists of three sub-charts, each sharing a common X-axis and a common legend.

**Common Elements:**

* **X-axis Label (Bottom of each chart):** "Noise ratio"

* **X-axis Markers (Common to all charts):** 0.00, 0.25, 0.50, 0.75, 1.00

* **Legend (Top-left of the leftmost chart, applies to all three):**

* Blue line with circular markers: "(Llama-4-maverick-17b-128e-instruct-fp8)"

* Orange line with circular markers: "(Gemini-2.5-flash-preview-04-17)"

**Chart 1 (Leftmost): Mean progress ratio vs. Noise ratio**

* **Y-axis Label:** "Mean progress ratio"

* **Y-axis Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

**Chart 2 (Middle): Mean success rate (pass@1) vs. Noise ratio**

* **Y-axis Label:** "Mean success rate (pass@1)"

* **Y-axis Markers:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

**Chart 3 (Rightmost): Cot tokens vs. Noise ratio**

* **Y-axis Label:** "Cot tokens"

* **Y-axis Markers:** 0, 250, 500, 750, 1000, 1250, 1500, 1750

### Detailed Analysis

**Chart 1: Mean progress ratio vs. Noise ratio**

* **Orange Line (Gemini-2.5-flash-preview-04-17):**

* **Trend:** The mean progress ratio for Gemini-2.5-flash-preview-04-17 starts high and shows a significant downward trend as the noise ratio increases. The decline is steeper between 0.50 and 0.75 noise ratio.

* **Data Points:**

* Noise ratio 0.00: ~0.72

* Noise ratio 0.25: ~0.65

* Noise ratio 0.50: ~0.55

* Noise ratio 0.75: ~0.28

* Noise ratio 1.00: ~0.25

* **Blue Line (Llama-4-maverick-17b-128e-instruct-fp8):**

* **Trend:** The mean progress ratio for Llama-4-maverick-17b-128e-instruct-fp8 starts lower than Gemini and also shows a downward trend, but it is much flatter and at consistently lower values.

* **Data Points:**

* Noise ratio 0.00: ~0.24

* Noise ratio 0.25: ~0.18

* Noise ratio 0.50: ~0.15

* Noise ratio 0.75: ~0.13

* Noise ratio 1.00: ~0.12

**Chart 2: Mean success rate (pass@1) vs. Noise ratio**

* **Orange Line (Gemini-2.5-flash-preview-04-17):**

* **Trend:** The mean success rate for Gemini-2.5-flash-preview-04-17 starts high and exhibits a sharp, continuous decline as the noise ratio increases, approaching zero at higher noise levels.

* **Data Points:**

* Noise ratio 0.00: ~0.62

* Noise ratio 0.25: ~0.50

* Noise ratio 0.50: ~0.35

* Noise ratio 0.75: ~0.08

* Noise ratio 1.00: ~0.02

* **Blue Line (Llama-4-maverick-17b-128e-instruct-fp8):**

* **Trend:** The mean success rate for Llama-4-maverick-17b-128e-instruct-fp8 starts very low and declines slightly, remaining close to zero across all noise ratios.

* **Data Points:**

* Noise ratio 0.00: ~0.04

* Noise ratio 0.25: ~0.02

* Noise ratio 0.50: ~0.01

* Noise ratio 0.75: ~0.01

* Noise ratio 1.00: ~0.01

**Chart 3: Cot tokens vs. Noise ratio**

* **Orange Line (Gemini-2.5-flash-preview-04-17):**

* **Trend:** The Cot tokens for Gemini-2.5-flash-preview-04-17 remain relatively stable and low across all noise ratios, with a slight increase at higher noise levels.

* **Data Points:**

* Noise ratio 0.00: ~350

* Noise ratio 0.25: ~350

* Noise ratio 0.50: ~350

* Noise ratio 0.75: ~380

* Noise ratio 1.00: ~380

* **Blue Line (Llama-4-maverick-17b-128e-instruct-fp8):**

* **Trend:** The Cot tokens for Llama-4-maverick-17b-128e-instruct-fp8 start high and show a gradual downward trend as the noise ratio increases.

* **Data Points:**

* Noise ratio 0.00: ~1700

* Noise ratio 0.25: ~1620

* Noise ratio 0.50: ~1580

* Noise ratio 0.75: ~1500

* Noise ratio 1.00: ~1480

### Key Observations

* **Performance Degradation with Noise:** Both "Mean progress ratio" and "Mean success rate (pass@1)" generally decrease as the "Noise ratio" increases for both models.

* **Gemini's Superior Performance (Low Noise):** At low noise ratios (e.g., 0.00 to 0.50), Gemini-2.5-flash-preview-04-17 significantly outperforms Llama-4-maverick-17b-128e-instruct-fp8 in both "Mean progress ratio" and "Mean success rate (pass@1)".

* **Gemini's Steep Decline:** Gemini's performance metrics (progress ratio and success rate) show a much steeper decline with increasing noise compared to Llama. Its success rate drops from ~0.62 at 0.00 noise to ~0.02 at 1.00 noise.

* **Llama's Consistent Low Performance:** Llama-4-maverick-17b-128e-instruct-fp8 maintains a consistently low "Mean progress ratio" and "Mean success rate (pass@1)" across all noise levels, suggesting it is less affected by noise in terms of relative performance change, but its absolute performance is poor.

* **Cot Token Usage Disparity:** Llama-4-maverick-17b-128e-instruct-fp8 uses substantially more "Cot tokens" (around 1500-1700) than Gemini-2.5-flash-preview-04-17 (around 350-380) across all noise ratios.

* **Cot Token Stability:** Gemini's Cot token usage is very stable, slightly increasing with noise. Llama's Cot token usage decreases slightly with increasing noise, but remains high.

### Interpretation

The data suggests that the two language models differ on three dimensions: peak performance, token efficiency, and robustness to noise. Gemini leads on the first two, while Llama's curves are flatter on the third.

Gemini-2.5-flash-preview-04-17 appears to be a higher-performing model under ideal or low-noise conditions, achieving significantly better "Mean progress ratio" and "Mean success rate (pass@1)". However, its performance degrades sharply as the "Noise ratio" increases, indicating a lower robustness to noisy inputs. Despite its higher performance, Gemini consistently uses a much lower number of "Cot tokens," suggesting it is more efficient in terms of computational steps or reasoning complexity (as measured by CoT tokens).

Conversely, Llama-4-maverick-17b-128e-instruct-fp8 exhibits a much lower baseline performance in both progress ratio and success rate. While its performance also declines with noise, the absolute change is less dramatic because it starts from a much lower point. This might imply that Llama is either inherently less capable for the task or less sensitive to noise due to its lower performance ceiling. Critically, Llama uses a significantly higher number of "Cot tokens" across all noise levels, suggesting it requires more computational effort or generates longer chains of thought, yet yields inferior results compared to Gemini, especially at lower noise. The slight decrease in Llama's Cot tokens with increasing noise might indicate that it struggles to generate coherent chains of thought when inputs are very noisy, leading to shorter outputs, but this doesn't translate to improved performance.

In summary, Gemini offers superior performance and token efficiency in clean environments but is more susceptible to performance drops with increasing noise. Llama, while less efficient in token usage and generally lower performing, shows a relatively flatter (though low) performance curve under varying noise, suggesting a different architectural or training approach that might prioritize some form of stability over peak performance or efficiency. The choice between these models would depend on the expected noise level of the input data and the priority given to performance versus resource consumption.
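One way to combine the performance and token-usage panels is a rough "tokens per successful attempt" figure. This is a derived quantity that does not appear in the chart: the sketch assumes every attempt costs the mean number of Cot tokens and that attempts are independent, and the function name `tokens_per_success` is illustrative. The inputs are the approximate data points listed in this description.

```python
import math

# Approximate values from the Detailed Analysis above.
NOISE = [0.00, 0.25, 0.50, 0.75, 1.00]
COT_TOKENS = {
    "llama": [1700, 1620, 1580, 1500, 1480],
    "gemini": [350, 350, 350, 380, 380],
}
SUCCESS = {
    "llama": [0.04, 0.02, 0.01, 0.01, 0.01],
    "gemini": [0.62, 0.50, 0.35, 0.08, 0.02],
}


def tokens_per_success(mean_tokens, success_rate):
    """Expected Cot tokens spent per successful attempt.

    Assumption (not from the chart): each attempt costs `mean_tokens`
    and succeeds independently with probability `success_rate`.
    """
    if success_rate <= 0:
        return math.inf
    return mean_tokens / success_rate


if __name__ == "__main__":
    for model in COT_TOKENS:
        for noise, tokens, rate in zip(NOISE, COT_TOKENS[model], SUCCESS[model]):
            cost = tokens_per_success(tokens, rate)
            print(f"{model:6s} noise={noise:.2f} tokens/success={cost:,.0f}")
```

Under these assumptions, the zero-noise cost is roughly 565 tokens per success for Gemini and 42,500 for Llama. Because the success rates at high noise are read off the chart to only one or two significant digits, the high-noise figures are very sensitive to reading error.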


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Charts: Performance of Language Models with Noise Injection

### Overview

This image presents three line charts comparing the performance of two language models, Llama-4-maverick-17b-128e-instruct-fp8 and Gemini-2.5-flash-preview-04-17, under varying levels of noise injection. The charts display Mean Progress Ratio, Mean Success Rate (pass@1), and CoT tokens (Chain-of-Thought tokens) as functions of the Noise Ratio.

### Components/Axes

Each chart shares the following components:

* **X-axis:** Noise Ratio, ranging from 0.00 to 1.00, with markers at 0.00, 0.25, 0.50, 0.75, and 1.00.

* **Y-axis (Left Chart):** Mean Progress Ratio, ranging from 0.00 to 1.00.

* **Y-axis (Middle Chart):** Mean Success Rate (pass@1), ranging from 0.00 to 1.00.

* **Y-axis (Right Chart):** CoT tokens, ranging from 0 to 1750.

* **Legend (Top-Left of each chart):**

* Blue Line: Llama-4-maverick-17b-128e-instruct-fp8

* Orange Line: Gemini-2.5-flash-preview-04-17

### Detailed Analysis

**Chart 1: Mean Progress Ratio vs. Noise Ratio**

* **Llama (Blue Line):** The line slopes downward, indicating a decrease in Mean Progress Ratio as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 0.24

* At Noise Ratio 0.25: Approximately 0.18

* At Noise Ratio 0.50: Approximately 0.14

* At Noise Ratio 0.75: Approximately 0.12

* At Noise Ratio 1.00: Approximately 0.10

* **Gemini (Orange Line):** The line slopes downward more steeply than Llama, indicating a more significant decrease in Mean Progress Ratio as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 0.72

* At Noise Ratio 0.25: Approximately 0.52

* At Noise Ratio 0.50: Approximately 0.32

* At Noise Ratio 0.75: Approximately 0.18

* At Noise Ratio 1.00: Approximately 0.08

**Chart 2: Mean Success Rate (pass@1) vs. Noise Ratio**

* **Llama (Blue Line):** The line slopes downward, indicating a decrease in Mean Success Rate as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 0.18

* At Noise Ratio 0.25: Approximately 0.12

* At Noise Ratio 0.50: Approximately 0.08

* At Noise Ratio 0.75: Approximately 0.06

* At Noise Ratio 1.00: Approximately 0.04

* **Gemini (Orange Line):** The line slopes downward very steeply, indicating a significant decrease in Mean Success Rate as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 0.82

* At Noise Ratio 0.25: Approximately 0.58

* At Noise Ratio 0.50: Approximately 0.34

* At Noise Ratio 0.75: Approximately 0.14

* At Noise Ratio 1.00: Approximately 0.06

**Chart 3: CoT Tokens vs. Noise Ratio**

* **Llama (Blue Line):** The line shows a slight downward trend, with some fluctuations, indicating a small decrease in CoT tokens as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 1725

* At Noise Ratio 0.25: Approximately 1550

* At Noise Ratio 0.50: Approximately 1475

* At Noise Ratio 0.75: Approximately 1450

* At Noise Ratio 1.00: Approximately 1425

* **Gemini (Orange Line):** The line is relatively flat, with minor fluctuations, indicating a minimal change in CoT tokens as Noise Ratio increases.

* At Noise Ratio 0.00: Approximately 475

* At Noise Ratio 0.25: Approximately 500

* At Noise Ratio 0.50: Approximately 525

* At Noise Ratio 0.75: Approximately 500

* At Noise Ratio 1.00: Approximately 525

### Key Observations

* Gemini outperforms Llama in Mean Success Rate at all Noise Ratio levels and in Mean Progress Ratio up to a Noise Ratio of 0.75; at 1.00 the two progress estimates are nearly equal (approximately 0.08 vs. 0.10).

* Both models exhibit a significant performance degradation (decrease in Mean Progress Ratio and Mean Success Rate) as the Noise Ratio increases.

* The number of CoT tokens used by Llama is substantially higher than that used by Gemini and declines gradually as the Noise Ratio increases (approximately 1725 to 1425). Gemini's CoT token usage is low and relatively constant.

* Gemini's performance is more sensitive to noise than Llama's.
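A per-level comparison makes it easy to check at which noise ratios one model leads the other. The sketch below uses only the approximate values from the Detailed Analysis of this description; `levels_where_not_ahead` is an illustrative helper name.

```python
# Approximate values from the Detailed Analysis above.
NOISE = [0.00, 0.25, 0.50, 0.75, 1.00]
PROGRESS = {
    "llama": [0.24, 0.18, 0.14, 0.12, 0.10],
    "gemini": [0.72, 0.52, 0.32, 0.18, 0.08],
}
SUCCESS = {
    "llama": [0.18, 0.12, 0.08, 0.06, 0.04],
    "gemini": [0.82, 0.58, 0.34, 0.14, 0.06],
}


def levels_where_not_ahead(leader, other, noise=NOISE):
    """Return the noise ratios at which `leader` is not strictly above `other`."""
    return [n for n, a, b in zip(noise, leader, other) if a <= b]


if __name__ == "__main__":
    print("progress:", levels_where_not_ahead(PROGRESS["gemini"], PROGRESS["llama"]))
    print("success: ", levels_where_not_ahead(SUCCESS["gemini"], SUCCESS["llama"]))
```

With these readings, Gemini is ahead on success rate at every level, while on progress ratio it is not ahead at a noise ratio of 1.00 (approximately 0.08 vs. 0.10). A gap that small is within the uncertainty of reading values off a chart.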

### Interpretation

The data suggests that Gemini is the stronger model on this task but is less robust to noise than Llama in absolute terms: its success rate and progress ratio are higher at low noise and fall much more steeply as noise increases. Gemini also relies on fewer CoT tokens, which might indicate a different reasoning strategy or a more efficient approach to problem-solving. Llama, while lower-performing, utilizes a larger number of CoT tokens, potentially indicating a more verbose or exploratory reasoning process. The steep decline in performance for both models with increasing noise highlights the importance of data quality and the potential vulnerability of language models to adversarial inputs or noisy data. The gradual decline in Llama's CoT token usage suggests that the model largely maintains the length of its reasoning under noisy conditions, while Gemini's low, flat token usage indicates a more direct approach whose accuracy nevertheless degrades with noise. The difference in CoT token usage could also be related to the model architectures or training methodologies.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## [Line Charts]: Performance of Two AI Models Under Increasing Noise

### Overview

The image displays three horizontally arranged line charts comparing the performance of two AI models—**Llama-4-maverick-17b-128e-instruct-fp8** (blue line) and **Gemini-2.5-flash-preview-04-17** (orange line)—across three different metrics as a function of "Noise ratio." The charts share a common x-axis but have different y-axes, measuring "Mean progress ratio," "Mean success rate (pass@1)," and "Cot tokens," respectively.

### Components/Axes

* **Legend:** Located in the top-left corner of the first (leftmost) chart. It identifies the two data series:

* Blue line with circle markers: `(Llama-4-maverick-17b-128e-instruct-fp8)`

* Orange line with circle markers: `(Gemini-2.5-flash-preview-04-17)`

* **Common X-Axis (All Charts):**

* **Label:** `Noise ratio`

* **Scale:** Linear, from `0.00` to `1.00`.

* **Tick Marks:** `0.00`, `0.25`, `0.50`, `0.75`, `1.00`.

* **Left Chart Y-Axis:**

* **Label:** `Mean progress ratio`

* **Scale:** Linear, from `0.0` to `1.0`.

* **Tick Marks:** `0.0`, `0.2`, `0.4`, `0.6`, `0.8`, `1.0`.

* **Middle Chart Y-Axis:**

* **Label:** `Mean success rate (pass@1)`

* **Scale:** Linear, from `0.0` to `1.0`.

* **Tick Marks:** `0.0`, `0.2`, `0.4`, `0.6`, `0.8`, `1.0`.

* **Right Chart Y-Axis:**

* **Label:** `Cot tokens`

* **Scale:** Linear, from `0` to `1750`.

* **Tick Marks:** `0`, `250`, `500`, `750`, `1000`, `1250`, `1500`, `1750`.

### Detailed Analysis

#### Chart 1: Mean Progress Ratio vs. Noise Ratio

* **Trend Verification:** Both lines show a clear downward trend as noise increases. The orange line (Gemini) has a steeper negative slope than the blue line (Llama).

* **Data Points (Approximate):**

* **Llama (Blue):** Starts at ~0.24 (Noise=0.00), decreases gradually to ~0.18 (0.25), ~0.16 (0.50), ~0.12 (0.75), and ends at ~0.11 (1.00).

* **Gemini (Orange):** Starts significantly higher at ~0.72 (0.00), decreases to ~0.65 (0.25), ~0.54 (0.50), ~0.38 (0.75), and ends at ~0.24 (1.00).

* **Key Observation:** Gemini consistently achieves a higher mean progress ratio than Llama across all noise levels, though the gap narrows as noise increases.

#### Chart 2: Mean Success Rate (pass@1) vs. Noise Ratio

* **Trend Verification:** Both lines show a strong downward trend. The orange line (Gemini) starts high and declines sharply. The blue line (Llama) starts very low and declines to near zero.

* **Data Points (Approximate):**

* **Llama (Blue):** Starts very low at ~0.03 (0.00), drops to ~0.01 (0.25), and is at or near `0.0` for noise ratios of 0.50, 0.75, and 1.00.

* **Gemini (Orange):** Starts at ~0.62 (0.00), decreases to ~0.50 (0.25), ~0.34 (0.50), ~0.19 (0.75), and ends at ~0.04 (1.00).

* **Key Observation:** Gemini has a substantially higher success rate than Llama at all noise levels. Llama's success rate is negligible, especially beyond a noise ratio of 0.25.

#### Chart 3: Cot Tokens vs. Noise Ratio

* **Trend Verification:** The blue line (Llama) shows a clear downward trend. The orange line (Gemini) is relatively flat with a very slight upward trend.

* **Data Points (Approximate):**

* **Llama (Blue):** Starts at ~1720 tokens (0.00), decreases to ~1640 (0.25), ~1620 (0.50), ~1510 (0.75), and ends at ~1460 (1.00).

* **Gemini (Orange):** Remains consistently low, starting at ~350 tokens (0.00), with values around ~350 (0.25), ~360 (0.50), ~355 (0.75), and ~370 (1.00).

* **Key Observation:** There is a massive disparity in token usage. Llama uses approximately 4-5 times more Chain-of-Thought (Cot) tokens than Gemini across all noise levels. Llama's token count decreases with noise, while Gemini's remains stable.
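The "approximately 4-5 times" figure can be checked directly from the approximate data points listed for Chart 3. This is a minimal sketch over those readings; the helper name `token_ratios` is illustrative.

```python
# Approximate Cot token counts from the Chart 3 data points above.
NOISE = [0.00, 0.25, 0.50, 0.75, 1.00]
LLAMA_TOKENS = [1720, 1640, 1620, 1510, 1460]
GEMINI_TOKENS = [350, 350, 360, 355, 370]


def token_ratios(numerator, denominator):
    """Element-wise ratio of two equally long lists of token counts."""
    return [a / b for a, b in zip(numerator, denominator)]


if __name__ == "__main__":
    for noise, ratio in zip(NOISE, token_ratios(LLAMA_TOKENS, GEMINI_TOKENS)):
        print(f"noise={noise:.2f} llama/gemini={ratio:.2f}x")
```

With these readings the ratio shrinks from about 4.9x at zero noise to just under 4x at a noise ratio of 1.00, because Llama's count falls while Gemini's stays roughly flat.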

### Key Observations

1. **Performance Hierarchy:** Gemini-2.5-flash-preview-04-17 outperforms Llama-4-maverick-17b-128e-instruct-fp8 on both "Mean progress ratio" and "Mean success rate" metrics at every tested noise level.

2. **Noise Sensitivity:** Both models' performance metrics degrade as the noise ratio increases. The degradation in success rate is particularly severe for both.

3. **Efficiency Disparity:** The models exhibit opposite behaviors in resource usage. The higher-performing Gemini model uses a consistently low and stable number of Cot tokens. The lower-performing Llama model uses a very high number of Cot tokens, which decreases as noise increases (possibly indicating a failure to generate lengthy reasoning chains in noisy conditions).

4. **Llama's Near-Zero Success:** Llama's mean success rate (pass@1) is effectively zero for noise ratios of 0.50 and above, indicating a complete failure to produce correct final answers under moderate to high noise.

### Interpretation

The data suggests a fundamental difference in behavior between the two tested models. **Gemini-2.5-flash-preview-04-17** is both higher-performing and more efficient (lower token usage) on this specific task at every noise level. It is not immune to noise, however: its success rate falls from ~0.62 to ~0.04 as the noise ratio goes from 0.00 to 1.00.

In contrast, **Llama-4-maverick-17b-128e-instruct-fp8** struggles significantly. Its low progress and near-zero success rates, coupled with very high token consumption, indicate it may be generating lengthy but ineffective reasoning chains ("Cot tokens") that fail to lead to correct solutions, especially when the input is corrupted by noise. The decrease in its token count with higher noise might reflect an inability to sustain coherent reasoning rather than increased efficiency.

The "Noise ratio" likely represents the proportion of corrupted or irrelevant information in the input prompt. The charts demonstrate that maintaining performance in the presence of such noise is a critical challenge, and the two models handle it with vastly different levels of efficacy and efficiency. This analysis would be crucial for selecting a model for real-world applications where input data may be imperfect.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Charts: Model Performance vs Noise Ratio

### Overview

Three line charts compare the performance of two AI models (Llama-4-maverick-17b-128e-instruct-fp8 and Gemini-2.5-flash-preview-04-17) across three metrics as noise ratio increases from 0.00 to 1.00. Each chart uses distinct y-axes for different metrics.

### Components/Axes

1. **X-axis**: Noise ratio (0.00 to 1.00 in 0.25 increments)

2. **Y-axes**:

- Chart 1: Mean progress ratio (0.0 to 1.0)

- Chart 2: Mean success rate (pass@1) (0.0 to 1.0)

- Chart 3: Cot tokens (0 to 1750)

3. **Legends**:

- Blue circles: Llama-4-maverick-17b-128e-instruct-fp8

- Orange circles: Gemini-2.5-flash-preview-04-17

4. **Legend placement**: Top-left corner of each chart

### Detailed Analysis

#### Chart 1: Mean progress ratio

- **Llama (blue)**: Starts at ~0.25, decreases gradually to ~0.12

- **Gemini (orange)**: Starts at ~0.75, decreases steeply to ~0.22

- **Trend**: Both decline, but Gemini shows sharper degradation

#### Chart 2: Mean success rate (pass@1)

- **Llama (blue)**: Starts at ~0.03, drops to near 0

- **Gemini (orange)**: Starts at ~0.62, decreases to ~0.04

- **Trend**: Both collapse at higher noise, Gemini maintains higher values initially

#### Chart 3: Cot tokens

- **Llama (blue)**: Starts at ~1750, decreases to ~1450

- **Gemini (orange)**: Remains flat at ~250

- **Trend**: Llama shows significant computational cost reduction, Gemini stable

### Key Observations

1. **Performance degradation**: Both models deteriorate with noise, but Gemini's decline is more pronounced in progress ratio and success rate

2. **Computational efficiency**: Llama's token usage drops significantly with noise, while Gemini maintains constant low usage

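3. **Robustness**: Llama's success-rate curve is flatter than Gemini's, but only because it starts near zero (~0.03) without noise; this is a floor effect rather than evidence of resilience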

4. **High-noise behavior**: Progress ratio and success rate reach their lowest values at the highest noise ratios for both models, while Gemini's Cot tokens stay flat throughout

### Interpretation

The data suggests Gemini-2.5 outperforms Llama-4 at low noise but loses most of that advantage as noise increases, particularly in success rate. Gemini also shows superior computational efficiency, with consistently low token usage. The sharp decline in Gemini's progress ratio (~0.75 to ~0.22) indicates that its performance depends heavily on clean input. The Cot token metric shows that Llama's reasoning gets shorter under noise (~1750 to ~1450) yet remains far longer than Gemini's, whose token usage stays constant. These findings highlight the gap between peak performance and robustness in noisy environments.
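Because every value in these descriptions is read off the chart by eye, the descriptions above do not agree exactly. A quick way to gauge that uncertainty is to compare the readings of a single point. The sketch below takes Gemini's mean progress ratio at a noise ratio of 0.50 as reported by the four descriptions that list it; the dictionary keys reuse the EXPERT labels, and the helper name `spread` is illustrative.

```python
from statistics import median

# Gemini mean progress ratio at noise ratio 0.50, as read by each description.
READINGS = {
    "gemini-2.0-flash": 0.40,
    "gemini-2.5-flash-free": 0.55,
    "gemma-3-27b-it-free": 0.32,
    "healer-alpha-free": 0.54,
}


def spread(values):
    """Difference between the largest and smallest reading."""
    values = list(values)
    return max(values) - min(values)


if __name__ == "__main__":
    values = list(READINGS.values())
    print(f"median={median(values):.2f} spread={spread(values):.2f}")
```

The readings of this one point span about 0.23, which is larger than several of the differences discussed above, so small gaps between the two models should be treated with caution.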
