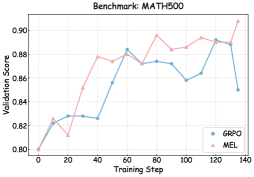

## Line Chart: Validation Score vs. Training Step (Benchmark: MATH500)

### Overview

This line chart plots validation score against training step for two models, GRPO and MEL, evaluated on the MATH500 benchmark during training.

### Components/Axes

* **Title:** Benchmark: MATH500 (positioned at the top-center)

* **X-axis:** Training Step (ranging from approximately 0 to 140, with markers at intervals of 20)

* **Y-axis:** Validation Score (ranging from approximately 0.80 to 0.91, with markers at intervals of 0.02)

* **Legend:** Located in the bottom-right corner.

    * GRPO (represented by a blue line with circular markers)

    * MEL (represented by a pink line with triangular markers)

### Detailed Analysis

**GRPO (Blue Line):**

The GRPO line rises from approximately 0.81 at training step 0 to around 0.88 at step 60. It then dips to approximately 0.86 at step 80, stays within roughly 0.86 to 0.89 through step 120, and drops sharply to approximately 0.85 at step 140.

* (0, 0.81)

* (20, 0.83)

* (40, 0.85)

* (60, 0.88)

* (80, 0.86)

* (100, 0.87)

* (120, 0.89)

* (140, 0.85)

**MEL (Pink Line):**

The MEL line starts at approximately 0.80 at training step 0, rises to around 0.89 at step 80, dips to approximately 0.88 at step 100, and then reaches its highest value of approximately 0.91 at step 120. It ends at approximately 0.89 at step 140.

* (0, 0.80)

* (20, 0.83)

* (40, 0.87)

* (60, 0.88)

* (80, 0.89)

* (100, 0.88)

* (120, 0.91)

* (140, 0.89)
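To make the comparison between the two series concrete, the listed readings can be put side by side and the per-step gap computed. The following is a minimal sketch, not part of the original figure: the variable and function names are illustrative, and the values are the approximate points listed above (read off the chart by eye), so the gaps carry the same uncertainty.

```python
# Approximate readings copied from the point lists above.
# They are estimates read off the chart, not exact logged values.
STEPS = [0, 20, 40, 60, 80, 100, 120, 140]
GRPO = [0.81, 0.83, 0.85, 0.88, 0.86, 0.87, 0.89, 0.85]
MEL = [0.80, 0.83, 0.87, 0.88, 0.89, 0.88, 0.91, 0.89]


def gaps(baseline, other):
    """Return other - baseline per step, rounded to 2 decimals to hide float noise."""
    return [round(o - b, 2) for b, o in zip(baseline, other)]


mel_minus_grpo = gaps(GRPO, MEL)

# Print a small side-by-side table: step, GRPO, MEL, MEL - GRPO.
print(f"{'step':>5} {'GRPO':>6} {'MEL':>6} {'gap':>6}")
for step, g, m, d in zip(STEPS, GRPO, MEL, mel_minus_grpo):
    print(f"{step:>5} {g:>6.2f} {m:>6.2f} {d:>+6.2f}")
```

A positive gap means MEL scored higher at that step. Because the readings are only precise to about 0.01, gaps of that size should be treated as ties.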

### Key Observations

* Both models show an initial increase in validation score as training progresses.

* Based on the listed points, MEL matches or exceeds GRPO at every step after step 0 and leads from step 80 onward, by about 0.01 to 0.04.

* GRPO drops more sharply at the end of training: about 0.04 between steps 120 and 140, compared with about 0.02 for MEL.

* MEL reaches its highest score (about 0.91) at training step 120, while GRPO's listed readings stay between roughly 0.86 and 0.89 from step 60 to step 120.

### Interpretation

The chart suggests that MEL performs better than GRPO on the MATH500 benchmark in the later part of training: the two are roughly tied through step 60, after which MEL leads. The initial increase in validation score for both models indicates that they are learning from the training data. The decline in GRPO's score at step 140 could indicate overfitting or a need to adjust the training parameters, although a single evaluation point is not enough to distinguish these from noise. MEL's highest score at step 120 suggests that a checkpoint near that step is a reasonable stopping point for this model on this benchmark, since the score falls by about 0.02 at step 140. The difference in final validation scores (about 0.89 versus 0.85) points to a potential benefit of using MEL over GRPO for this specific task.
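The stopping-point reasoning above amounts to picking the evaluated step with the highest validation score and checking how far the final score falls below it. The sketch below illustrates that under stated assumptions: the helper names `best_checkpoint` and `drop_from_best` are hypothetical, and the inputs are the approximate readings listed earlier rather than exact logged metrics.

```python
# Approximate readings from the chart (estimates, not exact logged values).
STEPS = [0, 20, 40, 60, 80, 100, 120, 140]
SCORES = {
    "GRPO": [0.81, 0.83, 0.85, 0.88, 0.86, 0.87, 0.89, 0.85],
    "MEL": [0.80, 0.83, 0.87, 0.88, 0.89, 0.88, 0.91, 0.89],
}


def best_checkpoint(steps, scores):
    """Return (step, score) for the highest score; the earliest step wins ties."""
    i = max(range(len(scores)), key=lambda k: scores[k])
    return steps[i], scores[i]


def drop_from_best(scores):
    """Return how far the final score sits below the best score, rounded to 2 decimals."""
    return round(max(scores) - scores[-1], 2)


for name, scores in SCORES.items():
    step, score = best_checkpoint(STEPS, scores)
    print(f"{name}: best at step {step} ({score:.2f}), "
          f"final is {drop_from_best(scores):.2f} below best")
```

With evaluations only every 20 steps, the true best step could lie anywhere between the neighbouring checkpoints, so the selected step is a coarse estimate.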