
## Line Graph: Decay of Logarithmic Difference vs. Number of Layers

### Overview

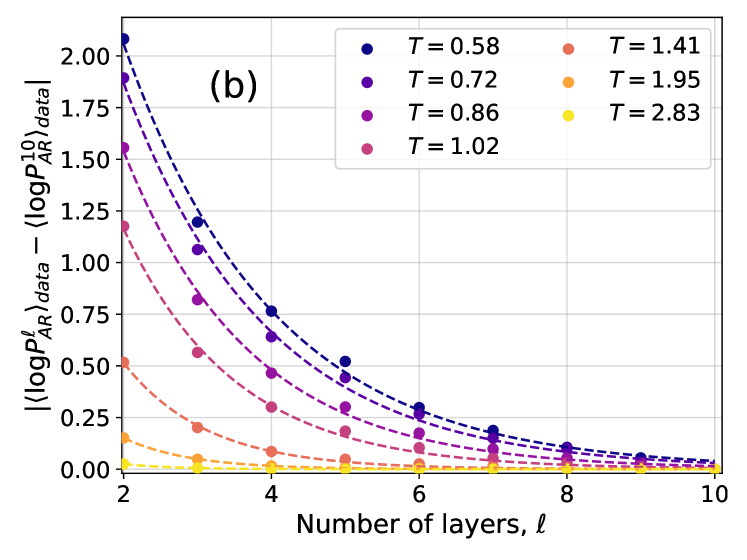

The image is a scientific line graph, labeled "(b)" in its top-left corner, plotting the absolute difference between two logarithmic quantities against the number of layers, ℓ. The graph displays seven distinct data series, each corresponding to a different value of a parameter `T`. All series show a decaying trend as the number of layers increases.

### Components/Axes

* **Chart Label:** "(b)" located in the top-left quadrant of the plot area.

* **X-Axis:**
    * **Title:** "Number of layers, ℓ"
    * **Scale:** Linear, ranging from 2 to 10.
    * **Major Tick Marks:** At integers 2, 4, 6, 8, 10.

* **Y-Axis:**
    * **Title:** `| (log P_AR^ℓ)_data - (log P_AR^10)_data |`
    * **Scale:** Linear, ranging from 0.00 to 2.00.
    * **Major Tick Marks:** At intervals of 0.25 (0.00, 0.25, 0.50, ..., 2.00).

* **Legend:** Positioned in the top-right corner of the plot area. It contains seven entries, each pairing a colored circle marker with a `T` value.
    * **Entries (from top to bottom):**
        1. Dark Blue Circle: `T = 0.58`
        2. Purple Circle: `T = 0.72`
        3. Magenta Circle: `T = 0.86`
        4. Pink Circle: `T = 1.02`
        5. Salmon/Light Red Circle: `T = 1.41`
        6. Orange Circle: `T = 1.95`
        7. Yellow Circle: `T = 2.83`

### Detailed Analysis

The graph plots the convergence of a quantity `log P_AR^ℓ` (for ℓ layers) towards its value at 10 layers (`log P_AR^10`). The y-axis measures the absolute difference from this reference point.
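The y-axis quantity can be sketched as a short Python function. This is a minimal sketch assuming the per-layer values of `log P_AR^ℓ` for one `T` are already available as a mapping from ℓ to a float; the function name `diff_from_reference` and the example values are hypothetical and are not read from the figure.

```python
from __future__ import annotations


def diff_from_reference(log_p: dict[int, float], ref_layer: int = 10) -> dict[int, float]:
    """Return |log_p[l] - log_p[ref_layer]| for every layer l, ordered by l."""
    ref = log_p[ref_layer]  # reference value: the 10-layer result by default
    return {layer: abs(value - ref) for layer, value in sorted(log_p.items())}


# Hypothetical log P_AR values for a single T (illustrative only, not figure data).
log_p_example = {2: -5.0, 4: -5.6, 6: -5.8, 8: -5.9, 10: -5.95}
diffs = diff_from_reference(log_p_example)
```

By construction `diffs[10]` is exactly zero, which is why every curve in the figure ends at zero regardless of `T`.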

**Trend Verification & Data Points (Approximate):**

For each series, the value decreases monotonically as ℓ increases from 2 to 10. The decay is rapid at small ℓ and then levels off, reaching exactly zero at ℓ=10 by construction.

1. **T = 0.58 (Dark Blue):** Starts highest. At ℓ=2, y ≈ 2.05, slightly above the top labeled tick. Decays to y ≈ 0.05 near ℓ=10.

2. **T = 0.72 (Purple):** At ℓ=2, y ≈ 1.90. Decays to y ≈ 0.04 near ℓ=10.

3. **T = 0.86 (Magenta):** At ℓ=2, y ≈ 1.55. Decays to y ≈ 0.03 near ℓ=10.

4. **T = 1.02 (Pink):** At ℓ=2, y ≈ 1.20. Decays to y ≈ 0.02 near ℓ=10.

5. **T = 1.41 (Salmon):** At ℓ=2, y ≈ 0.52. Decays to y ≈ 0.01 near ℓ=10.

6. **T = 1.95 (Orange):** At ℓ=2, y ≈ 0.15. Decays to y ≈ 0.005 near ℓ=10.

7. **T = 2.83 (Yellow):** Starts lowest. At ℓ=2, y ≈ 0.03. Decays to nearly 0.00 near ℓ=10.
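The ordering of the initial differences by `T` can be checked directly against the approximate ℓ=2 readings listed above. This is a minimal sketch; the readings are eyeballed estimates from the plot rather than underlying data, and the helper name `is_ordered_by_t` is hypothetical.

```python
from __future__ import annotations

# Approximate readings at ℓ = 2 from the list above (T -> y).
initial_diff = {
    0.58: 2.05, 0.72: 1.90, 0.86: 1.55, 1.02: 1.20,
    1.41: 0.52, 1.95: 0.15, 2.83: 0.03,
}


def is_ordered_by_t(values: dict[float, float]) -> bool:
    """True if y strictly decreases as T increases."""
    ys = [values[t] for t in sorted(values)]
    return all(a > b for a, b in zip(ys, ys[1:]))


# Ratio between the largest and smallest initial differences.
spread = initial_diff[0.58] / initial_diff[2.83]
```

With these readings the initial difference spans roughly a factor of 68 between `T = 0.58` and `T = 2.83`; since the smaller reading (about 0.03) is close to zero, the ratio is only a rough indication.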

**Summary:** The initial value (at ℓ=2) depends strongly on `T`: lower `T` gives a larger initial difference, and correspondingly more layers are needed before the curve approaches zero.

### Key Observations

* **Systematic Ordering:** The curves are consistently ordered by `T`. At every layer ℓ < 10, a lower `T` corresponds to a higher y-value; at ℓ=10 all curves coincide at zero.

* **Convergence:** All series reach zero at ℓ=10. This is guaranteed by construction rather than evidence of convergence, because the y-axis measures the difference from the ℓ=10 value itself.

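* **Shape:** Each series follows a similar convex, decreasing curve, consistent with an exponential or power-law decay in ℓ. Because the ℓ=10 value is subtracted, however, a pure exponential `A·exp(-ℓ/ξ)` (amplitude `A`, decay length `ξ`) would appear as `A·(exp(-ℓ/ξ) - exp(-10/ξ))`, the same curve shifted down by a constant. Any fit should therefore exclude ℓ=10 or include an offset term.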
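One way to probe the exponential-versus-power-law question is to linearize the data: an exponential `A·exp(-ℓ/ξ)` is a straight line in (ℓ, log y), while a power law `A·ℓ^(-α)` is a straight line in (log ℓ, log y). The sketch below compares the R² of both straight-line fits. The helper `compare_decay_models` is hypothetical and is exercised on synthetic exponential data, not on the figure. With only a handful of layers per series, and with the ℓ=10 point forced to zero, such a comparison is weak evidence at best.

```python
import math


def _r_squared(xs, ys):
    """R² of an ordinary least-squares line y = a + b*x (squared Pearson correlation)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    if sxx == 0 or syy == 0:
        raise ValueError("degenerate data: constant x or y")
    return (sxy * sxy) / (sxx * syy)


def compare_decay_models(ells, diffs):
    """Compare exponential vs power-law fits by R² on log-transformed data.

    Points with y <= 0 (such as the ℓ = 10 reference) are dropped,
    because log y is undefined there.
    """
    pts = [(l, y) for l, y in zip(ells, diffs) if y > 0]
    if len(pts) < 3:
        raise ValueError("need at least three positive points")
    log_y = [math.log(y) for _, y in pts]
    r2_exp = _r_squared([l for l, _ in pts], log_y)            # log y vs ℓ
    r2_pow = _r_squared([math.log(l) for l, _ in pts], log_y)  # log y vs log ℓ
    return {"exponential": r2_exp, "power_law": r2_pow}


# Hypothetical pure-exponential data (A = 2, ξ = 2), not read from the figure.
ells = list(range(2, 10))
synthetic = [2.0 * math.exp(-l / 2.0) for l in ells]
scores = compare_decay_models(ells, synthetic)
```

On this synthetic input the exponential fit scores essentially 1.0 and the power-law fit scores lower; real readings would need the offset caveat above taken into account.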

### Interpretation

This graph shows how the quantity `log P_AR^ℓ`, computed by a model or calculation of depth ℓ, changes as the number of layers increases. Each value is compared to the 10-layer result, which serves as a proxy for the converged value.

* **What the data suggests:** `T` sets how far the shallow-depth result lies from the 10-layer result. At low `T`, the output at small ℓ differs substantially from the ℓ=10 output, and more layers are needed before it stabilizes. At high `T`, the output is already close to its 10-layer value with only a few layers.

* **Relationship between elements:** The x-axis (model depth) is the independent variable driving convergence. The y-axis (log difference) is the dependent measure of error or change. The legend (`T`) is a control parameter that modulates the relationship between depth and convergence speed.

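* **Notable implication:** The plot gives a quantitative way to estimate how many layers are needed for a stable output at a given `T`. For `T=2.83`, the difference is only about 0.03 already at ℓ=2, so very few layers appear sufficient; for `T=0.58`, even 8 layers leave a non-negligible difference from the 10-layer result. Because ℓ=10 is itself the reference, the plot cannot show whether 10 layers are fully converged; it only measures agreement with the 10-layer result. This informs the trade-off between computational cost and accuracy when choosing model depth.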
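Reading a required depth off the plot amounts to finding the smallest ℓ beyond which the difference stays below a chosen tolerance. A minimal sketch, assuming the per-layer differences for one `T` are available as a mapping from ℓ to y; the function name `layers_needed`, the tolerances, and the example differences are hypothetical.

```python
from __future__ import annotations

from typing import Optional


def layers_needed(diffs: dict[int, float], tol: float) -> Optional[int]:
    """Smallest layer count l such that the difference at l and at every larger layer is below tol."""
    layers = sorted(diffs)
    for i, layer in enumerate(layers):
        if all(diffs[later] < tol for later in layers[i:]):
            return layer
    return None  # tolerance never met (e.g. tol <= 0)


# Hypothetical differences for a single T (illustrative only, not figure data).
example = {2: 0.95, 4: 0.35, 6: 0.15, 8: 0.05, 10: 0.0}
```

For instance, `layers_needed(example, 0.5)` gives 4 and `layers_needed(example, 0.1)` gives 8. Because the ℓ=10 difference is zero by construction, any positive tolerance is met by ℓ=10 at the latest, so the result says nothing about whether 10 layers are themselves converged.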