## Diagram: Chain-of-Thought (CoT) with Latent Token Compression

### Overview

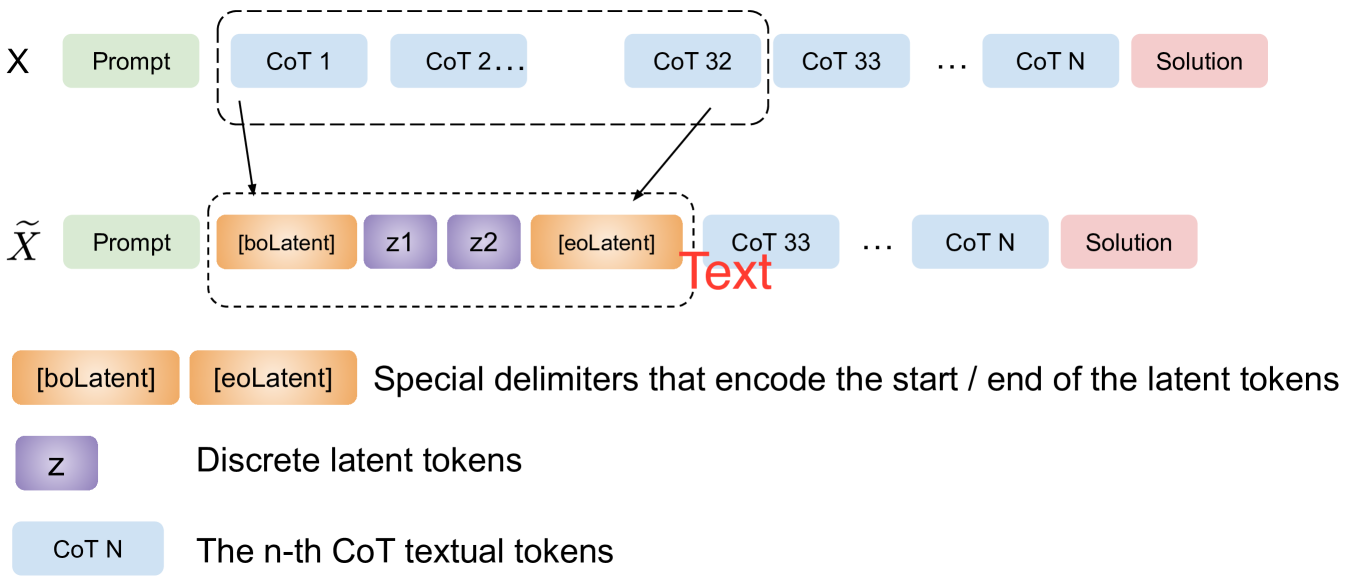

The schematic shows how a segment of a textual Chain-of-Thought (CoT) reasoning sequence is replaced by a short block of discrete latent tokens. It contrasts a standard textual sequence (X) with a modified sequence (X̃) in which the first 32 CoT tokens are replaced by a latent representation.

### Components/Axes

The diagram is structured into three main horizontal sections:

1. **Top Row (Sequence X):** Represents a standard input/output sequence for a language model.

   * **Components (left to right):**

     * A green box labeled `Prompt`.

     * A dashed-line box enclosing a series of blue boxes: `CoT 1`, `CoT 2 ...`, `CoT 32`.

     * A series of blue boxes outside the dashed box: `CoT 33`, `...`, `CoT N`.

     * A final pink box labeled `Solution`.

   * **Spatial Grounding:** The dashed box is positioned centrally, encompassing the first 32 CoT tokens.

2. **Middle Row (Sequence X̃):** Represents the modified sequence with latent compression.

   * **Components (left to right):**

     * A green box labeled `Prompt`.

     * A dashed-line box (aligned vertically with the one above) enclosing:

       * An orange box labeled `[boLatent]`.

       * Two purple boxes labeled `z1` and `z2`.

       * An orange box labeled `[eoLatent]`.

     * A red text label `Text` with an arrow pointing from the `[eoLatent]` box to the next component.

     * A series of blue boxes: `CoT 33`, `...`, `CoT N`.

     * A final pink box labeled `Solution`.

   * **Spatial Grounding:** The dashed box in this row is in the same horizontal position as the one above, indicating a direct replacement. The `Text` label is positioned to the right of the dashed box.

3. **Bottom Legend:** Explains the meaning of the colored boxes.

   * **Orange Boxes:** `[boLatent]` and `[eoLatent]`, labeled as "Special delimiters that encode the start / end of the latent tokens".

   * **Purple Box:** `z`, labeled as "Discrete latent tokens".

   * **Blue Box:** `CoT N`, labeled as "The n-th CoT textual tokens".

### Detailed Analysis

The diagram explicitly maps the transformation from sequence X to sequence X̃:

* **Transformation:** The segment of the sequence from `CoT 1` to `CoT 32` in the original sequence (X) is replaced in the modified sequence (X̃).

* **Replacement Content:** The replacement consists of four tokens: two discrete latent tokens enclosed by two special delimiters.

  1. `[boLatent]` (begin latent)

  2. `z1` (first discrete latent token)

  3. `z2` (second discrete latent token)

  4. `[eoLatent]` (end latent)

* **Flow Continuity:** After the `[eoLatent]` delimiter, the sequence resumes with the original textual tokens starting from `CoT 33` and continues to the `Solution`. The red `Text` label emphasizes the transition back to the textual domain.

* **Key Relationship:** The dashed boxes and their vertical alignment visually assert that the latent block (`[boLatent] z1 z2 [eoLatent]`) is a functional substitute for the 32 textual CoT tokens.
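
The mapping from X to X̃ described above can be sketched at the level of token lists. This is only an illustration under stated assumptions: the function name `build_hybrid_sequence`, the choice of N = 40, and the use of placeholder strings for `z1` and `z2` are hypothetical. The diagram does not show how the latent tokens are actually computed, so the sketch takes them as given.

```python
from typing import List

# Delimiter strings as they appear in the diagram.
BO_LATENT = "[boLatent]"
EO_LATENT = "[eoLatent]"


def build_hybrid_sequence(
    prompt: List[str],
    cot: List[str],
    solution: List[str],
    latents: List[str],
    n_replaced: int = 32,
) -> List[str]:
    """Replace the first `n_replaced` CoT tokens with a delimited latent block.

    Hypothetical helper: `latents` is supplied by the caller because the
    diagram does not specify how z1 and z2 are produced.
    """
    if len(cot) < n_replaced:
        raise ValueError("CoT is shorter than the segment to replace")
    latent_block = [BO_LATENT, *latents, EO_LATENT]
    # Everything after the replaced segment is kept as ordinary text tokens.
    return [*prompt, *latent_block, *cot[n_replaced:], *solution]


prompt = ["Prompt"]
cot = [f"CoT {i}" for i in range(1, 41)]  # N = 40, chosen only for illustration
solution = ["Solution"]

x = prompt + cot + solution
x_tilde = build_hybrid_sequence(prompt, cot, solution, latents=["z1", "z2"])

print(len(x), len(x_tilde))
print(x_tilde[:6])
```

With these placeholder inputs, the first text token after `[eoLatent]` is `CoT 33`, matching the point where the diagram's `Text` arrow returns to the textual domain.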

### Key Observations

1. **Fixed Compression Ratio:** The diagram shows exactly 32 textual CoT tokens compressed into 2 discrete latent tokens (`z1`, `z2`), a 16:1 ratio, or 8:1 once the 2 delimiter tokens are counted. This suggests a fixed, predefined compression scheme, although the diagram depicts only this one configuration.

2. **Hybrid Sequence:** The final sequence (X̃) is a hybrid, containing both latent representations (`z1`, `z2`) and explicit textual reasoning tokens (`CoT 33` to `CoT N`).

3. **Delimiter Necessity:** The sequence uses explicit start (`[boLatent]`) and end (`[eoLatent]`) markers, which signal where the latent block begins and where textual tokens resume.

4. **No Data Values:** This is a conceptual diagram, not a data chart. It contains no numerical data points, trends, or statistical information. Its purpose is to illustrate an architectural or methodological concept.
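
The effect of the replacement on sequence length can be worked out from the token counts in the diagram. As a sketch, let $P$ and $S$ denote the prompt and solution lengths in tokens (symbols introduced here for illustration; the diagram does not name them) and assume $N \ge 32$:

$$
\begin{aligned}
|X| &= P + N + S,\\
|\tilde{X}| &= P + \underbrace{2}_{\text{delimiters}} + \underbrace{2}_{z_1,\,z_2} + (N - 32) + S,\\
|X| - |\tilde{X}| &= 32 - 4 = 28.
\end{aligned}
$$

Under these assumptions, replacing the block shortens the sequence by 28 tokens regardless of $N$, $P$, or $S$.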

### Interpretation

This diagram illustrates a technique for **reasoning compression** in language models. The core idea is to replace a potentially lengthy, explicit chain-of-thought segment (here, 32 tokens) with a compact latent representation (`z1`, `z2`). This could serve several purposes:

* **Efficiency:** Reducing the sequence length to save computational resources during inference.

* **Abstraction:** Encapsulating a complex reasoning subroutine into a dense, symbolic form.

* **Modularity:** Allowing a system to "call" a pre-computed or specialized reasoning module (represented by the latent tokens) and then continue with textual reasoning.

The retention of the later CoT tokens (`CoT 33` onward) indicates that the latent compression is applied only to a specific segment of the reasoning process, not the entire chain. In the diagram that segment is the initial one, which suggests a selective application where only certain reasoning phases are treated as compressible. The explicit delimiters mark where the latent block starts and ends, so the hybrid sequence can be parsed unambiguously. Overall, the diagram presents a method that blends the interpretability of textual reasoning with the potential efficiency of latent representations.
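
To illustrate why the delimiters make the hybrid sequence easy to parse, here is a minimal sketch that splits a token list into text and latent segments. The function name `split_segments` and the example sequence are hypothetical. The sketch works purely on token strings and says nothing about how an actual model consumes latent tokens internally.

```python
from typing import List, Tuple

# Delimiter strings as they appear in the diagram.
BO_LATENT = "[boLatent]"
EO_LATENT = "[eoLatent]"


def split_segments(tokens: List[str]) -> List[Tuple[str, List[str]]]:
    """Split a hybrid sequence into ("text", [...]) and ("latent", [...]) runs."""
    segments: List[Tuple[str, List[str]]] = []
    current: List[str] = []
    in_latent = False
    for tok in tokens:
        if tok == BO_LATENT:
            if in_latent:
                raise ValueError("nested [boLatent]")
            if current:
                segments.append(("text", current))
            current, in_latent = [], True
        elif tok == EO_LATENT:
            if not in_latent:
                raise ValueError("[eoLatent] without matching [boLatent]")
            segments.append(("latent", current))
            current, in_latent = [], False
        else:
            current.append(tok)
    if in_latent:
        raise ValueError("unterminated latent block")
    if current:
        segments.append(("text", current))
    return segments


# Shortened example sequence (illustrative only).
x_tilde = ["Prompt", "[boLatent]", "z1", "z2", "[eoLatent]",
           "CoT 33", "CoT 34", "Solution"]
segments = split_segments(x_tilde)
for kind, toks in segments:
    print(kind, toks)
```

Without the two markers, nothing at the token-list level would distinguish where the latent block ends and the textual tokens resume, which is the role the legend assigns to `[boLatent]` and `[eoLatent]`.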