TECHNICAL ASSET FINGERPRINT

3288d2360eb140d864d11226


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

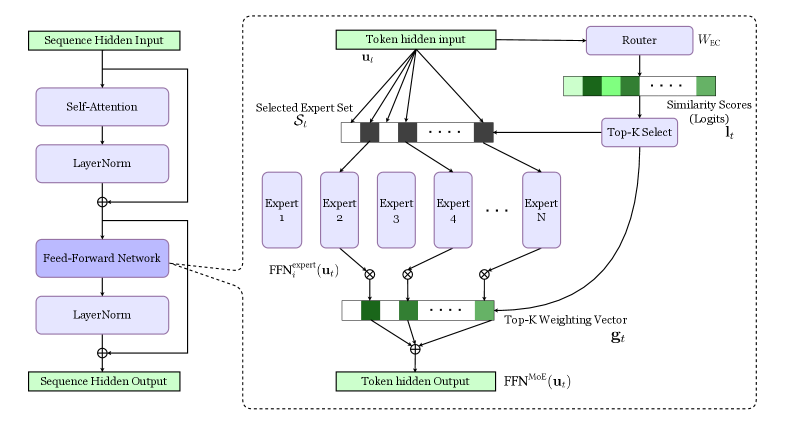

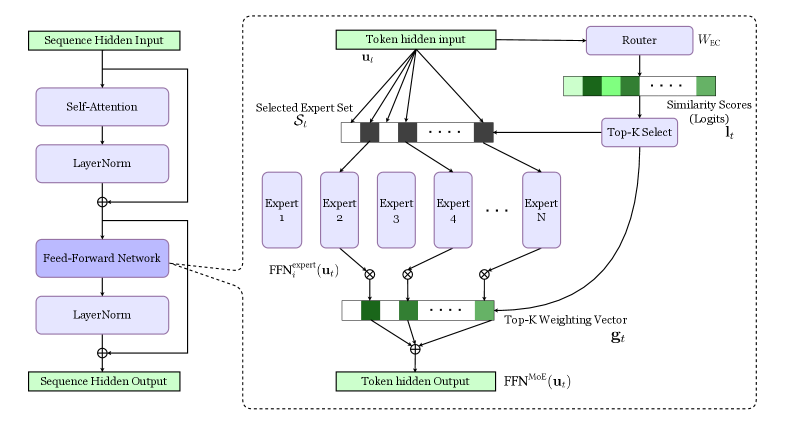

## Diagram: Mixture of Experts (MoE) Layer

### Overview

The image presents a diagram of a Mixture of Experts (MoE) layer within a neural network architecture. It illustrates the flow of data through self-attention, layer normalization, feed-forward networks, and the MoE component, which involves routing tokens to a selected set of experts and weighting their outputs.

### Components/Axes

* **Input/Output Blocks:**

* "Sequence Hidden Input" (top-left, green block)

* "Sequence Hidden Output" (bottom-left, green block)

* "Token hidden input" (top-center, green block)

* "Token hidden Output" (bottom-center, green block)

* **Processing Blocks (left side):**

* "Self-Attention" (blue block)

* "LayerNorm" (blue block)

* "Feed-Forward Network" (blue block)

* "LayerNorm" (blue block)

* **MoE Components (right side, within dashed box):**

* "Router" (blue block, top-right)

* "Selected Expert Set" (horizontal array of black/white blocks, labeled as "S<sub>t</sub>")

* "Expert 1", "Expert 2", "Expert 3", "Expert 4", ..., "Expert N" (blue blocks)

* "Similarity Scores (Logits)" (horizontal array of green blocks, top-right)

* "Top-K Select" (blue block, right)

* "Top-K Weighting Vector" (horizontal array of green blocks, bottom-center, labeled as "g<sub>t</sub>")

* **Variables:**

* "u<sub>t</sub>" (input to the experts)

* "l<sub>t</sub>" (similarity scores, i.e. logits, output by the Router and passed to Top-K Select)

* "W<sub>EC</sub>" (Router parameter)

* **Operations:**

* ⊕ (addition)

* ⊗ (multiplication)

* **Functions:**

* FFN<sup>expert</sup><sub>i</sub>(u<sub>t</sub>)

* FFN<sup>MoE</sup>(u<sub>t</sub>)

### Detailed Analysis

1. **Left Side (Sequence Processing):**

* The "Sequence Hidden Input" feeds into a "Self-Attention" block.

* The output of "Self-Attention" is normalized by "LayerNorm".

* This normalized output is added to the original input of "Self-Attention" (residual connection).

* The result is processed by a "Feed-Forward Network".

* The output of the "Feed-Forward Network" is normalized by "LayerNorm".

* This normalized output is added to the original input of the "Feed-Forward Network" (residual connection).

* The final output is the "Sequence Hidden Output".

2. **Right Side (Mixture of Experts):**

* The "Token hidden input" (u<sub>t</sub>) is fed into a "Router" with parameter "W<sub>EC</sub>".

* The "Router" outputs "Similarity Scores (Logits)" (l<sub>t</sub>), represented as a horizontal array of green blocks with varying shades of green.

* These scores are used by "Top-K Select" to select the top-K experts.

* The "Token hidden input" (u<sub>t</sub>) is also fed into each of the "Expert" blocks (Expert 1 to Expert N).

* The output of each expert, FFN<sup>expert</sup><sub>i</sub>(u<sub>t</sub>), is multiplied (⊗) by the corresponding weight from the "Top-K Weighting Vector" (g<sub>t</sub>).

* The weighted outputs of the selected experts are summed (⊕) to produce the "Token hidden Output", FFN<sup>MoE</sup>(u<sub>t</sub>).

* The "Selected Expert Set" (S<sub>t</sub>) indicates which experts are selected (black blocks) and which are not (white blocks).
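The right-side flow described above can be sketched for a single token as follows. This is a minimal illustration, not the diagram's exact method: the bias-free linear router, the softmax taken over only the selected logits, and the toy scaling "experts" are all assumptions, and `matvec`, `top_k_indices`, and `moe_forward` are hypothetical helper names.

```python
import math

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def top_k_indices(scores, k):
    """Indices of the k largest scores, highest first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(u_t, W_EC, experts, k):
    """Route one token, select the top-k experts, weight their outputs, and sum."""
    l_t = matvec(W_EC, u_t)               # similarity scores (logits), one per expert
    S_t = top_k_indices(l_t, k)           # selected expert set
    g_t = softmax([l_t[i] for i in S_t])  # ASSUMPTION: softmax over the selected logits only
    out = [0.0] * len(u_t)
    for weight, i in zip(g_t, S_t):
        y = experts[i](u_t)               # expert output, computed only for selected experts
        out = [o + weight * v for o, v in zip(out, y)]
    return out, S_t, g_t

# Toy setup (hypothetical): 4 "experts" that just scale the input, standing in for FFNs.
experts = [lambda u, s=s: [s * v for v in u] for s in (1.0, 2.0, 3.0, 4.0)]
W_EC = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
u_t = [1.0, 2.0]

output, S_t, g_t = moe_forward(u_t, W_EC, experts, k=2)
print(S_t, g_t, output)
```

With these toy values the logits are `[1, 2, 3, -1]`, so the third and second experts (indices 2 and 1) are selected and the other two are never evaluated.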

### Key Observations

* The diagram illustrates a common architecture for incorporating a Mixture of Experts layer into a neural network.

* The MoE layer allows the network to selectively route different tokens to different experts, enabling specialization and increased capacity.

* Residual connections are used around the "Self-Attention" and "Feed-Forward Network" blocks on the left side.

* The "Top-K Select" mechanism ensures that only the most relevant experts are used for each token.

### Interpretation

The diagram depicts a Mixture of Experts (MoE) layer, a technique used to increase the capacity of neural networks. The input sequence is processed through standard layers such as self-attention and feed-forward networks. The MoE layer then routes each token to a subset of experts based on the router's scores, which lets the network specialize its processing for different kinds of input. The "Top-K Select" mechanism restricts computation to the K highest-scoring experts for each token, keeping the per-token cost below that of running all N experts. The residual connections help stabilize training and improve the flow of information through the network. The varying shades of green in the "Similarity Scores" and "Top-K Weighting Vector" likely represent different magnitudes of score or weight.
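The residual pattern described for the left column (normalize the sub-layer output, then add it back to the sub-layer input) can be sketched as below. This is a sketch under stated assumptions: LayerNorm is shown without its learnable gain and bias, the function operates on a single vector rather than a sequence, and the `attention` and `ffn` stand-ins are hypothetical toy functions, not real sub-layers.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learnable gain/bias)."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def residual_sublayer(x, sublayer):
    """Compute x + LayerNorm(sublayer(x)), the ordering described in the text."""
    normed = layer_norm(sublayer(x))
    return [a + b for a, b in zip(x, normed)]

def block(x, attention, ffn):
    """Apply the attention sub-layer, then the feed-forward sub-layer, each with a residual."""
    h = residual_sublayer(x, attention)
    return residual_sublayer(h, ffn)

# Hypothetical toy stand-ins for the real sub-layers.
attention = lambda x: [sum(x) / len(x)] * len(x)
ffn = lambda x: [max(0.0, 2.0 * v - 1.0) for v in x]

print(block([1.0, 2.0, 3.0], attention, ffn))
```

Because the residual path carries `x` through unchanged, a sub-layer whose normalized output is zero leaves the input untouched.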


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Diagram: Mixture of Experts (MoE) Layer Architecture

### Overview

The image depicts the architecture of a Mixture of Experts (MoE) layer within a neural network. It illustrates how a token hidden input is routed to a selected set of experts, processed by those experts, and then combined to produce a token hidden output. The diagram highlights the key components involved in this process, including the router, experts, and weighting mechanism.

### Components/Axes

The diagram can be divided into three main sections:

1. **Left Side:** Standard Transformer block. Includes "Sequence Hidden Input", "Self-Attention", "LayerNorm", "Feed-Forward", "LayerNorm", and "Sequence Hidden Output".

2. **Right Side (within dashed box):** MoE Layer. Contains "Token hidden input u<sub>t</sub>", "Router", "Selected Expert Set S<sub>t</sub>", "Expert 1" through "Expert N", "FFN<sup>expert</sup>(u<sub>t</sub>)", "Top-K Weighting Vector g<sub>t</sub>", and "Token hidden Output FFN<sup>MoE</sup>(u<sub>t</sub>)".

3. **Connections:** Arrows indicating the flow of data between components.

Key labels include:

* **Sequence Hidden Input:** The input to the Transformer block on the left.

* **Self-Attention:** The first sub-layer of the Transformer block.

* **LayerNorm:** Layer Normalization, applied after "Self-Attention" and again after "Feed-Forward".

* **Feed-Forward:** A standard feed-forward network.

* **Token hidden input u<sub>t</sub>:** The input to the MoE layer.

* **Router:** The component responsible for routing the input to the experts.

* **W<sub>EC</sub>:** Weight matrix for the router.

* **Similarity Scores (Logits) l<sub>t</sub>:** The scores produced by the router, indicating the relevance of each expert.

* **Top-K Select:** The selection of the top K experts based on the similarity scores.

* **Selected Expert Set S<sub>t</sub>:** The set of experts selected by the router.

* **Expert 1…Expert N:** The individual expert networks.

* **FFN<sup>expert</sup>(u<sub>t</sub>):** The output of each expert network.

* **Top-K Weighting Vector g<sub>t</sub>:** The weights assigned to each selected expert.

* **Token hidden Output FFN<sup>MoE</sup>(u<sub>t</sub>):** The final output of the MoE layer.

### Detailed Analysis or Content Details

The diagram illustrates the following flow:

1. A "Sequence Hidden Input" passes through a standard Transformer block consisting of "Self-Attention", "LayerNorm", "Feed-Forward", and another "LayerNorm" to produce a "Sequence Hidden Output".

2. The "Token hidden input u<sub>t</sub>" enters the MoE layer.

3. The "Router" processes the input and generates "Similarity Scores (Logits) l<sub>t</sub>" for each expert.

4. "Top-K Select" chooses the top K experts based on these scores, forming the "Selected Expert Set S<sub>t</sub>".

5. The input "u<sub>t</sub>" is fed to each expert in the selected set, resulting in "FFN<sup>expert</sup>(u<sub>t</sub>)" outputs.

6. A "Top-K Weighting Vector g<sub>t</sub>" is calculated, assigning weights to each expert's output.

7. The weighted outputs of the experts are combined to produce the final "Token hidden Output FFN<sup>MoE</sup>(u<sub>t</sub>)".

8. The "Token hidden Output" is then added to the "Sequence Hidden Output" from the left side of the diagram.

The diagram uses circular nodes to represent operations and rectangular blocks to represent data or sets of data. The plus signs (+) indicate element-wise addition. The connections between components are represented by arrows.

### Key Observations

The MoE layer introduces sparsity by only activating a subset of experts for each input token. The router plays a crucial role in determining which experts are most relevant. The weighting vector allows for a soft combination of the experts' outputs, rather than a hard selection. The diagram clearly shows the parallel processing of the input by multiple experts.

### Interpretation

This diagram demonstrates a key architectural component in scaling large language models: the Mixture of Experts layer. By activating only a few experts per token, MoE layers enable models to increase their capacity without a proportional increase in computational cost during inference. The router acts as a learned dispatcher, sending each token to the experts with the highest similarity scores. This approach allows the model to specialize in different aspects of the data, which can improve performance. The use of a weighting vector suggests that the model can leverage the knowledge of multiple experts simultaneously, rather than relying on a single best expert. The diagram highlights the modularity and scalability of this architecture, making it well-suited for handling complex and diverse datasets. The addition operation at the end suggests a residual connection, a common technique for improving training stability and performance in deep neural networks.
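The capacity-versus-cost claim can be made concrete with a rough parameter count. The sketch below assumes each expert is a two-layer FFN without biases and that the router is a single weight matrix; the sizes (`d_model=1024`, `d_ff=4096`, 8 experts, `k=2`) are hypothetical and chosen only for illustration.

```python
def ffn_params(d_model, d_ff):
    """Weights in a two-layer FFN, biases ignored: d_model*d_ff + d_ff*d_model."""
    return 2 * d_model * d_ff

def moe_param_counts(d_model, d_ff, n_experts, k):
    """Return (total parameters stored, parameters used per token)."""
    per_expert = ffn_params(d_model, d_ff)
    router = d_model * n_experts          # one score per expert
    total = n_experts * per_expert + router
    active = k * per_expert + router      # only k experts run for a given token
    return total, active

total, active = moe_param_counts(d_model=1024, d_ff=4096, n_experts=8, k=2)
print(total, active, round(total / active, 2))
```

Under these assumptions the layer stores roughly four times as many parameters as it touches for any single token, which is the sense in which capacity grows faster than per-token compute.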


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Diagram: Transformer Block with Mixture of Experts (MoE) Feed-Forward Network

### Overview

This image is a technical architecture diagram illustrating a standard Transformer block where the conventional Feed-Forward Network (FFN) is replaced by a Mixture of Experts (MoE) layer. The diagram is split into two main sections: a left column showing the high-level sequence of a Transformer block, and a right, expanded view detailing the internal components and data flow of the MoE-based FFN.

### Components/Axes

The diagram is composed of labeled blocks (rectangles) connected by directional arrows indicating data flow. Colors are used to differentiate component types:

* **Green Blocks:** Represent input and output data states.

* **Purple Blocks:** Represent processing modules or layers.

* **Light Purple/Gray Blocks:** Represent sub-components within the MoE system.

**Left Column - Standard Transformer Block Sequence:**

1. **Sequence Hidden Input** (Green)

2. **Self-Attention** (Purple)

3. **LayerNorm** (Purple)

4. **Feed-Forward Network** (Purple, highlighted with a dashed border indicating it is expanded on the right)

5. **LayerNorm** (Purple)

6. **Sequence Hidden Output** (Green)

**Right Section - Expanded MoE Feed-Forward Network:**

This section details the components inside the dashed box originating from the "Feed-Forward Network" block.

* **Token hidden input `u_t`** (Green): The input vector for a single token at position `t`.

* **Router `W_EC`** (Purple): A module that processes the token input.

* **Similarity Scores (Logits) `l_t`** (Green bar): The output of the Router, visualized as a horizontal bar with varying shades of green, representing scores for different experts.

* **Top-K Select** (Purple): A module that selects the highest-scoring experts.

* **Selected Expert Set `S_t`** (Gray bar): A horizontal bar indicating which experts are selected (dark squares) and which are not (light squares).

* **Expert 1, Expert 2, Expert 3, Expert 4, ..., Expert N** (Purple): A set of `N` parallel Feed-Forward Network sub-modules.

* **`FFN_expert(u_t)`** (Text label): Denotes the output of each individual expert network for the input `u_t`.

* **Top-K Weighting Vector `g_t`** (Green bar): A horizontal bar representing the normalized weights (gating scores) applied to the outputs of the selected experts.

* **Token hidden Output `FFN^MoE(u_t)`** (Green): The final output of the MoE layer, which is a weighted sum of the selected experts' outputs.

### Detailed Analysis

The data flow and processing steps within the MoE layer are as follows:

1. **Input & Routing:** The `Token hidden input u_t` is fed into the `Router (W_EC)`.

2. **Scoring:** The Router computes `Similarity Scores (Logits) l_t` for all `N` experts. The visual representation of `l_t` shows a sequence of green blocks with varying intensity, suggesting a distribution of scores.

3. **Expert Selection:** The `Top-K Select` module uses the logits `l_t` to choose the `K` experts with the highest scores. This selection is represented by the `Selected Expert Set S_t`, where dark squares correspond to chosen experts (e.g., Expert 2 and Expert 4 are shown as selected in the diagram).

4. **Parallel Expert Processing:** The input `u_t` is sent simultaneously to all `N` experts. However, only the outputs from the `K` selected experts (`FFN_expert(u_t)`) will be used in the next step.

5. **Weighted Aggregation:** The outputs of the selected experts are multiplied by their corresponding weights from the `Top-K Weighting Vector g_t`. The vector `g_t` is derived from the similarity scores `l_t` (likely via a softmax over the top-K scores).

6. **Output Generation:** The weighted outputs are summed (indicated by the summation symbol ⊕) to produce the final `Token hidden Output FFN^MoE(u_t)`.
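The steps above can be written compactly as follows, with the logits denoted $l_t$. This is a hedged reconstruction from the labels in the diagram: the bias-free linear router and the softmax restricted to the selected experts are assumptions, since the diagram does not state how $g_t$ is normalized.

$$
\begin{aligned}
l_t &= W_{EC}\, u_t \in \mathbb{R}^{N} \\
S_t &= \operatorname{TopK}(l_t, K) \\
g_{t,i} &=
\begin{cases}
\dfrac{\exp(l_{t,i})}{\sum_{j \in S_t} \exp(l_{t,j})} & \text{if } i \in S_t \\[2ex]
0 & \text{otherwise}
\end{cases} \\
\mathrm{FFN}^{\mathrm{MoE}}(u_t) &= \sum_{i \in S_t} g_{t,i}\, \mathrm{FFN}^{\mathrm{expert}}_i(u_t)
\end{aligned}
$$

Under this reading the weights $g_{t,i}$ sum to 1 over the selected set, and experts outside $S_t$ contribute nothing to the output.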

### Key Observations

* **Dynamic Computation:** The architecture does not use all `N` experts for every token. The Router and Top-K selection create a dynamic, data-dependent pathway.

* **Sparsity:** The system is sparse, as only a subset (`K` out of `N`) of the expert networks are activated for any given input token, which is a key efficiency mechanism.

* **Visual Encoding of Selection:** The diagram uses a consistent visual metaphor: horizontal bars with discrete squares represent vectors (`l_t`, `S_t`, `g_t`). Darker or filled squares indicate active or selected elements (high score, chosen expert, high weight).

* **Mathematical Notation:** The diagram uses standard notations: `u_t` for input, `W_EC` for router weights, `l_t` for logits, `S_t` for the selected set, `g_t` for gating weights, and `FFN^MoE(u_t)` for the final output.

### Interpretation

This diagram explains the core mechanism of a Mixture of Experts layer, a technique used to scale model capacity (number of parameters) without a proportional increase in computational cost (FLOPs) during inference.

* **What it demonstrates:** It shows how a model can learn specialized sub-networks (experts) and a lightweight router that learns to dispatch different types of inputs (e.g., different words or concepts) to the most relevant experts. The final output is a combination of these specialized computations.

* **Relationship between elements:** The Router is the central controller. Its quality determines the efficiency and effectiveness of the entire MoE layer. A good router learns to make clean, confident selections (high `l_t` for the best experts), leading to a sparse and decisive `S_t` and an effective `g_t`. The experts themselves are standard FFNs, but their specialization emerges from training.

* **Notable implications:** The "Top-K" operation is critical. Setting `K=1` would create a hard, exclusive choice. Setting `K>1` (as implied by the diagram showing multiple selected experts and a weighting vector `g_t`) allows for a soft combination of the top experts, which can provide smoother representations and be more robust. The primary trade-off is between model quality (favoring higher `K` or more experts `N`) and computational/memory efficiency (favoring lower `K`). This architecture is foundational for very large language models that aim to be both knowledgeable and efficient.
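The effect of `K` on the weighting vector can be illustrated with a small example. It assumes, as the text above only suggests, that `g_t` is a softmax over the top-K logits with zeros elsewhere; `gating_weights` is a hypothetical helper and the logits are made up.

```python
import math

def gating_weights(logits, k):
    """Dense gating vector: softmax over the k largest logits, zero elsewhere."""
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in chosen)
    exps = {i: math.exp(logits[i] - m) for i in chosen}
    total = sum(exps.values())
    return [exps[i] / total if i in exps else 0.0 for i in range(len(logits))]

logits = [0.5, 2.0, -1.0, 1.5]  # made-up scores for N = 4 experts
for k in (1, 2, 4):
    print(k, [round(w, 3) for w in gating_weights(logits, k)])
```

With `k=1` all weight lands on the single best expert (a hard choice); with `k=2` the weight is shared between the two best; with `k=4` every expert contributes and the layer is no longer sparse.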


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Diagram: Mixture of Experts (MoE) Neural Network Architecture

### Overview

The diagram illustrates a hybrid neural network architecture combining standard Transformer components with a Mixture of Experts (MoE) mechanism. The left side shows a standard Transformer block, while the right side details the MoE routing and expert selection process.

### Components/Axes

**Left Side (Standard Transformer Block):**

- **Sequence Hidden Input** → **Self-Attention** → **LayerNorm** → **Feed-Forward Network (FFN)** → **LayerNorm** → **Sequence Hidden Output**

- Key components: Self-Attention, LayerNorm, FFN

**Right Side (MoE Mechanism):**

- **Token hidden input** → **Router** (weights: _W<sub>EC</sub>_) → **Top-K Select** (logits: _l<sub>t</sub>_) → **Selected Expert Set** (Experts 1–N)

- **FFN<sup>expert</sup>**(_u<sub>t</sub>_) → **Top-K Weighting Vector** (_g<sub>t</sub>_) → **FFN<sup>MoE</sup>**(_u<sub>t</sub>_) → **Token hidden Output**

**Key Elements:**

- Router weights: _W<sub>EC</sub>_

- Similarity scores: Logits (_l<sub>t</sub>_)

- Expert selection: Top-K mechanism

- Expert outputs: Combined via weighting vector _g<sub>t</sub>_

### Detailed Analysis

1. **Standard Transformer Flow:**

- Input sequence undergoes self-attention and layer normalization

- Feed-forward network processes the output

- Final layer normalization produces sequence-level hidden states

2. **MoE Mechanism:**

- Token-level input (_u<sub>t</sub>_) is routed through a learned weight matrix _W<sub>EC</sub>_

- Router computes similarity scores (logits _l<sub>t</sub>_) for all experts

- Top-K experts are selected based on highest logits

- Selected experts process the input independently

- Final output combines expert results using a Top-K weighting vector _g<sub>t</sub>_

3. **Mathematical Notation:**

- Expert-specific FFN: FFN<sup>expert</sup>(_u<sub>t</sub>_)

- MoE-combined FFN: FFN<sup>MoE</sup>(_u<sub>t</sub>_)

- Weighting vector: _g<sub>t</sub>_ (Top-K experts)

### Key Observations

- **Dynamic Expert Selection:** Each token independently selects experts based on similarity scores

- **Expert Specialization:** N distinct experts handle different input patterns

- **Efficiency:** Only K experts are activated per token (K << N)

- **Integration:** MoE output merges with standard Transformer processing
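Per-token selection means different tokens in the same sequence can land on different experts. The sketch below routes three tokens with made-up logits and counts how often each expert is used; `route_sequence` and `expert_load` are hypothetical helper names, and the logits would in practice come from the router rather than being hard-coded.

```python
from collections import Counter

def route_sequence(token_logits, k):
    """For each token, return the indices of its k highest-scoring experts."""
    routes = []
    for logits in token_logits:
        order = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
        routes.append(order[:k])
    return routes

def expert_load(routes):
    """Count how many tokens were dispatched to each expert."""
    return Counter(i for selected in routes for i in selected)

# Made-up router logits: 3 tokens, N = 4 experts.
token_logits = [
    [2.0, 0.1, -1.0, 0.5],
    [0.0, 1.5, 1.0, -0.5],
    [1.2, -0.3, 0.4, 2.2],
]

routes = route_sequence(token_logits, k=2)
print(routes)
print(expert_load(routes))
```

Each token activates only 2 of the 4 experts, and the load across experts is uneven, which is why practical MoE systems usually pay attention to how routing decisions are distributed.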

### Interpretation

This architecture demonstrates a hybrid approach to neural network design:

1. **Specialization vs. Generality:** Standard Transformer components handle general sequence processing, while MoE experts specialize in specific input patterns

2. **Efficiency Gains:** By activating only K experts per token, the model reduces computational load compared to using all N experts

3. **Adaptive Routing:** The router's learned weights _W<sub>EC</sub>_ enable dynamic adaptation to input characteristics

4. **Performance Tradeoff:** The Top-K selection balances expert diversity with computational constraints

The diagram itself reports no results; the design it shows aims to raise model capacity while keeping per-token compute bounded through expert specialization and sparse activation.
