TECHNICAL ASSET FINGERPRINT

328f14b65b62e6d351fbe7a1

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

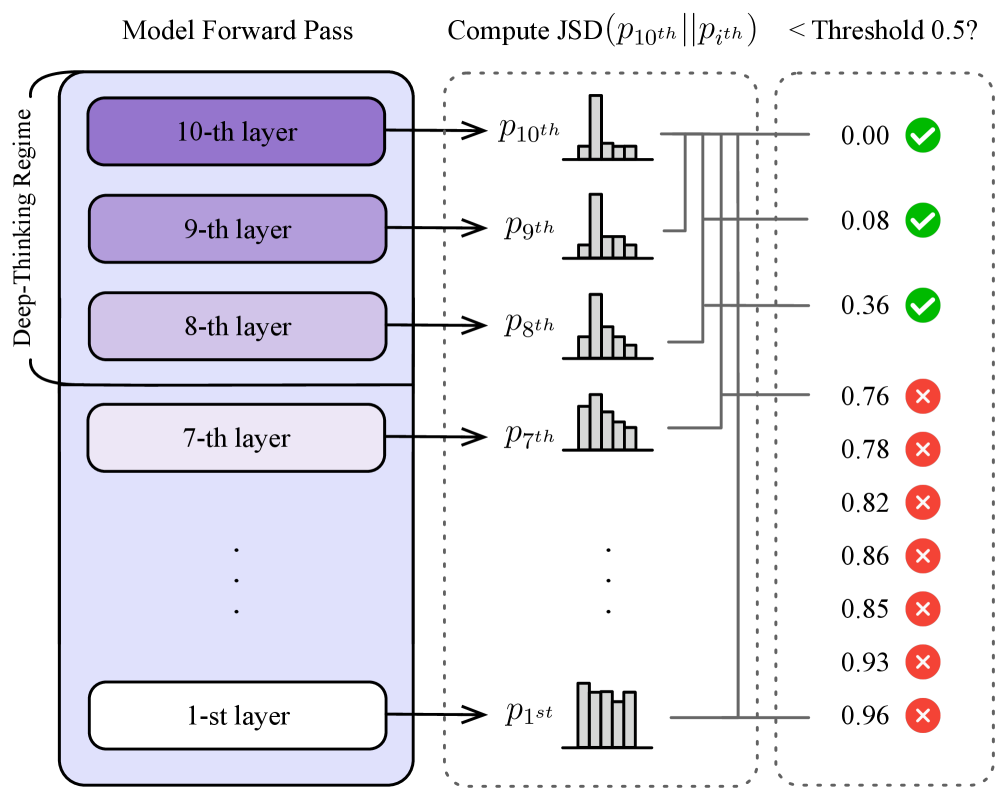

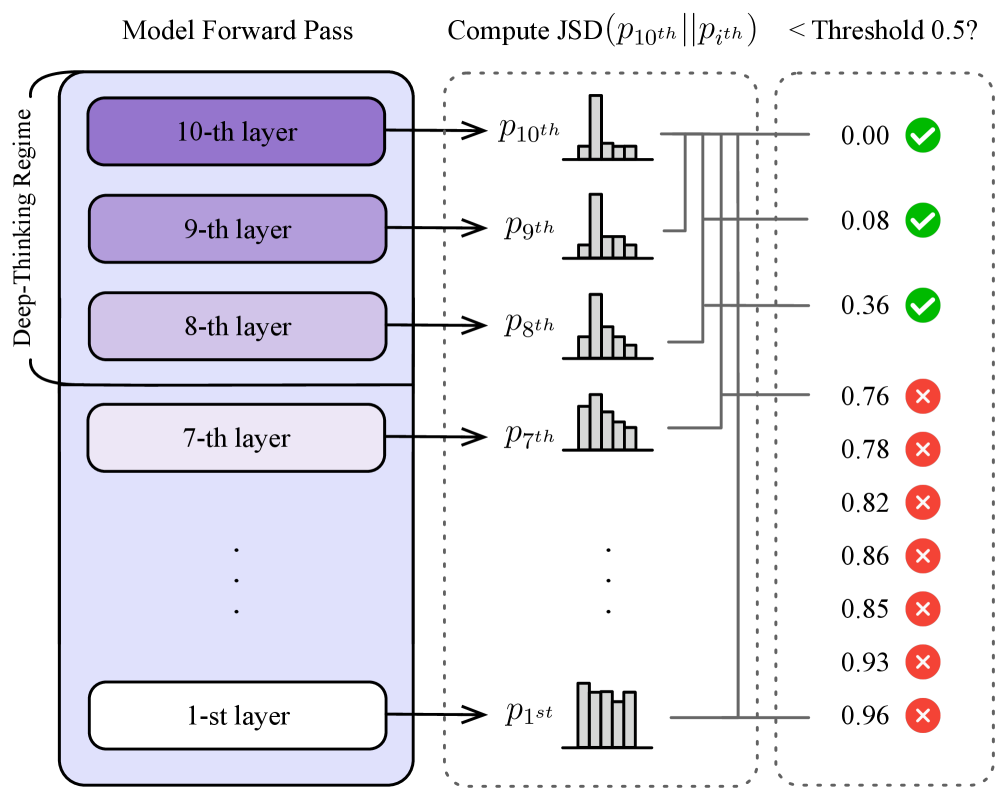

## Diagram: Model Forward Pass and JSD Computation

### Overview

The diagram illustrates a model forward pass through multiple layers, followed by the computation of the Jensen-Shannon Divergence (JSD) between the output distribution of the 10th layer and that of each individual layer. It also indicates whether each computed JSD is below a threshold of 0.5.
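For reference, the Jensen-Shannon Divergence between the 10th layer's distribution $P = p_{10\text{th}}$ and the $i$-th layer's distribution $Q = p_{i\text{th}}$ is conventionally defined through the Kullback-Leibler divergence of each distribution from their average $M$. The diagram does not state its logarithm base; base 2 is assumed here because it bounds the JSD to $[0, 1]$, which matches the plotted values.

$$
\mathrm{JSD}(P \,\|\, Q) = \tfrac{1}{2}\,\mathrm{KL}(P \,\|\, M) + \tfrac{1}{2}\,\mathrm{KL}(Q \,\|\, M),
\qquad M = \tfrac{1}{2}(P + Q),
$$

$$
\mathrm{KL}(P \,\|\, M) = \sum_{x} P(x)\,\log_2 \frac{P(x)}{M(x)}.
$$

The JSD is symmetric in $P$ and $Q$, equals 0 only when the two distributions are identical, and reaches 1 bit when their supports are disjoint.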

### Components/Axes

* **Left Box:** Represents the "Model Forward Pass" and is labeled "Model Forward Pass" at the top. It contains stacked layers, numbered from 1st to 10th. The top layers are shaded in purple, with the shading becoming lighter towards the bottom. A curved bracket to the left of the layers is labeled "Deep-Thinking Regime".

* **Middle Box:** Represents the "Compute JSD" stage. The title is "Compute JSD(p_10th || p_ith) < Threshold 0.5?". This section shows histograms representing the output distributions (p) of the labeled layers (1st, 7th, 8th, 9th, 10th).

* **Right Box:** Shows the JSD value and a green checkmark or red cross, indicating whether the JSD is below the threshold of 0.5.

### Detailed Analysis

**Model Forward Pass (Left Box):**

* The layers are stacked vertically, with the 10th layer at the top and the 1st layer at the bottom.

* The layers are labeled "10-th layer", "9-th layer", "8-th layer", "7-th layer", and "1-st layer". An ellipsis (...) indicates the omitted layers between the 7th and 1st layers.

* The "Deep-Thinking Regime" label spans from approximately the 7th layer to the 10th layer.

**Compute JSD (Middle Box):**

* Each layer's output distribution (p) is represented by a small histogram.

* The histograms are labeled as p10th, p9th, p8th, p7th, and p1st, corresponding to the respective layers.

* Gray lines connect each layer to its corresponding histogram and then to the JSD value on the right.

**JSD Comparison (Right Box):**

* The JSD values are listed vertically, corresponding to the layers from top to bottom.

* A green checkmark indicates that the JSD is below 0.5, while a red cross indicates that it is above 0.5.

* The JSD values and their corresponding indicators are:

* p10th: 0.00 (Green Checkmark)

* p9th: 0.08 (Green Checkmark)

* p8th: 0.36 (Green Checkmark)

* p7th: 0.76 (Red Cross)

* (Implied p6th): 0.78 (Red Cross)

* (Implied p5th): 0.82 (Red Cross)

* (Implied p4th): 0.86 (Red Cross)

* (Implied p3rd): 0.85 (Red Cross)

* (Implied p2nd): 0.93 (Red Cross)

* p1st: 0.96 (Red Cross)

### Key Observations

* The JSD values generally increase as you move from the 10th layer to the 1st layer.

* The JSD values for the top three layers (10th, 9th, and 8th) are below the threshold of 0.5, while the JSD values for the remaining layers are above the threshold.
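The word "generally" matters: the sequence is not strictly increasing. The short sketch below scans the ten values in top-down order and reports every adjacent pair where the JSD fails to rise. It assumes that the ten values map, in order, to layers 10 down to 1; the figure itself labels only layers 10, 9, 8, 7, and 1.

```python
# JSD against the 10th layer, listed from the 10th layer down to the 1st.
layers = list(range(10, 0, -1))
jsd_values = [0.00, 0.08, 0.36, 0.76, 0.78, 0.82, 0.86, 0.85, 0.93, 0.96]

# Adjacent pairs (upper layer, lower layer) where moving down does not raise JSD.
dips = [
    (layers[i], layers[i + 1])
    for i in range(len(layers) - 1)
    if jsd_values[i + 1] <= jsd_values[i]
]
print(dips)  # [(4, 3)]
```

The only dip is between the 4th and 3rd layers (0.86 to 0.85), which is too small to move any layer across the 0.5 threshold.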

### Interpretation

The diagram suggests that the higher layers of the model (within the "Deep-Thinking Regime") produce output distributions closer to the 10th layer's output distribution, as indicated by their lower JSD values. Distributions read from the lower layers diverge more strongly from the 10th layer's output, yielding higher JSD values. This could indicate that the "Deep-Thinking Regime" is where the model's core representations are formed, while the earlier layers focus on lower-level feature extraction. The threshold of 0.5 separates layers whose outputs resemble the 10th layer's from those whose outputs do not.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Deep-Thinking Regime Evaluation

### Overview

The diagram illustrates a process for evaluating a "Deep-Thinking Regime" within a model, likely a neural network. It shows a forward pass through multiple layers of the model, followed by a computation of the Jensen-Shannon Divergence (JSD) between probability distributions at different layers, and a comparison against a threshold. The diagram visually represents whether the JSD values exceed a threshold of 0.5.

### Components/Axes

The diagram is segmented into three main regions:

1. **Deep-Thinking Regime:** A vertical stack of rectangular blocks representing the layers of the model. Labeled from "10-th layer" at the top to "1-st layer" at the bottom, with an ellipsis indicating intermediate layers.

2. **Compute JSD:** A section showing probability distributions (histograms) corresponding to each layer, and lines connecting them to a JSD computation. The distributions are labeled as *p<sub>10th</sub>*, *p<sub>9th</sub>*, *p<sub>8th</sub>*, *p<sub>7th</sub>*, and *p<sub>1st</sub>*.

3. **Threshold Comparison:** A vertical column of checkmarks and crosses, indicating whether the computed JSD value for each layer is less than 0.5.

The primary labels are:

* "Model Forward Pass" (above the Deep-Thinking Regime)

* "Compute JSD (*p<sub>10th</sub>* || *p<sub>ith</sub>*)" (above the JSD computation section)

* "< Threshold 0.5?" (above the threshold comparison section)

### Detailed Analysis

The diagram shows the following JSD values and corresponding threshold comparisons:

* **10-th layer:** JSD = 0.00, Result: Checkmark (< 0.5)

* **9-th layer:** JSD = 0.08, Result: Checkmark (< 0.5)

* **8-th layer:** JSD = 0.36, Result: Checkmark (< 0.5)

* **7-th layer:** JSD = 0.76, Result: Cross (> 0.5)

* **6-th layer:** JSD = 0.78, Result: Cross (> 0.5)

* **5-th layer:** JSD = 0.82, Result: Cross (> 0.5)

* **4-th layer:** JSD = 0.86, Result: Cross (> 0.5)

* **3-rd layer:** JSD = 0.85, Result: Cross (> 0.5)

* **2-nd layer:** JSD = 0.93, Result: Cross (> 0.5)

* **1-st layer:** JSD = 0.96, Result: Cross (> 0.5)

The JSD values generally increase as we move down through the layers (from the 10th to the 1st). The distributions themselves appear as histograms, with bar heights representing probabilities. The distributions differ visibly from one another, suggesting different output characteristics at each layer.

### Key Observations

* The top layers (10th, 9th, and 8th) have JSD values below the threshold of 0.5, indicating that their probability distributions are highly similar to that of the 10th layer.

* From the 7th layer downward, the JSD values consistently exceed the threshold, suggesting a significant divergence from the 10th layer's distribution.

* The JSD values rise from the 7th layer to the 1st layer, with one small dip (0.86 at the 4th layer to 0.85 at the 3rd), indicating a growing divergence overall.

### Interpretation

This diagram likely represents a method for identifying the point at which a model transitions from a "Deep-Thinking Regime" to a state where its internal representations become significantly different. The JSD is used as a metric to quantify this difference. The threshold of 0.5 appears to be a critical value, separating layers that maintain a consistent internal representation from those that diverge.

The increasing JSD values as we move down the layers suggest that the lower layers of the model are processing information in a fundamentally different way than the higher layers. This could be due to the higher layers learning more abstract or complex features than the lower layers, or it could indicate a loss of information or a shift in the model's focus.

The diagram implies that the 7th layer is a key transition point, marking the beginning of the divergence. This information could be used to optimize the model's architecture or training process to maintain a more consistent internal representation throughout all layers. Because JSD compares entire probability distributions, it is sensitive to differences in their *shape*, not merely to whether the most likely values coincide.
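The remark about the *shape* of the distributions can be made concrete. The sketch below implements JSD with base-2 logarithms (an assumption; the diagram gives no base) and applies it to two hypothetical four-outcome distributions that agree on their most likely outcome yet still diverge. The distributions and the helper name `jsd` are illustrative only.

```python
import math


def jsd(p, q):
    """Jensen-Shannon divergence in bits (base-2 logs), so 0 <= JSD <= 1."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a, b):
        # Terms with a_i == 0 contribute nothing by convention.
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Hypothetical distributions: both put the most mass on outcome 0.
peaked = [0.85, 0.05, 0.05, 0.05]
flat = [0.40, 0.20, 0.20, 0.20]

print(f"{jsd(peaked, flat):.3f}")  # > 0 although the top outcome agrees
print(math.isclose(jsd(peaked, flat), jsd(flat, peaked)))  # True: symmetric
print(jsd([1.0, 0.0], [0.0, 1.0]))  # 1.0: disjoint supports
```

A comparison based only on the top prediction would treat `peaked` and `flat` as identical; the JSD does not.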

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Layer-wise Jensen-Shannon Divergence Analysis in a Neural Network

### Overview

The image is a technical diagram illustrating a process for analyzing the similarity of probability distributions output by different layers of a neural network during a forward pass. It specifically compares the distribution from the 10th (final) layer to distributions from all preceding layers using the Jensen-Shannon Divergence (JSD) metric, checking if the divergence is below a set threshold.

### Components/Axes

The diagram is organized into three vertical sections, flowing from left to right:

1. **Left Section: Model Forward Pass**

* **Title:** "Model Forward Pass"

* **Content:** A vertical stack of rounded rectangles representing neural network layers, ordered from top (10th layer) to bottom (1st layer).

* **Grouping:** The top three layers (10th, 9th, 8th) are enclosed in a darker purple box labeled with a vertical bracket on the left: "Deep-Thinking Regime".

* **Layer Labels:** Each rectangle contains text: "10-th layer", "9-th layer", "8-th layer", "7-th layer", followed by a vertical ellipsis (three dots), and finally "1-st layer" at the bottom.

* **Output:** An arrow points from each layer rectangle to the middle section, labeled with a probability distribution symbol: `p_10th`, `p_9th`, `p_8th`, `p_7th`, ..., `p_1st`.

2. **Middle Section: Distribution Visualization**

* **Title:** "Compute JSD(p_10th || p_ith)"

* **Content:** A series of small histogram icons, one for each layer's output distribution (`p_10th` through `p_1st`). Each histogram is a simple bar chart with 4-5 bars of varying heights, visually representing the shape of the probability distribution. The histograms are connected by lines to the corresponding JSD values in the right section.

3. **Right Section: Threshold Comparison**

* **Title:** "< Threshold 0.5?"

* **Content:** A vertical list of numerical JSD values, each paired with a status icon.

* **Legend/Status Icons:**

* A green circle with a white checkmark (✅) indicates the JSD value is **less than** the 0.5 threshold (PASS).

* A red circle with a white 'X' (❌) indicates the JSD value is **greater than or equal to** the 0.5 threshold (FAIL).

* **Data Points (from top to bottom):**

* `0.00` ✅ (connected to `p_10th`)

* `0.08` ✅ (connected to `p_9th`)

* `0.36` ✅ (connected to `p_8th`)

* `0.76` ❌ (connected to `p_7th`)

* `0.78` ❌

* `0.82` ❌

* `0.86` ❌

* `0.85` ❌

* `0.93` ❌

* `0.96` ❌ (connected to `p_1st`)

### Detailed Analysis

The diagram details a specific analytical procedure:

1. A forward pass is run through a neural network.

2. The probability distribution output (`p_ith`) is captured from each layer (`i` = 1 to 10).

3. The Jensen-Shannon Divergence (JSD) is computed between the distribution from the final layer (`p_10th`) and the distribution from every other layer (`p_ith`). The JSD is a symmetric measure of the difference between two distributions; with base-2 logarithms it ranges from 0 (identical) to 1 (maximally different).

4. Each computed JSD value is compared to a fixed threshold of **0.5**.

5. The results are categorized:

* **Layers 10, 9, and 8** have JSD values (0.00, 0.08, 0.36) all **below 0.5**, marked with green checkmarks. These layers are collectively identified as the "Deep-Thinking Regime."

* **Layers 7 through 1** have JSD values (0.76 to 0.96) all **above 0.5**, marked with red 'X's.
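Steps 1 to 5 can be sketched end to end as follows. This is a minimal illustration, not the method behind the figure: the per-layer logits are synthetic (interpolated between random noise and a fixed final-layer vector), and reading a distribution out of every layer, for example by passing each hidden state through the model's output head, is an assumption the diagram does not spell out. The names `softmax`, `jsd`, and `layerwise_jsd` are hypothetical helpers.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def jsd(p, q):
    """Jensen-Shannon divergence in bits (base-2 logs), so 0 <= JSD <= 1."""
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(a, b):
        # Terms with a_i == 0 contribute nothing by convention.
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def layerwise_jsd(per_layer_logits, threshold=0.5):
    """Return (layer, JSD vs. last layer, passes) for every layer.

    per_layer_logits[i] holds the logits read out after layer i + 1
    (a hypothetical read-out; the diagram does not say how it is done).
    """
    dists = [softmax(logits) for logits in per_layer_logits]  # step 2
    final = dists[-1]
    report = []
    for layer, p in enumerate(dists, start=1):
        d = jsd(final, p)  # step 3
        report.append((layer, d, d < threshold))  # steps 4 and 5
    return report


# Synthetic stand-in for step 1: ten layers whose logits move from random
# noise toward a fixed final-layer logit vector as depth increases.
random.seed(0)
final_logits = [4.0, 1.0, 0.5, 0.0, -1.0]
per_layer = []
for layer in range(1, 11):
    w = layer / 10  # share of the final logits at this depth
    noise = [random.gauss(0.0, 1.0) for _ in final_logits]
    per_layer.append([w * f + (1 - w) * n for f, n in zip(final_logits, noise)])

for layer, d, ok in reversed(layerwise_jsd(per_layer)):
    print(f"layer {layer:2d}: JSD = {d:.2f} {'pass' if ok else 'fail'}")
```

The printed values depend on the synthetic inputs and will not reproduce the figure's numbers; only the shape of the procedure carries over.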

### Key Observations

* **Clear Threshold Bifurcation:** There is a sharp discontinuity in JSD values between the 8th layer (0.36) and the 7th layer (0.76). The threshold of 0.5 cleanly separates the network into two distinct groups.

* **Near-Monotonic Trend:** The JSD value generally increases as we move from deeper layers (10th) to shallower layers (1st): `0.00 → 0.08 → 0.36 → 0.76 → ... → 0.96`, with a single small dip (0.86 → 0.85) among the unlabeled intermediate layers. This indicates that the output distributions of earlier layers become progressively more dissimilar to the final layer's distribution.

* **"Deep-Thinking Regime" Definition:** The diagram explicitly defines the "Deep-Thinking Regime" as the top three layers (10th, 9th, 8th), which are the only ones whose output distributions are considered sufficiently similar (JSD < 0.5) to the final layer's output.

* **Visual Confirmation:** The histogram icons, while schematic, show a visual progression. The histograms for `p_10th`, `p_9th`, and `p_8th` appear more peaked or concentrated, while those for earlier layers (e.g., `p_1st`) appear more uniform or flat, correlating with the higher JSD values.
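The bifurcation can be checked numerically by computing the change in JSD between each pair of adjacent layers and locating the largest one. The sketch assumes the ten values map, in order, to layers 10 down to 1; the figure itself labels only layers 10, 9, 8, 7, and 1.

```python
# JSD against the 10th layer, listed from the 10th layer down to the 1st.
layers = list(range(10, 0, -1))
jsd_values = [0.00, 0.08, 0.36, 0.76, 0.78, 0.82, 0.86, 0.85, 0.93, 0.96]

# (upper layer, lower layer, change in JSD) for each adjacent pair.
jumps = [
    (layers[i], layers[i + 1], jsd_values[i + 1] - jsd_values[i])
    for i in range(len(layers) - 1)
]
upper, lower, size = max(jumps, key=lambda jump: jump[2])
print(f"largest jump: layer {upper} -> layer {lower} (+{size:.2f})")
# largest jump: layer 8 -> layer 7 (+0.40)
```

Any threshold greater than 0.36 and at most 0.76 yields the same three-layer split, so the 0.5 cut sits inside this gap.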

### Interpretation

This diagram presents a method for **identifying functionally coherent groups of layers within a neural network** based on the similarity of their internal representations (output distributions).

* **What it suggests:** The analysis implies that the final three layers of this network form a cohesive computational module ("Deep-Thinking Regime") where representations are highly refined and similar to the final output. In contrast, layers 1 through 7 are performing more distinct, likely more elementary feature extraction, resulting in representations that diverge significantly from the final, task-ready output.

* **How elements relate:** The flow from left to right maps the transformation of data: from the architectural structure (layers), to the extracted statistical property (distribution), to a quantitative comparison (JSD), and finally to a binary decision (pass/fail against threshold). The "Deep-Thinking Regime" bracket visually and conceptually groups the layers that pass the similarity test.

* **Notable implications:**

* **Model Pruning/Analysis:** This technique could inform analyses of layer redundancy, though this requires further validation. High divergence alone does not make layers 1-7 candidates for removal, since later layers may depend on what they compute; the low divergence already reached at the 9th layer (0.08) is the more suggestive signal, hinting that the prediction is largely settled before the final layer.

* **Understanding Model Depth:** It provides empirical evidence for the hierarchical nature of deep learning, where deeper layers build upon and refine the features of earlier layers, culminating in a stable, high-level representation in the final few layers.

* **Threshold Choice:** The choice of 0.5 as the threshold is critical and appears somewhat arbitrary in the diagram. Its value determines the boundary of the "Deep-Thinking Regime." A different threshold would change which layers are included.

* **The "Deep-Thinking" Label:** The term is provocative. It suggests that the layers with stable, similar-to-output representations are where the model's "final reasoning" or decision-making crystallizes, as opposed to earlier layers which are still processing raw input into abstract features.
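The sensitivity to the threshold can be illustrated by re-running the per-layer check (JSD strictly below the threshold) for several cut-offs. The ten values are assumed to map in order to layers 10 through 1, and `passing_layers` is a hypothetical helper. Because of the small dip among the intermediate layers, some thresholds produce a passing set that is not contiguous.

```python
# JSD against the 10th layer, listed from the 10th layer down to the 1st.
# The figure labels only layers 10, 9, 8, 7, and 1; the rest are by position.
LAYERS = list(range(10, 0, -1))
JSD_VALUES = [0.00, 0.08, 0.36, 0.76, 0.78, 0.82, 0.86, 0.85, 0.93, 0.96]


def passing_layers(threshold):
    """Layers whose JSD against the 10th layer is strictly below threshold."""
    return [layer for layer, d in zip(LAYERS, JSD_VALUES) if d < threshold]


for t in (0.05, 0.3, 0.5, 0.8, 0.855, 0.95):
    print(f"threshold {t}: {passing_layers(t)}")
```

At 0.855, layer 4 (0.86) fails while layer 3 (0.85) passes, so a regime defined purely by per-layer checks would have a hole in it; a rule such as "take the contiguous run from the top" would need to be stated explicitly.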

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Deep-Thinking Regime with JSD Threshold Analysis

### Overview

The diagram illustrates a multi-layered "Deep-Thinking Regime" (1st to 10th layers) where each layer generates a probability distribution (`p1st` to `p10th`). A Jensen-Shannon Divergence (JSD) calculation compares the 10th layer's distribution (`p10th`) against all other layers (`pith`). Results are evaluated against a threshold of 0.5, with green checks (✓) for values below the threshold and red crosses (✗) for values above.

---

### Components/Axes

1. **Left Panel: Deep-Thinking Regime**

- Vertical stack of 10 layers (1st to 10th), labeled with their respective layer numbers.

- Each layer outputs a probability distribution (`p1st` to `p10th`), represented as histograms.

- Layers are color-coded: darker purple for higher layers (10th–8th), lighter purple for lower layers (7th–1st).

2. **Middle Panel: JSD Computation**

- Title: "Compute JSD(p₁₀ᵗʰ || pᵢᵗʰ)".

- Vertical axis lists `p10th` to `p1st` (top to bottom).

- Horizontal axis shows JSD values (0.00 to 0.96) with incremental markers (0.00, 0.08, 0.36, etc.).

- Dotted lines connect `p10th` to each `pith` for visual comparison.

3. **Right Panel: Threshold Evaluation**

- Title: "< Threshold 0.5?".

- Vertical axis lists JSD values (0.00, 0.08, 0.36, 0.76, 0.78, 0.82, 0.86, 0.85, 0.93, 0.96).

- Green checks (✓) for values < 0.5; red crosses (✗) for values ≥ 0.5.

---

### Detailed Analysis

1. **Layer Outputs (Left Panel)**

- All layers show distinct histogram distributions, with no explicit numerical values provided for individual bins.

2. **JSD Values (Middle Panel)**

- **p10th vs. p10th**: JSD = 0.00 (✓).

- **p10th vs. p9th**: JSD = 0.08 (✓).

- **p10th vs. p8th**: JSD = 0.36 (✓).

- **p10th vs. p7th**: JSD = 0.76 (✗).

- **p10th vs. p6th**: JSD = 0.78 (✗).

- **p10th vs. p5th**: JSD = 0.82 (✗).

- **p10th vs. p4th**: JSD = 0.86 (✗).

- **p10th vs. p3rd**: JSD = 0.85 (✗).

- **p10th vs. p2nd**: JSD = 0.93 (✗).

- **p10th vs. p1st**: JSD = 0.96 (✗).

3. **Threshold Evaluation (Right Panel)**

- Values < 0.5 (0.00, 0.08, 0.36) are marked with green checks (✓).

- Values ≥ 0.5 (0.76–0.96) are marked with red crosses (✗).

---

### Key Observations

1. **Trend in JSD Values**:

- Higher layers (10th, 9th, 8th) exhibit significantly lower JSD values compared to lower layers (7th–1st).

- JSD increases almost monotonically as layers descend from the 10th to the 1st; the one exception is a small dip from 0.86 (4th layer) to 0.85 (3rd layer).

2. **Threshold Compliance**:

- Only the top 3 layers (10th, 9th, 8th) meet the JSD threshold (< 0.5).

- All lower layers (7th–1st) exceed the threshold, indicating greater divergence from `p10th`.

3. **Distribution Similarity**:

- The 10th layer is perfectly aligned with itself (JSD = 0.00).

- The 9th layer is very close to the 10th (JSD = 0.08) and the 8th layer moderately close (JSD = 0.36), while lower layers diverge sharply.

---

### Interpretation

1. **Model Performance**:

- The top 3 layers (10th–8th) demonstrate strong alignment with the 10th layer's distribution, suggesting they are critical for maintaining consistency in the "Deep-Thinking Regime."

- Lower layers (7th–1st) exhibit poor alignment, potentially indicating instability or inefficiency in earlier processing stages.

2. **Threshold Significance**:

- The 0.5 threshold acts as a binary classifier for layer reliability. Layers below this threshold are deemed "acceptable" for similarity to `p10th`, while those above are flagged as outliers.

3. **Structural Implications**:

- The diagram implies a hierarchical dependency: higher layers refine or stabilize outputs from lower layers. The divergence in lower layers may propagate errors upward if not corrected by the top layers.

4. **Anomalies**:

- The 7th layer (JSD = 0.76) is the first to exceed the threshold, marking the point where the intermediate distributions stop resembling the 10th layer's.

- The 1st layer (JSD = 0.96) shows the greatest divergence, suggesting foundational layers may require optimization.

---

### Conclusion

This diagram highlights the importance of upper layers in maintaining distributional consistency within the model. The JSD threshold serves as a diagnostic tool to identify layers contributing to stability versus those introducing variability. Addressing the divergence in lower layers could enhance overall model robustness.
