## Diagram: Large Language Model with Latent Predictions

### Overview

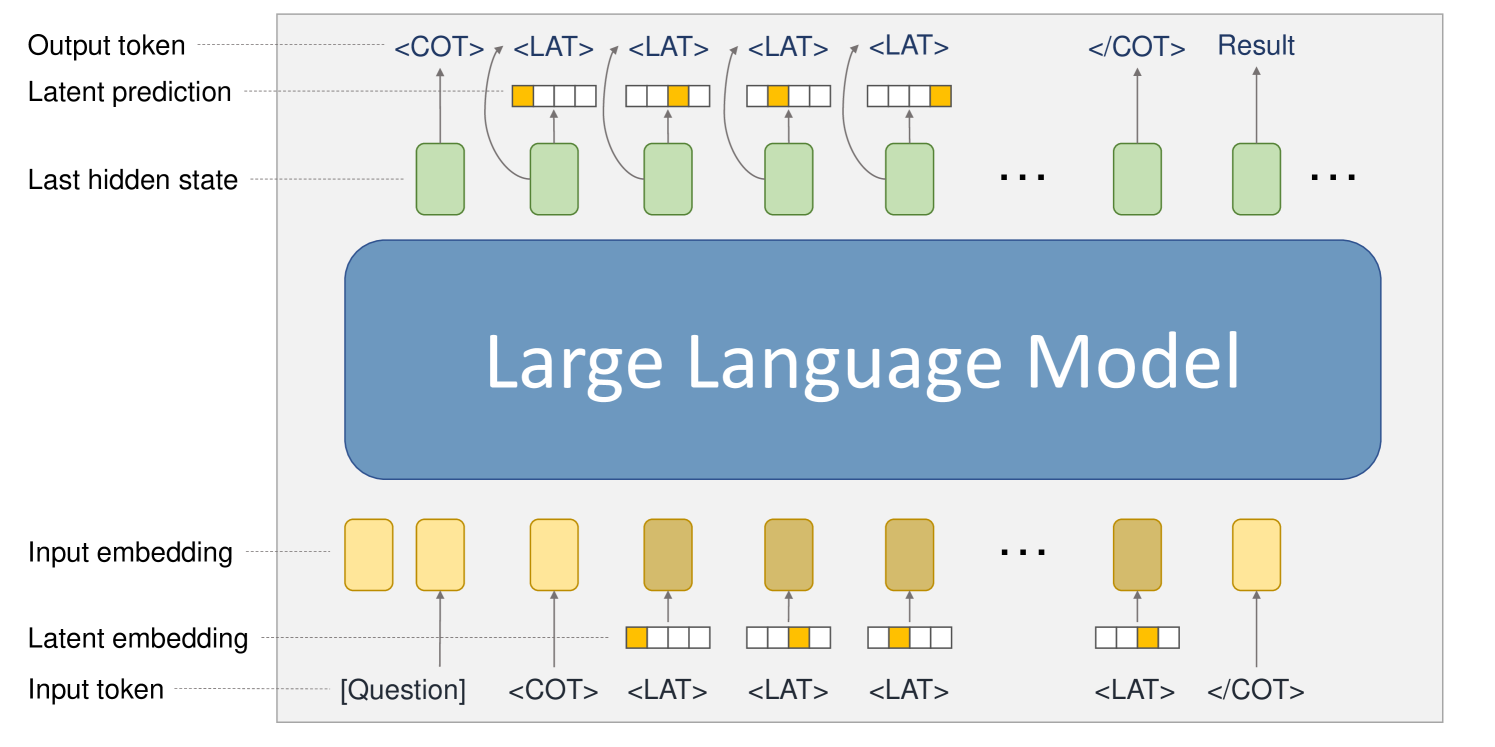

The diagram shows the architecture of a Large Language Model (LLM) that uses latent predictions. Information flows from the input tokens at the bottom, through the model, to the output tokens at the top, with latent embeddings on the input side and latent predictions on the output side.

### Components/Axes

* **Labels (Left Side):**

* Output token

* Latent prediction

* Last hidden state

* Input embedding

* Latent embedding

* Input token

* **Tokens (Bottom):**

* \[Question]

* <COT>

* <LAT> (repeated multiple times)

* </COT>

* **Tokens (Top):**

* <COT>

* <LAT> (repeated multiple times)

* </COT>

* Result

* **Central Component:**

* Large Language Model (represented as a rounded rectangle)

### Detailed Analysis

1. **Input Token Layer (Bottom):**

* Starts with the token "[Question]".

* Followed by "<COT>" (Chain of Thought).

* Then multiple "<LAT>" (Latent) tokens.

* Ends with "</COT>".

* Each token has a corresponding "Latent embedding" represented by a small array of cells, with one cell highlighted in orange.

* Each token also has a corresponding "Input embedding" represented by a yellow rectangle. The color of the rectangles darkens as the sequence progresses from left to right.

2. **Large Language Model (Center):**

* A large, rounded rectangle labeled "Large Language Model".

* Represents the core processing unit.

3. **Last Hidden State Layer (Middle):**

* A series of green rectangles representing the "Last hidden state".

* Ellipses (...) indicate that the sequence continues.

4. **Latent Prediction Layer (Top):**

* Each "Last hidden state" has a corresponding "Latent prediction" represented by a small array of cells, with one cell highlighted in orange.

* Curved arrows connect each "Last hidden state" to its corresponding "Latent prediction".

5. **Output Token Layer (Top):**

* Starts with "<COT>".

* Followed by multiple "<LAT>" tokens.

* Ends with "</COT>" and "Result".

* Each "Last hidden state" has an arrow pointing up to the corresponding "Output token".
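
The data flow in the list above can be sketched in code. This is a minimal illustration, not the model's actual implementation. It rests on several assumptions that the diagram does not confirm: the highlighted cell of each latent embedding is treated as an index into a small table, its row is added to the token embedding to form the input embedding, and a causal running mean stands in for the Large Language Model. All names and sizes (`toy_llm`, `token_head`, `latent_head`, `HIDDEN`, `NUM_LATENT_CELLS`, the example `latent_cells`) are hypothetical.

```python
import random

random.seed(0)

HIDDEN = 4            # hypothetical hidden size
NUM_LATENT_CELLS = 5  # hypothetical number of cells in a latent array
VOCAB = ["[Question]", "<COT>", "<LAT>", "</COT>", "Result"]


def rand_matrix(rows, cols):
    """Random weights; a real model would learn these."""
    return [[random.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def argmax(values):
    return max(range(len(values)), key=values.__getitem__)


token_table = {tok: [random.uniform(-1, 1) for _ in range(HIDDEN)] for tok in VOCAB}
latent_table = rand_matrix(NUM_LATENT_CELLS, HIDDEN)  # one row per latent cell
token_head = rand_matrix(len(VOCAB), HIDDEN)          # hidden state -> output token
latent_head = rand_matrix(NUM_LATENT_CELLS, HIDDEN)   # hidden state -> latent prediction


def toy_llm(input_embeddings):
    """Stand-in for the LLM: position t only sees positions 1..t (running mean)."""
    hidden_states, running = [], [0.0] * HIDDEN
    for t, emb in enumerate(input_embeddings, start=1):
        running = [r + e for r, e in zip(running, emb)]
        hidden_states.append([r / t for r in running])
    return hidden_states


def forward(tokens, latent_cells):
    # Assumption: input embedding = token embedding + row of the highlighted latent cell.
    inputs = [
        [a + b for a, b in zip(token_table[tok], latent_table[cell])]
        for tok, cell in zip(tokens, latent_cells)
    ]
    hidden = toy_llm(inputs)
    # Two readouts per last hidden state, mirroring the two arrows in the diagram.
    outputs = [
        (VOCAB[argmax(matvec(token_head, h))], argmax(matvec(latent_head, h)))
        for h in hidden
    ]
    return hidden, outputs


tokens = ["[Question]", "<COT>", "<LAT>", "<LAT>", "</COT>"]
latent_cells = [0, 1, 3, 2, 4]  # made-up highlighted cell per position
hidden, outputs = forward(tokens, latent_cells)
for tok, (out_tok, lat) in zip(tokens, outputs):
    print(f"{tok:>10} -> output token {out_tok!r}, latent prediction cell {lat}")
```

Because the weights are random, the printed tokens and cells carry no meaning. The sketch only shows the shape of the computation: one last hidden state per position, with an output token and a latent prediction both derived from it.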

### Key Observations

* The diagram illustrates a sequential process where input tokens are processed by the LLM to generate output tokens.

* A latent embedding accompanies every input token, and a latent prediction is read from every last hidden state, so latent information appears on both the input side and the output side of the model.

* The use of "<COT>" and "<LAT>" tokens suggests a mechanism for incorporating chain-of-thought reasoning and latent information into the model's predictions.
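
The token layout in the diagram can be written out explicitly. The sketch below is illustrative only: `build_sequences` is a hypothetical helper, the number of "<LAT>" tokens is arbitrary (the diagram only shows that the token repeats), and the position-by-position alignment of input and output rows is an assumption based on how the two rows line up.

```python
def build_sequences(question, num_latent, result):
    """Return (input_tokens, output_tokens) laid out as in the diagram."""
    thought = ["<COT>"] + ["<LAT>"] * num_latent + ["</COT>"]
    input_tokens = [question] + thought   # bottom row of the diagram
    output_tokens = thought + [result]    # top row of the diagram
    return input_tokens, output_tokens


inputs, outputs = build_sequences("[Question]", num_latent=3, result="Result")
for position, (inp, out) in enumerate(zip(inputs, outputs)):
    print(position, inp, "->", out)
```

Under this layout the output row equals the input row shifted by one position, with "Result" appended at the end, which is the pattern expected from next-token prediction.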

### Interpretation

The diagram depicts a Large Language Model architecture that uses latent predictions during reasoning and generation. The input tokens, including the question and the special tokens "<COT>" and "<LAT>", are embedded and fed into the LLM, which produces one last hidden state per position. Each hidden state has two outgoing arrows: one to a latent prediction and one to an output token. The output sequence is the input sequence shifted by one position and ends with "Result", which is consistent with next-token prediction. The "<COT>" and "</COT>" tokens likely delimit the chain of thought, while the "<LAT>" tokens likely act as placeholders whose reasoning content is carried by the latent embeddings and latent predictions rather than by text. On this reading, the architecture combines an explicit chain-of-thought structure with latent, non-textual intermediate steps.