## Diagram: Knowledge Graph and Reasoning-on-Graphs Process

### Overview

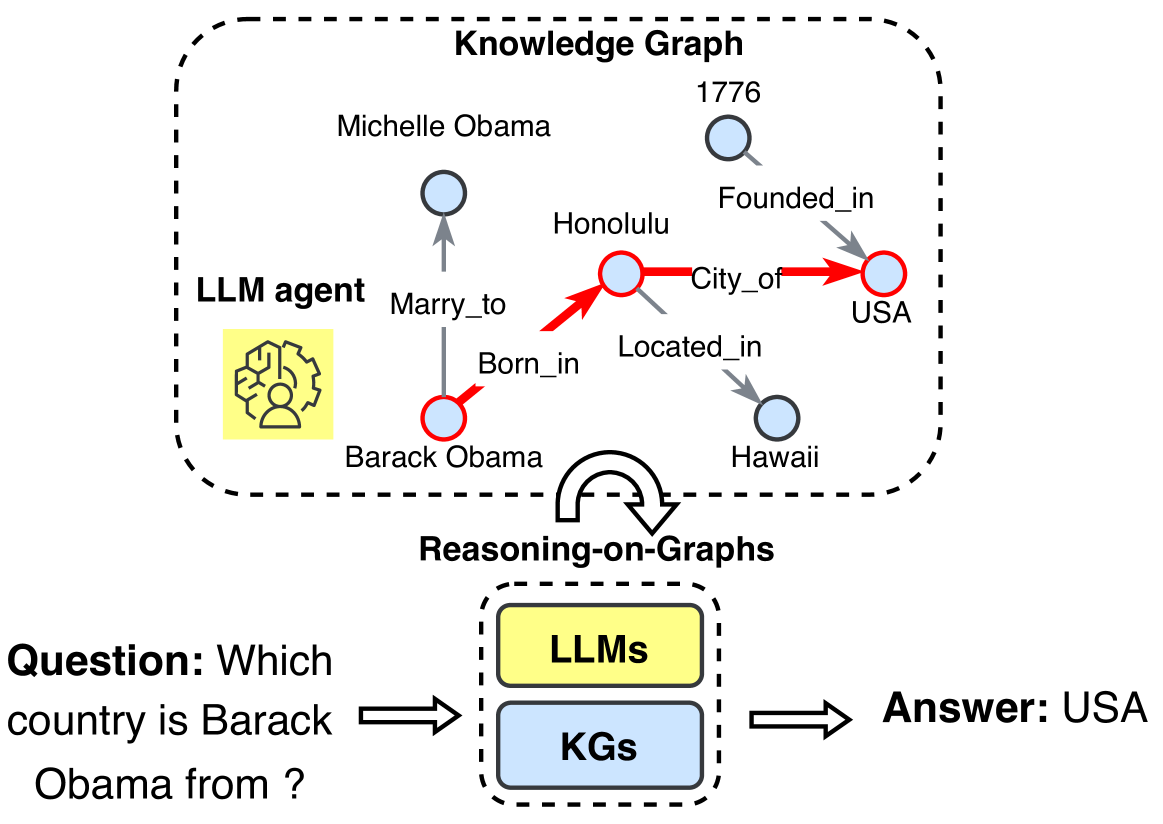

This image is a technical diagram illustrating a system that combines a Knowledge Graph (KG) with Large Language Models (LLMs) to answer factual questions. An upper "Knowledge Graph" region contains entities and relationships; below it, a "Reasoning-on-Graphs" process uses both LLMs and KGs to derive an answer from a given question.

### Components/Axes

The diagram is composed of the following primary components, arranged vertically:

1. **Knowledge Graph (Top Section):**

    * Enclosed in a large, dashed, rounded rectangle labeled **"Knowledge Graph"** at the top center.

    * Contains nodes (circles) and directed edges (arrows) representing entities and their relationships.

    * **Nodes (Entities):**

        * `Barack Obama` (Red-outlined circle, bottom-left of the KG)

        * `Michelle Obama` (Blue-filled circle, top-left of the KG)

        * `Honolulu` (Red-outlined circle, center of the KG)

        * `Hawaii` (Blue-filled circle, bottom-right of the KG)

        * `USA` (Red-outlined circle, right side of the KG)

        * `1776` (Blue-filled circle, top-right of the KG)

    * **Edges (Relationships):**

        * `Marry_to`: From `Barack Obama` to `Michelle Obama` (Grey arrow)

        * `Born_in`: From `Barack Obama` to `Honolulu` (Red arrow)

        * `Located_in`: From `Honolulu` to `Hawaii` (Grey arrow)

        * `City_of`: From `Honolulu` to `USA` (Red arrow)

        * `Founded_in`: From `USA` to `1776` (Grey arrow)

    * **LLM Agent Icon:** A yellow square with a black line-art icon of a person's head with gears, located to the left of the `Barack Obama` node, labeled **"LLM agent"**.

2. **Reasoning-on-Graphs (Middle Section):**

    * A curved, double-headed arrow labeled **"Reasoning-on-Graphs"** connects the Knowledge Graph to a smaller dashed box below it.

    * The smaller dashed box contains two stacked rectangles:

        * Top rectangle: Yellow, labeled **"LLMs"**.

        * Bottom rectangle: Light blue, labeled **"KGs"**.

3. **Question-Answer Flow (Bottom Section):**

    * **Question (Left):** Text reading **"Question: Which country is Barack Obama from ?"**.

    * **Process Arrow:** A thick, white arrow with a black outline points from the question to the "Reasoning-on-Graphs" box.

    * **Answer (Right):** Text reading **"Answer: USA"**. A similar thick arrow points from the "Reasoning-on-Graphs" box to this answer.
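
The nodes and edges listed above can be written down as (head, relation, tail) triples. The following is a minimal sketch, assuming a plain Python list as the storage format; the names `TRIPLES`, `build_index`, and `entities` are illustrative and do not come from the diagram.

```python
from collections import defaultdict

# The five (head, relation, tail) facts drawn in the diagram.
TRIPLES = [
    ("Barack Obama", "Marry_to", "Michelle Obama"),
    ("Barack Obama", "Born_in", "Honolulu"),
    ("Honolulu", "Located_in", "Hawaii"),
    ("Honolulu", "City_of", "USA"),
    ("USA", "Founded_in", "1776"),
]


def build_index(triples):
    """Map each head entity to its outgoing (relation, tail) pairs."""
    index = defaultdict(list)
    for head, relation, tail in triples:
        index[head].append((relation, tail))
    return index


def entities(triples):
    """Collect every node that appears as a head or a tail."""
    return {e for head, _, tail in triples for e in (head, tail)}


index = build_index(TRIPLES)
print(sorted(entities(TRIPLES)))  # the six nodes of the diagram
print(index["Honolulu"])          # outgoing edges of the central node
```

Indexing by head entity keeps "what can I reach from here?" lookups cheap, which is the only query the later reasoning steps need.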

### Detailed Analysis

The diagram visually traces the reasoning path to answer the specific question.

* **Highlighted Reasoning Path:** A specific chain of relationships in the Knowledge Graph is emphasized with **red arrows and red-outlined nodes**. This path is:

1. `Barack Obama` --(Born_in)--> `Honolulu`

2. `Honolulu` --(City_of)--> `USA`

* **Spatial Grounding:** The "LLM agent" icon is positioned to the left of the starting node (`Barack Obama`). The diagram has no explicit legend, so the color coding is inferred: red outlines and red arrows mark the active reasoning path for the current query, while blue-filled nodes and grey arrows mark other facts in the graph that this query does not use.

* **Data Flow:** The process is linear:

1. Input: A natural language question.

2. Processing: The "Reasoning-on-Graphs" module, which integrates LLMs (for understanding and generation) and KGs (for structured factual data).

3. Output: A factual answer derived from the graph.
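
The linear flow above (question in, graph-backed reasoning, answer out) can be sketched as follows. This is an illustration under stated assumptions, not the system's actual implementation: `plan_relation_path` is a hypothetical, hard-coded stand-in for the LLM step, and `follow_path` and `answer` are likewise names invented for this example.

```python
# The five facts drawn in the diagram, as (head, relation, tail) triples.
TRIPLES = [
    ("Barack Obama", "Marry_to", "Michelle Obama"),
    ("Barack Obama", "Born_in", "Honolulu"),
    ("Honolulu", "Located_in", "Hawaii"),
    ("Honolulu", "City_of", "USA"),
    ("USA", "Founded_in", "1776"),
]


def plan_relation_path(question):
    """Hypothetical stand-in for the LLM: pick a topic entity and a relation path.

    Hard-coded for the diagram's single example; a real system would
    prompt a language model here.
    """
    if "Barack Obama" in question and "country" in question:
        return "Barack Obama", ["Born_in", "City_of"]
    raise ValueError("this sketch only covers the diagram's example question")


def follow_path(triples, start, relations):
    """Walk the graph one relation at a time, keeping every entity reached."""
    frontier = {start}
    for relation in relations:
        frontier = {t for h, r, t in triples if h in frontier and r == relation}
    return frontier


def answer(question):
    """Input -> processing (plan, then traverse) -> output."""
    topic, relations = plan_relation_path(question)
    return sorted(follow_path(TRIPLES, topic, relations))


print(answer("Which country is Barack Obama from ?"))  # ['USA']
```

Because the answer is read off the graph rather than generated freely, every returned entity is backed by a chain of stored facts.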

### Key Observations

* The Knowledge Graph contains both relevant and irrelevant information for the given question. The `Marry_to`, `Located_in`, and `Founded_in` facts are present but not used in this specific reasoning chain.

* No edge links `Barack Obama` directly to `USA`. The answer is instead reached through a two-hop path in the graph (`Barack Obama` -> `Honolulu` -> `USA`).

* The use of color (red) is critical for isolating the specific subgraph used for this particular query from the larger knowledge base.
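
To make the two-hop observation concrete, the sketch below runs a breadth-first search over the diagram's directed edges. Breadth-first search is a choice made for this example only; the diagram does not say how the path is actually found, and `shortest_path` is an illustrative name.

```python
from collections import deque

# The five facts drawn in the diagram, as (head, relation, tail) triples.
TRIPLES = [
    ("Barack Obama", "Marry_to", "Michelle Obama"),
    ("Barack Obama", "Born_in", "Honolulu"),
    ("Honolulu", "Located_in", "Hawaii"),
    ("Honolulu", "City_of", "USA"),
    ("USA", "Founded_in", "1776"),
]


def shortest_path(triples, start, goal):
    """Breadth-first search along edge direction.

    Returns the triples on a shortest path from start to goal,
    or None if the goal cannot be reached.
    """
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for head, relation, tail in triples:
            if head == node and tail not in visited:
                visited.add(tail)
                queue.append((tail, path + [(head, relation, tail)]))
    return None


path = shortest_path(TRIPLES, "Barack Obama", "USA")
print(len(path))  # 2 hops
for head, relation, tail in path:
    print(f"{head} --({relation})--> {tail}")
```

The search visits `Michelle Obama` and `Hawaii` along the way but neither appears in the returned path, mirroring the grey edges that the diagram leaves unhighlighted.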

### Interpretation

This diagram demonstrates a **neuro-symbolic AI approach**, where the pattern-matching and language capabilities of LLMs are combined with the explicit, structured knowledge of a Knowledge Graph.

* **What it suggests:** The system can answer factual questions by performing multi-hop reasoning over a graph of interconnected entities. The LLM likely parses the question, identifies the topic entity (`Barack Obama`) and the kind of answer sought (a country), and then queries or traverses the KG to find a connecting path.

* **How elements relate:** The Knowledge Graph acts as a structured memory. The "Reasoning-on-Graphs" component is the engine that uses the LLM to navigate this memory. The "LLM agent" icon within the KG box may symbolize the LLM's role in initiating graph queries or interpreting graph structures.

* **Notable Anomalies/Patterns:** The most significant pattern is the **selective activation of a subgraph**. The system does not use the entire graph; it identifies and follows only the relevant path, which keeps the search focused and arguably resembles step-by-step human reasoning. The `1776` node (the founding year attached to `USA`) is an example of a "distractor" or peripheral fact that the reasoning path does not touch for this query. Overall, the diagram suggests that combining statistical AI (LLMs) with symbolic AI (KGs) can make question answering more accurate, explainable, and grounded.
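
The selective activation described above amounts to partitioning the graph into the part a query uses and the part it ignores. A minimal sketch, assuming the reasoning path is already known; `REASONING_PATH` and `split_subgraph` are illustrative names, not part of the diagram.

```python
# The five facts drawn in the diagram, as (head, relation, tail) triples.
TRIPLES = [
    ("Barack Obama", "Marry_to", "Michelle Obama"),
    ("Barack Obama", "Born_in", "Honolulu"),
    ("Honolulu", "Located_in", "Hawaii"),
    ("Honolulu", "City_of", "USA"),
    ("USA", "Founded_in", "1776"),
]

# The path highlighted in red in the diagram.
REASONING_PATH = [
    ("Barack Obama", "Born_in", "Honolulu"),
    ("Honolulu", "City_of", "USA"),
]


def split_subgraph(triples, path):
    """Separate the edges and nodes on the path from the rest of the graph."""
    active_edges = [t for t in triples if t in path]
    inactive_edges = [t for t in triples if t not in path]
    all_nodes = {e for h, _, t in triples for e in (h, t)}
    active_nodes = {e for h, _, t in active_edges for e in (h, t)}
    return active_edges, inactive_edges, active_nodes, all_nodes - active_nodes


active_edges, inactive_edges, active_nodes, inactive_nodes = split_subgraph(
    TRIPLES, REASONING_PATH
)
print(sorted(active_nodes))    # nodes drawn with red outlines
print(sorted(inactive_nodes))  # nodes drawn with blue fill
```

The resulting split matches the diagram's color coding: three red-outlined nodes on the path and three blue-filled nodes off it.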