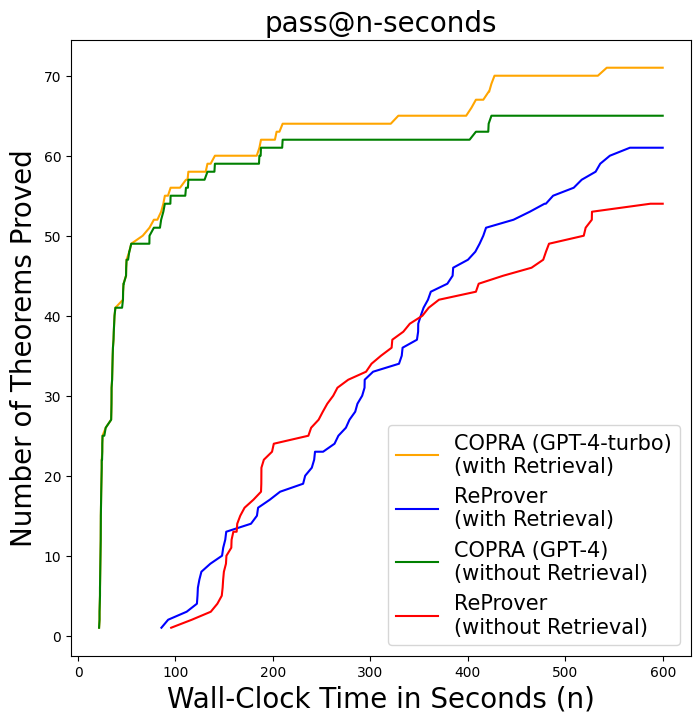

## Line Chart: pass@n-seconds

### Overview

The chart plots the performance of two theorem-proving systems, COPRA and ReProver, over wall-clock time, with and without retrieval. It shows the cumulative number of theorems proved as a function of elapsed time in seconds for four configurations: COPRA (GPT-4-turbo) with retrieval, COPRA (GPT-4) without retrieval, and ReProver with and without retrieval.
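A curve of this kind can be derived from per-theorem solve times. The following is a minimal sketch, assuming each theorem has a recorded wall-clock time to its first successful proof (or `None` if it was never proved). The function name `pass_at_seconds` and the input format are hypothetical, and the sample times are made up for illustration rather than read from the chart.

```python
from bisect import bisect_right


def pass_at_seconds(solve_times, budgets):
    """Count theorems proved within each wall-clock budget.

    solve_times: seconds to the first successful proof per theorem,
                 or None if the theorem was never proved (assumed format).
    budgets:     time budgets n, in seconds.
    """
    # Keep only proved theorems and sort their solve times once.
    proved = sorted(t for t in solve_times if t is not None)
    # bisect_right counts how many solve times are <= n.
    return [bisect_right(proved, n) for n in budgets]


# Made-up solve times, for illustration only.
example_times = [12.0, 45.5, None, 130.2, 299.9, None, 580.0]
curve = pass_at_seconds(example_times, budgets=[0, 100, 200, 300, 400, 500, 600])
print(curve)  # [0, 2, 3, 4, 4, 4, 5]
```

Because the count is cumulative, the resulting curve never decreases, and a flat stretch means no additional theorem was proved during that interval.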

### Components/Axes

- **X-axis**: Wall-Clock Time in Seconds (n)

  - Range: 0 to 600 seconds

  - Labels: 0, 100, 200, 300, 400, 500, 600

- **Y-axis**: Number of Theorems Proved

  - Range: 0 to 70

  - Labels: 0, 10, 20, ..., 70

- **Legend**:

  - **Orange**: COPRA (GPT-4-turbo) (with Retrieval)

  - **Blue**: ReProver (with Retrieval)

  - **Green**: COPRA (GPT-4) (without Retrieval)

  - **Red**: ReProver (without Retrieval)

- **Legend Position**: Bottom-right corner

### Detailed Analysis

1. **COPRA (GPT-4-turbo) with Retrieval (Orange Line)**

   - Starts at ~5 theorems at 100s, rises steadily to ~70 theorems by 600s.

   - Slope: Consistent upward trend with minor plateaus.

2. **ReProver with Retrieval (Blue Line)**

   - Begins at ~10 theorems at 100s, increases to ~60 theorems by 600s.

   - Slope: Gradual rise with sharper acceleration after 300s.

3. **COPRA (GPT-4) without Retrieval (Green Line)**

   - Jumps from 0 to ~25 theorems at 100s, plateaus at ~60 theorems by 300s.

   - Slope: Sharp initial increase, then flat.

4. **ReProver without Retrieval (Red Line)**

   - Starts at 0, reaches ~20 theorems at 300s, ends at ~55 theorems at 600s.

   - Slope: Slow initial growth, accelerates after 300s.
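The plateau and endpoint readings above can be checked mechanically. The following is a rough sketch, assuming each curve is reduced to a few `(seconds, theorems)` samples. The helper `plateau_onset` is hypothetical, and the sample points are the approximate readings listed above, not exact data.

```python
def plateau_onset(points, tol=0):
    """Return the earliest sampled time from which the count stays
    within `tol` of its final value.

    points: (seconds, theorems_proved) samples from a cumulative curve.
    """
    points = sorted(points)
    final = points[-1][1]
    onset = points[-1][0]
    # Walk backwards from the last sample while the curve is still flat.
    for t, count in reversed(points):
        if final - count > tol:
            break
        onset = t
    return onset


# Approximate readings taken from the description above.
green = [(0, 0), (100, 25), (300, 60), (600, 60)]  # COPRA (GPT-4), no retrieval
red = [(0, 0), (300, 20), (600, 55)]               # ReProver, no retrieval

print(plateau_onset(green))  # 300
print(plateau_onset(red))    # 600
```

An onset equal to the last sampled time, as for the red curve, means the curve was still rising at the end of the window, so no plateau is visible. With so few samples the onset is only as precise as the sampling grid.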

### Key Observations

- **Performance Hierarchy**:

  - COPRA (GPT-4-turbo) with retrieval finishes highest, at ~70 theorems by 600s.

  - COPRA (GPT-4) without retrieval finishes below COPRA (GPT-4-turbo) with retrieval. It leads both ReProver configurations for most of the run, although ReProver with retrieval catches up to the same ~60 theorems by 600s.

  - ReProver with retrieval finishes ahead of ReProver without retrieval (~60 vs. ~55 theorems).

- **Retrieval Impact**:

  - For both systems, the configuration with retrieval finishes ahead of the one without.

  - COPRA with retrieval ends ~10 theorems ahead of COPRA without retrieval at 600s (~70 vs. ~60). The legend lists different models for the two runs (GPT-4-turbo vs. GPT-4), so this gap may not be due to retrieval alone.

  - ReProver ends ~5 theorems ahead with retrieval (~60 vs. ~55 at 600s).

- **Plateaus**:

  - COPRA (GPT-4) without retrieval plateaus at ~60 theorems after 300s.

  - ReProver without retrieval shows no plateau: it climbs slowly until ~300s, then accelerates through 600s.

### Interpretation

The data suggest that **retrieval improves theorem-proving performance within the 600-second budget**. COPRA (GPT-4-turbo) with retrieval is the most effective configuration, ending at ~70 theorems. COPRA (GPT-4) without retrieval plateaus at ~60 theorems after 300s, which suggests that additional time alone does not help it prove the remaining theorems. Since the two COPRA runs are labeled with different models, part of the difference between them may come from the model rather than from retrieval. ReProver is slower overall but also benefits from retrieval, ending ~5 theorems higher (~60 vs. ~55 at 600s). Overall, the results indicate that retrieval augmentation helps both systems.