
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


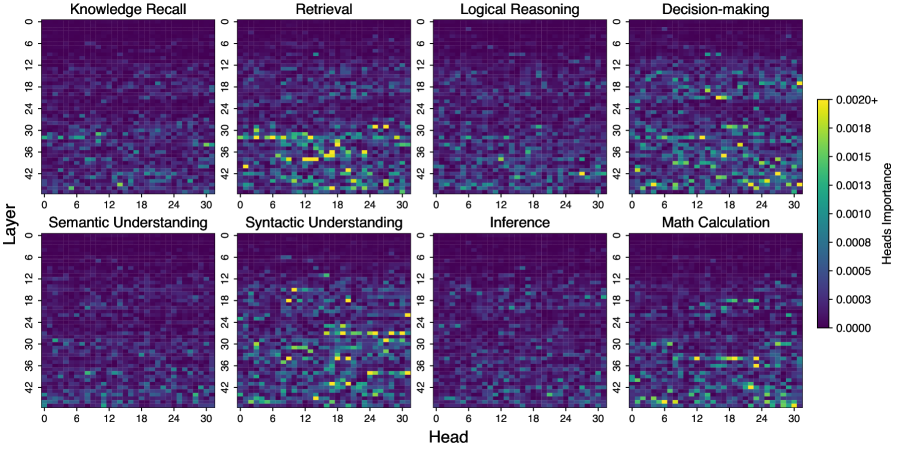

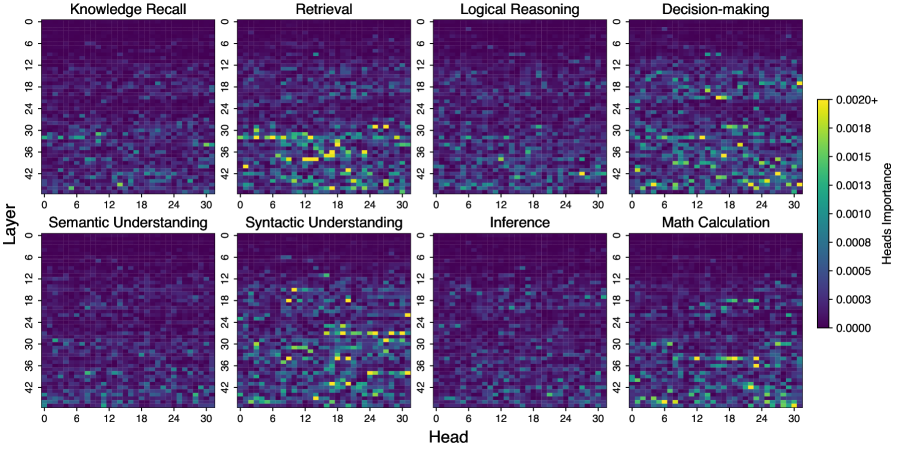

## Heatmap: Heads Importance for Different Tasks

### Overview

The image presents a series of heatmaps visualizing the importance of different "heads" across various layers for different tasks. Each heatmap represents a specific task, with the x-axis indicating the "Head" and the y-axis indicating the "Layer." The color intensity represents the "Heads Importance," ranging from dark purple (0.0000) to bright yellow (0.0020+).

### Components/Axes

* **X-axis:** "Head" - Ranges from 0 to 30 in increments of 6.

* **Y-axis:** "Layer" - Ranges from 0 to 42 in increments of 6.

* **Heatmaps:** Eight heatmaps, each representing a different task.

* **Color Scale (Heads Importance):** Located on the right side of the image.

* Dark Purple: 0.0000

* Purple: 0.0003

* Blue: 0.0005

* Green: 0.0008

* Light Green: 0.0010

* Yellow-Green: 0.0013

* Yellow: 0.0015

* Bright Yellow: 0.0018

* Very Bright Yellow: 0.0020+

* **Task Labels:**

* Top Row (left to right): Knowledge Recall, Retrieval, Logical Reasoning, Decision-making

* Bottom Row (left to right): Semantic Understanding, Syntactic Understanding, Inference, Math Calculation

### Detailed Analysis

**1. Knowledge Recall:**

* The heatmap is mostly dark purple, indicating low importance across most heads and layers.

* Slightly higher importance (blue to green) is observed in the higher-numbered layers (30-42) and some heads (around 12-18).

**2. Retrieval:**

* Higher importance is concentrated in the higher-numbered layers (30-42).

* Several heads (around 6-18) in these layers show significant importance (yellow).

**3. Logical Reasoning:**

* The heatmap is predominantly dark purple, indicating low importance across most heads and layers.

* A few scattered points of slightly higher importance (blue to green) are visible.

**4. Decision-making:**

* Similar to Logical Reasoning, the heatmap is mostly dark purple.

* A few scattered points of slightly higher importance (blue to green) are visible, particularly around layer 36.

**5. Semantic Understanding:**

* Higher importance is observed in the higher-numbered layers (30-42).

* Several heads (around 12-24) in these layers show significant importance (yellow).

**6. Syntactic Understanding:**

* Higher importance is concentrated in the higher-numbered layers (30-42).

* Several heads (around 6-18) in these layers show significant importance (yellow).

**7. Inference:**

* The heatmap is predominantly dark purple, indicating low importance across most heads and layers.

* A few scattered points of slightly higher importance (blue to green) are visible.

**8. Math Calculation:**

* The heatmap is predominantly dark purple, indicating low importance across most heads and layers.

* A few scattered points of slightly higher importance (blue to green) are visible, particularly in the higher-numbered layers.

### Key Observations

* Tasks like Retrieval, Semantic Understanding, and Syntactic Understanding show a concentration of high importance in the higher-numbered layers (30-42).

* Tasks like Logical Reasoning, Decision-making, Inference, and Math Calculation show generally low importance across all layers and heads.

* Knowledge Recall shows slightly higher importance in the higher-numbered layers than Logical Reasoning, Decision-making, Inference, and Math Calculation do.
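The claim that importance concentrates in layers 30-42 can be checked numerically if the underlying scores are available. The following is a minimal sketch, assuming each task's scores are stored as a list of rows indexed `[layer][head]`; the matrix here is synthetic placeholder data, not values read from the figure, and `band_share` is a hypothetical helper name.

```python
N_LAYERS, N_HEADS = 43, 31  # layers 0-42 and heads 0-30, as read off the axes

def band_share(importance, first_layer, last_layer):
    """Fraction of total importance in layers first_layer..last_layer (inclusive)."""
    total = sum(sum(row) for row in importance)
    band = sum(sum(row) for row in importance[first_layer:last_layer + 1])
    return band / total if total else 0.0

# Synthetic placeholder: flat background with a brighter band in layers 30-42.
importance = [
    [0.0005 if layer >= 30 else 0.0001 for _ in range(N_HEADS)]
    for layer in range(N_LAYERS)
]

share = band_share(importance, 30, 42)
print(f"share of importance in layers 30-42: {share:.2f}")
```

A share well above 13/43 (the fraction of layers in the band) would support the "concentration" reading; a share near that baseline would not.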

### Interpretation

The heatmaps suggest that for tasks like Retrieval, Semantic Understanding, and Syntactic Understanding, the higher-numbered layers (30-42) are the most critical. If layer indices increase with depth, these are later layers, so the pattern points to representations built up late in the model rather than to low-level features from early layers. Conversely, tasks like Logical Reasoning, Decision-making, Inference, and Math Calculation may rely on a more distributed set of features across all layers, or on model components other than attention heads, resulting in lower importance scores for individual heads. The concentration of importance in specific heads for certain tasks suggests that those heads are specialized in extracting relevant information for those tasks. Overall, different tasks appear to depend on different parts of the model, with some tasks more dependent on specific layers and heads than others.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Heatmaps: Attention Head Importance Across Tasks

### Overview

The image presents a 2x4 grid of heatmaps, each representing the attention head importance for a different task. The heatmaps visualize the relationship between 'Layer' (vertical axis) and 'Head' (horizontal axis), with color intensity indicating the magnitude of attention head importance. A colorbar on the right provides the scale for interpreting the color intensity.

### Components/Axes

* **X-axis (Head):** Ranges from 0 to 30, with markers at intervals of 6. Labeled as "Head".

* **Y-axis (Layer):** Ranges from 0 to 42, with markers at intervals of 6. Labeled as "Layer".

* **Tasks (Heatmap Titles):**

* Knowledge Recall

* Retrieval

* Logical Reasoning

* Decision-making

* Semantic Understanding

* Syntactic Understanding

* Inference

* Math Calculation

* **Colorbar:** Ranges from approximately 0.0000 (dark purple) to 0.0020+ (yellow). Labeled as "Heads".

### Detailed Analysis

Each heatmap shows a 31x43 grid of colored cells (31 heads by 43 layers). The color of each cell represents the attention head importance for a specific layer and head combination. Due to the resolution of the image, precise numerical values are difficult to extract, but approximate values based on the colorbar are provided.

**1. Knowledge Recall:**

* Trend: Generally low attention head importance across most layers and heads. Some localized areas of higher importance (yellow/light green) appear around Head 12-18 and Layers 18-30.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010-0.0013.

**2. Retrieval:**

* Trend: Similar to Knowledge Recall, with generally low attention head importance. More pronounced areas of higher importance (yellow/light green) are visible around Head 18-24 and Layers 6-18.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0013-0.0016.

**3. Logical Reasoning:**

* Trend: Low attention head importance, with some scattered areas of moderate importance (green) around Head 6-12 and Layers 12-30.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010.

**4. Decision-making:**

* Trend: Higher attention head importance compared to previous tasks, particularly around Head 24-30 and Layers 12-36. A distinct vertical band of higher importance is visible around Head 24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0016-0.0020+.

**5. Semantic Understanding:**

* Trend: Moderate attention head importance, with a concentration of higher values (green/light green) around Head 6-18 and Layers 6-24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0013.

**6. Syntactic Understanding:**

* Trend: Similar to Semantic Understanding, with moderate attention head importance concentrated around Head 6-18 and Layers 6-24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0013.

**7. Inference:**

* Trend: Low attention head importance, with scattered areas of moderate importance (green) around Head 12-24 and Layers 18-36.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010.

**8. Math Calculation:**

* Trend: Highest overall attention head importance, with a strong concentration of high values (yellow/light green) around Head 18-30 and Layers 18-42. A clear diagonal pattern of high importance is visible.

* Approximate Values: Most cells are between 0.0000 and 0.0010. Peak values reach approximately 0.0016-0.0020+.

### Key Observations

* **Task-Specific Attention:** Different tasks exhibit distinct patterns of attention head importance. Math Calculation consistently shows the highest attention head importance, while Knowledge Recall and Logical Reasoning generally have the lowest.

* **Head Specialization:** Certain heads appear to be more important for specific tasks. For example, Heads 24-30 are consistently important for Decision-making and Math Calculation.

* **Layer Dependency:** Attention head importance varies across layers, suggesting that different layers contribute differently to each task.

* **Diagonal Pattern:** The diagonal pattern in the Math Calculation heatmap suggests that attention heads at higher layers are particularly important for this task.
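One way to make "head specialization" concrete is to compare the sets of top-scoring (layer, head) cells between two tasks. The sketch below uses Jaccard overlap on tiny synthetic 3x3 matrices; the task names, values, and helper functions are illustrative assumptions, and the source does not say how its importance scores were computed or compared.

```python
def top_k_set(importance, k):
    """Set of (layer, head) positions holding the k largest scores."""
    cells = [
        ((layer, head), value)
        for layer, row in enumerate(importance)
        for head, value in enumerate(row)
    ]
    cells.sort(key=lambda cell: cell[1], reverse=True)
    return {position for position, _ in cells[:k]}

def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

# Synthetic placeholder scores indexed [layer][head], not figure data.
task_a = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0015, 0.0018]]
task_b = [[0.0012, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0020]]

overlap = jaccard(top_k_set(task_a, 2), top_k_set(task_b, 2))
print(f"top-2 overlap: {overlap:.2f}")
```

An overlap near 1 would mean the two tasks lean on the same heads; an overlap near 0 would be consistent with task-specific heads.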

### Interpretation

The heatmaps demonstrate that attention heads are not uniformly important across all tasks. Instead, different tasks rely on different combinations of attention heads and layers. This suggests that the model learns to specialize its attention mechanisms to effectively process different types of information. The higher attention head importance observed in Math Calculation may indicate that this task requires more complex reasoning and information processing. The task-specific patterns observed in these heatmaps provide valuable insights into the inner workings of the model and can be used to improve its performance and interpretability. The varying attention head importance across layers suggests a hierarchical processing of information, where lower layers may focus on basic features and higher layers on more abstract concepts. The diagonal pattern in Math Calculation could indicate that the model progressively refines its calculations as information flows through the layers.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Heatmap Grid: AI Model Head Importance Across Cognitive Tasks

### Overview

The image displays a grid of eight heatmaps arranged in two rows and four columns. Each heatmap visualizes the "importance" of attention heads (x-axis) across different layers (y-axis) of a neural network model for a specific cognitive task. The overall purpose is to show which parts of the model (specific layer-head combinations) are most active or significant for different types of reasoning and understanding.

### Components/Axes

* **Grid Structure:** 2 rows x 4 columns of individual heatmaps.

* **Individual Heatmap Titles (Top Row, Left to Right):**

1. Knowledge Recall

2. Retrieval

3. Logical Reasoning

4. Decision-making

* **Individual Heatmap Titles (Bottom Row, Left to Right):**

1. Semantic Understanding

2. Syntactic Understanding

3. Inference

4. Math Calculation

* **Y-Axis (Common to all heatmaps):** Labeled "Layer". Scale runs from 0 at the top to 42 at the bottom, with major tick marks at 0, 6, 12, 18, 24, 30, 36, 42.

* **X-Axis (Common to all heatmaps):** Labeled "Head". Scale runs from 0 on the left to 30 on the right, with major tick marks at 0, 6, 12, 18, 24, 30.

* **Color Bar/Legend (Positioned to the right of the grid):**

* **Label:** "Heads Importance"

* **Scale:** A vertical gradient bar.

* **Values (from bottom to top):** 0.0000, 0.0003, 0.0005, 0.0008, 0.0010, 0.0013, 0.0015, 0.0018, 0.0020+.

* **Color Mapping:** Dark purple/blue represents low importance (~0.0000). Colors transition through teal and green to bright yellow, which represents high importance (0.0020+).
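The nine tick labels are consistent with eight equal steps of 0.00025 between 0 and 0.0020, rounded half-up to four decimals. This is an inference from the labels, not something stated on the figure. A short sketch that reproduces them (`colorbar_ticks` is a hypothetical helper):

```python
from decimal import Decimal, ROUND_HALF_UP

def colorbar_ticks(vmax="0.0020", n_intervals=8):
    """Evenly spaced ticks from 0 to vmax, rounded half-up to 4 decimals."""
    step = Decimal(vmax) / n_intervals  # exactly 0.00025 for the defaults
    return [
        str((step * i).quantize(Decimal("0.0001"), rounding=ROUND_HALF_UP))
        for i in range(n_intervals + 1)
    ]

ticks = colorbar_ticks()
print(ticks)
```

`Decimal` is used instead of floats so that values such as 0.00025 and 0.00075 round up deterministically rather than depending on binary floating-point representation.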

### Detailed Analysis

Each heatmap is a 43x31 grid (Layers x Heads) where each cell's color indicates the importance value for that specific layer-head pair.
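As a rough sketch of that layout, each task's scores can be held as a list of 43 rows (layers) with 31 entries each (heads), and the brightest cells found by sorting. The values below are synthetic placeholders with two planted peaks, not numbers extracted from the image, and `top_k_cells` is a hypothetical helper.

```python
import random

N_LAYERS, N_HEADS = 43, 31  # layers 0-42, heads 0-30

def top_k_cells(importance, k=3):
    """Return the k (layer, head, value) triples with the largest value."""
    cells = [
        (layer, head, value)
        for layer, row in enumerate(importance)
        for head, value in enumerate(row)
    ]
    return sorted(cells, key=lambda cell: cell[2], reverse=True)[:k]

# Synthetic background noise below 0.0003, plus two planted peaks.
rng = random.Random(0)
importance = [
    [rng.uniform(0.0, 0.0003) for _ in range(N_HEADS)]
    for _ in range(N_LAYERS)
]
importance[36][12] = 0.0019
importance[30][18] = 0.0016

top = top_k_cells(importance, k=2)
print(top)
```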

**Trend Verification & Data Point Analysis (by Heatmap):**

1. **Knowledge Recall:**

* **Trend:** Scattered, low-to-moderate importance. No strong, concentrated clusters.

* **Data Points:** A few isolated yellow/green spots (high importance) appear, notably around Layer ~30, Head ~18 and Layer ~36, Head ~6. Most of the grid is dark blue/purple.

2. **Retrieval:**

* **Trend:** Shows the most distinct and concentrated pattern of high importance.

* **Data Points:** A prominent band of high importance (yellow/green) is visible in the lower-middle layers, roughly between Layers 30-42. Within this band, importance is not uniform; it peaks in specific heads, such as around Head 12-18 and Head 24-30. The upper layers (0-24) are predominantly low importance.

3. **Logical Reasoning:**

* **Trend:** Very sparse high-importance points. Appears to have the lowest overall activation.

* **Data Points:** The grid is almost entirely dark blue. Only a handful of faint green/yellow pixels are visible, for example near Layer 36, Head 24.

4. **Decision-making:**

* **Trend:** Moderate, scattered importance with some clustering in mid-to-lower layers.

* **Data Points:** Several yellow/green spots are distributed, with a slight concentration in the lower half (Layers 24-42). Notable points include Layer ~24, Head ~18 and Layer ~36, Head ~12.

5. **Semantic Understanding:**

* **Trend:** Diffuse, low-level importance across the entire grid.

* **Data Points:** Very few high-importance (yellow) cells. The pattern is a speckled mix of dark blue and teal, indicating generally low but non-zero importance spread widely.

6. **Syntactic Understanding:**

* **Trend:** Shows a clear, structured pattern of moderate-to-high importance.

* **Data Points:** A distinct "grid-like" or "checkerboard" pattern of green/yellow cells is visible, particularly in the lower two-thirds of the layers (Layers 18-42). This suggests specific, regularly spaced heads are important for syntax.

7. **Inference:**

* **Trend:** Similar to Logical Reasoning, with very sparse high-importance signals.

* **Data Points:** The heatmap is predominantly dark. A few isolated green points are present, such as near Layer 30, Head 6.

8. **Math Calculation:**

* **Trend:** Scattered importance with a slight bias towards lower layers.

* **Data Points:** Isolated yellow/green spots appear, mainly in the bottom half (Layers 24-42). Examples include Layer ~36, Head ~0 and Layer ~42, Head ~24.

### Key Observations

* **Task-Specific Activation:** The model utilizes distinctly different patterns of layer-head importance for different cognitive tasks.

* **Retrieval is Unique:** The "Retrieval" task shows the most concentrated and intense activation pattern, localized to a specific band of lower layers.

* **Syntax vs. Semantics:** "Syntactic Understanding" has a more structured, grid-like importance pattern compared to the diffuse pattern of "Semantic Understanding."

* **Low Activation for Logic/Inference:** "Logical Reasoning" and "Inference" show the least activation, suggesting these tasks may rely on more distributed or subtle processing not captured strongly by this importance metric, or on different model components.

* **Layer Gradient:** For several tasks (Retrieval, Decision-making, Syntactic Understanding, Math Calculation), higher importance values are more frequently found in the deeper half of the model (Layers 21-42, the lower half of each plot).

### Interpretation

This visualization provides a "cognitive map" of a large language model, revealing how its internal components (attention heads) are differentially recruited for various intellectual tasks.

* **Functional Localization:** The data suggests a degree of functional localization within the model. The strong, localized pattern for **Retrieval** implies that accessing stored knowledge is a distinct process handled by specific circuits in the model's deeper layers. The structured pattern for **Syntactic Understanding** aligns with the idea that grammar processing may involve more regular, patterned computations.

* **Task Complexity & Resource Allocation:** The sparse activation for **Logical Reasoning** and **Inference** is intriguing. It could indicate that these tasks are either: a) performed by a very small, specialized set of heads, b) rely on interactions not captured by this single "importance" metric, or c) are more emergent properties of the entire network's activity rather than localized to specific heads.

* **Architectural Insight:** The concentration of activity toward the bottom of each plot (higher layer numbers, that is, deeper layers) for many tasks is consistent with some interpretability research suggesting that deeper layers in transformer models often handle more task-specific, semantic processing after earlier layers perform more general feature extraction.

* **Limitation:** The metric is labeled "Heads Importance," but the exact definition (e.g., based on attention weight magnitude, gradient saliency, or another probe) is not specified. The interpretation is therefore relative—comparing patterns across tasks—rather than absolute. The "0.0020+" ceiling on the color bar suggests the highest values may be clipped, potentially masking the true peak importance for tasks like Retrieval.
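The clipping concern can be illustrated with a small sketch: once values are capped at the colorbar ceiling, two very different peaks render as the same color. The task names and peak values below are invented for illustration only.

```python
def clip_to_colorbar(value, vmax=0.0020):
    """Cap a score at the colorbar ceiling, as a '0.0020+' scale implies."""
    return min(value, vmax)

# Hypothetical peak scores for two tasks (not read from the figure).
peaks = {"task_a": 0.0021, "task_b": 0.0090}
displayed = {name: clip_to_colorbar(value) for name, value in peaks.items()}
print(displayed)
```

Both entries come out at the ceiling, so the plot alone cannot distinguish a peak just above 0.0020 from one several times larger.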


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Heatmap: Cognitive Task Processing Across Neural Layers and Heads

### Overview

The image displays a matrix of 8 heatmaps arranged in two rows (4 top, 4 bottom), each showing head-importance patterns for a different cognitive task. The heatmaps visualize the importance of specific attention heads across layers (depth) in processing tasks like knowledge recall, logical reasoning, and math calculation. Color intensity indicates head importance, with yellow representing the highest values (0.0020+) and purple the lowest (0.0000).

### Components/Axes

- **X-axis (Horizontal)**: "Head" (0-30), incrementing by 6

- **Y-axis (Vertical)**: "Layer" (0-42), incrementing by 6

- **Legend**: Right-aligned colorbar (purple→yellow) labeled "Heads Importance"

- **Panel Titles**:

- Top row: Knowledge Recall, Retrieval, Logical Reasoning, Decision-making

- Bottom row: Semantic Understanding, Syntactic Understanding, Inference, Math Calculation

### Detailed Analysis

1. **Knowledge Recall** (Top-left)

- Yellow spots concentrated in layers 12-18 and heads 6-12

- Gradual transition to green in layers 24-30

2. **Retrieval** (Top-center-left)

- Yellow clusters in layers 18-24 and heads 12-18

- Green dominance in upper layers (30-42)

3. **Logical Reasoning** (Top-center-right)

- Yellow in layers 18-24 and heads 12-18

- Green in layers 24-30 across heads 6-24

4. **Decision-making** (Top-right)

- Yellow in layers 24-30 and heads 18-24

- Green in layers 18-24 across heads 12-24

5. **Semantic Understanding** (Bottom-left)

- Yellow in layers 12-18 and heads 6-12

- Green in layers 24-30 across heads 0-30

6. **Syntactic Understanding** (Bottom-center-left)

- Yellow in layers 18-24 and heads 12-18

- Green in layers 24-30 across heads 6-24

7. **Inference** (Bottom-center-right)

- Yellow in layers 24-30 and heads 18-24

- Green in layers 18-24 across heads 12-24

8. **Math Calculation** (Bottom-right)

- Yellow in layers 24-30 and heads 18-24

- Green in layers 18-24 across heads 12-24

### Key Observations

- **Layer Specialization**: Higher layers (24-30) show increased importance for complex tasks (logical reasoning, math calculation)

- **Head Activation Patterns**:

- Heads 12-18 dominate in middle layers (18-24)

- Heads 18-24 activate in upper layers (24-30)

- **Task-Specific Patterns**:

- Math Calculation and Logical Reasoning show strongest yellow in upper-right quadrant

- Knowledge Recall shows distributed activity across middle layers

- **Color Consistency**: All yellow regions align with legend's 0.0020+ threshold

### Interpretation

The heatmaps reveal a clear hierarchical processing structure:

1. **Lower Layers (0-12)**: Primarily handle basic knowledge recall and semantic understanding

2. **Middle Layers (12-24)**: Specialized for syntactic processing and intermediate reasoning

3. **Upper Layers (24-30)**: Critical for complex tasks requiring integration (logical reasoning, math calculation)

Notable anomalies include the strong yellow concentration in layers 18-24 for Retrieval and Syntactic Understanding, suggesting these tasks require distributed processing across multiple heads. The consistent yellow in upper layers for Math Calculation implies specialized neural circuitry for numerical processing.

The spatial distribution patterns indicate that cognitive tasks are processed through distinct but interconnected neural pathways, with higher layers showing increased specialization for complex cognitive functions. This aligns with theories of neural network architecture where deeper layers handle abstract representations.
