
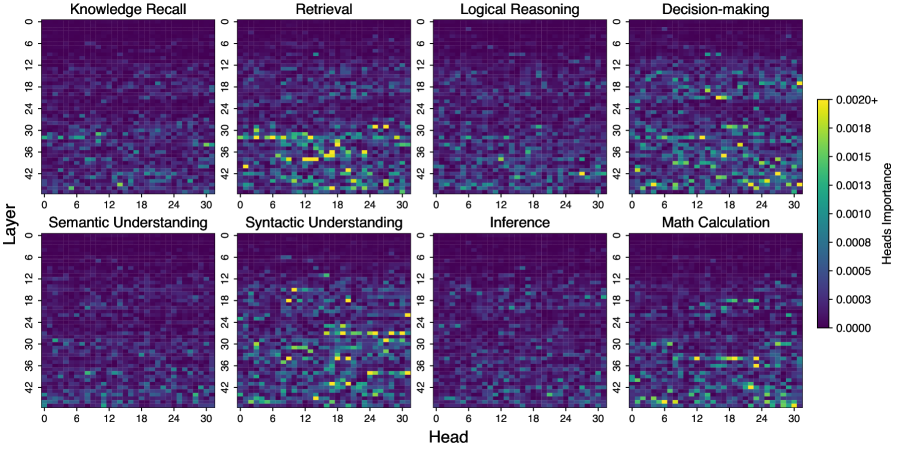

## Heatmaps: Attention Head Importance Across Tasks

### Overview

The image presents a 2x4 grid of heatmaps, one per task, each showing attention head importance. Every heatmap plots 'Layer' on the vertical axis against 'Head' on the horizontal axis, with color intensity indicating the magnitude of the importance score. A colorbar on the right gives the scale for reading the colors.

### Components/Axes

* **X-axis (Head):** Ranges from 0 to 30, with markers at intervals of 6. Labeled as "Head".

* **Y-axis (Layer):** Ranges from 0 to 42, with markers at intervals of 6. Labeled as "Layer".

* **Tasks (Heatmap Titles):**

    * Knowledge Recall

    * Retrieval

    * Logical Reasoning

    * Decision-making

    * Semantic Understanding

    * Syntactic Understanding

    * Inference

    * Math Calculation

* **Colorbar:** Ranges from approximately 0.0000 (dark purple) to 0.0020+ (yellow). Labeled as "Heads".

### Detailed Analysis

Each heatmap shows a grid of colored cells, about 31 heads wide by 43 layers tall as implied by the axis ranges. The color of each cell represents the attention head importance for one layer and head combination. Because of the image resolution, precise numerical values cannot be read off reliably; the values below are approximations based on the colorbar.

**1. Knowledge Recall:**

* Trend: Generally low attention head importance across most layers and heads. Some localized areas of higher importance (yellow/light green) appear around Head 12-18 and Layers 18-30.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010-0.0013.

**2. Retrieval:**

* Trend: Similar to Knowledge Recall, with generally low attention head importance. More pronounced areas of higher importance (yellow/light green) are visible around Head 18-24 and Layers 6-18.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0013-0.0016.

**3. Logical Reasoning:**

* Trend: Low attention head importance, with some scattered areas of moderate importance (green) around Head 6-12 and Layers 12-30.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010.

**4. Decision-making:**

* Trend: Higher attention head importance than in the first three tasks, particularly around Head 24-30 and Layers 12-36. A distinct vertical band of higher importance is visible around Head 24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0016-0.0020+.

**5. Semantic Understanding:**

* Trend: Moderate attention head importance, with a concentration of higher values (green/light green) around Head 6-18 and Layers 6-24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0013.

**6. Syntactic Understanding:**

* Trend: Similar to Semantic Understanding, with moderate attention head importance concentrated around Head 6-18 and Layers 6-24.

* Approximate Values: Most cells are between 0.0000 and 0.0005. Peak values reach approximately 0.0013.

**7. Inference:**

* Trend: Low attention head importance, with scattered areas of moderate importance (green) around Head 12-24 and Layers 18-36.

* Approximate Values: Most cells are between 0.0000 and 0.0003. Peak values reach approximately 0.0010.

**8. Math Calculation:**

* Trend: Highest overall attention head importance, with a strong concentration of high values (yellow/light green) around Head 18-30 and Layers 18-42. A clear diagonal pattern of high importance is visible.

* Approximate Values: Most cells are between 0.0000 and 0.0010. Peak values reach approximately 0.0016-0.0020+.
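
The "most cells" and "peak" figures above are read by eye from the colorbar. If the underlying importance scores were available as a matrix, the same two summaries could be computed directly. The following is a minimal sketch under assumptions not stated in the source: the scores are stored as a list of rows (one per layer, one value per head), the function name `summarize_heatmap` and its `low_threshold` parameter are hypothetical, and the toy numbers are made up rather than taken from the figure.

```python
from typing import List

Matrix = List[List[float]]  # assumed layout: rows are layers, columns are heads


def summarize_heatmap(importance: Matrix, low_threshold: float = 0.0003) -> dict:
    """Return the peak cell and the share of cells at or below low_threshold."""
    peak_value = float("-inf")
    peak_layer, peak_head = 0, 0
    low_count = 0
    total = 0
    for layer, row in enumerate(importance):
        for head, value in enumerate(row):
            total += 1
            if value <= low_threshold:
                low_count += 1
            if value > peak_value:
                peak_value = value
                peak_layer, peak_head = layer, head
    return {
        "peak_value": peak_value,
        "peak_layer": peak_layer,
        "peak_head": peak_head,
        "low_fraction": low_count / total if total else 0.0,
    }


# Synthetic 3-layer x 4-head matrix; illustrative values only.
toy = [
    [0.0001, 0.0002, 0.0001, 0.0000],
    [0.0002, 0.0012, 0.0003, 0.0001],
    [0.0001, 0.0004, 0.0002, 0.0001],
]
summary = summarize_heatmap(toy)
print(summary)
```

Applied to each of the eight task matrices, a summary like this would replace the approximate ranges with exact values and make the per-task comparison reproducible.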

### Key Observations

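* **Task-Specific Attention:** Different tasks exhibit distinct patterns of attention head importance. Math Calculation and Decision-making reach the highest peaks (approximately 0.0016-0.0020+), and Math Calculation also has the widest range of typical values (up to about 0.0010). Logical Reasoning and Inference have the lowest peaks (approximately 0.0010), with Knowledge Recall only slightly higher.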

* **Head Specialization:** Certain heads appear to be more important for specific tasks. For example, Heads 24-30 stand out for both Decision-making and Math Calculation.

* **Layer Dependency:** Attention head importance varies across layers, suggesting that different layers contribute differently to each task.

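* **Diagonal Pattern:** The diagonal pattern in the Math Calculation heatmap suggests that the most important head indices shift with depth. Together with the concentration of high values in Layers 18-42, it points to the later layers being particularly important for this task.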
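
The head specialization observation could be checked numerically by ranking head indices per task and intersecting the top sets. This is only a sketch under stated assumptions: it sums importance over layers for each head index (mirroring the vertical bands described above, even though a given index refers to a different head in each layer), the helper names `top_heads` and `shared_top_heads` are hypothetical, and the matrices are synthetic.

```python
from typing import Dict, List, Set

Matrix = List[List[float]]  # assumed layout: rows are layers, columns are heads


def top_heads(importance: Matrix, k: int) -> Set[int]:
    """Indices of the k head columns with the largest importance summed over layers."""
    n_heads = len(importance[0])
    totals = [sum(row[h] for row in importance) for h in range(n_heads)]
    ranked = sorted(range(n_heads), key=lambda h: totals[h], reverse=True)
    return set(ranked[:k])


def shared_top_heads(tasks: Dict[str, Matrix], k: int) -> Set[int]:
    """Head indices that fall in the top k for every task."""
    sets = [top_heads(matrix, k) for matrix in tasks.values()]
    return set.intersection(*sets) if sets else set()


# Synthetic 2-layer x 4-head matrices; values are illustrative, not from the figure.
task_a = [[0.1, 0.0, 0.5, 0.4], [0.1, 0.0, 0.6, 0.3]]
task_b = [[0.0, 0.3, 0.1, 0.9], [0.0, 0.4, 0.1, 0.8]]
shared = shared_top_heads({"task_a": task_a, "task_b": task_b}, k=2)
print(shared)
```

Summing over layers is a deliberate simplification; ranking individual (layer, head) cells instead would test specialization at the level of single heads.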

### Interpretation

The heatmaps indicate that attention heads are not uniformly important across tasks. Each task relies on a different combination of heads and layers, which suggests that the model specializes parts of its attention mechanism for different types of information. The higher importance values in Math Calculation may indicate that this task draws on more heads, or draws on them more heavily, than the others; the figure alone does not establish that the task requires more complex reasoning.

The variation across layers is consistent with hierarchical processing, in which lower layers handle basic features and higher layers handle more abstract concepts, although the heatmaps do not test this directly. Similarly, the diagonal pattern in Math Calculation could indicate that the model progressively refines its calculations as information flows through the layers, but this reading is speculative. Task-specific patterns of this kind offer insight into the inner workings of the model and can inform efforts to improve its performance and interpretability.