TECHNICAL ASSET FINGERPRINT

33c8daa75dfb6f8ba1498f9c



FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

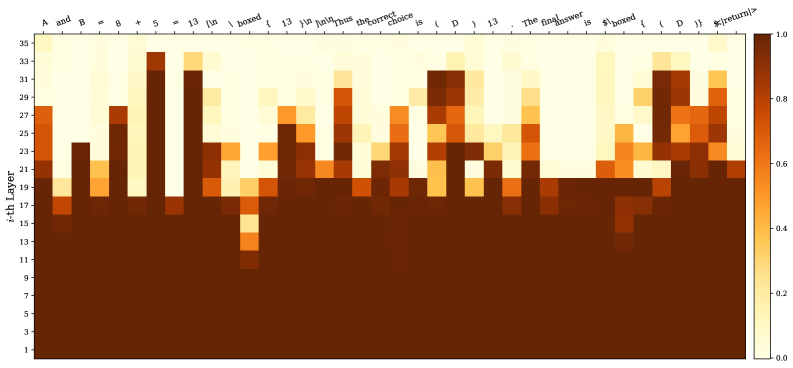

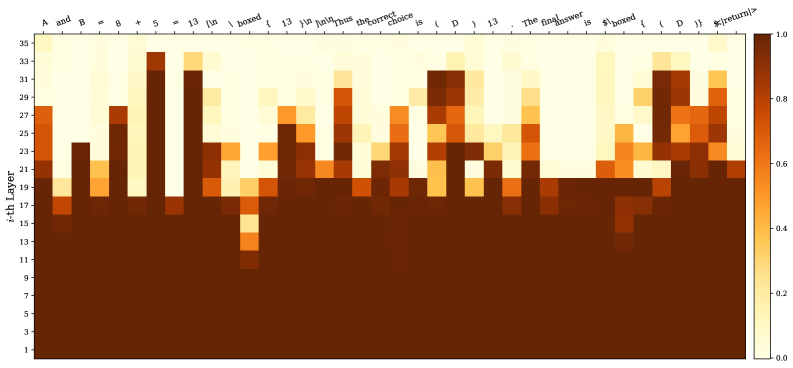

## Heatmap: Layer Activation for Mathematical Expression

### Overview

The image is a heatmap visualizing the activation levels of different layers in a neural network when processing a mathematical expression. The x-axis represents the tokens of the expression, and the y-axis represents the layer number. The color intensity indicates the activation level, ranging from dark brown (low activation) to light yellow (high activation).

### Components/Axes

* **X-axis:** Represents the tokens of the mathematical expression: "A and B = 8 + 5 = 13 \n \boxed { 13 } \n \n Thus the correct choice is ( D ) 13 . The final answer is \boxed { ( D ) } $\<return/>".

* **Y-axis:** Represents the layer number, labeled "i-th Layer", ranging from 1 to 35, with tick marks every 2 layers.

* **Colorbar:** Located on the right side, indicates the activation level, ranging from 0.0 (dark brown) to 1.0 (light yellow).
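
The colorbar maps every cell to a value between 0.0 and 1.0, but the figure does not say how raw activations were scaled into that range. A minimal sketch, assuming a single global min-max normalization over a hypothetical layers × tokens matrix (the function name and the toy values are illustrative, not taken from the figure):

```python
def min_max_normalize(matrix):
    """Scale every cell of a 2-D list (rows = layers, columns = tokens) into [0.0, 1.0].

    Assumption: one global min-max scaling. The original figure may instead
    normalize per layer, per token, or not at all.
    """
    flat = [v for row in matrix for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # A constant matrix has no contrast; map every cell to 0.0.
        return [[0.0 for _ in row] for row in matrix]
    return [[(v - lo) / span for v in row] for row in matrix]


# Hypothetical raw activations: 3 layers x 4 tokens.
raw = [
    [2.0, 4.0, 6.0, 8.0],
    [1.0, 1.0, 1.0, 1.0],
    [3.0, 9.0, 5.0, 7.0],
]
scaled = min_max_normalize(raw)
print(scaled[2])  # [0.25, 1.0, 0.5, 0.75]
```

With global scaling, a layer that is uniform in raw units (the second row) stays uniform after scaling, which is the kind of band the lower layers show here.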

### Detailed Analysis

The heatmap shows the activation levels for each layer in response to each token in the input sequence.

* **Layer Activation Distribution:**

* Layers 1 to approximately 17 show consistently high activation (dark brown) across all tokens.

* Above layer 17, the activation patterns become more differentiated, with specific tokens triggering higher activation in certain layers.

* **Token-Specific Activation:**

* All tokens in the sequence, from "A" through "$\<return/>", exhibit varying degrees of activation across the upper layers (above layer 17).

* The tokens "8", "+", "5", "13", and "D" show relatively high activation in some of the upper layers.

* The tokens "\n", "\boxed", "{", "}", ".", and "$\<return/>" also show distinct activation patterns.

* **Specific Layer Activation:**

* Layers around 27-35 show higher activation for tokens related to the final answer and formatting (e.g., "\boxed", "D", "$\<return/>").

* Layers around 21-25 show higher activation for tokens related to the mathematical operation (e.g., "8", "+", "5", "13").
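
The split between "uniform across all tokens" (layers 1 to about 17) and "differentiated" (above 17) can be made measurable. One simple option, sketched here as an assumption rather than the method behind the figure, is to take the spread (max minus min) of each layer's row and report the first layer where it exceeds a threshold. The threshold of 0.3 and the toy matrix are hypothetical.

```python
def first_differentiated_layer(matrix, threshold=0.3):
    """Return the 1-based index of the first layer whose activations vary across
    tokens by more than `threshold`, or None if every layer is near-uniform.

    `matrix[i][j]` is the normalized activation of token j at layer i + 1.
    """
    for i, row in enumerate(matrix):
        if max(row) - min(row) > threshold:
            return i + 1
    return None


# Hypothetical normalized activations: 3 layers x 4 tokens.
toy = [
    [0.95, 0.97, 0.96, 0.94],  # layer 1: near-uniform
    [0.93, 0.96, 0.95, 0.92],  # layer 2: near-uniform
    [0.20, 0.85, 0.10, 0.60],  # layer 3: token-specific
]
print(first_differentiated_layer(toy))  # 3
```

A spread is only one choice; per-layer variance or entropy across tokens would serve the same purpose.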

### Key Observations

* Lower layers (1-17) have consistently high activation, suggesting they might be responsible for basic feature extraction.

* Upper layers (above 17) show more token-specific activation patterns, suggesting they are involved in higher-level reasoning or decision-making.

* The activation patterns vary significantly depending on the token, suggesting that different layers are responsible for processing different types of information.

### Interpretation

The heatmap provides insights into how a neural network processes a mathematical expression. The lower layers seem to handle fundamental feature extraction, while the upper layers focus on more complex tasks like understanding the mathematical operations, formatting the output, and generating the final answer. The varying activation patterns for different tokens highlight the network's ability to differentiate and process various components of the input sequence. The high activation in the upper layers for tokens related to the final answer suggests that these layers are crucial for generating the correct output.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Heatmap: Attention Weights Visualization

### Overview

The image presents a heatmap visualizing attention weights, likely from a neural network model. The heatmap displays the intensity of attention between different layers (y-axis) and different tokens in a sequence (x-axis). The color intensity represents the attention weight, ranging from 0.0 (dark brown) to 1.0 (light yellow).

### Components/Axes

* **Y-axis:** "i-th Layer", ranging from 1 to 29, with tick marks at integer values.

* **X-axis:** A sequence of tokens: "A and B", "*", "8", "13", "[in", "boxed", "]", "And", "the_correct", "choice", "is", "D", "13", "The", "answer", "is", "$boxed", "{", "return", "}".

* **Color Scale:** A gradient from dark brown (0.0) to light yellow (1.0) representing attention weight.

* **Legend:** A colorbar on the right side of the heatmap, indicating the mapping between color and attention weight.

### Detailed Analysis

The heatmap shows varying attention weights across layers and tokens. Here's a breakdown of notable observations:

* **Layers 1-10:** Generally low attention weights (dark brown) across all tokens.

* **Layers 11-15:** Increased attention around the tokens "A and B", "8", "13", "[in", "boxed", "]", "And", "the_correct", "choice", "is", "D", "13", "The", "answer", "is". Specifically, there is a peak around layer 13 for the tokens "A and B", "8", "13", "[in", "boxed", "]".

* **Layers 16-20:** Attention weights are more distributed, with peaks around "the_correct", "choice", "is", "D", "13", "The", "answer", "is".

* **Layers 21-25:** A strong attention peak appears around the tokens "answer", "is", "$boxed", "{", "return", "}".

* **Layers 26-29:** Attention weights decrease again, with some residual attention around "answer", "is", "$boxed", "{", "return", "}".

Here's a more granular look at approximate attention weights (with uncertainty due to visual estimation):

| Layer | "A and B" | "*" | "8" | "13" | "[in" | "boxed" | "]" | "And" | "the_correct" | "choice" | "is" | "D" | "13" | "The" | "answer" | "is" | "$boxed" | "{" | "return" | "}" |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | ~0.02 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 | ~0.01 |
| 5 | ~0.03 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 | ~0.02 |
| 10 | ~0.05 | ~0.03 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.03 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.04 | ~0.03 | ~0.03 | ~0.03 | ~0.03 |
| 13 | ~0.75 | ~0.2 | ~0.6 | ~0.65 | ~0.5 | ~0.6 | ~0.4 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.5 | ~0.4 | ~0.4 | ~0.4 | ~0.4 |
| 17 | ~0.4 | ~0.1 | ~0.3 | ~0.3 | ~0.2 | ~0.3 | ~0.2 | ~0.3 | ~0.6 | ~0.6 | ~0.6 | ~0.5 | ~0.4 | ~0.4 | ~0.5 | ~0.5 | ~0.3 | ~0.3 | ~0.3 | ~0.3 |
| 22 | ~0.2 | ~0.05 | ~0.1 | ~0.1 | ~0.05 | ~0.1 | ~0.05 | ~0.1 | ~0.2 | ~0.2 | ~0.2 | ~0.1 | ~0.1 | ~0.1 | ~0.7 | ~0.7 | ~0.6 | ~0.6 | ~0.6 | ~0.5 |
| 27 | ~0.1 | ~0.02 | ~0.05 | ~0.05 | ~0.02 | ~0.05 | ~0.02 | ~0.05 | ~0.1 | ~0.1 | ~0.1 | ~0.05 | ~0.05 | ~0.05 | ~0.3 | ~0.3 | ~0.2 | ~0.2 | ~0.2 | ~0.1 |
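
The estimated weights in the table can be queried directly. The sketch below transcribes the approximate values (tilde dropped, so they remain visual estimates) and reports, for each sampled layer, the highest-weighted column. Because labels such as "13" and "is" appear twice, it keys on column position rather than label. The names `TOKENS`, `ROWS`, and `top_token` are illustrative.

```python
TOKENS = ["A and B", "*", "8", "13", "[in", "boxed", "]", "And", "the_correct",
          "choice", "is", "D", "13", "The", "answer", "is", "$boxed", "{",
          "return", "}"]

# Approximate weights transcribed from the table, one list per sampled layer.
ROWS = {
    1: [0.02] + [0.01] * 19,
    5: [0.03] + [0.02] * 19,
    10: [0.05, 0.03, 0.04, 0.04, 0.04, 0.04, 0.03, 0.04, 0.04, 0.04,
         0.04, 0.04, 0.04, 0.04, 0.04, 0.04, 0.03, 0.03, 0.03, 0.03],
    13: [0.75, 0.2, 0.6, 0.65, 0.5, 0.6, 0.4, 0.5, 0.5, 0.5,
         0.5, 0.5, 0.5, 0.5, 0.5, 0.5, 0.4, 0.4, 0.4, 0.4],
    17: [0.4, 0.1, 0.3, 0.3, 0.2, 0.3, 0.2, 0.3, 0.6, 0.6,
         0.6, 0.5, 0.4, 0.4, 0.5, 0.5, 0.3, 0.3, 0.3, 0.3],
    22: [0.2, 0.05, 0.1, 0.1, 0.05, 0.1, 0.05, 0.1, 0.2, 0.2,
         0.2, 0.1, 0.1, 0.1, 0.7, 0.7, 0.6, 0.6, 0.6, 0.5],
    27: [0.1, 0.02, 0.05, 0.05, 0.02, 0.05, 0.02, 0.05, 0.1, 0.1,
         0.1, 0.05, 0.05, 0.05, 0.3, 0.3, 0.2, 0.2, 0.2, 0.1],
}


def top_token(layer):
    """Return (column_index, label, weight) of the largest weight in a layer.

    max() returns the first maximal index, so ties resolve to the leftmost column.
    """
    row = ROWS[layer]
    best = max(range(len(row)), key=lambda j: row[j])
    return best, TOKENS[best], row[best]


for layer in sorted(ROWS):
    print(layer, top_token(layer))

# Sampled layers ranked by their single largest weight.
peak_layers = sorted(ROWS, key=lambda layer: max(ROWS[layer]), reverse=True)[:3]
print(peak_layers)  # [13, 22, 17]
```

On these estimates the top column moves from "A and B" (layer 13) to "the_correct" (layer 17) and then to "answer" (layers 22 and 27). The three sampled layers with the largest single weights are 13, 22, and 17.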

### Key Observations

* The attention weights are not uniform. Certain layers and tokens receive significantly higher attention than others.

* The attention pattern appears to evolve as information propagates through the layers. Earlier layers (around 11-15) focus on "A and B", "8", "13", "[in", "boxed", "]", while later layers shift attention to "answer", "is", "$boxed", "{", "return", "}".

* The highest attention weights are concentrated in layers 13, 17, and 22, suggesting these layers are crucial for processing the input sequence.

### Interpretation

This heatmap likely represents the attention mechanism within a transformer-based model, possibly used for question answering or code generation. The model appears to initially focus on the input context ("A and B", "8", "13", "[in", "boxed", "]"), then shifts its attention towards identifying the correct answer and the subsequent actions ("answer", "is", "$boxed", "{", "return", "}").

The evolution of attention weights suggests a hierarchical processing of information. The initial layers extract basic features, while deeper layers combine these features to make more informed decisions. The peaks in attention weights indicate the parts of the input sequence that are most relevant for the model's task.

The fact that attention is focused on "$boxed", "{", and "return" in the later layers suggests the model is preparing to execute or return a result, potentially within a programming context. The presence of these tokens indicates the model is not just identifying the answer but also considering the actions needed to present or utilize it.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED


## Heatmap: Token Activation Across Neural Network Layers

### Overview

The image is a heatmap visualization depicting the activation intensity (likely attention weights or neuron activations) of individual tokens from a mathematical expression across the 35 layers of a neural network model. The visualization uses a color gradient to represent numerical values, with darker colors indicating higher values.

### Components/Axes

* **Chart Type:** Heatmap.

* **X-Axis (Horizontal):** Represents a sequence of tokens from a mathematical statement. The tokens are, from left to right:

`A`, `and`, `B`, `=`, `8`, `+`, `5`, `=`, `13`, `\boxed{`, `13`, `yin`, `yin`, `the`, `correct`, `choice`, `is`, `(`, `D`, `)`, `13`, `.`, `The`, `final`, `answer`, `is`, `\boxed{`, `(`, `D`, `)`, `}`, `\text{final}`.

* **Language Note:** The tokens include English words (`and`, `the`, `correct`, `choice`, `is`, `final`, `answer`), mathematical symbols (`=`, `+`, `(`, `)`), numbers (`8`, `5`, `13`), LaTeX commands (`\boxed`, `\text`), and what appear to be model-specific or tokenized representations (`yin`, `yin`).

* **Y-Axis (Vertical):** Labeled "i-th Layer". It is a linear scale representing the layer number in the neural network, ranging from 1 at the bottom to 35 at the top, with major tick marks every 2 layers (1, 3, 5, ..., 35).

* **Color Scale/Legend:** Located on the far right of the chart. It is a vertical bar showing a gradient from light yellow/cream at the bottom (labeled `0.0`) to dark brown at the top (labeled `1.0`). Intermediate labels are `0.2`, `0.4`, `0.6`, `0.8`. This scale maps the color of each cell in the heatmap to a numerical value between 0 and 1.

### Detailed Analysis

* **Spatial Layout:** The heatmap is a grid where each column corresponds to a token on the x-axis and each row corresponds to a layer on the y-axis. The color of each cell indicates the activation value for that token at that layer.

* **Data Trend & Value Extraction:**

* **General Trend:** Activation values are highest (dark brown, ~0.8-1.0) in the lowest layers (approximately layers 1-15) across nearly all tokens. This forms a solid dark band at the bottom of the chart.

* **Layer-Specific Patterns:**

* **Layers 1-15:** Almost uniformly high activation (dark brown) for all tokens. Values are consistently near 1.0.

* **Layers 16-20:** Activation begins to differentiate. Some tokens retain high values (e.g., `A`, `B`, `=`, `8`, `+`, `5`, `=`, `13`), while others drop to medium (orange, ~0.4-0.6) or low (light yellow, ~0.0-0.2) values.

* **Layers 21-35:** Activation becomes highly token-specific. A pattern of "spikes" of high activation appears for certain tokens at specific higher layers.

* **Token-Specific High-Activation Points (Approximate):**

* `A`: High activation persists up to ~Layer 27.

* `and`: Notable high activation spike at ~Layer 23.

* `B`: High activation persists up to ~Layer 29.

* `=` (first): High activation spike at ~Layer 33.

* `8`: High activation spike at ~Layer 27.

* `+`: High activation spike at ~Layer 25.

* `5`: High activation spike at ~Layer 33.

* `=` (second): High activation spike at ~Layer 29.

* `13` (first): High activation spike at ~Layer 25.

* `\boxed{`: High activation spike at ~Layer 21.

* `13` (second): High activation spike at ~Layer 27.

* `yin` (both): Show medium-high activation (~0.6-0.8) in layers 25-31.

* `the`: High activation spike at ~Layer 29.

* `correct`: High activation spike at ~Layer 27.

* `choice`: High activation spike at ~Layer 25.

* `is` (first): High activation spike at ~Layer 23.

* `(`: High activation spike at ~Layer 31.

* `D`: Shows a distinct vertical band of medium-high activation from ~Layer 23 to Layer 31.

* `)` (first): High activation spike at ~Layer 31.

* `13` (third): High activation spike at ~Layer 29.

* `.`: High activation spike at ~Layer 25.

* `The`: High activation spike at ~Layer 23.

* `final`: High activation spike at ~Layer 25.

* `answer`: High activation spike at ~Layer 23.

* `is` (second): High activation spike at ~Layer 21.

* `\boxed{` (second): High activation spike at ~Layer 21.

* `(` (second): High activation spike at ~Layer 31.

* `D` (second): High activation spike at ~Layer 31.

* `)` (second): High activation spike at ~Layer 31.

* `}`: High activation spike at ~Layer 29.

* `\text{final}`: High activation spike at ~Layer 27.
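
Grouping the approximate spike layers listed above by layer gives a quick count of how many tokens peak at each depth. Only tokens described as having a "spike" are included. Those described as persistent bands (`A`, `B`, both `yin`, and the first `D`) are left out. The dictionary keys are illustrative labels that distinguish repeated tokens.

```python
from collections import defaultdict

# Approximate spike layers transcribed from the list above.
SPIKES = {
    "and": 23, "= (first)": 33, "8": 27, "+": 25, "5": 33,
    "= (second)": 29, "13 (first)": 25, "\\boxed{ (first)": 21,
    "13 (second)": 27, "the": 29, "correct": 27, "choice": 25,
    "is (first)": 23, "( (first)": 31, ") (first)": 31, "13 (third)": 29,
    ".": 25, "The": 23, "final": 25, "answer": 23, "is (second)": 21,
    "\\boxed{ (second)": 21, "( (second)": 31, "D (second)": 31,
    ") (second)": 31, "}": 29, "\\text{final}": 27,
}


def tokens_by_spike_layer(spikes):
    """Invert a token -> layer mapping into layer -> [tokens], sorted by layer.

    Tokens keep their insertion (left-to-right) order within each layer.
    """
    groups = defaultdict(list)
    for token, layer in spikes.items():
        groups[layer].append(token)
    return dict(sorted(groups.items()))


for layer, toks in tokens_by_spike_layer(SPIKES).items():
    print(layer, len(toks), toks)
```

Of the 27 spiking tokens, no layer hosts more than 5 (layers 25 and 31), and layer 33 hosts only 2. This fits the description of sparse, token-specific activation in the upper layers.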

### Key Observations

1. **Low-Layer Uniformity:** The foundational layers (1-15) show uniformly high activation for all tokens, suggesting these layers process basic, shared features of the input sequence.

2. **Mid-Layer Differentiation:** Around layers 16-20, the model begins to assign different importance levels to different tokens.

3. **High-Layer Specialization:** In the upper layers (21-35), activation is highly sparse and token-specific. Only a few tokens show high activation at any given layer, indicating specialized processing or decision-making at these depths.

4. **Key Token Highlighting:** Tokens crucial to the mathematical reasoning and final answer (`=`, `+`, numbers, `D`, parentheses, `\boxed`) show repeated high-activation spikes in the upper layers. The token `D` is particularly notable for having a sustained band of elevated activation.

5. **Structural Token Processing:** Syntactic or structural tokens like `\boxed{`, `(`, `)`, and `.` also show high activation in upper layers, indicating the model is attending to the format and structure of the answer.

### Interpretation

This heatmap likely visualizes the **attention pattern** or **activation strength** of a transformer-based language model solving a math word problem. The token sequence represents the problem statement and the model's generated chain-of-thought solution, which leads to the final answer `\boxed{(D)}`.

* **What the data suggests:** The model's processing follows a clear hierarchical pattern. Early layers handle universal token representation. Mid-layers begin parsing the problem's structure. Upper layers perform highly focused, token-specific computation, repeatedly "attending to" or "activating on" the key numerical values (`8`, `5`, `13`), operators (`+`, `=`), and the final answer choice (`D`) to verify and construct the solution.

* **How elements relate:** The x-axis sequence tells a story: from computing the result (`A and B = 8 + 5 = 13`), to stating the selected choice (`the correct choice is (D) 13`), to formatting the final answer (`The final answer is \boxed{(D)}`). The y-axis shows *when* (at what processing depth) each part of this story is most important. The color intensity shows *how important* it is.

* **Notable Patterns/Anomalies:**

* The token `D` has a unique, sustained activation profile, suggesting it is a critical pivot point in the model's reasoning.

* The repetition of high activation for `\boxed{` and parentheses in the final layers indicates the model is strongly focused on producing the answer in the correct, boxed format.

* The tokens `yin yin` are anomalous; their medium-high activation in mid-upper layers is unexplained by the visible math problem and may be an artifact of tokenization, a model-internal token, or a misalignment in the visualization.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: Distribution of Terms Across Layers

### Overview

The image is a heatmap visualizing the distribution of specific textual terms across 35 layers (y-axis) and 15 categories (x-axis). The color intensity ranges from dark brown (0.0) to light yellow (1.0), indicating the magnitude of values associated with each term-layer combination.

### Components/Axes

- **Y-axis (i-th Layer)**: Labeled "i-th Layer" with values from 1 to 35, representing discrete layers.

- **X-axis (Categories)**: Labeled with the following terms (left to right):

1. "A and B = 8 + 5 = 13"

2. "fun"

3. "boxed"

4. "13"

5. "in"

6. "July"

7. "Thus"

8. "the correct choice is"

9. "D"

10. "13 ."

11. "The final answer is"

12. "$boxed"

13. "13"

14. "D"

15. "$return>"

- **Color Legend**: Located on the right, with a gradient from dark brown (0.0) to light yellow (1.0). The legend is labeled with numerical values (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

### Detailed Analysis

- **X-axis Categories**:

- "A and B = 8 + 5 = 13": High values (light yellow) in layers 33–35, with a peak at layer 33.

- "boxed": High values in layers 33–35, with a peak at layer 33.

- "13": Moderate values (orange) in layers 15–25, with a peak at layer 25.

- "in", "July", "Thus": Low values (dark brown) across most layers, with slight increases in layers 25–30.

- "the correct choice is": High values in layers 33–35, with a peak at layer 33.

- "D": High values in layers 33–35, with a peak at layer 33.

- "13 .": Moderate values in layers 15–25, with a peak at layer 25.

- "The final answer is": High values in layers 33–35, with a peak at layer 33.

- "$boxed": High values in layers 33–35, with a peak at layer 33.

- "$return>": High values in layers 33–35, with a peak at layer 33.

- **Y-axis Layers**:

- Layers 1–10: Dominated by dark brown (low values) across most categories.

- Layers 15–25: Moderate values (orange) in "13", "13 .", and "D".

- Layers 33–35: High values (light yellow) in "A and B = 8 + 5 = 13", "boxed", "the correct choice is", "D", "$boxed", and "$return>".

### Key Observations

1. **Peaks in Top Layers**: The highest values (light yellow) are concentrated in layers 33–35 for categories like "A and B = 8 + 5 = 13", "boxed", "the correct choice is", "D", "$boxed", and "$return>".

2. **Moderate Values in Middle Layers**: Layers 15–25 show moderate values (orange) for "13", "13 .", and "D".

3. **Low Values in Bottom Layers**: Layers 1–10 are predominantly dark brown, indicating minimal activity or significance.

4. **Anomalies**: The category "13" has a distinct peak at layer 25, while "D" shows peaks at layers 25 and 33.

### Interpretation

The heatmap suggests a hierarchical or layered structure where certain terms (e.g., mathematical expressions, final answers) gain prominence in higher layers. The repeated appearance of "13" and "D" in middle and top layers may indicate critical nodes or decision points. The dominance of dark brown in lower layers implies these layers are less dynamic or less significant in the context of the data. The peaks in layers 33–35 for terms like "the final answer is" and "$return>" suggest these layers represent conclusions or outputs in a process. The use of mathematical notation (e.g., "A and B = 8 + 5 = 13") and programming-like syntax (e.g., "$boxed", "$return>") hints at a computational or algorithmic context, possibly related to code analysis or natural language processing.
