## Line Chart: NDCG@10 vs. Embedding Dimensions

### Overview

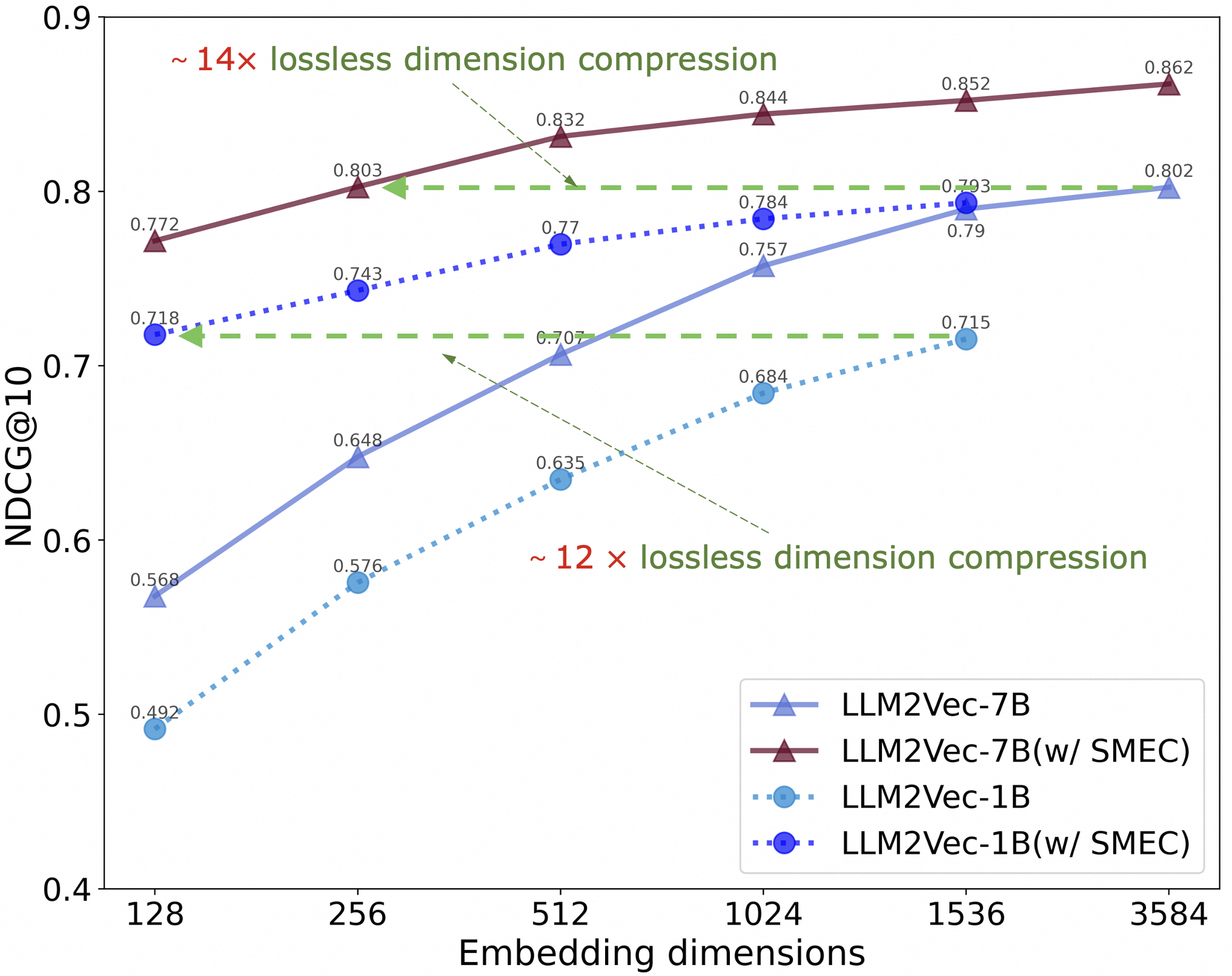

This line chart plots NDCG@10 against embedding dimension for four configurations: LLM2Vec-7B and LLM2Vec-1B, each with and without SMEC. It shows how retrieval performance changes as the dimensionality of the embeddings increases. Two annotations mark lossless dimension compression ratios of about 14x and 12x.

### Components/Axes

* **X-axis:** Embedding dimensions, ranging from 128 to 3584. Markers are placed at 128, 256, 512, 1024, 1536, and 3584.

* **Y-axis:** NDCG@10, ranging from 0.4 to 0.9.

* **Legend:** Located in the bottom-right corner.

    * LLM2Vec-7B (Solid Blue Line)
    * LLM2Vec-7B(w/ SMEC) (Solid Red Line)
    * LLM2Vec-1B (Dashed Blue Line)
    * LLM2Vec-1B(w/ SMEC) (Dashed Green Line)

* **Annotations:**

    * “~14x lossless dimension compression” – positioned above the LLM2Vec-7B and LLM2Vec-7B(w/ SMEC) lines.
    * “~12x lossless dimension compression” – positioned below the LLM2Vec-1B and LLM2Vec-1B(w/ SMEC) lines.

### Detailed Analysis

Here's a breakdown of the data series and their values:

* **LLM2Vec-7B (Solid Blue Line):** This line slopes upward, indicating increasing NDCG@10 with increasing embedding dimensions.

    * 128: 0.492
    * 256: 0.576
    * 512: 0.648
    * 1024: 0.743
    * 1536: 0.77
    * 3584: 0.803

* **LLM2Vec-7B(w/ SMEC) (Solid Red Line):** This line also slopes upward, consistently outperforming LLM2Vec-7B.

    * 128: 0.718
    * 256: 0.803
    * 512: 0.832
    * 1024: 0.844
    * 1536: 0.852
    * 3584: 0.862

* **LLM2Vec-1B (Dashed Blue Line):** This line trends upward overall, with a small dip at 512 dimensions. By the values listed here, it sits above LLM2Vec-7B at 128 and 256 dimensions and below it from 512 dimensions onward.

    * 128: 0.568
    * 256: 0.648
    * 512: 0.635
    * 1024: 0.684
    * 1536: 0.715
    * 3584: 0.757

* **LLM2Vec-1B(w/ SMEC) (Dashed Green Line):** This line slopes upward, and consistently outperforms LLM2Vec-1B.

    * 128: 0.707
    * 256: 0.73
    * 512: 0.77
    * 1024: 0.784
    * 1536: 0.793
    * 3584: 0.802
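The series above can be collected into a small table to check the comparisons directly. The sketch below is illustrative only: the numbers are transcribed from the lists above (approximate chart readings), and the names `DIMS`, `SCORES`, and `smec_gain` are assumptions introduced here, not part of any SMEC or LLM2Vec code.

```python
# NDCG@10 values transcribed from the lists above (approximate chart readings).
DIMS = [128, 256, 512, 1024, 1536, 3584]
SCORES = {
    "LLM2Vec-7B": [0.492, 0.576, 0.648, 0.743, 0.770, 0.803],
    "LLM2Vec-7B(w/ SMEC)": [0.718, 0.803, 0.832, 0.844, 0.852, 0.862],
    "LLM2Vec-1B": [0.568, 0.648, 0.635, 0.684, 0.715, 0.757],
    "LLM2Vec-1B(w/ SMEC)": [0.707, 0.730, 0.770, 0.784, 0.793, 0.802],
}


def smec_gain(base):
    """Absolute NDCG@10 gain of the SMEC variant over `base` at each dimension."""
    smec = SCORES[base + "(w/ SMEC)"]
    return {d: round(s - b, 3) for d, b, s in zip(DIMS, SCORES[base], smec)}


for base in ("LLM2Vec-7B", "LLM2Vec-1B"):
    print(base, smec_gain(base))
```

For these readings, the gain from SMEC is positive at every dimension and smallest at 3584 for both model sizes.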

### Key Observations

* The models with SMEC consistently outperform their counterparts without SMEC across all embedding dimensions.

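* With SMEC, LLM2Vec-7B outperforms LLM2Vec-1B at every dimension. Without SMEC, the 7B model leads from 512 dimensions upward, but the listed values place the 1B model ahead at 128 and 256 dimensions.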

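* The rate of improvement in NDCG@10 diminishes as embedding dimensions increase, particularly for the models with SMEC. For example, LLM2Vec-7B with SMEC gains 0.085 from 128 to 256 dimensions but only 0.010 from 1536 to 3584.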

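* The annotations claim lossless dimension compression of approximately 14x for LLM2Vec-7B and 12x for LLM2Vec-1B. For the 7B model the listed values are consistent with this: with SMEC, the 256-dimensional embedding scores 0.803, matching the baseline at 3584 dimensions (3584 / 256 = 14).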
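The 14x figure for the 7B model can be checked against the listed values. The sketch below assumes that "lossless" means the smallest SMEC dimension whose NDCG@10 is at least the baseline's score at the largest dimension; that criterion and the function name `lossless_compression` are assumptions made here for illustration, not a definition taken from the chart. The 12x annotation for the 1B model is not checked, since the description does not state which reference dimension or tolerance the chart uses for it.

```python
# Values for the 7B model, transcribed from the Detailed Analysis lists.
DIMS = [128, 256, 512, 1024, 1536, 3584]
BASE_7B = [0.492, 0.576, 0.648, 0.743, 0.770, 0.803]
SMEC_7B = [0.718, 0.803, 0.832, 0.844, 0.852, 0.862]


def lossless_compression(dims, base, smec):
    """Return (dim, ratio) for the smallest SMEC dimension that matches
    the baseline's score at the largest dimension, or None if none does."""
    full_dim, target = dims[-1], base[-1]
    for d, score in zip(dims, smec):
        if score >= target:
            return d, full_dim / d
    return None


print(lossless_compression(DIMS, BASE_7B, SMEC_7B))  # (256, 14.0)
```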

### Interpretation

The data suggests that increasing the embedding dimension generally improves retrieval performance as measured by NDCG@10, but with diminishing returns: the curves flatten at higher dimensions, where further increases yield only small gains. The consistent improvement from SMEC indicates that the technique improves embedding quality, most visibly at low dimensions. According to the annotations, the SMEC variants can match uncompressed performance at a fraction of the dimensionality, which reduces storage and computational costs. The difference in compression ratios between the 7B and 1B models could be due to differences in model architecture or training data.
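To make the storage benefit concrete, the following back-of-the-envelope sketch compares the raw size of an embedding index at 3584 and 256 dimensions. The corpus size of one million vectors, float32 storage (4 bytes per value), and the helper name `index_size_bytes` are assumptions chosen for illustration; real indexes add overhead that is not counted here.

```python
BYTES_PER_FLOAT32 = 4  # assumed storage format; not stated in the chart


def index_size_bytes(num_vectors, dim, bytes_per_value=BYTES_PER_FLOAT32):
    """Raw size of a dense embedding matrix, ignoring index overhead."""
    return num_vectors * dim * bytes_per_value


num_vectors = 1_000_000  # hypothetical corpus size
full = index_size_bytes(num_vectors, 3584)
small = index_size_bytes(num_vectors, 256)

print(f"3584 dims: {full / 1e9:.3f} GB")   # 14.336 GB
print(f" 256 dims: {small / 1e9:.3f} GB")  # 1.024 GB
print(f"ratio: {full / small:.0f}x")       # 14x
```

The cost of a brute-force dot-product search scales linearly with the dimension in the same way, so the same ratio applies to per-query multiply-add operations under these assumptions.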