
## Diagram: System Architecture for Model Training and Inference

### Overview

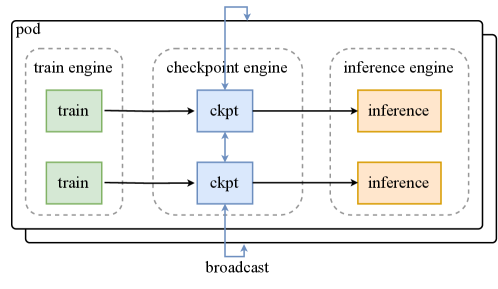

The image depicts a system architecture diagram illustrating the flow of data between a "train engine", a "checkpoint engine", and an "inference engine", all contained within a "pod". The diagram shows how the output of training is passed to the checkpoint engine and how the resulting checkpoints are then used for inference.

### Components/Axes

The diagram consists of three main components, each enclosed in a dashed-line box:

* **Train Engine:** Contains two instances labeled "train".

* **Checkpoint Engine:** Contains two instances labeled "ckpt".

* **Inference Engine:** Contains two instances labeled "inference".

Additionally, the entire system is contained within a box labeled "pod". Arrows indicate the direction of data flow. Two elements point outside the engines: an arrow labeled "broadcast" pointing upward, and a connection from the "pod" to an external source.

### Detailed Analysis or Content Details

The diagram shows the following data flow:

1. **Train Engine to Checkpoint Engine:** Each of the two "train" instances in the "train engine" sends data to one of the two "ckpt" instances in the "checkpoint engine".

2. **Checkpoint Engine to Inference Engine:** Each "ckpt" instance in the "checkpoint engine" sends data to one of the two "inference" instances in the "inference engine".

3. **Broadcast:** A single arrow labeled "broadcast" points upwards from the "checkpoint engine".

4. **Pod:** The entire system is contained within a box labeled "pod".
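The routing in steps 1 and 2 can be sketched as a small data model. This is a minimal illustration, assuming that the i-th instance of each engine feeds the i-th instance of the next engine (the diagram shows one-to-one arrows but does not name individual instances). The names `Engine`, `build_pod`, `one_to_one_edges`, and `data_flow`, and instance labels such as `train-0`, are hypothetical. The "broadcast" arrow is not modeled here.

```python
from dataclasses import dataclass, field


@dataclass
class Engine:
    """One dashed box in the diagram: a named engine holding its instances."""
    name: str
    instances: list[str] = field(default_factory=list)


def build_pod() -> dict[str, Engine]:
    # Hypothetical pod with two instances per engine, mirroring the diagram.
    return {
        "train": Engine("train engine", ["train-0", "train-1"]),
        "ckpt": Engine("checkpoint engine", ["ckpt-0", "ckpt-1"]),
        "inference": Engine("inference engine", ["inference-0", "inference-1"]),
    }


def one_to_one_edges(src: Engine, dst: Engine) -> list[tuple[str, str]]:
    # Assumption: the i-th source instance sends to the i-th destination instance.
    if len(src.instances) != len(dst.instances):
        raise ValueError("engines must have the same number of instances")
    return list(zip(src.instances, dst.instances))


def data_flow(pod: dict[str, Engine]) -> list[tuple[str, str]]:
    # Step 1: train -> ckpt; step 2: ckpt -> inference.
    edges = one_to_one_edges(pod["train"], pod["ckpt"])
    edges += one_to_one_edges(pod["ckpt"], pod["inference"])
    return edges


if __name__ == "__main__":
    for src, dst in data_flow(build_pod()):
        print(f"{src} -> {dst}")
```

Running it prints four arrows: `train-0 -> ckpt-0`, `train-1 -> ckpt-1`, `ckpt-0 -> inference-0`, and `ckpt-1 -> inference-1`. The equal-length check makes the one-to-one assumption explicit; a fan-in or fan-out topology would need a different pairing rule.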

There are no numerical values or scales present in the diagram. The diagram is purely conceptual, illustrating the relationships between the components.

### Key Observations

The diagram highlights a parallel processing architecture. There are two instances of each component ("train", "ckpt", "inference"), suggesting that the system can handle multiple training or inference tasks concurrently. The "broadcast" label suggests that checkpoint information is being disseminated to other parts of the system or to external consumers.

### Interpretation

This diagram represents a system designed for machine learning model training and deployment. The "train engine" is responsible for training the model, the "checkpoint engine" likely saves the model's state (checkpoints) periodically, and the "inference engine" uses these checkpoints to make predictions. The "pod" likely refers to a containerization unit (as in Kubernetes), encapsulating all these components for easy deployment and scaling. The parallel instances of each component suggest a focus on scalability and throughput. The "broadcast" mechanism could be used for monitoring, model versioning, or distributing checkpoints to multiple inference servers. Overall, the diagram emphasizes a pipeline architecture in which data flows sequentially through the training, checkpointing, and inference stages.
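The interpretation above can be made concrete with a toy, single-process sketch. It assumes, as the interpretation does, that the checkpoint engine snapshots training state at a fixed interval and that "broadcast" means pushing each snapshot to every inference server. The classes `Checkpoint`, `CheckpointEngine`, and `InferenceServer`, the `every` interval, and the one-parameter model are all hypothetical and do not come from the diagram.

```python
import copy
from dataclasses import dataclass
from typing import Optional


@dataclass
class Checkpoint:
    step: int
    weights: dict[str, float]


@dataclass
class InferenceServer:
    name: str
    checkpoint: Optional[Checkpoint] = None

    def load(self, ckpt: Checkpoint) -> None:
        self.checkpoint = ckpt

    def predict(self, x: float) -> float:
        # Toy model: y = w * x, using the most recently loaded checkpoint.
        if self.checkpoint is None:
            raise RuntimeError(f"{self.name} has no checkpoint loaded")
        return self.checkpoint.weights["w"] * x


class CheckpointEngine:
    def __init__(self, every: int) -> None:
        self.every = every  # hypothetical checkpoint interval, in training steps
        self.subscribers: list[InferenceServer] = []
        self.history: list[Checkpoint] = []

    def subscribe(self, server: InferenceServer) -> None:
        self.subscribers.append(server)

    def maybe_checkpoint(self, step: int, weights: dict[str, float]) -> Optional[Checkpoint]:
        if step % self.every != 0:
            return None
        # Deep-copy so that later training steps do not mutate a published checkpoint.
        ckpt = Checkpoint(step, copy.deepcopy(weights))
        self.history.append(ckpt)
        # "Broadcast": every subscribed inference server receives the same checkpoint.
        for server in self.subscribers:
            server.load(ckpt)
        return ckpt


def train(num_steps: int, lr: float, ckpt_engine: CheckpointEngine) -> dict[str, float]:
    # Gradient descent on the toy loss (w - 2)^2, starting from w = 0.
    weights = {"w": 0.0}
    for step in range(1, num_steps + 1):
        grad = 2.0 * (weights["w"] - 2.0)
        weights["w"] -= lr * grad
        ckpt_engine.maybe_checkpoint(step, weights)
    return weights


if __name__ == "__main__":
    engine = CheckpointEngine(every=5)
    servers = [InferenceServer("inference-0"), InferenceServer("inference-1")]
    for s in servers:
        engine.subscribe(s)
    train(num_steps=10, lr=0.1, ckpt_engine=engine)
    print([c.step for c in engine.history])  # checkpoints taken at steps 5 and 10
    print([s.predict(1.0) for s in servers])  # both servers use the step-10 weights
```

Copying the weights at checkpoint time keeps a published checkpoint stable while training continues. A real deployment would typically write checkpoints to shared storage or transfer them over the network rather than share in-process objects.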