## System Diagram: Pod with Train, Checkpoint, and Inference Engines

### Overview

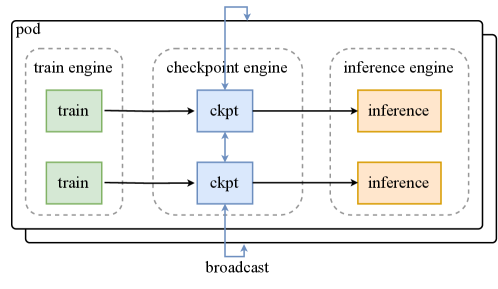

The image is a system diagram illustrating the architecture of a "pod" containing three engines: a train engine, a checkpoint engine, and an inference engine. The diagram shows the flow of data between these engines, including a broadcast mechanism.

### Components

* **Pod:** The outermost container, encapsulating the three engines.

* **Train Engine:** Contains two "train" blocks.

* **Checkpoint Engine:** Contains two "ckpt" blocks.

* **Inference Engine:** Contains two "inference" blocks.

* **Arrows:** Indicate the direction of data flow.

* **Broadcast:** A labeled path attached to the "ckpt" blocks, indicating a mechanism for distributing data.

### Detailed Analysis

* **Train Engine:**

    * Contains two green blocks labeled "train".

    * The train engine is enclosed in a dashed-line box labeled "train engine".

* **Checkpoint Engine:**

    * Contains two blue blocks labeled "ckpt".

    * The checkpoint engine is enclosed in a dashed-line box labeled "checkpoint engine".

    * The two "ckpt" blocks are connected by a bidirectional arrow.

* **Inference Engine:**

    * Contains two orange blocks labeled "inference".

    * The inference engine is enclosed in a dashed-line box labeled "inference engine".

* **Data Flow:**

    * Data flows from each "train" block to its corresponding "ckpt" block.

    * Data flows from each "ckpt" block to its corresponding "inference" block.

    * Data flows in both directions between the two "ckpt" blocks.

    * A path labeled "broadcast" links the "ckpt" blocks to the top edge of the pod: one line runs from the bottom "ckpt" block, and another loops from the top "ckpt" block.
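The data flow listed above can be written down as a small directed graph, which makes it easy to check what each block can reach. This is only a sketch of the diagram as described: the `_0`/`_1` suffixes are hypothetical names added to tell the two rows apart (the diagram labels the blocks only "train", "ckpt", and "inference"), and the "broadcast" path is left out because its endpoints are not fully clear from the diagram.

```python
from collections import defaultdict

# Hypothetical node names: suffix _0 is the top row, _1 the bottom row.
EDGES = [
    ("train_0", "ckpt_0"),      # train -> ckpt, top row
    ("train_1", "ckpt_1"),      # train -> ckpt, bottom row
    ("ckpt_0", "inference_0"),  # ckpt -> inference, top row
    ("ckpt_1", "inference_1"),  # ckpt -> inference, bottom row
    ("ckpt_0", "ckpt_1"),       # bidirectional arrow between ckpt blocks,
    ("ckpt_1", "ckpt_0"),       # stored as two directed edges
]


def build_graph(edges):
    """Return an adjacency map {source: set of destinations}."""
    graph = defaultdict(set)
    for src, dst in edges:
        graph[src].add(dst)
    return graph


def reachable(graph, start):
    """Return every node that can be reached from `start` by following edges."""
    seen = set()
    stack = [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


GRAPH = build_graph(EDGES)

if __name__ == "__main__":
    print(sorted(reachable(GRAPH, "train_0")))
```

Because of the link between the two "ckpt" blocks, output from either "train" block can reach both "inference" blocks in this model, while the "inference" blocks have no outgoing edges.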

### Key Observations

* The diagram illustrates a parallel architecture with two paths from training to inference; the paths are linked at the checkpoint stage by the bidirectional arrow between the "ckpt" blocks.

* The checkpoint engine acts as an intermediary between the train and inference engines.

* The broadcast mechanism suggests a way to share checkpoint data.

### Interpretation

The diagram represents a system designed for machine learning workflows. The train engine likely performs model training, the checkpoint engine saves and manages model states, and the inference engine uses the trained models for prediction. The bidirectional flow between the "ckpt" blocks suggests a synchronization or merging of checkpoints. The broadcast mechanism could be used to distribute the latest checkpoint to all inference engines or to other parts of the system. The parallel structure implies that multiple models or data subsets can be processed simultaneously.
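One way to picture this interpretation is a toy update cycle in which each "train" block hands a shard of weights to its "ckpt" block, the "ckpt" blocks synchronize, and each "inference" block loads the result. Everything in the sketch below is an assumption made for illustration: the class names, the `sync` step standing in for the ckpt-to-ckpt exchange, and the use of plain dictionaries as weights. It is not taken from the diagram or from any real system.

```python
from dataclasses import dataclass, field


@dataclass
class CheckpointWorker:
    """Hypothetical stand-in for one "ckpt" block."""
    name: str
    weights: dict = field(default_factory=dict)

    def receive(self, shard):
        # Accept a shard of weights from the paired "train" block.
        self.weights.update(shard)


@dataclass
class InferenceWorker:
    """Hypothetical stand-in for one "inference" block."""
    name: str
    weights: dict = field(default_factory=dict)

    def load(self, weights):
        # Copy so later checkpoint changes do not alter served weights.
        self.weights = dict(weights)


def sync(ckpt_workers):
    """Merge all shards so every checkpoint worker holds the full set.

    Assumed behavior for the ckpt <-> ckpt arrow; the diagram does not
    say what is actually exchanged.
    """
    merged = {}
    for worker in ckpt_workers:
        merged.update(worker.weights)
    for worker in ckpt_workers:
        worker.weights = dict(merged)
    return merged


def run_update(train_shards, ckpt_workers, inference_workers):
    """One cycle: train -> ckpt, ckpt <-> ckpt, ckpt -> inference."""
    for shard, ckpt in zip(train_shards, ckpt_workers):
        ckpt.receive(shard)
    sync(ckpt_workers)
    for ckpt, inf in zip(ckpt_workers, inference_workers):
        inf.load(ckpt.weights)


if __name__ == "__main__":
    ckpts = [CheckpointWorker("ckpt_0"), CheckpointWorker("ckpt_1")]
    infs = [InferenceWorker("inference_0"), InferenceWorker("inference_1")]
    run_update([{"layer0": 1.0}, {"layer1": 2.0}], ckpts, infs)
    print(infs[0].weights, infs[1].weights)
```

Under these assumptions, both inference workers end the cycle holding the weights produced by both train blocks, which matches the reading that the checkpoint stage shares state across the two paths.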