
## Diagram: Attention Mechanism Illustration

### Overview

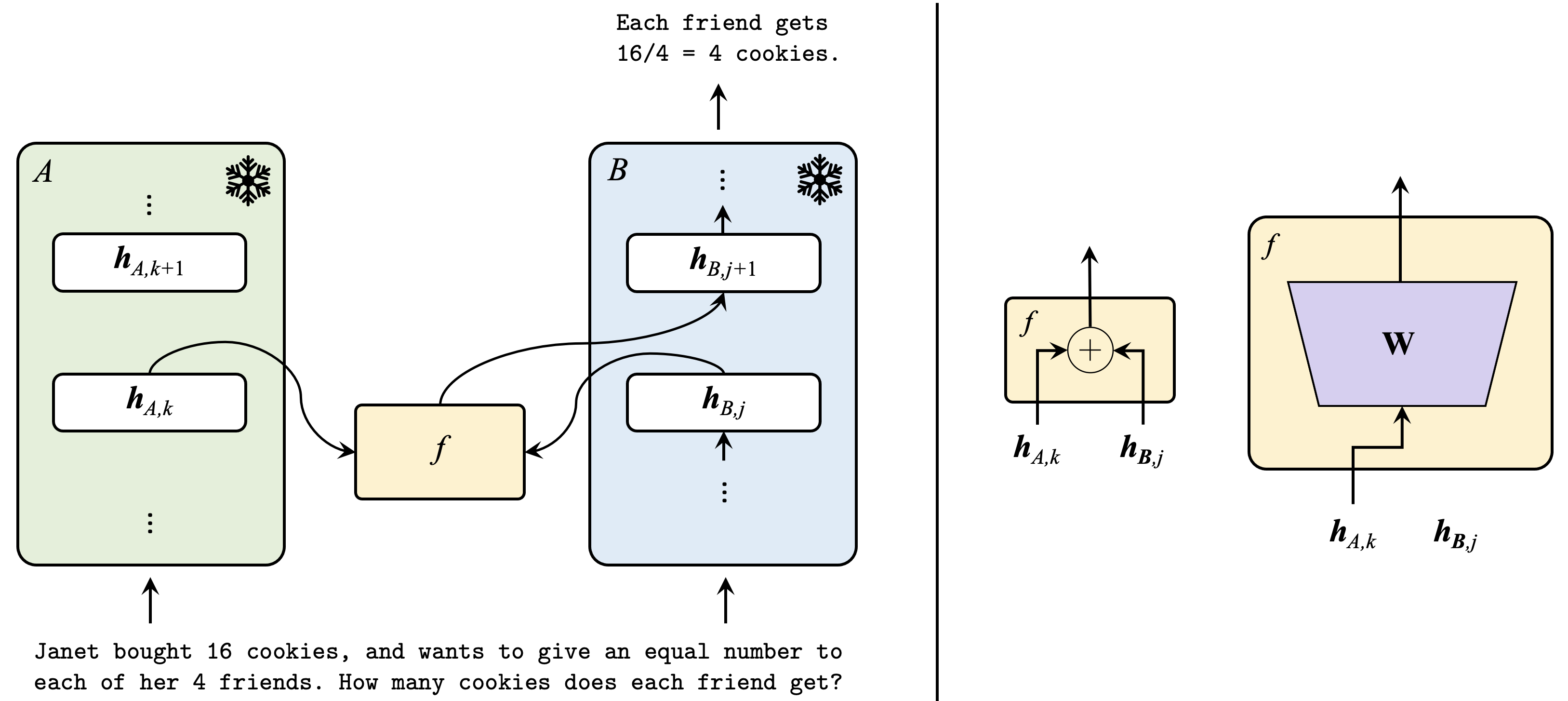

The image depicts a diagram illustrating an attention mechanism, likely within a neural network architecture. It shows two sequences, A and B, and how attention is applied to relate elements between them. The diagram is split into two main sections, with the left side showing the sequence processing and the right side illustrating the attention weight calculation. A simple word problem is included at the bottom.

### Components

The diagram consists of the following components:

* **Sequences A and B:** Represented by yellow rectangles, with ellipsis marks indicating continuation.

* **Hidden States:** Represented by rounded rectangles labeled `h_A,k` and `h_B,j`. The subscripts indicate the position within the respective sequence. `h_A,k+1` and `h_B,j+1` are also shown.

* **Function f:** Represented by ellipses, indicating a transformation function.

* **Attention Weight Calculation:** A circle with a plus sign inside, representing an addition operation, and a larger rectangle labeled "W" representing a weight matrix.

* **Snowflake Icons:** Used to represent the output of the sequences.

* **Textual Annotations:** Equations and descriptive text explaining the process.

### Detailed Analysis

**Left Side (Sequence Processing):**

* **Sequence A:** A yellow rectangle labeled "A" contains the hidden states `h_A,k` and `h_A,k+1`, with vertical ellipses indicating further states in the series.

* **Sequence B:** A yellow rectangle labeled "B" contains the hidden states `h_B,j` and `h_B,j+1`, with vertical ellipses indicating further states in the series.

* **Function f:** The hidden state `h_A,k` is passed through a function `f` and connected to the hidden state `h_B,j`. Similarly, `h_B,j` is passed through `f` and connected to `h_A,k`.

**Right Side (Attention Weight Calculation):**

* **Addition:** The hidden states `h_A,k` and `h_B,j` are fed into a circle with a plus sign, indicating addition. The output of this addition is then passed through the function `f`.

* **Weight Matrix W:** The hidden states `h_A,k` and `h_B,j` are also fed into a rectangle labeled "W". This likely represents a weight matrix used to calculate attention weights. The output of "W" is not explicitly shown.
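The two operations on the right side can be sketched in plain Python. This is an illustration only, under assumptions the diagram does not confirm: `f` is taken to be an elementwise `tanh`, `W` is taken to be a matrix applied to the concatenation of the two hidden states, and all numeric values are made up.

```python
import math

def f(vec):
    # Assumed stand-in for the diagram's function f: elementwise tanh.
    return [math.tanh(x) for x in vec]

def add_states(h_a, h_b):
    # The "+" node: elementwise addition of two equal-length hidden states.
    if len(h_a) != len(h_b):
        raise ValueError("hidden states must have the same dimension")
    return [a + b for a, b in zip(h_a, h_b)]

def apply_w(w, h_a, h_b):
    # Assumed reading of the "W" box: a matrix applied to [h_a; h_b].
    x = h_a + h_b  # list concatenation, not arithmetic addition
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in w]

h_a_k = [0.5, -1.0]   # hypothetical h_A,k
h_b_j = [0.25, 2.0]   # hypothetical h_B,j
W = [[1.0, 0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0, 1.0]]  # hypothetical 2x4 weight matrix

summed = add_states(h_a_k, h_b_j)      # [0.75, 1.0]
combined = f(summed)                   # addition followed by f
projected = apply_w(W, h_a_k, h_b_j)   # [0.75, 1.0] for this particular W
```

The hypothetical `W` here is chosen so that the projection reproduces the plain sum, which makes the relationship between the two paths easy to check by hand; a learned matrix would generally differ.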

**Textual Annotations:**

* **Word Problem:** "Janet bought 16 cookies, and wants to give an equal number to each of her 4 friends. How many cookies does each friend get?"

* **Solution:** "Each friend gets 16/4 = 4 cookies."
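The arithmetic in the solution can be checked with integer division. The variable names below are illustrative and do not appear in the image.

```python
cookies = 16
friends = 4

# divmod returns (quotient, remainder); a zero remainder means an equal split.
per_friend, left_over = divmod(cookies, friends)
print(per_friend, left_over)  # prints "4 0"
```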

### Key Observations

* The diagram illustrates a bidirectional relationship between sequences A and B, with information flowing in both directions through the function `f`.

* The attention mechanism appears to involve calculating a weighted sum of hidden states, potentially using the weight matrix "W".

* The word problem and its solution have no visible connection to the attention mechanism in the diagram; they may serve as an example or as a distractor.
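The weighted-sum reading in the second observation can be sketched as follows. The weights and hidden states are hypothetical values chosen for easy checking; the diagram does not specify how the weights are obtained.

```python
def weighted_sum(weights, states):
    # Context vector: sum over i of weights[i] * states[i], per dimension.
    if len(weights) != len(states):
        raise ValueError("need exactly one weight per hidden state")
    dim = len(states[0])
    return [sum(w * s[d] for w, s in zip(weights, states)) for d in range(dim)]

states_a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # hypothetical hidden states
weights = [0.5, 0.25, 0.25]                      # hypothetical weights, sum to 1

context = weighted_sum(weights, states_a)  # [0.75, 0.5]
```

Because the weights sum to 1, the result is a convex combination of the hidden states, so states with larger weights contribute more to the context vector.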

### Interpretation

The diagram demonstrates a simplified attention mechanism of the kind commonly used in sequence-to-sequence models for tasks such as machine translation or image captioning. Sequences A and B could represent input and output sequences, respectively. The function `f` likely represents a neural network layer that transforms the hidden states. Attention lets the model focus on relevant parts of the input sequence (A) when generating the output sequence (B). The addition and the weight matrix "W" appear to be the components that calculate the attention weights, which determine the importance of each input hidden state. The diagram highlights the core idea of attention: relating elements between two sequences based on their relevance. The cookie problem may be a red herring, intended to test attention to detail or to distract from the core concept, although the image itself does not say so.
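One way to make the interpretation concrete is additive attention, a standard formulation in which a score is computed as `v . tanh(W [h_A; h_B])` and the scores are normalized with a softmax. Whether the diagram uses this exact form is an assumption; `W`, `v`, and all values below are hypothetical.

```python
import math

def matvec(w, x):
    # Plain matrix-vector product on nested lists.
    return [sum(a * b for a, b in zip(row, x)) for row in w]

def additive_score(h_a, h_b, w, v):
    # score = v . tanh(W [h_a; h_b]); W and v are assumed learned parameters.
    hidden = [math.tanh(z) for z in matvec(w, h_a + h_b)]
    return sum(vi * hi for vi, hi in zip(v, hidden))

def attention_weights(states_a, h_b, w, v):
    # Score every position in A against one state of B, then apply softmax.
    scores = [additive_score(h_a, h_b, w, v) for h_a in states_a]
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

states_a = [[2.0, 0.0], [0.0, 0.0], [-2.0, 0.0]]  # hypothetical states of A
h_b_j = [1.0, 0.0]                                 # hypothetical h_B,j
W = [[1.0, 0.0, 1.0, 0.0]]                         # hypothetical 1x4 matrix
v = [1.0]                                          # hypothetical scoring vector

weights = attention_weights(states_a, h_b_j, W, v)
```

With these values the first state of A receives the largest weight and the last the smallest, which is the "focus on relevant parts of A" behavior described above.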