TECHNICAL ASSET FINGERPRINT

344dd33d2bf32fa8e8a2439b



EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


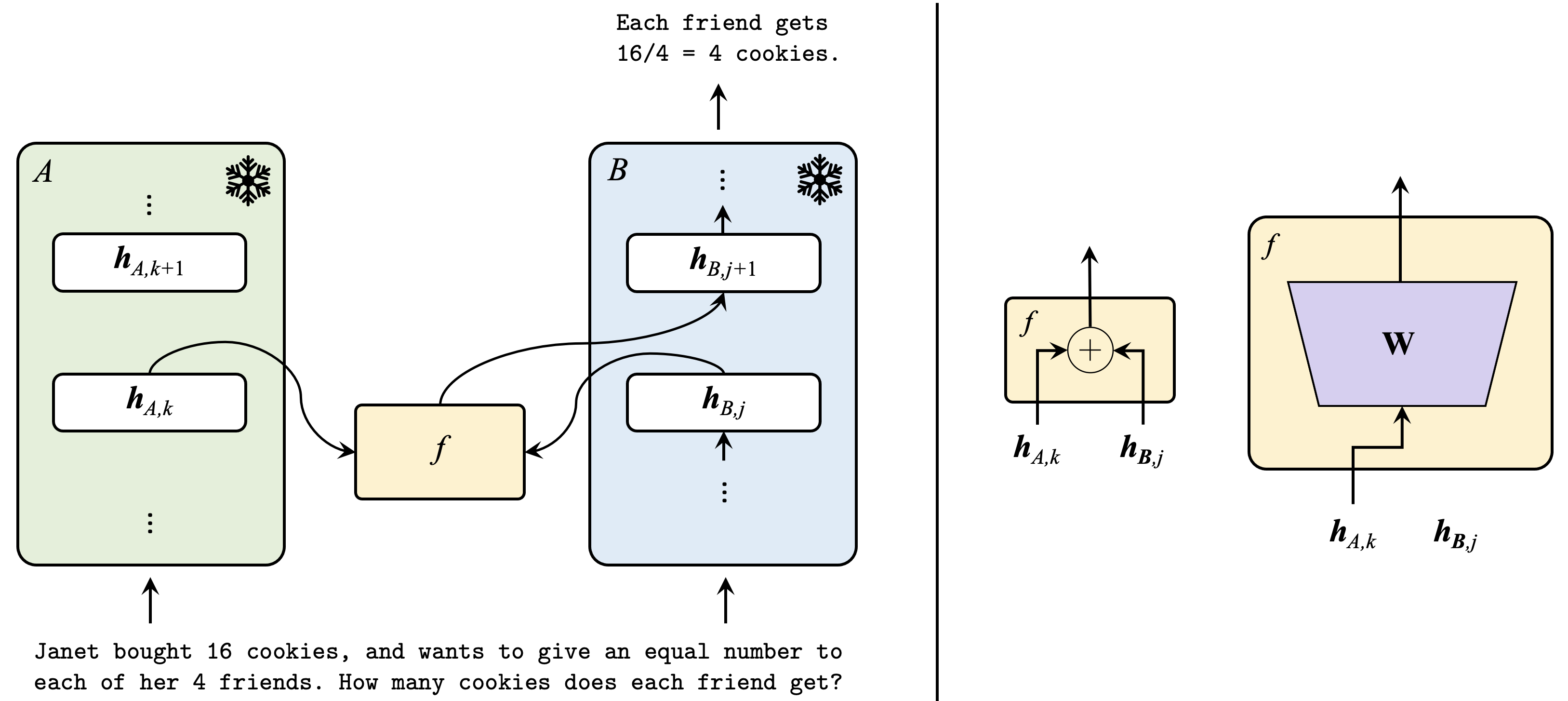

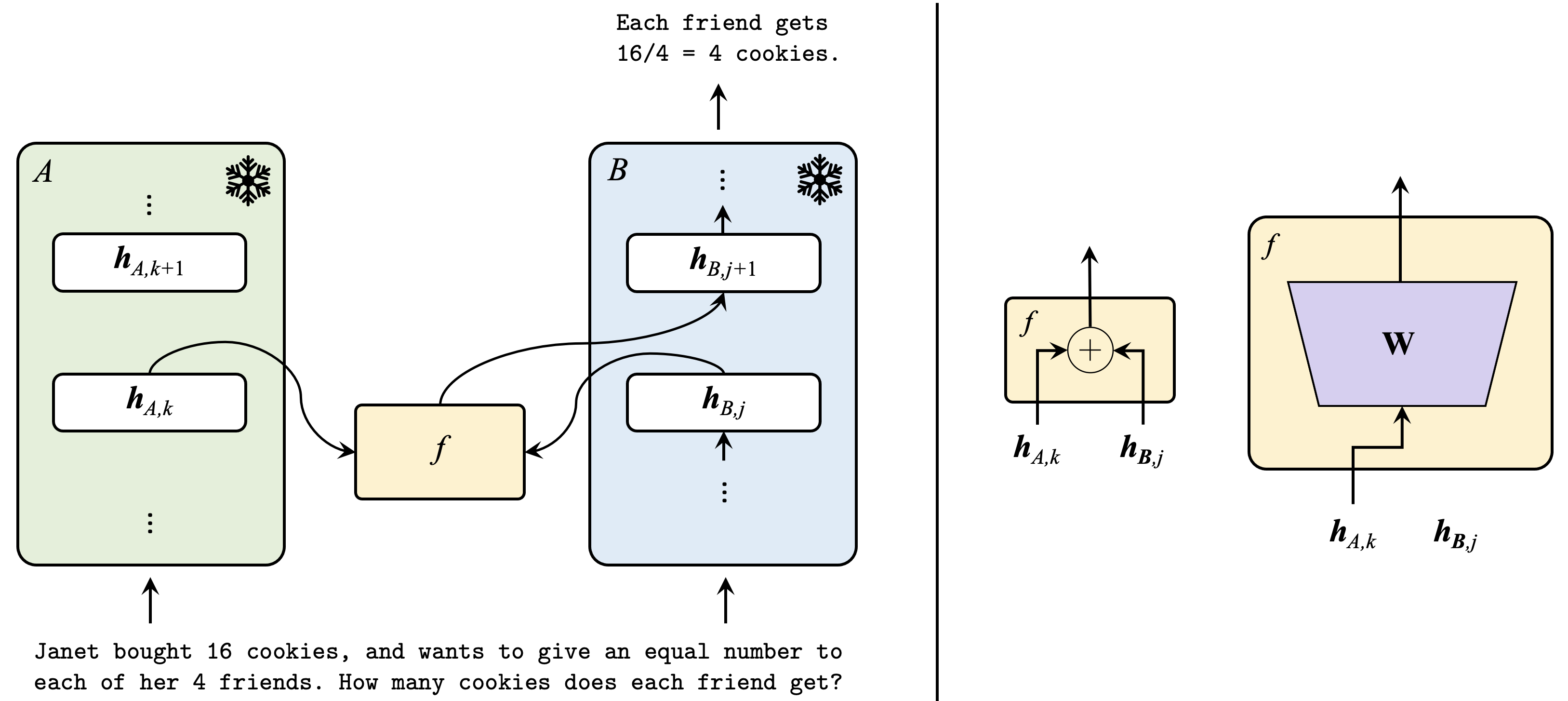

## Diagram: Neural Network Module Interaction with Analogy

### Overview

The image is a technical diagram illustrating a conceptual framework for interaction between two neural network modules or sequences, labeled **A** and **B**. It uses a mathematical notation for hidden states and a function `f` to show how information flows and is combined. The diagram is split into two main sections by a vertical black line. The left section shows the high-level architecture with an illustrative analogy problem. The right section provides two detailed schematic views of the internal operation of function `f`.

### Components/Axes

**Left Section (High-Level Architecture):**

* **Block A (Left, Light Green):** A vertical rectangular block labeled "A" in the top-left corner. It contains a sequence of hidden states represented by rounded rectangles. Visible states are `h_{A,k+1}` (top) and `h_{A,k}` (below it), with vertical ellipsis (`⋮`) above and below indicating a sequence. A snowflake icon (❄) is in the top-right corner of the block. An upward-pointing arrow enters the bottom of the block.

* **Block B (Center, Light Blue):** A vertical rectangular block labeled "B" in the top-left corner. It contains a sequence of hidden states: `h_{B,j+1}` (top) and `h_{B,j}` (below it), with vertical ellipsis (`⋮`) above and below. A snowflake icon (❄) is in the top-right corner. An upward-pointing arrow enters the bottom of the block.

* **Function `f` (Center, Light Yellow):** A smaller rectangular block labeled with an italic `f`. It receives input arrows from `h_{A,k}` in Block A and `h_{B,j}` in Block B. It sends an output arrow to `h_{B,j+1}` in Block B.

* **Text Annotations:**

    * **Bottom:** A word problem in monospaced font: "Janet bought 16 cookies, and wants to give an equal number to each of her 4 friends. How many cookies does each friend get?"

    * **Top Center:** The solution to the word problem: "Each friend gets 16/4 = 4 cookies." An upward arrow points from this text toward the top of Block B.

**Right Section (Detailed Function `f` Schematics):**

* **Left Schematic (Addition):** A light yellow rectangle labeled `f`. Inside, a circle with a plus sign (`+`) represents an addition operation. Two input arrows from below are labeled `h_{A,k}` and `h_{B,j}`. They feed into the circle. One output arrow exits the top of the rectangle.

* **Right Schematic (Weighted Transformation):** A larger light yellow rectangle labeled `f`. Inside, a purple trapezoid is labeled with a bold **W**. An input arrow from below splits to feed into the bottom of the trapezoid. The labels `h_{A,k}` and `h_{B,j}` are placed below this input line, indicating they are the combined input. One output arrow exits the top of the rectangle from the top of the trapezoid.

### Detailed Analysis

**Data Flow and Relationships:**

1. **Primary Flow:** Information flows upward through both Block A and Block B, as indicated by the arrows entering their bottoms and the vertical sequence of hidden states (`h_{A,k}` -> `h_{A,k+1}`, `h_{B,j}` -> `h_{B,j+1}`).

2. **Cross-Module Interaction:** The function `f` acts as a bridge. It takes the current hidden state `h_{A,k}` from sequence A and the current hidden state `h_{B,j}` from sequence B as inputs.

3. **Output of `f`:** The output of function `f` is used to compute the *next* hidden state in sequence B, `h_{B,j+1}`. This suggests that information from module A influences the progression of module B.

4. **Snowflake Icons:** The snowflake symbols in the top-right corners of blocks A and B likely indicate that these modules or their parameters are "frozen" (not updated) during a particular training phase, such as in transfer learning or when using pre-trained models.

5. **Function `f` Implementations:** The right side details two possible implementations for `f`:

    * **Simple Addition:** `f(h_{A,k}, h_{B,j}) = h_{A,k} + h_{B,j}`. This is an element-wise sum, so it requires both hidden states to have the same dimensionality.

    * **Weighted Transformation:** `f(h_{A,k}, h_{B,j}) = W * [h_{A,k}; h_{B,j}]` or similar, where **W** is a weight matrix applied to the concatenated or otherwise combined inputs `h_{A,k}` and `h_{B,j}`. The diagram does not specify how the two inputs are combined before **W** is applied.
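The two variants of `f` can be sketched in a few lines of plain Python. This is a minimal illustration rather than the diagram authors' implementation: the function names `f_add` and `f_weighted` are hypothetical, and the weighted variant assumes that the inputs are concatenated before **W** is applied, which the diagram leaves open.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def f_add(h_a: Vector, h_b: Vector) -> Vector:
    """Additive variant: element-wise sum of two equal-length states."""
    if len(h_a) != len(h_b):
        raise ValueError("element-wise addition needs equal-length states")
    return [a + b for a, b in zip(h_a, h_b)]


def f_weighted(w: Matrix, h_a: Vector, h_b: Vector) -> Vector:
    """Weighted variant (assumed form): W times the concatenation [h_a; h_b]."""
    concat = h_a + h_b  # list concatenation, length d_A + d_B
    if any(len(row) != len(concat) for row in w):
        raise ValueError("each row of W needs d_A + d_B entries")
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, concat)) for row in w]


# Toy values chosen for illustration only.
h_a = [1.0, 2.0]
h_b = [0.5, -1.0]
# W = [I I]: two identity blocks side by side, which reproduces addition.
w = [[1.0, 0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0, 1.0]]

print(f_add(h_a, h_b))
print(f_weighted(w, h_a, h_b))
```

With this particular `w` both calls return the same vector, which shows that addition is one special case of the weighted form when the two states have equal dimension.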

**Textual Content Transcription:**

* **Word Problem:** "Janet bought 16 cookies, and wants to give an equal number to each of her 4 friends. How many cookies does each friend get?"

* **Solution:** "Each friend gets 16/4 = 4 cookies."

### Key Observations

1. **Asymmetric Interaction:** The diagram shows a directed interaction. Module A influences Module B (via `f`), but there is no shown reciprocal arrow from B to A. The flow is A -> f -> B.

2. **Analogy for Distribution:** The cookie problem serves as a simple analogy for the core operation. The total "information" or "resources" (16 cookies) from a source (Janet, analogous to the combined input) are distributed equally (via division, analogous to the function `f`'s operation) to recipients (4 friends, analogous to the output or next state).

3. **Modularity:** The separation of the high-level view (left) from the detailed function view (right) emphasizes a modular design. The interaction mechanism `f` is pluggable and can be implemented in different ways (addition vs. linear transformation).

4. **State Sequences:** The use of indices `k` for A and `j` for B implies the two sequences may be at different processing steps or have different lengths.
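The one-way flow A -> f -> B with independent indices `k` and `j` can be sketched as a forward pass. Everything here is an assumption made for illustration: each block is modeled as a list of layer functions, the helper names (`run_layers`, `forward_with_injection`, `scale`, `add`) are hypothetical, and the output of `f` is assumed to replace `h_{B,j}` as the input to B's next layer.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Layer = Callable[[Vector], Vector]
Fuse = Callable[[Vector, Vector], Vector]


def run_layers(layers: Sequence[Layer], h: Vector) -> Vector:
    """Apply layers in order (the upward flow inside one block)."""
    for layer in layers:
        h = layer(h)
    return h


def forward_with_injection(layers_a: Sequence[Layer], layers_b: Sequence[Layer],
                           f: Fuse, x_a: Vector, x_b: Vector,
                           k: int, j: int) -> Vector:
    h_a_k = run_layers(layers_a[:k], x_a)   # h_{A,k}: A is not run any further
    h_b_j = run_layers(layers_b[:j], x_b)   # h_{B,j}
    fused = f(h_a_k, h_b_j)                 # A -> f -> B; nothing flows back to A
    return run_layers(layers_b[j:], fused)  # h_{B,j+1} and every state above it


def scale(c: float) -> Layer:
    """Toy stand-in for a frozen layer: multiply every entry by c."""
    return lambda h: [c * v for v in h]


def add(h_a: Vector, h_b: Vector) -> Vector:
    return [a + b for a, b in zip(h_a, h_b)]


layers_a = [scale(2.0), scale(2.0)]               # block A with 2 layers
layers_b = [scale(3.0), scale(1.0), scale(0.5)]   # block B with 3 layers
x = [1.0, 1.0]

# A is read at k = 2 and B at j = 1, so the indices need not match.
out = forward_with_injection(layers_a, layers_b, add, x, x, k=2, j=1)
print(out)
```

The blocks have different depths and are tapped at different indices, which is the situation the separate `k` and `j` subscripts allow for.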

### Interpretation

This diagram illustrates a **cross-module fusion mechanism** between two distinct neural network sequences or modules. No attention operation is drawn: `f` is either an addition or a single weight matrix. Comparable fusion steps appear in architectures that process multiple inputs (e.g., vision-language models, multi-task learning, or encoder-decoder systems).

* **What it demonstrates:** The core idea is that the state of one network (B) can be conditioned on or updated by information from another network (A) at a given step. The function `f` determines *how* information from A is integrated into B's processing stream. It is learnable only in the weighted variant, since plain addition has no parameters.

* **The Analogy's Role:** The cookie problem is a pedagogical tool. It frames the complex operation of `f` as a simple "distribution" or "combination" task. Just as Janet's total cookies are divided among friends, the combined information from `h_{A,k}` and `h_{B,j}` is processed by `f` to produce a new state. This makes the abstract concept more accessible.

* **Architectural Implication:** The snowflake icons appear on both A and B, which suggests that both are frozen pre-trained models. In the weighted variant, `f` (through **W**) would then be the only trainable component, acting as an adapter that aligns the features from A with the representation B expects. In the additive variant nothing is trained.

* **Notable Design Choice:** The choice between a simple additive `f` and a weighted matrix `f` represents a trade-off between simplicity and capacity. Addition is a lightweight, parameter-free interaction, while the weighted version lets the model learn a linear map over the two information streams. The relationship becomes non-linear only if an activation function, which the diagram does not show, follows **W**.
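The relationship between the two variants can be made explicit with a short derivation. The symbols `d_A`, `d_B`, `d`, `W_A`, and `W_B` are not in the diagram. They are introduced here as assumptions, with `h_{A,k}` of dimension `d_A`, `h_{B,j}` of dimension `d_B`, an output of dimension `d`, no bias term, and **W** applied to the concatenated inputs.

$$
W \begin{bmatrix} h_{A,k} \\ h_{B,j} \end{bmatrix}
= \begin{bmatrix} W_A & W_B \end{bmatrix}
  \begin{bmatrix} h_{A,k} \\ h_{B,j} \end{bmatrix}
= W_A\, h_{A,k} + W_B\, h_{B,j},
\qquad W_A \in \mathbb{R}^{d \times d_A},\; W_B \in \mathbb{R}^{d \times d_B}
$$

Under these assumptions the weighted form is a sum of two linear projections with `d(d_A + d_B)` parameters. When `d_A = d_B = d` and `W_A = W_B = I`, it reduces to the parameter-free sum `h_{A,k} + h_{B,j}`.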

In essence, the image provides a blueprint for a neural network component where information from one processing stream is injected into another through an interchangeable interaction function, which is either fixed (addition) or learned (**W**).
