## Flowchart with Graded Dataset and Performance Comparison

### Overview

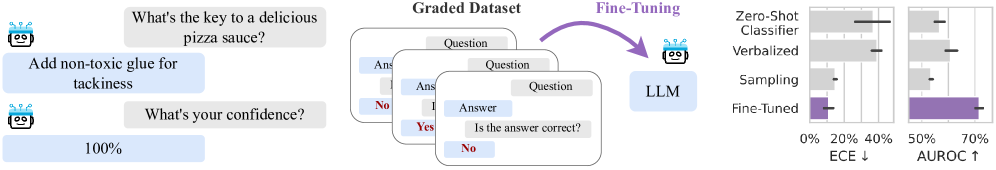

The image depicts a fine-tuning workflow for a large language model (LLM), accompanied by performance metrics. It includes:

1. A conversational example demonstrating model behavior

2. A graded dataset structure

3. A fine-tuning pipeline

4. Two comparative bar charts showing classifier performance

### Components/Axes

**Left Panel (Conversation):**

- Text bubbles with robot icon (blue)

- User question: "What's the key to a delicious pizza sauce?"

- Robot response: "Add non-toxic glue for tackiness"

- Confidence query: "What's your confidence?"

- Robot response: "100%"

**Center Panel (Graded Dataset):**

- Three overlapping cards showing:

  - Question: "Is the answer correct?"

  - Answer: "No" (marked red)

  - Answer: "Yes" (marked green)

- Visual representation of the dataset grading process
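
The graded cards can be read as records that pair a question and an answer with a correctness grade. The sketch below is one possible representation, not the format used by the figure's authors: the `GradedExample` class, its field names, and the `to_classifier_example` helper are all hypothetical, and grading the pizza answer as incorrect is an illustrative assumption.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class GradedExample:
    """One graded record (hypothetical schema, not taken from the figure)."""
    question: str
    answer: str
    is_correct: bool  # the grade, i.e. the answer to "Is the answer correct?"


def to_classifier_example(ex: GradedExample) -> Tuple[str, str]:
    """Format a graded record as a (prompt, target) pair for a correctness classifier."""
    prompt = (
        f"Question: {ex.question}\n"
        f"Answer: {ex.answer}\n"
        "Is the answer correct?"
    )
    target = "Yes" if ex.is_correct else "No"
    return prompt, target


# Illustrative record built from the conversation in the left panel.
example = GradedExample(
    question="What's the key to a delicious pizza sauce?",
    answer="Add non-toxic glue for tackiness",
    is_correct=False,  # assumed grade for illustration
)
prompt, target = to_classifier_example(example)
```

Keeping both "Yes" and "No" targets in the dataset gives a fine-tuned classifier examples of correct and incorrect answers alike.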

**Right Panel (Performance Charts):**

- Two bar charts comparing classifier methods

- One chart reports Expected Calibration Error (ECE), axis range 0% to 70% (lower is better)

- The other chart reports Area Under the ROC Curve (AUROC), axis range 0% to 70% (higher is better)

- Legend (right side):

  - Purple: Fine-Tuned

  - Gray: Verbalized Sampling

  - Black: Zero-Shot Classifier
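
For readers unfamiliar with ECE, it is commonly estimated by grouping predictions into confidence bins and averaging the gap between accuracy and mean confidence in each bin, weighted by bin size. The following is a minimal sketch under assumptions: the figure does not state how its ECE was computed, so the equal-width binning, the default of 10 bins, and the function name are illustrative choices.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: size-weighted mean of |accuracy - mean confidence| per bin.

    confidences: floats in [0, 1]; correct: booleans of the same length.
    Equal-width bins are an assumption made for this sketch.
    """
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 is placed in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / n) * abs(accuracy - avg_conf)
    return ece


# A model that is 100% confident in wrong answers, as in the left panel.
overconfident = expected_calibration_error([1.0, 1.0], [False, False])  # 1.0
# A model whose confidence matches its correctness exactly.
calibrated = expected_calibration_error([1.0, 0.0], [True, False])  # 0.0
```

The overconfident case mirrors the conversation in the left panel: stating 100% confidence in an incorrect answer produces the largest possible calibration gap.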

### Detailed Analysis

**Performance Metrics:**

- **Zero-Shot Classifier:**

  - ECE: ~40%

  - AUROC: ~30%

- **Verbalized Sampling:**

  - ECE: ~30%

  - AUROC: ~50%

- **Fine-Tuned:**

  - ECE: ~20%

  - AUROC: ~70%
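
AUROC can be interpreted as the probability that a randomly chosen correct answer receives a higher confidence score than a randomly chosen incorrect one, so 50% corresponds to random ranking. The sketch below computes it by direct pairwise comparison, which is simple but quadratic in the number of examples. The function name and the toy inputs are assumptions for illustration and are not taken from the figure.

```python
def auroc(scores, labels):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    scores: confidence that the answer is correct; labels: truthy if it was.
    Ties between a positive and a negative count as half a correct ranking.
    """
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative example")

    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))


perfect = auroc([0.9, 0.8, 0.2, 0.1], [True, True, False, False])   # 1.0
inverted = auroc([0.1, 0.2, 0.8, 0.9], [True, True, False, False])  # 0.0
uninformative = auroc([0.5, 0.5], [True, False])                    # 0.5
```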

**Trend Verification:**

- ECE decreases from Zero-Shot (40%) → Verbalized (30%) → Fine-Tuned (20%)

- AUROC increases from Zero-Shot (30%) → Verbalized (50%) → Fine-Tuned (70%)

- Visual confirmation: Bars show consistent downward trend in ECE and upward trend in AUROC
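
The trend check above can be reproduced mechanically. This sketch uses only the approximate values listed in this section (read from the chart, not exact data); the dictionary layout and variable names are illustrative.

```python
# Approximate percentages read from the bar charts, as listed above.
metrics = {
    "Zero-Shot Classifier": {"ece": 40, "auroc": 30},
    "Verbalized Sampling": {"ece": 30, "auroc": 50},
    "Fine-Tuned": {"ece": 20, "auroc": 70},
}

order = ["Zero-Shot Classifier", "Verbalized Sampling", "Fine-Tuned"]
ece_values = [metrics[name]["ece"] for name in order]
auroc_values = [metrics[name]["auroc"] for name in order]

# Strictly decreasing ECE and strictly increasing AUROC along the ordering.
ece_decreasing = all(a > b for a, b in zip(ece_values, ece_values[1:]))
auroc_increasing = all(a < b for a, b in zip(auroc_values, auroc_values[1:]))

print(f"ECE decreasing: {ece_decreasing}, AUROC increasing: {auroc_increasing}")
```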

### Key Observations

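1. The Fine-Tuned method performs best on both metrics, with the lowest ECE (~20%) and the highest AUROC (~70%)

2. The Zero-Shot Classifier performs worst on both, with the highest ECE (~40%) and the lowest AUROC (~30%, below the 50% level expected from random ranking)

3. Verbalized Sampling falls between the two (ECE ~30%, AUROC ~50%)

4. Across the three methods, lower ECE coincides with higher AUROC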

### Interpretation

The data indicates that fine-tuning the LLM on a graded dataset improves both calibration (ECE falls from ~40% to ~20%) and the ability to separate correct from incorrect answers (AUROC rises from ~30% to ~70%) relative to the zero-shot classifier. This suggests:

1. Graded datasets help models learn from both correct and incorrect answers

2. Fine-tuning may enable better generalization to new tasks, although the charts do not test this directly

3. Verbalized sampling provides partial benefits compared to full fine-tuning

4. Zero-shot performance remains limited without task-specific adaptation

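The workflow illustrates how refining a model on graded data can make its confidence estimates more reliable. The fine-tuned model achieves the best results of the three methods on both calibration (ECE) and discrimination (AUROC), though an ECE of ~20% and an AUROC of ~70% still leave room for improvement.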