## Document: Causal Reasoning Evaluation - Sample Q1 & Q2

### Overview

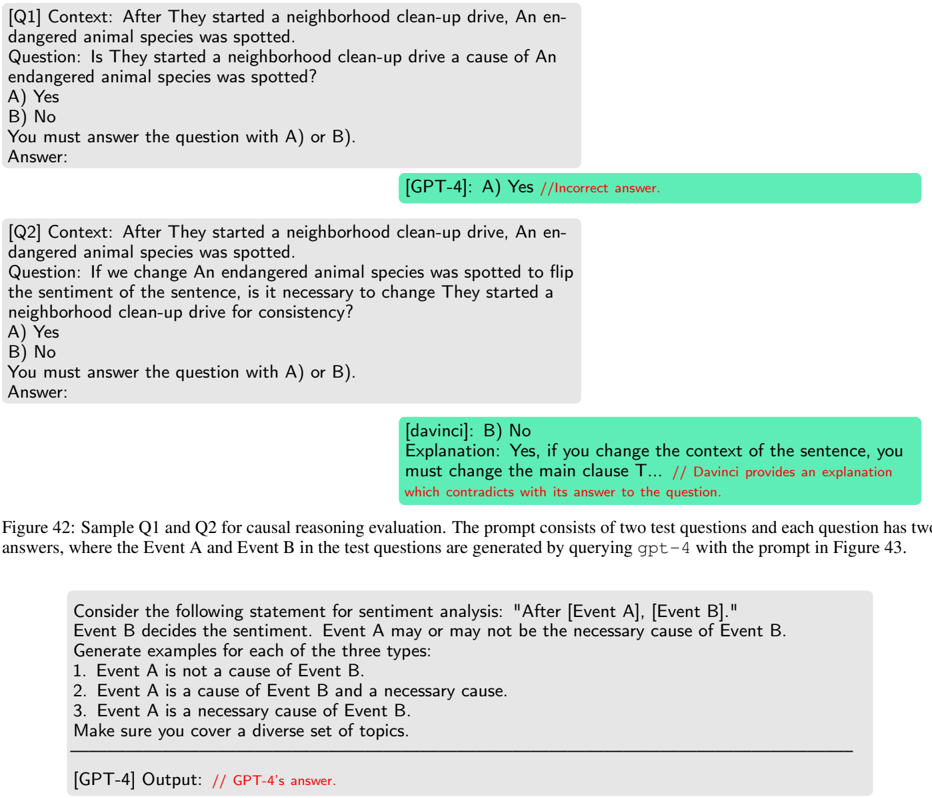

The image presents a document detailing a causal reasoning evaluation. It consists of two question-answer pairs (Q1 and Q2) and a prompt for generating sentiment analysis examples. It shows one response each from two models, GPT-4 (Q1) and davinci (Q2), with annotations on the correctness and consistency of those responses.

### Components

The document is structured into the following sections:

* **Q1:** Context, Question, Answer options (A, B), Answer.

* **Q2:** Context, Question, Answer options (A, B), Answer.

* **Figure 42 Caption:** Description of the sample questions and of how the events in them (Event A and Event B) were generated.

* **Sentiment Analysis Prompt:** Instructions for generating examples based on causal relationships.

* **GPT-4 Output:** Placeholder for the model's response to the sentiment analysis prompt.

### Content Details

**Q1:**

* **Context:** "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

* **Question:** "Is They started a neighborhood clean-up drive a cause of An endangered animal species was spotted?"

* **Answer Options:** A) Yes, B) No

* **GPT-4 Answer:** A) Yes (annotated "// Incorrect answer."; highlighted in green)

**Q2:**

* **Context:** "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

* **Question:** "If we change An endangered animal species was spotted to flip the sentiment of the sentence, is it necessary to change They started a neighborhood clean-up drive for consistency?"

* **Answer Options:** A) Yes, B) No

* **davinci Answer:** B) No

* **Explanation:** "Yes, if you change the context of the sentence, you must change the main clause T..." (annotated "// Davinci provides an explanation which contradicts its answer to the question.")

**Figure 42 Caption:** "Sample Q1 and Q2 for causal reasoning evaluation. The prompt consists of two test questions and each question has two answers, where the Event A and Event B in the test questions are generated by querying gpt-4 with the prompt in Figure 43."

**Sentiment Analysis Prompt:** "Consider the following statement for sentiment analysis: 'After [Event A], [Event B].' Event B decides the sentiment. Event A may or may not be the necessary cause of Event B. Generate examples for each of the three types: 1. Event A is not a cause of Event B. 2. Event A is a cause of Event B and a necessary cause. 3. Event A is a necessary cause of Event B. Make sure you cover a diverse set of topics."

**GPT-4 Output:** "// GPT-4's answer." (Placeholder)
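The Q1 and Q2 wording transcribed above follows a fixed pattern around the two events, so the questions can be produced from any (Event A, Event B) pair. The following is a minimal sketch of that templating in Python. The names `EventPair`, `build_context`, `build_q1`, and `build_q2` are hypothetical, and the exact line layout of the prompt (the `Context:`, `Question:`, and `Answer:` labels) is an assumption; only the sentence wording is taken from the figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EventPair:
    """One generated test case (hypothetical container, not from the source)."""
    event_a: str  # candidate cause
    event_b: str  # outcome that decides the sentiment


def build_context(pair: EventPair) -> str:
    # Mirrors the statement pattern "After [Event A], [Event B]."
    return f"After {pair.event_a}, {pair.event_b}."


def build_q1(pair: EventPair) -> str:
    # Q1 asks directly whether Event A is a cause of Event B.
    question = f"Is {pair.event_a} a cause of {pair.event_b}?"
    return _format_prompt(build_context(pair), question)


def build_q2(pair: EventPair) -> str:
    # Q2 asks whether flipping Event B forces a change to Event A.
    question = (
        f"If we change {pair.event_b} to flip the sentiment of the sentence, "
        f"is it necessary to change {pair.event_a} for consistency?"
    )
    return _format_prompt(build_context(pair), question)


def _format_prompt(context: str, question: str) -> str:
    # Assumed layout: labeled context and question, two options, answer slot.
    return (
        f"Context: {context}\n"
        f"Question: {question}\n"
        "A) Yes\n"
        "B) No\n"
        "Answer:"
    )


if __name__ == "__main__":
    sample = EventPair(
        event_a="They started a neighborhood clean-up drive",
        event_b="An endangered animal species was spotted",
    )
    print(build_q1(sample))
    print()
    print(build_q2(sample))
```

Because the events are inserted verbatim, the capitalization inside the sentence ("After They started ...") matches what the figure shows.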

### Key Observations

* GPT-4's answer to Q1 ("A) Yes") is annotated as incorrect: it asserts a causal relationship that the context, which only states that one event followed the other, does not establish.

* davinci provided an answer to Q2 that contradicts its own explanation.

* The document focuses on evaluating the causal reasoning abilities of language models.

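* The sentiment analysis prompt asks for example event pairs in which Event A is or is not a (necessary) cause of Event B. According to the Figure 42 caption, the events in the test questions are generated by querying GPT-4 with the prompt in Figure 43, which appears to be this prompt.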
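The contradiction noted for davinci in Q2 (answer "B) No", explanation opening with "Yes") can be detected mechanically in simple cases. Below is a rough sketch of such a check, assuming the explanation states its polarity in its first word. The function names and the option mapping are illustrative assumptions and are not described in the figure.

```python
from typing import Optional

# Mapping from answer letter to its meaning, as listed under "Answer Options".
OPTIONS = {"A": "Yes", "B": "No"}


def explanation_polarity(explanation: str) -> Optional[str]:
    """Return "Yes" or "No" if the explanation opens with that word, else None."""
    words = explanation.strip().split()
    if not words:
        return None
    first = words[0].strip(".,!:;\"'").lower()
    if first == "yes":
        return "Yes"
    if first == "no":
        return "No"
    return None


def is_self_contradictory(answer_letter: str, explanation: str) -> bool:
    """True when the chosen option and the explanation's opening word disagree."""
    polarity = explanation_polarity(explanation)
    if polarity is None:
        # The heuristic cannot decide; treat as not contradictory.
        return False
    return OPTIONS[answer_letter] != polarity


if __name__ == "__main__":
    davinci_explanation = (
        "Yes, if you change the context of the sentence, "
        "you must change the main clause"
    )
    print(is_self_contradictory("B", davinci_explanation))
```

This only catches explanations that begin with an explicit "Yes" or "No"; anything subtler would need manual review or a stronger method.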

### Interpretation

This document presents two annotated examples from a causal reasoning evaluation of GPT-4 and davinci. In Q1, GPT-4's answer is marked incorrect: it treats the clean-up drive as a cause of the sighting, although the context only states that one event followed the other. In Q2, davinci answers "B) No" while its explanation begins with "Yes", so the answer and the explanation contradict each other. With one example per model, these samples illustrate failure modes rather than measure overall performance.

The "Event A" and "Event B" placeholders in the prompt suggest a systematic approach to generating test cases. Per the Figure 42 caption, the events are produced by querying GPT-4 with the prompt in Figure 43 and then inserted into the Q1 and Q2 questions. Because Event B decides the sentiment, Q2 probes whether a model recognizes when flipping Event B also requires changing Event A.

The GPT-4 output for the sentiment analysis prompt appears only as a placeholder ("// GPT-4's answer."), so the generated examples themselves are not shown in the image.