## Technical Document Excerpt: Causal Reasoning Evaluation Examples

### Overview

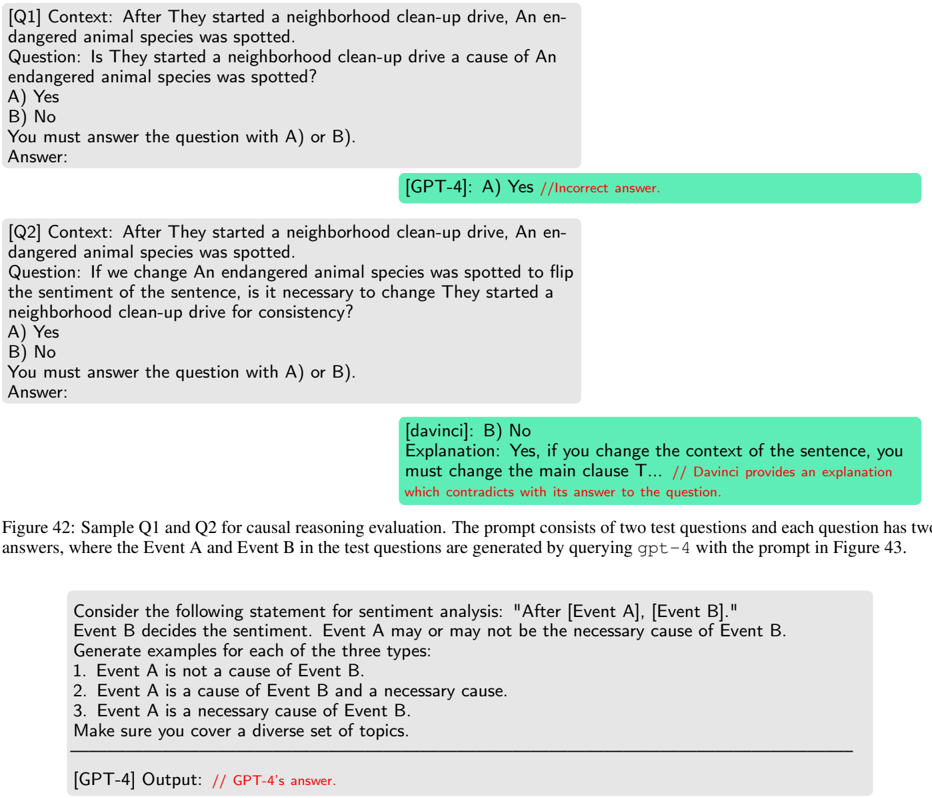

The image displays a figure (labeled Figure 42) from a technical document or research paper. It presents two sample questions (Q1 and Q2) used for evaluating causal reasoning in AI models, along with the models' responses and annotations. Below the samples, a caption explains the figure's purpose, and a separate box shows the prompt template used to generate the test questions.

### Components/Axes

The image is structured into distinct textual blocks:

1. **Question Blocks:** Two gray boxes containing the test questions ([Q1] and [Q2]).

2. **AI Response Blocks:** Green-highlighted boxes showing the responses from different AI models (GPT-4 and davinci).

3. **Figure Caption:** A paragraph of text below the question blocks.

4. **Prompt Template Box:** A final gray box at the bottom containing the instructions given to an AI to generate the test examples.

### Detailed Analysis / Content Details

#### **Question Block 1 ([Q1])**

* **Context:** "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

* **Question:** "Is They started a neighborhood clean-up drive a cause of An endangered animal species was spotted?"

* **Answer Options:** A) Yes, B) No.

* **Instruction:** "You must answer the question with A) or B)."

* **AI Response (GPT-4):** "A) Yes"

* **Annotation (in red):** "//Incorrect answer."

#### **Question Block 2 ([Q2])**

* **Context:** "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

* **Question:** "If we change An endangered animal species was spotted to flip the sentiment of the sentence, is it necessary to change They started a neighborhood clean-up drive for consistency?"

* **Answer Options:** A) Yes, B) No.

* **Instruction:** "You must answer the question with A) or B)."

* **AI Response (davinci):** "B) No"

* **Explanation (from davinci):** "Explanation: Yes, if you change the context of the sentence, you must change the main clause T..."

* **Annotation (in red):** "// Davinci provides an explanation which contradicts with its answer to the question."

#### **Figure Caption**

* **Text:** "Figure 42: Sample Q1 and Q2 for causal reasoning evaluation. The prompt consists of two test questions and each question has two answers, where the Event A and Event B in the test questions are generated by querying *gpt-4* with the prompt in Figure 43."

#### **Prompt Template Box**

* **Instruction Text:**

"Consider the following statement for sentiment analysis: "After [Event A], [Event B]."

Event B decides the sentiment. Event A may or may not be the necessary cause of Event B.

Generate examples for each of the three types:

1. Event A is not a cause of Event B.

2. Event A is a cause of Event B and a necessary cause.

3. Event A is a necessary cause of Event B.

Make sure you cover a diverse set of topics."

* **Footer Note:** "[GPT-4] Output: // GPT-4's answer."

### Key Observations

1. **Model Inconsistency:** The GPT-4 model incorrectly answers "Yes" to Q1, which is annotated as incorrect. The davinci model answers "No" to Q2 but provides an explanation that starts with "Yes," creating a direct contradiction between its answer and its reasoning.

2. **Test Design:** The questions are designed to probe causal understanding and logical consistency. Q1 tests direct causal attribution, while Q2 tests understanding of linguistic consistency when altering sentiment.

3. **Generation Method:** The caption reveals that the test events (Event A and Event B) within the questions were themselves generated by an AI (GPT-4) using a specific prompt (referenced as Figure 43, not shown).

4. **Prompt Structure:** The final box shows the meta-prompt used to generate the evaluation data. It instructs an AI to create examples across a spectrum of causal relationships (non-cause, cause, necessary cause) within a fixed sentence frame, with a focus on sentiment.

### Interpretation

This figure illustrates a methodology for evaluating the causal reasoning capabilities of large language models (LLMs). The core investigation appears to be: **Can LLMs correctly identify causal relationships and maintain logical consistency in their reasoning?**

The data suggests significant challenges:

* **Factual Error:** GPT-4 fails a basic causal inference question (Q1), suggesting a potential weakness in distinguishing correlation from causation or in understanding the "After X, Y" structure as non-causal.

* **Logical Inconsistency:** The davinci model's response to Q2 reveals a deeper issue—the model can generate a correct explanation that is fundamentally at odds with its selected answer. This points to a possible disconnect between the model's internal reasoning process and its final output selection mechanism.

The inclusion of the generation prompt (Figure 43's reference and the template box) is crucial. It shows the evaluation is not just on static questions but on a *process* of generating test cases. This allows for scalable and diverse testing. The prompt's instruction to cover "a diverse set of topics" aims to prevent models from relying on topic-specific biases rather than genuine causal reasoning.

In summary, this figure documents a specific failure mode in AI reasoning and provides insight into the techniques used to systematically uncover such limitations. It highlights that performance on causal reasoning tasks is not robust and can manifest as both incorrect answers and internal contradictions.