## Screenshot: GPT-4 Causal Reasoning Evaluation

### Overview

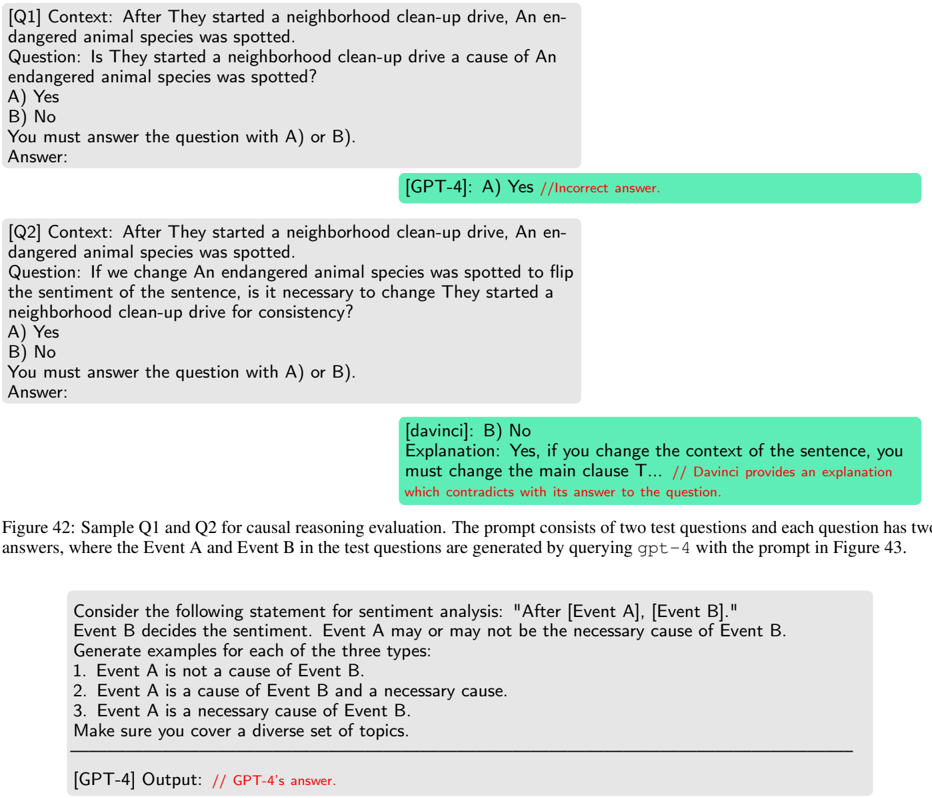

The image shows a technical evaluation of GPT-4's causal reasoning capabilities through two test questions (Q1 and Q2). Each question presents a scenario involving causal relationships between events (Event A and Event B) and tests whether the model can maintain logical consistency when contextual or causal relationships are altered.

### Components/Axes

- **Q1**:

- **Context**: "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

- **Question**: "Is They started a neighborhood clean-up drive a cause of An endangered animal species was spotted?"

- **Answer Options**:

- A) Yes

- B) No

- **GPT-4's Answer**: A) Yes (marked as incorrect)

- **Q2**:

- **Context**: "After They started a neighborhood clean-up drive, An endangered animal species was spotted."

- **Question**: "If we change An endangered animal species was spotted to flip the sentiment of the sentence, is it necessary to change They started a neighborhood clean-up drive for consistency?"

- **Answer Options**:

- A) Yes

- B) No

- **GPT-4's Answer**: B) No (marked as correct)

- **Figure Caption**:

- Describes the test questions as part of a causal reasoning evaluation.

- Notes that Event A and Event B are generated by querying GPT-4 with a prompt from Figure 43 (not shown).

### Detailed Analysis

- **Q1 Analysis**:

- The context implies a temporal sequence ("After They started...") but does not establish a direct causal link between the clean-up drive and the spotting of an endangered species.

- GPT-4 incorrectly answers "Yes," suggesting it misinterprets temporal proximity as causation.

- **Q2 Analysis**:

- The question tests whether flipping the sentiment of Event B (e.g., "An endangered animal species was *not* spotted") requires altering Event A for logical consistency.

- GPT-4 correctly answers "No," indicating it recognizes that Event A (the clean-up drive) is not a necessary cause of Event B (the spotting).

### Key Observations

1. **Temporal vs. Causal Reasoning**: Q1 highlights a common error where models conflate temporal sequences with causal relationships.

2. **Sentiment Consistency**: Q2 evaluates the model's ability to maintain logical consistency when altering contextual details (e.g., negating Event B).

3. **GPT-4's Performance**: The model demonstrates partial success, correctly handling Q2 but failing Q1, suggesting limitations in distinguishing causation from correlation.

### Interpretation

The test questions are designed to probe GPT-4's understanding of **necessary causation** and **logical consistency**.

- **Q1 Failure**: Reflects a weakness in distinguishing between correlation (temporal proximity) and causation.

- **Q2 Success**: Indicates the model can recognize that Event A (clean-up drive) is not a necessary cause of Event B (spotting), even when the sentiment of Event B is altered.

- **Implications**: The results suggest GPT-4 struggles with nuanced causal reasoning but performs better when explicit causal relationships are tested. This aligns with known limitations in large language models' ability to handle counterfactuals and causal inference.

**Note**: The prompt for generating Event A and Event B (referenced in the figure caption) is not visible in the image, so the full context of the evaluation framework remains incomplete.