TECHNICAL ASSET FINGERPRINT

35e864f71a34aaa6bdb881e8


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


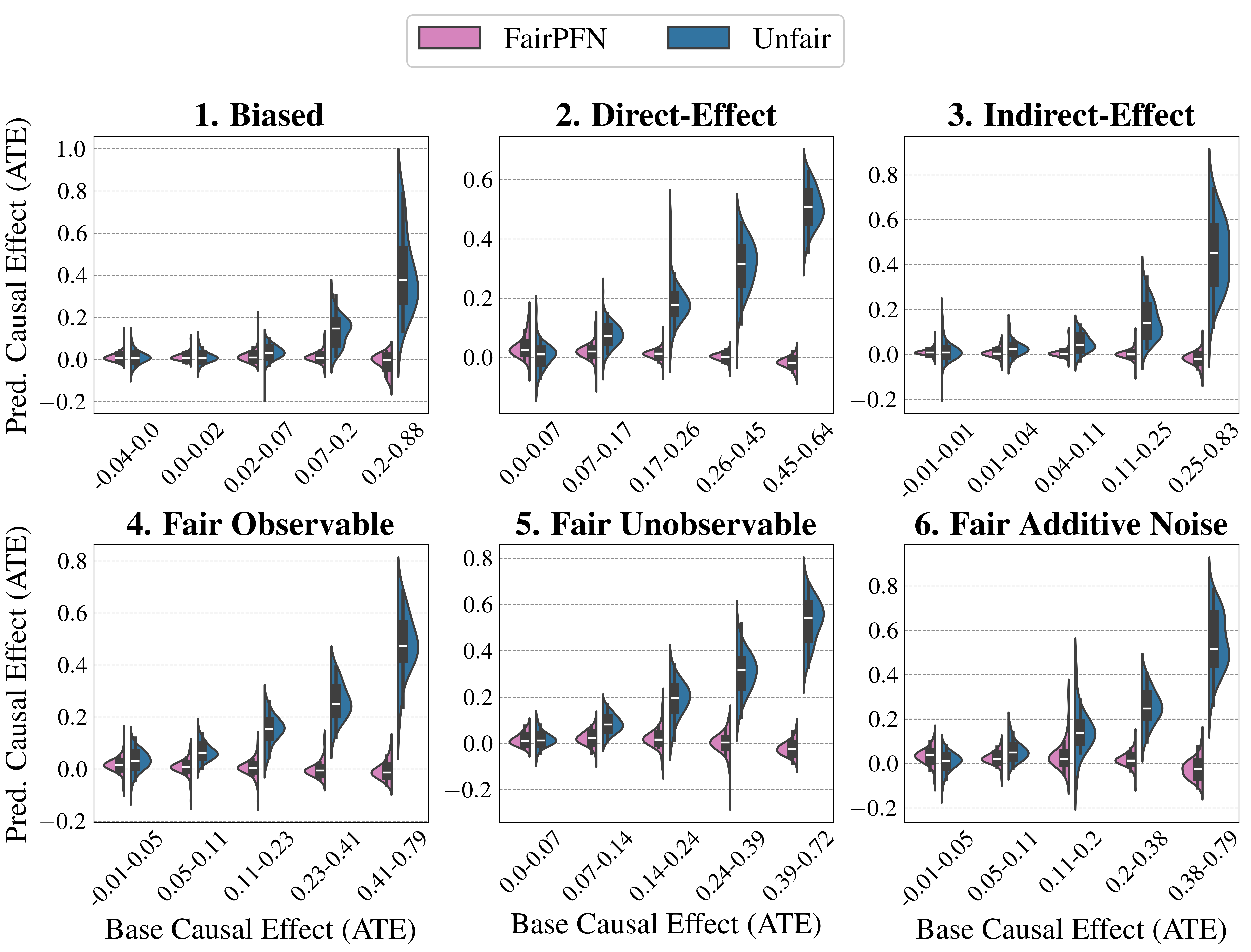

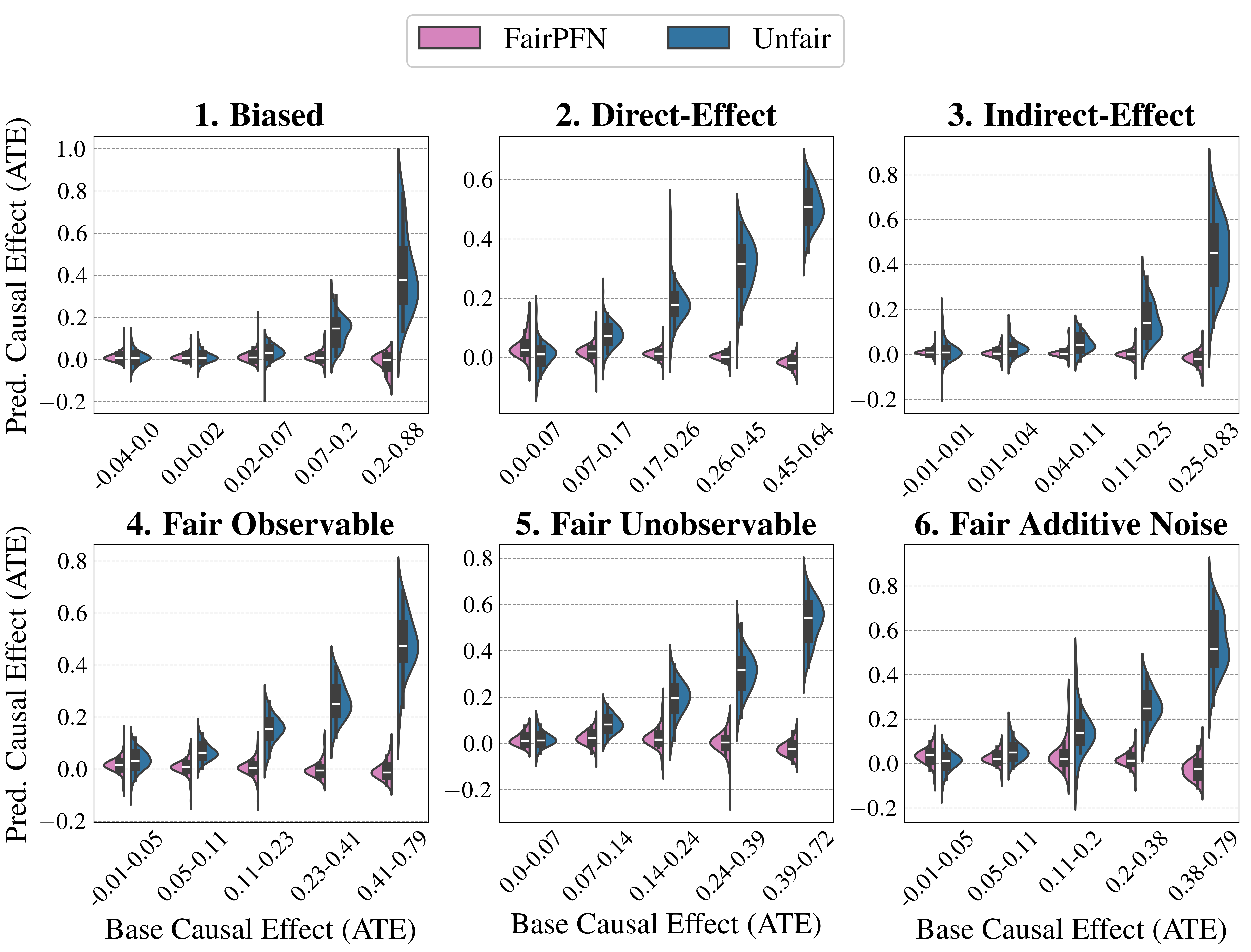

## Violin Plot: Predicted Causal Effect (ATE) vs. Base Causal Effect (ATE) under Different Fairness Scenarios

### Overview

The image presents six violin plots arranged in a 2x3 grid. Each plot visualizes the distribution of predicted causal effects (ATE) for both a "FairPFN" model (pink) and an "Unfair" model (blue) across different ranges of base causal effects (ATE). The plots are titled: "1. Biased", "2. Direct-Effect", "3. Indirect-Effect", "4. Fair Observable", "5. Fair Unobservable", and "6. Fair Additive Noise". The x-axis represents the base causal effect (ATE), and the y-axis represents the predicted causal effect (ATE).
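Both axes report an average treatment effect (ATE). The sketch below shows what such a number measures: the difference in mean outcome between two intervention arms, E[Y | do(A=1)] - E[Y | do(A=0)]. The description does not say how the figure's ATEs were computed, so the helper name `average_treatment_effect` and the toy values are illustrative assumptions.

```python
from statistics import fmean

def average_treatment_effect(outcomes_treated, outcomes_control):
    """Difference in mean outcome between the do(A=1) and do(A=0) arms.

    Hypothetical helper: assumes both lists hold outcomes (or model
    predictions) for the same population under each intervention.
    """
    if not outcomes_treated or not outcomes_control:
        raise ValueError("both arms need at least one outcome")
    return fmean(outcomes_treated) - fmean(outcomes_control)

# Toy predictions for four individuals under each intervention.
pred_do_a1 = [0.9, 0.7, 0.8, 0.6]
pred_do_a0 = [0.5, 0.5, 0.4, 0.6]
print(round(average_treatment_effect(pred_do_a1, pred_do_a0), 3))  # 0.25
```

If a model's predictions are unchanged by the intervention, its predicted ATE is 0. That is the value the FairPFN violins are described as clustering around.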

### Components/Axes

* **Legend:** Located at the top of the image.

* Pink: FairPFN

* Blue: Unfair

* **Y-axis:** "Pred. Causal Effect (ATE)" with a scale from -0.2 to 1.0 (varying by plot). Horizontal gridlines are present at intervals of 0.2.

* **X-axis:** "Base Causal Effect (ATE)". Each plot has five categories representing ranges of the base causal effect.
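The category edges listed in the detailed analysis have very unequal widths. Panel 1, for example, has 0.0-0.02 next to 0.2-0.88. This pattern is consistent with equal-count quantile bins, but it does not prove them. The sketch below assumes five quantile bins and assigns each base-ATE value to one of them using only the standard library. `quantile_bins` and the sample values are hypothetical and are not data from the figure.

```python
from bisect import bisect_right
from statistics import quantiles

def quantile_bins(values, n_bins=5):
    """Assign each value to one of n_bins equal-count bins (0-indexed).

    Assumption: the figure's x-axis categories are quantile bins; the
    description only lists their edges.
    """
    # n_bins - 1 interior cut points; "inclusive" treats data as the full population.
    cuts = quantiles(values, n=n_bins, method="inclusive")
    # bisect_right maps a value to the number of cut points <= it.
    return cuts, [bisect_right(cuts, v) for v in values]

base_ate = [-0.04, -0.01, 0.0, 0.01, 0.02, 0.05, 0.07, 0.15, 0.2, 0.88]
cuts, labels = quantile_bins(base_ate)
print([round(c, 3) for c in cuts])
print(labels)  # [0, 0, 1, 1, 2, 2, 3, 3, 4, 4]
```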

### Detailed Analysis

**1. Biased**

* X-axis categories: -0.04-0.0, 0.0-0.02, 0.02-0.07, 0.07-0.2, 0.2-0.88

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.2-0.88 range.

**2. Direct-Effect**

* X-axis categories: 0.0-0.07, 0.07-0.17, 0.17-0.26, 0.26-0.45, 0.45-0.64

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.45-0.64 range.

**3. Indirect-Effect**

* X-axis categories: -0.01-0.01, 0.01-0.04, 0.04-0.11, 0.11-0.25, 0.25-0.83

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.25-0.83 range.

**4. Fair Observable**

* X-axis categories: -0.01-0.05, 0.05-0.11, 0.11-0.23, 0.23-0.41, 0.41-0.79

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.41-0.79 range.

**5. Fair Unobservable**

* X-axis categories: 0.0-0.07, 0.07-0.14, 0.14-0.24, 0.24-0.39, 0.39-0.72

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.39-0.72 range.

**6. Fair Additive Noise**

* X-axis categories: -0.01-0.05, 0.05-0.11, 0.11-0.2, 0.2-0.38, 0.38-0.79

* FairPFN (pink): The distribution stays centered near 0 across all base causal effect ranges.

* Unfair (blue): The distribution shifts upward as the base causal effect increases, with the widest spread in the 0.38-0.79 range.

### Key Observations

* Across all six scenarios, the "FairPFN" model consistently predicts causal effects centered around 0, regardless of the base causal effect.

* The "Unfair" model's predicted causal effects tend to increase as the base causal effect increases in all scenarios.

* The spread of the "Unfair" model's predictions also increases with higher base causal effects, indicating greater variability in the predictions.
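The key observations above can be checked numerically. They describe a median pinned near 0 for FairPFN, and a median and spread that grow with the base effect for the Unfair model. One way to test this is to summarize each bin's predicted ATEs by median and interquartile range (IQR), which are the statistics shown by the inner box that some violin-plot styles draw. The samples below are synthetic and invented for illustration. They are not read from the figure, and `summarize_bins` and `medians_increase` are hypothetical helpers.

```python
from statistics import median, quantiles

def summarize_bins(preds_by_bin):
    """Return (median, IQR) of the predicted ATEs in each base-ATE bin."""
    summary = []
    for preds in preds_by_bin:
        q1, _, q3 = quantiles(preds, n=4, method="inclusive")
        summary.append((median(preds), q3 - q1))
    return summary

def medians_increase(summary):
    """True if the per-bin medians are strictly increasing across bins."""
    meds = [m for m, _ in summary]
    return all(a < b for a, b in zip(meds, meds[1:]))

# Synthetic predicted ATEs per bin, ordered from lowest to highest base ATE.
fair = [[-0.01, 0.0, 0.01], [-0.02, 0.0, 0.02], [-0.01, 0.01, 0.0]]
unfair = [[-0.02, 0.0, 0.02], [0.05, 0.1, 0.2], [0.2, 0.45, 0.8]]

print(medians_increase(summarize_bins(fair)))    # False
print(medians_increase(summarize_bins(unfair)))  # True
```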

### Interpretation

The plots compare how the two models carry the base causal effect into their predictions. The "FairPFN" model, which is designed to promote fairness, mitigates the bias present in the "Unfair" model: its predicted effects stay near 0 and are largely independent of the base causal effect. The "Unfair" model shows a clear positive correlation between the base and predicted causal effects, meaning the effect present in the data passes through to its predictions. The six scenarios (Biased, Direct-Effect, Indirect-Effect, Fair Observable, Fair Unobservable, Fair Additive Noise) represent different data-generating settings in which bias can arise or fairness can hold. In each of them, "FairPFN" produces consistent predictions across the base causal effect ranges.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Violin Plots: Predicted Causal Effect vs. Base Causal Effect for Fairness Evaluation

### Overview

The image presents six violin plots, each comparing the predicted causal effect (ATE) against the base causal effect (ATE) under a different fairness scenario. Each plot is labeled with a number (1-6) and a title naming the fairness setting being evaluated. The plots show the distribution of predicted causal effects across ranges of the base causal effect. Two colors are used: a pink shade for "FairPFN" and a blue shade for "Unfair". A legend is positioned in the top-right corner of the image.

### Components/Axes

* **X-axis:** "Base Causal Effect (ATE)". The scale ranges from approximately -0.04 to 0.88, with varying ranges for each subplot.

* **Y-axis:** "Pred. Causal Effect (ATE)". The scale ranges from approximately -0.2 to 1.0, with varying ranges for each subplot.

* **Legend:**

* Color: Pink (approximately #D87093) - Label: "FairPFN"

* Color: Blue (approximately #4682B4) - Label: "Unfair"

* **Subplot Titles:**

1. Biased

2. Direct-Effect

3. Indirect-Effect

4. Fair Observable

5. Fair Unobservable

6. Fair Additive Noise
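The hex codes in the legend entries above can be decoded to check the color names. #4682B4 is exactly the CSS named color steelblue, and #D87093 is only a few units away from palevioletred (#DB7093). The small helper below is an illustrative sketch and is not part of any plotting library.

```python
def hex_to_rgb(code):
    """Convert '#RRGGBB' to an (R, G, B) tuple of 0-255 ints."""
    code = code.lstrip("#")
    if len(code) != 6:
        raise ValueError(f"expected 6 hex digits, got {code!r}")
    return tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))

for label, code in [("FairPFN", "#D87093"), ("Unfair", "#4682B4")]:
    print(label, code, hex_to_rgb(code))
# FairPFN #D87093 (216, 112, 147)
# Unfair #4682B4 (70, 130, 180)
```

Red dominates the first triple, which makes it a pink. Blue dominates the second, which makes it a steel blue.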

### Detailed Analysis

**Plot 1: Biased**

* The blue ("Unfair") distribution is wider and extends further into negative values than the pink ("FairPFN") distribution.

* Base Causal Effect (ATE) ranges from -0.04 to 0.02 for the blue distribution and 0.02 to 0.88 for the pink distribution.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.8 for both distributions.

**Plot 2: Direct-Effect**

* The blue ("Unfair") distribution is concentrated around lower base causal effects (0.00 to 0.17) and shows a wider spread in predicted causal effects. The pink ("FairPFN") distribution is more concentrated around higher base causal effects (0.17 to 0.45).

* Base Causal Effect (ATE) ranges from 0.00 to 0.45.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.6.

**Plot 3: Indirect-Effect**

* The blue ("Unfair") distribution is more spread out across the base causal effect range (0.01 to 0.83) and shows a wider range of predicted causal effects. The pink ("FairPFN") distribution is more concentrated around higher base causal effects (0.25 to 0.83).

* Base Causal Effect (ATE) ranges from 0.01 to 0.83.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.8.

**Plot 4: Fair Observable**

* The blue ("Unfair") and pink ("FairPFN") distributions are similar, with points concentrated around lower base causal effects (0.00 to 0.23).

* Base Causal Effect (ATE) ranges from -0.01 to 0.79.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.4.

**Plot 5: Fair Unobservable**

* The blue ("Unfair") distribution is more spread out across the base causal effect range (0.00 to 0.39) and shows a wider range of predicted causal effects. The pink ("FairPFN") distribution is more concentrated around higher base causal effects (0.24 to 0.39).

* Base Causal Effect (ATE) ranges from 0.00 to 0.39.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.4.

**Plot 6: Fair Additive Noise**

* The blue ("Unfair") distribution is concentrated around lower base causal effects (0.01 to 0.11) and shows a wider spread in predicted causal effects. The pink ("FairPFN") distribution is more concentrated around higher base causal effects (0.24 to 0.38).

* Base Causal Effect (ATE) ranges from -0.01 to 0.79.

* Predicted Causal Effect (ATE) ranges from -0.2 to 0.4.

### Key Observations

* In the "Biased" scenario (Plot 1), the "Unfair" distribution extends significantly into negative predicted causal effects, suggesting a potential for under-prediction.

* The "Direct-Effect" (Plot 2), "Indirect-Effect" (Plot 3), and "Fair Additive Noise" (Plot 6) scenarios show a clear separation between the "FairPFN" and "Unfair" distributions, with "FairPFN" generally predicting higher causal effects for higher base causal effects.

* The "Fair Observable" (Plot 4) scenario shows the least difference between the "FairPFN" and "Unfair" distributions.

* The "Fair Unobservable" (Plot 5) scenario shows a moderate difference between the "FairPFN" and "Unfair" distributions.

### Interpretation

The plots show how the predicted causal effect behaves under different fairness settings. The "FairPFN" method appears to mitigate bias in the direct-effect, indirect-effect, and additive-noise scenarios by aligning the predicted causal effect more closely with the base causal effect. In contrast, the "Unfair" method shows more variability and a tendency toward under-prediction, particularly in the "Biased" scenario. The small gap between the two methods in the "Fair Observable" scenario may indicate that fairness is easier to achieve when the relevant variables are directly observable. The x-axis ranges differ across subplots, which suggests that the base causal effect distributions also differ between scenarios. Overall, the comparison supports using fairness-aware methods such as "FairPFN" when developing and deploying causal inference models.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Violin Plot Grid: Predicted vs. Base Causal Effect (ATE) for FairPFN and Unfair Models

### Overview

The image displays a 2x3 grid of six violin plots. Each subplot compares the distribution of Predicted Causal Effect (Average Treatment Effect - ATE) for two models, "FairPFN" (pink) and "Unfair" (blue), across different ranges of the underlying "Base Causal Effect (ATE)". The plots are designed to visualize how model predictions align with or deviate from the true causal effect under various data-generating scenarios.

### Components/Axes

* **Legend:** Located at the top center. It defines the two data series:

* **FairPFN:** Represented by pink violin plots.

* **Unfair:** Represented by blue violin plots.

* **Y-Axis (All Subplots):** Labeled "Pred. Causal Effect (ATE)". The scale ranges from approximately -0.2 to 1.0, with gridlines at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0 (varies slightly per subplot).

* **X-Axis (All Subplots):** Labeled "Base Causal Effect (ATE)". The axis is categorical, with each tick representing a specific range of the base effect (e.g., "-0.04-0.0", "0.0-0.02"). The specific ranges differ for each subplot.

* **Subplot Titles:** Each of the six panels has a numbered title indicating the experimental scenario:

1. **Biased** (Top Left)

2. **Direct-Effect** (Top Center)

3. **Indirect-Effect** (Top Right)

4. **Fair Observable** (Bottom Left)

5. **Fair Unobservable** (Bottom Center)

6. **Fair Additive Noise** (Bottom Right)
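In common plotting libraries, each violin's outline is a kernel density estimate (KDE) of the values in that category, mirrored about the category's center line. The sketch below computes a Gaussian KDE in pure Python, using Scott's rule for one-dimensional data (bandwidth = sample standard deviation × n^(-1/5)). The description does not state which library or bandwidth the original figure used, so both are assumptions. The sample values are synthetic, and `gaussian_kde` and `violin_outline` here are hypothetical helpers, not SciPy or matplotlib functions.

```python
import math
from statistics import stdev

def gaussian_kde(samples, grid):
    """Gaussian KDE of `samples` evaluated at each point of `grid`.

    Bandwidth follows Scott's rule for 1-D data: h = stdev * n ** (-1/5).
    Needs at least two distinct sample values (otherwise stdev is 0).
    """
    n = len(samples)
    h = stdev(samples) * n ** (-1 / 5)
    norm = 1.0 / (n * h * math.sqrt(2 * math.pi))
    return [norm * sum(math.exp(-0.5 * ((x - s) / h) ** 2) for s in samples)
            for x in grid]

def violin_outline(samples, n_points=50):
    """Return (y, density) pairs over the data range.

    A renderer scales density to the desired maximum width and mirrors it.
    """
    lo, hi = min(samples), max(samples)
    step = (hi - lo) / (n_points - 1)
    grid = [lo + i * step for i in range(n_points)]
    return list(zip(grid, gaussian_kde(samples, grid)))

# Synthetic predicted ATEs for one bin.
preds = [0.1, 0.3, 0.35, 0.4, 0.45, 0.5, 0.6, 0.9]
outline = violin_outline(preds)
widest_y, _ = max(outline, key=lambda p: p[1])
print(f"violin is widest near y = {widest_y:.2f}")
```

The widest point of a violin therefore marks where predictions are densest, not the median. This is why many violin styles draw the median separately.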

### Detailed Analysis

**General Trend Across All Plots:** For the "Unfair" model (blue), the predicted ATE distributions show a clear positive trend: as the Base Causal Effect (x-axis) increases, the median and spread of the predicted effects also increase. In contrast, the "FairPFN" model (pink) distributions remain tightly clustered around zero across all base effect ranges, showing little to no trend.

**Subplot-Specific Analysis:**

**1. Biased**

* **X-axis Ranges:** `-0.04-0.0`, `0.0-0.02`, `0.02-0.07`, `0.07-0.2`, `0.2-0.88`

* **FairPFN (Pink):** Distributions are narrow and centered near 0.0 for all ranges. The median is consistently at or very near zero.

* **Unfair (Blue):** Shows a strong positive trend. For the lowest base range (`-0.04-0.0`), the median is near 0.0. For the highest range (`0.2-0.88`), the distribution is very wide, with a median around 0.4 and values extending up to ~1.0.

**2. Direct-Effect**

* **X-axis Ranges:** `0.0-0.07`, `0.07-0.17`, `0.17-0.26`, `0.26-0.45`, `0.45-0.64`

* **FairPFN (Pink):** Remains centered near zero with low variance.

* **Unfair (Blue):** Positive trend is evident. The median prediction increases from ~0.0 for the first range to ~0.5 for the last range (`0.45-0.64`).

**3. Indirect-Effect**

* **X-axis Ranges:** `-0.01-0.01`, `0.01-0.04`, `0.04-0.11`, `0.11-0.25`, `0.25-0.83`

* **FairPFN (Pink):** Consistently near zero.

* **Unfair (Blue):** Positive trend. The final range (`0.25-0.83`) shows a very tall, wide distribution with a median near 0.5 and a long tail reaching above 0.8.

**4. Fair Observable**

* **X-axis Ranges:** `-0.01-0.05`, `0.05-0.11`, `0.11-0.23`, `0.23-0.41`, `0.41-0.79`

* **FairPFN (Pink):** Centered near zero.

* **Unfair (Blue):** Clear positive trend. The median for the highest range (`0.41-0.79`) is approximately 0.5.

**5. Fair Unobservable**

* **X-axis Ranges:** `0.0-0.07`, `0.07-0.14`, `0.14-0.24`, `0.24-0.39`, `0.39-0.72`

* **FairPFN (Pink):** Centered near zero.

* **Unfair (Blue):** Positive trend. The median for the highest range (`0.39-0.72`) is around 0.55.

**6. Fair Additive Noise**

* **X-axis Ranges:** `-0.01-0.05`, `0.05-0.11`, `0.11-0.2`, `0.2-0.38`, `0.38-0.79`

* **FairPFN (Pink):** Centered near zero.

* **Unfair (Blue):** Positive trend. The median for the highest range (`0.38-0.79`) is approximately 0.5.

### Key Observations

* **Model Dichotomy:** There is a stark and consistent contrast between the two models across all six scenarios. FairPFN's predicted effects are centered near zero, while the Unfair model's predicted effects are strongly correlated with the base effect.

* **Unfair Model Bias:** The Unfair model's predicted effect rises with the base effect. The medians reported above for the highest bins (roughly 0.4 to 0.55) fall within those bins' ranges, so the model largely reproduces the base effect in its predictions rather than exceeding it. Its predictions also show much greater variance (wider violins) for larger base effects.

* **FairPFN Stability:** The FairPFN model keeps its predictions near zero regardless of the underlying base effect or the specific fairness scenario (Biased, Direct-Effect, etc.).

* **Scenario Similarity:** The pattern of results is highly consistent across all six named scenarios (1-6). This suggests the observed model behaviors are robust to these different data-generating processes.
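The subplot-specific analysis above reports a median for each panel's highest base-ATE bin. Those medians can be used to check whether the Unfair model exceeds the base effect or mostly reproduces it. The numbers below are copied from that analysis and are approximate readings, not source data. The check is a simple, coarse interval test, and `median_within_bin` is a hypothetical helper.

```python
# (panel, (bin_low, bin_high), approximate Unfair median) from the text above.
top_bins = [
    ("1. Biased",              (0.20, 0.88), 0.40),
    ("2. Direct-Effect",       (0.45, 0.64), 0.50),
    ("3. Indirect-Effect",     (0.25, 0.83), 0.50),
    ("4. Fair Observable",     (0.41, 0.79), 0.50),
    ("5. Fair Unobservable",   (0.39, 0.72), 0.55),
    ("6. Fair Additive Noise", (0.38, 0.79), 0.50),
]

def median_within_bin(bin_range, median):
    """True if the reported median falls inside the base-ATE bin."""
    low, high = bin_range
    return low <= median <= high

for panel, bin_range, med in top_bins:
    mid = sum(bin_range) / 2
    print(f"{panel:24s} median={med:.2f} bin midpoint={mid:.3f} "
          f"inside bin: {median_within_bin(bin_range, med)}")
```

All six medians fall inside their bins. In panel 1, the median (~0.4) is below the bin midpoint (0.54). This fits a model that carries the base effect into its predictions better than one that systematically overshoots it.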

### Interpretation

This visualization provides strong evidence that the "FairPFN" method produces fair causal effect estimates. The "Unfair" model acts as a baseline that shows what a standard model does: its predicted effect grows with the magnitude of the base effect, so any effect present in the data is carried into its predictions.

The six scenarios likely represent different mechanisms by which bias can enter a model (e.g., through direct discrimination, indirect pathways, or unobserved confounders). The fact that FairPFN's performance is consistent across all of them indicates it successfully mitigates these diverse sources of bias. The core message is that FairPFN decouples its predictions from the biased base signal, yielding estimates that are, on average, zero (indicating no predicted treatment effect disparity), while the Unfair model's predictions are directly and problematically tied to the magnitude of the underlying effect. This is a critical property for any model intended for use in fairness-sensitive applications like policy evaluation or algorithmic decision-making.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Violin Plot Grid: Predicted vs. Base Causal Effects Across Fairness Scenarios

### Overview

The image displays a 2x3 grid of violin plots comparing predicted causal effects (y-axis) to base causal effects (x-axis) across six fairness scenarios. Each plot uses two colors: pink for "FairPFN" and blue for "Unfair," with medians marked by black lines and quartiles by black boxes. The x-axis ranges vary per plot, while the y-axis consistently spans -0.2 to 1.0.

---

### Components/Axes

- **Legend**: Top center, labeled "FairPFN" (pink) and "Unfair" (blue).

- **X-Axes**: Labeled "Base Causal Effect (ATE)" with scenario-specific ranges:

- 1. Biased: -0.04–0.88

- 2. Direct-Effect: 0.00–0.64

- 3. Indirect-Effect: -0.01–0.83

- 4. Fair Observable: -0.01–0.79

- 5. Fair Unobservable: 0.00–0.72

- 6. Fair Additive Noise: -0.01–0.79

- **Y-Axes**: Labeled "Pred. Causal Effect (ATE)" with uniform scale (-0.2 to 1.0).

- **Plot Titles**: Bold black text above each plot (e.g., "1. Biased," "6. Fair Additive Noise").
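The per-panel x-axis extents listed above make it possible to check the "(widest range)" remark on panel 1 arithmetically. Each axis's span is its upper end minus its lower end. The values are copied from the list, and nothing else is assumed.

```python
# Base-ATE axis extents per panel, as listed above.
extents = {
    "1. Biased": (-0.04, 0.88),
    "2. Direct-Effect": (0.00, 0.64),
    "3. Indirect-Effect": (-0.01, 0.83),
    "4. Fair Observable": (-0.01, 0.79),
    "5. Fair Unobservable": (0.00, 0.72),
    "6. Fair Additive Noise": (-0.01, 0.79),
}

# Span = upper end minus lower end; round to suppress float noise.
spans = {panel: round(hi - lo, 2) for panel, (lo, hi) in extents.items()}
widest = max(spans, key=spans.get)
print(spans)
print("widest:", widest)  # widest: 1. Biased
```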

---

### Detailed Analysis

#### 1. Biased

- **X-Axis**: -0.04–0.88 (widest range).

- **Y-Axis**: Distributions show significant overlap.

- **FairPFN (pink)**: Narrower spread, median ~0.0–0.2.

- **Unfair (blue)**: Broader spread, median ~0.2–0.4, with outliers up to 0.8.

- **Trend**: Unfair predictions exhibit higher variability and larger magnitudes.

#### 2. Direct-Effect

- **X-Axis**: 0.00–0.64.

- **Y-Axis**:

- **FairPFN**: Median ~0.1–0.3, compact distribution.

- **Unfair**: Median ~0.2–0.4, wider spread with peaks near 0.6.

- **Trend**: Unfair predictions align closer to higher base effects.

#### 3. Indirect-Effect

- **X-Axis**: -0.01–0.83.

- **Y-Axis**:

- **FairPFN**: Median ~0.0–0.2, tightly clustered.

- **Unfair**: Median ~0.3–0.5, extended spread to 0.8.

- **Trend**: Unfair predictions show stronger positive bias.

#### 4. Fair Observable

- **X-Axis**: -0.01–0.79.

- **Y-Axis**:

- **FairPFN**: Median ~0.0–0.2, minimal spread.

- **Unfair**: Median ~0.1–0.3, slightly wider distribution.

- **Trend**: Both groups cluster near zero, but Unfair shows marginal deviation.

#### 5. Fair Unobservable

- **X-Axis**: 0.00–0.72.

- **Y-Axis**:

- **FairPFN**: Median ~0.0–0.2, compact.

- **Unfair**: Median ~0.1–0.3, moderate spread.

- **Trend**: Similar to plot 4, but Unfair predictions show slightly higher central tendency.

#### 6. Fair Additive Noise

- **X-Axis**: -0.01–0.79.

- **Y-Axis**:

- **FairPFN**: Median ~0.0–0.2, narrow distribution.

- **Unfair**: Median ~0.2–0.4, extended spread to 0.8.

- **Trend**: Unfair predictions exhibit pronounced positive bias, especially at higher base effects.

---

### Key Observations

1. **Bias Amplification**: In the "Biased" and "Indirect-Effect" scenarios, Unfair predictions consistently show higher medians and wider spreads than FairPFN, suggesting that the Unfair model amplifies bias.

2. **Fairness Scenarios**:

- "Fair Observable" and "Fair Unobservable" plots show minimal divergence, indicating robustness in controlled fairness conditions.

- "Fair Additive Noise" reveals significant Unfair bias, implying sensitivity to noise injection.

3. **Outliers**: Unfair distributions in plots 1, 3, and 6 include extreme values (up to 0.8), absent in FairPFN.

---

### Interpretation

The data indicate that the fairness-aware model (FairPFN) generally produces more stable, less biased predictions across scenarios than the Unfair model. In particular:

- **Bias Amplification**: The Unfair model predicts larger effects in the biased and indirect-effect scenarios.

- **Noise Sensitivity**: The Unfair model deviates more in "Fair Additive Noise," suggesting sensitivity to the additive-noise setting.

- **Robustness**: FairPFN stays consistent in the observable and unobservable fairness conditions, which supports its design.

These trends underscore the importance of fairness constraints in causal modeling, particularly in high-stakes applications where biased predictions could exacerbate disparities.
