TECHNICAL ASSET FINGERPRINT

35f15756860d740ef859e963

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Chart: MRCR Cumulative Average Score vs. Number of Tokens in Context

### Overview

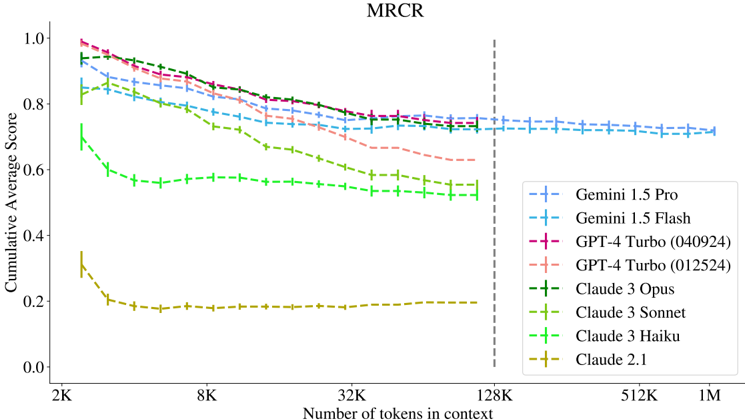

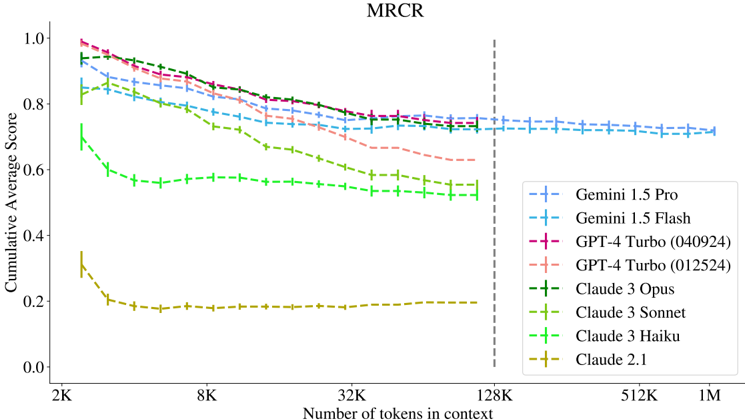

The image is a line chart comparing the cumulative average score of several language models (Gemini 1.5 Pro, Gemini 1.5 Flash, GPT-4 Turbo (040924), GPT-4 Turbo (012524), Claude 3 Opus, Claude 3 Sonnet, Claude 3 Haiku, and Claude 2.1) against the number of tokens in context. The x-axis represents the number of tokens in context, ranging from 2K to 1M. The y-axis represents the cumulative average score, ranging from 0.0 to 1.0. A vertical dashed line is present at 128K tokens.
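Because the y-axis is a cumulative average, each plotted point reflects every score up to that context length rather than the score at that length alone. A minimal sketch of such a running mean follows, assuming per-instance scores ordered from shortest to longest context. The scoring procedure itself is not described here, and `cumulative_average` and the sample scores are hypothetical.

```python
from itertools import accumulate


def cumulative_average(scores):
    """Return the running mean of `scores`, one value per prefix."""
    running_totals = accumulate(scores)
    return [total / count for count, total in enumerate(running_totals, start=1)]


# Hypothetical per-instance scores, ordered from shortest to longest context.
example_scores = [1.0, 0.5, 0.75, 0.25]
print(cumulative_average(example_scores))  # [1.0, 0.75, 0.75, 0.625]
```

A running mean changes slowly once many instances have accumulated, so a smooth curve on this kind of axis can mask sharper changes in the per-length score.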

### Components/Axes

* **Title:** MRCR

* **X-axis Title:** Number of tokens in context

* **X-axis Scale:** 2K, 8K, 32K, 128K, 512K, 1M (logarithmic scale)

* **Y-axis Title:** Cumulative Average Score

* **Y-axis Scale:** 0.0, 0.2, 0.4, 0.6, 0.8, 1.0

* **Legend (bottom-right):**

  * Blue: Gemini 1.5 Pro

  * Light Blue: Gemini 1.5 Flash

  * Dark Pink: GPT-4 Turbo (040924)

  * Light Pink: GPT-4 Turbo (012524)

  * Dark Green: Claude 3 Opus

  * Green: Claude 3 Sonnet

  * Light Green: Claude 3 Haiku

  * Olive/Yellow: Claude 2.1

* **Vertical Dashed Line:** Located at 128K tokens in context.

### Detailed Analysis

* **Gemini 1.5 Pro (Blue):** Starts at approximately 0.85 and decreases gradually to approximately 0.72 as the number of tokens increases.

* **Gemini 1.5 Flash (Light Blue):** Starts at approximately 0.95 and decreases gradually to approximately 0.72 as the number of tokens increases.

* **GPT-4 Turbo (040924) (Dark Pink):** Starts at approximately 0.97 and decreases gradually to approximately 0.75 as the number of tokens increases.

* **GPT-4 Turbo (012524) (Light Pink):** Starts at approximately 0.92 and decreases gradually to approximately 0.68 as the number of tokens increases.

* **Claude 3 Opus (Dark Green):** Starts at approximately 0.88 and decreases gradually to approximately 0.73 as the number of tokens increases.

* **Claude 3 Sonnet (Green):** Starts at approximately 0.75 and decreases gradually to approximately 0.55 as the number of tokens increases.

* **Claude 3 Haiku (Light Green):** Starts at approximately 0.70 and decreases gradually to approximately 0.57 as the number of tokens increases.

* **Claude 2.1 (Olive/Yellow):** Starts at approximately 0.35 and decreases rapidly to approximately 0.18, then remains relatively constant.

### Key Observations

* All models show a decrease in cumulative average score as the number of tokens in context increases; Claude 2.1 differs in that it drops rapidly and then stays roughly constant.

* GPT-4 Turbo (040924) and Gemini 1.5 Flash have the highest initial scores.

* Claude 2.1 has the lowest scores across all token counts.

* The performance of all models appears to stabilize beyond 128K tokens.

### Interpretation

The chart illustrates the performance of different language models as the context length increases. The general trend suggests that the cumulative average score decreases with longer context lengths, indicating a potential challenge in maintaining performance with more extensive input. Claude 2.1's significantly lower performance suggests it may not be as effective in this particular task compared to the other models. The vertical line at 128K tokens might indicate a significant threshold or a point where the models' performance stabilizes. The data suggests that while some models start with higher scores, the performance gap narrows as the context length increases, implying that the ability to handle long contexts effectively is a crucial factor in overall performance.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Line Chart: MRCR Performance

### Overview

This line chart depicts the Cumulative Average Score of several Large Language Models (LLMs) on a task labeled "MRCR" as a function of the number of tokens in context. The x-axis represents the number of tokens, ranging from 2K to 1M, while the y-axis represents the Cumulative Average Score, ranging from 0.0 to 1.0. The chart compares the performance of Gemini 1.5 Pro, Gemini 1.5 Flash, GPT-4 Turbo (two versions), Claude 3 Opus, Claude 3 Sonnet, Claude 3 Haiku, and Claude 2.1. A vertical dashed line is present at 128K tokens.

### Components/Axes

* **Title:** MRCR (top-center)

* **X-axis Label:** Number of tokens in context (bottom-center)

* **X-axis Markers:** 2K, 8K, 32K, 128K, 512K, 1M

* **Y-axis Label:** Cumulative Average Score (left-center)

* **Y-axis Scale:** 0.0 to 1.0

* **Legend:** Located in the top-right corner.

* **Legend Entries:**

  * Gemini 1.5 Pro (Blue, dashed line)

  * Gemini 1.5 Flash (Cyan, dashed line)

  * GPT-4 Turbo (040924) (Magenta, dashed line)

  * GPT-4 Turbo (012524) (Red, dashed line)

  * Claude 3 Opus (Green, dashed line)

  * Claude 3 Sonnet (Olive, dashed line)

  * Claude 3 Haiku (Light Green, dashed line)

  * Claude 2.1 (Orange, dashed line)

### Detailed Analysis

Each line's trend and approximate data points, with colors matched to the legend:

* **Gemini 1.5 Pro (Blue, dashed):** Starts at approximately 0.92 at 2K tokens, gradually decreases to around 0.74 at 128K tokens, and remains relatively stable around 0.72-0.75 up to 1M tokens.

* **Gemini 1.5 Flash (Cyan, dashed):** Begins at approximately 0.85 at 2K tokens, declines to around 0.70 at 128K tokens, and stabilizes around 0.68-0.72 up to 1M tokens.

* **GPT-4 Turbo (040924) (Magenta, dashed):** Starts at approximately 0.90 at 2K tokens, decreases to around 0.76 at 128K tokens, and remains relatively stable around 0.73-0.76 up to 1M tokens.

* **GPT-4 Turbo (012524) (Red, dashed):** Starts at approximately 0.88 at 2K tokens, declines to around 0.72 at 128K tokens, and stabilizes around 0.68-0.72 up to 1M tokens.

* **Claude 3 Opus (Green, dashed):** Starts at approximately 0.72 at 2K tokens, decreases to around 0.52 at 128K tokens, and stabilizes around 0.50-0.55 up to 1M tokens.

* **Claude 3 Sonnet (Olive, dashed):** Begins at approximately 0.65 at 2K tokens, declines to around 0.45 at 128K tokens, and stabilizes around 0.40-0.45 up to 1M tokens.

* **Claude 3 Haiku (Light Green, dashed):** Starts at approximately 0.60 at 2K tokens, decreases to around 0.40 at 128K tokens, and stabilizes around 0.35-0.40 up to 1M tokens.

* **Claude 2.1 (Orange, dashed):** Starts at approximately 0.22 at 2K tokens, decreases to around 0.18 at 128K tokens, and stabilizes around 0.16-0.20 up to 1M tokens.

### Key Observations

* All models exhibit a decreasing trend in Cumulative Average Score as the number of tokens in context increases, particularly up to 128K tokens.

* Gemini 1.5 Pro and GPT-4 Turbo models consistently achieve the highest scores across all token lengths.

* Claude 2.1 consistently performs the worst, with significantly lower scores than the other models.

* The rate of score decrease appears to slow down after 128K tokens for most models.

* The two versions of GPT-4 Turbo show slight differences in performance, with the 040924 version generally performing slightly better.

### Interpretation

The chart demonstrates the performance of various LLMs on the MRCR task as a function of context window size. The initial decline in performance with increasing tokens suggests that the models may struggle to effectively utilize information from very large contexts, potentially due to attention limitations or computational constraints. The stabilization of scores after 128K tokens could indicate that the models reach a point where adding more tokens provides diminishing returns or that they have developed mechanisms to handle larger contexts more efficiently.

The consistently superior performance of Gemini 1.5 Pro and GPT-4 Turbo suggests that these models are better equipped to handle long-context tasks than the Claude 3 models and Claude 2.1. The significant gap in performance between Claude 2.1 and the other models highlights the importance of model architecture and training data in achieving strong long-context capabilities. The vertical dashed line at 128K tokens may represent a significant architectural change or training cutoff point for some of the models, influencing the observed performance trends. Further investigation into the MRCR task itself would be needed to understand the specific challenges it presents and why certain models excel while others struggle.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart: MRCR - Cumulative Average Score vs. Number of Tokens in Context

### Overview

The image displays a line chart titled "MRCR" that plots the performance of eight different large language models (LLMs) as a function of their context window size. The chart illustrates how the "Cumulative Average Score" (y-axis) changes as the "Number of tokens in context" (x-axis) increases from 2,000 (2K) to 1,000,000 (1M) tokens. The general trend for most models is a decline in score as context length increases, though the rate and severity of decline vary significantly between models.

### Components/Axes

* **Title:** "MRCR" (centered at the top).

* **Y-Axis:**

  * **Label:** "Cumulative Average Score".

  * **Scale:** Linear, ranging from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:**

  * **Label:** "Number of tokens in context".

  * **Scale:** Logarithmic (base 2), with labeled tick marks at 2K, 8K, 32K, 128K, 512K, and 1M.

* **Legend:** Positioned in the bottom-right quadrant of the chart area. It contains eight entries, each with a unique color; all series share the same dashed line style and '+' marker symbol.

* **Reference Line:** A vertical dashed gray line is drawn at the 128K token mark on the x-axis.
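The spacing of the labeled x-axis ticks on a base-2 logarithmic scale can be checked with a short sketch. It assumes K means 1,000 and M means 1,000,000, which the chart does not confirm, and the names `TICKS` and `doublings_between` are hypothetical.

```python
import math

# Labeled x-axis ticks, assuming K = 1,000 and M = 1,000,000.
TICKS = [
    ("2K", 2_000),
    ("8K", 8_000),
    ("32K", 32_000),
    ("128K", 128_000),
    ("512K", 512_000),
    ("1M", 1_000_000),
]


def doublings_between(ticks):
    """Return the log2 distance between each pair of consecutive ticks."""
    return [
        math.log2(later / earlier)
        for (_, earlier), (_, later) in zip(ticks, ticks[1:])
    ]


for (label_a, _), (label_b, _), step in zip(TICKS, TICKS[1:], doublings_between(TICKS)):
    print(f"{label_a} -> {label_b}: {step:.2f} doublings")
```

Under that assumption the first four gaps are exactly two doublings each, while 512K to 1M is just under one, so the last labeled interval occupies roughly half the horizontal distance of the others.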

### Detailed Analysis

**Legend and Data Series (roughly from top to bottom at the 2K token starting point):**

1. **GPT-4 Turbo (040924):** Dark magenta/purple line with '+' markers. Starts highest at ~0.99 (2K). Shows a steady, moderate decline to ~0.75 at 128K. Data ends at 128K.

2. **Claude 3 Opus:** Dark green line with '+' markers. Starts at ~0.97 (2K). Declines steadily, closely following but slightly below GPT-4 Turbo (040924), reaching ~0.73 at 128K. Data ends at 128K.

3. **Gemini 1.5 Pro:** Blue line with '+' markers. Starts at ~0.93 (2K). Declines gradually and linearly, crossing above the GPT-4 Turbo (012524) line around 32K. Reaches ~0.72 at 128K and continues a very slow decline to ~0.71 at 1M.

4. **GPT-4 Turbo (012524):** Light salmon/pink line with '+' markers. Starts at ~0.98 (2K). Declines more steeply than its 040924 counterpart, dropping to ~0.67 at 128K. Data ends at 128K.

5. **Gemini 1.5 Flash:** Light blue/cyan line with '+' markers. Starts at ~0.85 (2K). Declines at a moderate, steady rate, reaching ~0.71 at 128K and ~0.70 at 1M.

6. **Claude 3 Sonnet:** Light olive/yellow-green line with '+' markers. Starts at ~0.83 (2K). Shows a pronounced decline, dropping to ~0.58 at 128K. Data ends at 128K.

7. **Claude 3 Haiku:** Bright green line with '+' markers. Starts at ~0.70 (2K). Experiences a sharp initial drop to ~0.57 by 8K, then declines more slowly to ~0.52 at 128K. Data ends at 128K.

8. **Claude 2.1:** Olive/brown line with '+' markers. Starts significantly lower than all others at ~0.33 (2K). Drops quickly to ~0.19 by 8K and then remains nearly flat, plateauing around 0.19-0.20 through 128K. Data ends at 128K.
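The approximate readings in the list above can be turned into a per-model drop between 2K and 128K tokens. This is only a sketch over eyeballed values: the numbers are the approximate figures quoted above, not measured data, Claude 2.1 uses 0.19 from its stated 0.19-0.20 plateau, and the names `READINGS` and `score_drops` are hypothetical.

```python
# (score at 2K, score at 128K), copied from the approximate readings above.
READINGS = {
    "GPT-4 Turbo (040924)": (0.99, 0.75),
    "Claude 3 Opus": (0.97, 0.73),
    "Gemini 1.5 Pro": (0.93, 0.72),
    "GPT-4 Turbo (012524)": (0.98, 0.67),
    "Gemini 1.5 Flash": (0.85, 0.71),
    "Claude 3 Sonnet": (0.83, 0.58),
    "Claude 3 Haiku": (0.70, 0.52),
    "Claude 2.1": (0.33, 0.19),  # assumption: lower end of the 0.19-0.20 plateau
}


def score_drops(readings):
    """Return the absolute score drop from 2K to 128K for each model."""
    return {model: round(start - end, 2) for model, (start, end) in readings.items()}


drops = score_drops(READINGS)
# List models from the largest drop to the smallest.
for model, drop in sorted(drops.items(), key=lambda item: item[1], reverse=True):
    print(f"{model}: {drop:.2f}")
```

By these readings the largest absolute drop belongs to GPT-4 Turbo (012524), and the smallest drops belong to Gemini 1.5 Flash and Claude 2.1, the latter because it starts so low.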

**Spatial Grounding & Trend Verification:**

* The legend is placed in the bottom-right, overlapping the lower portion of the chart but not obscuring critical data points for the top-performing models.

* **Trend Check:** All lines slope downward or remain flat as they move right (increasing tokens). No line shows an upward trend. The steepness of the slope correlates with the severity of performance degradation with longer contexts.
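The trend check can be expressed as a test that each series never increases from one reading to the next. The sketch below uses a few of the approximate readings quoted above; the helper `is_non_increasing` and its `tolerance` parameter are hypothetical, with the tolerance intended to absorb small errors from reading values off the chart.

```python
def is_non_increasing(values, tolerance=0.0):
    """Return True if no value exceeds its predecessor by more than `tolerance`."""
    return all(
        later <= earlier + tolerance
        for earlier, later in zip(values, values[1:])
    )


# Approximate readings in order of increasing context length.
SERIES = {
    "Gemini 1.5 Pro": [0.93, 0.72, 0.71],    # 2K, 128K, 1M
    "Gemini 1.5 Flash": [0.85, 0.71, 0.70],  # 2K, 128K, 1M
    "Claude 3 Haiku": [0.70, 0.57, 0.52],    # 2K, 8K, 128K
    "Claude 2.1": [0.33, 0.19, 0.19],        # 2K, 8K, 128K (plateau taken as 0.19)
}

for model, values in SERIES.items():
    print(model, is_non_increasing(values))
```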

### Key Observations

1. **Performance Hierarchy at Short Context (2K):** There is a clear tiered structure. GPT-4 Turbo (040924), GPT-4 Turbo (012524), and Claude 3 Opus lead (~0.97-0.99), followed by Gemini 1.5 Pro (~0.93), then Gemini 1.5 Flash and Claude 3 Sonnet (~0.83-0.85), then Claude 3 Haiku (~0.70), with Claude 2.1 as a significant outlier at the bottom (~0.33).

2. **Degradation Patterns:**

   * **Most Resilient:** Gemini 1.5 Pro and Gemini 1.5 Flash show the most gradual, linear declines and are the only models with data extending to 1M tokens, maintaining scores at or above 0.70.

   * **Moderate Decline:** GPT-4 Turbo (040924) and Claude 3 Opus decline steadily but remain the top performers up to 128K.

   * **Steep Decline:** GPT-4 Turbo (012524), Claude 3 Sonnet, and Claude 3 Haiku show steeper drops in performance as context grows.

   * **Flatline Low Performance:** Claude 2.1 starts very low and shows almost no change after an initial drop, indicating a possible performance floor.

3. **The 128K Benchmark:** The vertical dashed line at 128K tokens marks the last data point for six of the eight models (all except the two Gemini models, which extend further), making it the longest context at which all eight can be compared. At this point, the top cluster (GPT-4 Turbo 040924, Claude 3 Opus, Gemini 1.5 Pro) is tightly grouped between ~0.72-0.75.

4. **Model Version Impact:** The two GPT-4 Turbo versions (040924 vs. 012524) show a notable performance gap, with the 040924 version consistently outperforming the 012524 version across all context lengths, suggesting significant updates between versions.
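One way to quantify how gradual a decline is on a logarithmic x-axis is the score change per doubling of context. The sketch below applies this to the approximate Gemini 1.5 Pro readings quoted above (~0.93 at 2K, ~0.72 at 128K, ~0.71 at 1M). It assumes K means 1,000 and M means 1,000,000, and `slope_per_doubling` is a hypothetical helper.

```python
import math


def slope_per_doubling(tokens_a, score_a, tokens_b, score_b):
    """Return the score change per doubling of context between two readings."""
    return (score_b - score_a) / math.log2(tokens_b / tokens_a)


# Approximate Gemini 1.5 Pro readings; token counts assume K = 1,000.
before_128k = slope_per_doubling(2_000, 0.93, 128_000, 0.72)
after_128k = slope_per_doubling(128_000, 0.72, 1_000_000, 0.71)

print(f"2K to 128K: {before_128k:.4f} per doubling")
print(f"128K to 1M: {after_128k:.4f} per doubling")
```

With these readings the decline per doubling is roughly ten times smaller beyond 128K than before it, although the difference rests on values estimated by eye.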

### Interpretation

This chart benchmarks the long-context retrieval capability of major LLMs, in the spirit of "needle-in-a-haystack" evaluations, across vastly different context lengths, as measured on MRCR (Multi-Round Co-reference Resolution). The data suggests a fundamental trade-off: **maintaining high accuracy on specific information retrieval tasks becomes harder as the volume of information in the context window increases.**

* **Technological Differentiation:** The Gemini 1.5 models' ability to maintain performance out to 1M tokens, albeit with a decline, highlights a potential architectural or training advantage for extremely long-context tasks. The tight clustering of top models at 128K suggests convergence in performance for "standard" long-context windows among leading models.

* **Practical Implications:** For applications requiring high precision retrieval from very long documents (e.g., legal contract analysis, comprehensive codebase review), the choice of model and context length is critical. Using a model like Claude 3 Haiku or an older version like Claude 2.1 with a long context would likely yield poor results. The steep decline of some models indicates they may "lose their place" or fail to attend to relevant information amidst distractors as context grows.

* **Anomaly:** Claude 2.1's performance is a stark outlier, suggesting it either was not designed for this type of task, represents a much earlier stage of long-context capability, or the metric is particularly unsuited to its architecture. Its flat line indicates it fails consistently regardless of context length beyond a very low threshold.

* **Underlying Question:** The chart prompts investigation into *why* performance degrades. Is it a limitation of attention mechanisms, a training data artifact, or an inherent challenge of information density? The variance between model families (Gemini, GPT, Claude) and within families (Claude 3 Opus vs. Haiku, GPT-4 Turbo versions) provides rich data for such analysis.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graph: MRCR Performance Across Models

### Overview

The image is a line graph titled "MRCR" (Multi-Round Co-reference Resolution) comparing the performance of various AI models across different context lengths. The x-axis represents the number of tokens in context (ranging from 2K to 1M), and the y-axis shows the Cumulative Average Score (0.0 to 1.0). Multiple data series are plotted, each corresponding to a specific model and configuration.

### Components/Axes

- **Title**: "MRCR"

- **X-Axis**: "Number of tokens in context" (logarithmic scale: 2K, 8K, 32K, 128K, 512K, 1M)

- **Y-Axis**: "Cumulative Average Score" (linear scale: 0.0 to 1.0 in increments of 0.2)

- **Legend**: Located on the right, with color-coded labels for each model:

  - Gemini 1.5 Pro (dashed blue)

  - Gemini 1.5 Flash (solid blue)

  - GPT-4 Turbo (040924) (solid red)

  - GPT-4 Turbo (012524) (dashed red)

  - Claude 3 Opus (solid green)

  - Claude 3 Sonnet (dashed green)

  - Claude 3 Haiku (solid cyan)

  - Claude 2.1 (dashed yellow)

- **Vertical Dashed Line**: At 128K tokens, marking a threshold.

### Detailed Analysis

1. **Gemini 1.5 Pro** (dashed blue): Starts at ~0.95 (2K tokens), declines gradually to ~0.75 (1M tokens).

2. **Gemini 1.5 Flash** (solid blue): Starts at ~0.85, declines to ~0.7 (1M tokens).

3. **GPT-4 Turbo (040924)** (solid red): Starts at ~0.95, drops sharply to ~0.7 (1M tokens).

4. **GPT-4 Turbo (012524)** (dashed red): Starts at ~0.85, declines to ~0.65 (1M tokens).

5. **Claude 3 Opus** (solid green): Starts at ~0.9, declines to ~0.6 (1M tokens).

6. **Claude 3 Sonnet** (dashed green): Starts at ~0.8, declines to ~0.55 (1M tokens).

7. **Claude 3 Haiku** (solid cyan): Starts at ~0.7, declines to ~0.5 (1M tokens).

8. **Claude 2.1** (dashed yellow): Starts at ~0.3, drops sharply to ~0.2 (8K tokens), then plateaus.

### Key Observations

- **Performance Decline**: All models show a general decline in MRCR score as context length increases, with steeper drops for shorter-context models (e.g., Claude 2.1).

- **Model Hierarchy**: Gemini 1.5 Pro and GPT-4 Turbo (040924) maintain the highest scores across all context lengths.

- **Claude 2.1 Anomaly**: Sharp initial drop to ~0.2 (8K tokens) and flat performance thereafter, suggesting poor scalability.

- **Threshold at 128K**: The vertical dashed line may indicate a critical context length where performance stabilizes or degrades significantly.

### Interpretation

The data demonstrates that **longer context lengths correlate with reduced MRCR scores**, likely due to increased computational complexity or model limitations in handling extended inputs. Gemini and GPT-4 Turbo models outperform Claude variants, except Claude 3 Opus, which remains competitive. Claude 2.1’s abrupt decline highlights its inefficiency with longer contexts. The threshold at 128K tokens suggests a potential inflection point where models either stabilize or face significant challenges. This graph underscores the trade-off between context length and performance in large language models, with implications for applications requiring extensive input processing.
