## Line Chart: Accuracy vs. Training Samples for Different Loops

### Overview

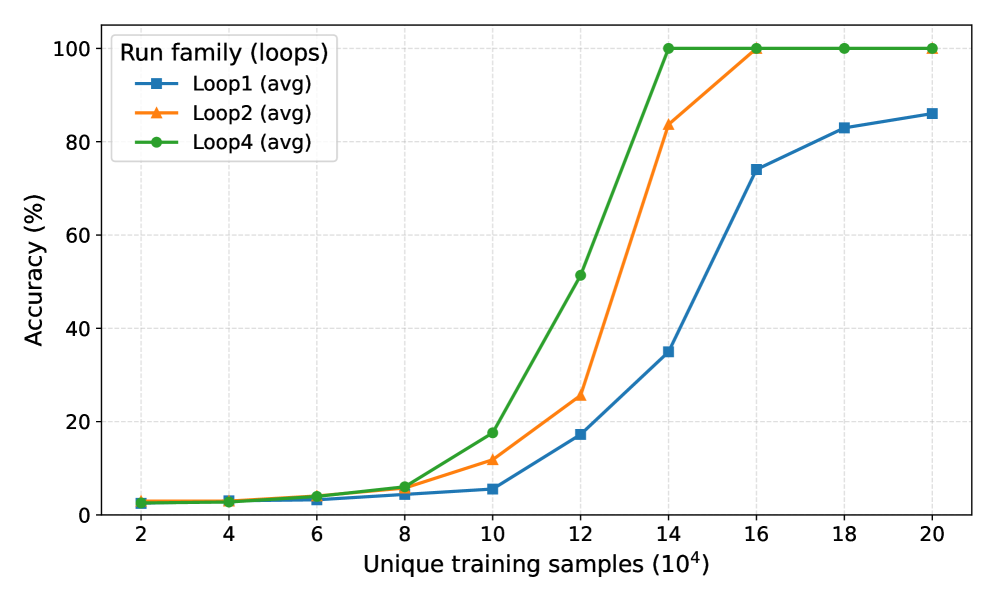

This line chart shows how accuracy (y-axis) changes with the number of unique training samples (x-axis) for four "loops": Loop1, Loop2, Loop3, and Loop4. Each legend entry is labeled "(avg)", so each line appears to show the average accuracy across runs of that loop at each training-set size.

### Components/Axes

* **X-axis Title:** "Unique training samples (10⁴)" - Scale ranges from approximately 2 to 20 (in units of 10,000).

* **Y-axis Title:** "Accuracy (%)" - Scale ranges from 0 to 100.

* **Legend Title:** "Run family (loops)"

* **Data Series:**

  * Loop1 (avg): blue line with square markers.

  * Loop2 (avg): orange line with circular markers.

  * Loop3 (avg): light-green line with triangular markers.

  * Loop4 (avg): dark-green line with diamond markers.

* **Legend Position:** Top-left corner of the chart.

### Detailed Analysis

* **Loop1 (avg):** The blue line starts at approximately 2% accuracy at 20,000 training samples. It rises slowly to around 18% at 120,000 samples, then climbs sharply to approximately 78% at 180,000 samples, and levels off at approximately 82% at 200,000 samples, the last point shown.

* **Loop2 (avg):** The orange line begins at approximately 4% accuracy at 20,000 training samples. It rises steadily to around 22% at 120,000 samples, then jumps to approximately 98% at 140,000 samples and holds at approximately 100% for the remaining data points.

* **Loop3 (avg):** The light-green line starts at approximately 3% accuracy at 20,000 training samples. It rises gradually to around 8% at 100,000 samples, then climbs sharply to approximately 98% at 140,000 samples and holds at approximately 100% for the remaining data points.

* **Loop4 (avg):** The dark-green line begins at approximately 5% accuracy at 20,000 training samples. It rises steadily to around 10% at 100,000 samples, then jumps to approximately 100% at 120,000 samples and stays there for the remaining data points.

### Key Observations

* Loop2, Loop3, and Loop4 reach near-perfect accuracy (approximately 98% to 100%) by 140,000 training samples; Loop4 gets there earliest, at about 120,000.

* Loop1 never reaches a comparable level: it is at roughly 78% at 180,000 samples and about 82% at 200,000, the largest training set shown.

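* All four curves follow the same pattern: a slow rise at small training-set sizes followed by a sharp jump. What differs is where the jump occurs (roughly 100,000 to 120,000 samples for Loop4, 120,000 to 140,000 for Loop2, 100,000 to 140,000 for Loop3, and 120,000 to 180,000 for Loop1) and how steep it is.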

* Loop2, Loop3, and Loop4 show a steeper increase in accuracy than Loop1, especially after 100,000 training samples.
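The key observations can be cross-checked against the approximate readings listed in the Detailed Analysis. The following is a minimal Python sketch, assuming those eyeballed values (not the underlying data) and assuming that the "holds at approximately 100%" segments extend to 200,000 samples. The names `READINGS`, `first_reaching`, and `steepest_jump` are hypothetical. For each loop, it reports the smallest listed training-set size at which accuracy is at least 90% and the steepest segment between consecutive readings.

```python
# Approximate (training samples, accuracy %) readings from the Detailed Analysis.
# ASSUMPTION: these are values read off the chart, not the underlying data, and the
# 200,000-sample points for Loops 2-4 assume the ~100% plateau lasts to the last tick.
READINGS = {
    "Loop1": [(20_000, 2), (120_000, 18), (180_000, 78), (200_000, 82)],
    "Loop2": [(20_000, 4), (120_000, 22), (140_000, 98), (200_000, 100)],
    "Loop3": [(20_000, 3), (100_000, 8), (140_000, 98), (200_000, 100)],
    "Loop4": [(20_000, 5), (100_000, 10), (120_000, 100), (200_000, 100)],
}


def first_reaching(points, threshold):
    """Return the smallest listed sample count with accuracy >= threshold, or None."""
    for samples, acc in sorted(points):
        if acc >= threshold:
            return samples
    return None


def steepest_jump(points):
    """Return (start, end, slope) for the steepest segment between consecutive readings.

    The slope is in accuracy points per 10,000 samples, averaged over the gap
    between two readings, so sparse readings understate any sharper local jump.
    """
    pts = sorted(points)
    best = None
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        slope = (y1 - y0) / ((x1 - x0) / 10_000)
        if best is None or slope > best[2]:
            best = (x0, x1, slope)
    return best


if __name__ == "__main__":
    for loop, pts in READINGS.items():
        reach = first_reaching(pts, 90)
        x0, x1, slope = steepest_jump(pts)
        print(f"{loop}: >=90% at {reach}, steepest {x0}-{x1} ({slope:.1f} pts per 10k)")
```

With these readings, Loop4 crosses 90% first (120,000 samples), Loops 2 and 3 cross at 140,000, and Loop1 does not cross at all. Because the readings are sparse, each slope is an average over the gap between two readings, so the actual jump may be steeper than reported.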

### Interpretation

The data suggests that performance depends strongly on both the loop used and the amount of training data. Loops 2, 3, and 4 learn faster than Loop1 and reach higher accuracy with fewer training samples, which could indicate that they are more sample-efficient or better suited to the task.

Because accuracy cannot exceed 100%, the plateaus for Loops 2, 3, and 4 indicate that the task is effectively solved at these training-set sizes under the current configuration. Loop1's slower learning curve and lower final accuracy might stem from differences in its algorithm, hyperparameters, or data preprocessing, but the chart alone cannot distinguish between these causes. Since Loop1 is still rising at the last point shown, it may also improve with more data. Further investigation is needed to explain the differences between the loops.

Finally, the y-axis label "Accuracy (%)" suggests a classification task, where accuracy is the percentage of correctly classified instances.
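For reference, under the usual definition (an assumption, since the chart does not define its metric), accuracy expressed as a percentage is:

$$
\text{Accuracy}\ (\%) = 100 \times \frac{\text{number of correctly classified examples}}{\text{total number of evaluated examples}}
$$

For example, an accuracy of 82% corresponds to 82 correct predictions for every 100 evaluated examples. The fraction is at most 1, so the metric is bounded at 100%. This bound is why a curve that reaches 100% can only stay flat.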