
## Diagram: Single Chain of Thought vs. Thinking Process - Problem Solving

### Overview

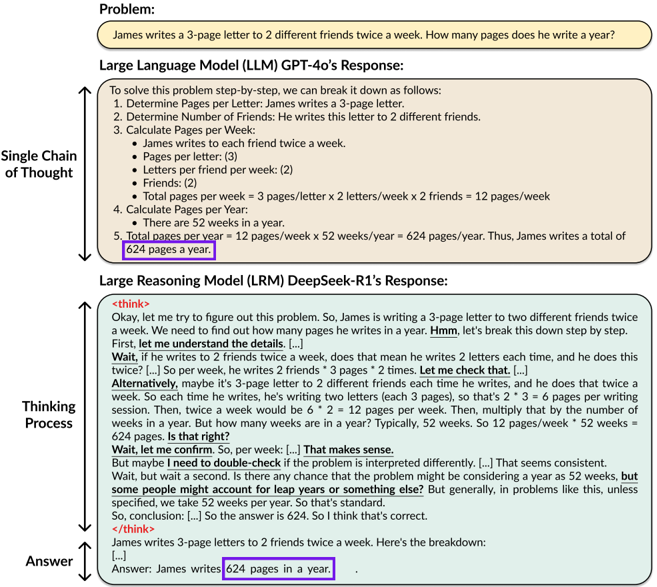

The image compares how a Large Language Model (LLM), GPT-4o, and a Large Reasoning Model (LRM), DeepSeek-R1, respond to a simple word problem: "James writes a 3-page letter to 2 different friends twice a week. How many pages does he write a year?" The diagram shows each model's step-by-step reasoning side by side. The LLM follows a structured, numbered approach, while the LRM works through a more conversational, iterative thought process.

### Components/Axes

The diagram is divided into two main sections, one for each model. Each section contains:

* **Title:** Indicating the model type (LLM or LRM).

* **Sub-title:** Describing the approach (Single Chain of Thought or Thinking Process).

* **Problem Statement:** The original word problem.

* **Response:** The model's step-by-step solution.

* **Visual Cues:** Highlighting (yellow) of the final answer.

### Detailed Analysis / Content Details

**LLM (GPT-4o) - Single Chain of Thought:**

1. **Determine Pages per Letter:** James writes a 3-page letter.

2. **Determine Number of Friends:** He writes this letter to 2 different friends.

3. **Calculate Pages per Week:**

   * James writes to each friend twice a week.

   * Pages per letter: 3

   * Letters per friend per week: 2

   * Friends: 2

   * Total pages per week = 3 pages/letter × 2 letters/week × 2 friends = 12 pages/week

4. **Calculate Pages per Year:**

   * There are 52 weeks in a year.

   * Total pages per year = 12 pages/week × 52 weeks/year = 624 pages/year.

5. **Final Answer:** Highlighted in yellow: **624 pages a year.**
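The numbered steps above amount to a single product. A minimal sketch in Python follows; the function and constant names are illustrative and do not come from the diagram, and the 52-week year is the assumption stated in step 4.

```python
# Quantities taken from the word problem; names are hypothetical.
PAGES_PER_LETTER = 3
FRIENDS = 2
LETTERS_PER_FRIEND_PER_WEEK = 2
WEEKS_PER_YEAR = 52  # assumption used in the diagram


def pages_per_year(pages_per_letter, friends, letters_per_friend_per_week, weeks_per_year):
    """Multiply out the steps: pages per week first, then pages per year."""
    pages_per_week = pages_per_letter * letters_per_friend_per_week * friends
    return pages_per_week * weeks_per_year


total_pages = pages_per_year(
    PAGES_PER_LETTER, FRIENDS, LETTERS_PER_FRIEND_PER_WEEK, WEEKS_PER_YEAR
)
print(total_pages)  # 624
```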

**LRM (DeepSeek-R1) - Thinking Process:**

The LRM's response is more conversational and includes internal monologue-like statements (indicated by `<think>`).

* Initial thought: "Okay, let me try to figure out this problem. So, James is writing a 3-page letter to two different friends twice a week. We need to find out how many pages he writes in a year. Hmm, let’s break this down step by step."

* First step: "Wait, if he writes to 2 friends twice a week, does that mean he writes 2 letters each time, and he does this twice? [...] So per week, he writes 2 friends * 3 pages * 2 times. Let me check that. [...]"

* Alternative calculation: "Alternatively, maybe it’s 3-page letter to 2 different friends each time he writes, and he does that twice a week. So each time he’s writing two letters (each 3 pages), so that’s 2 * 3 = 6 pages per writing session. Then, twice a week would be 6 * 2 = 12 pages per week. Then, multiply that by the number of weeks in a year. But how many weeks are in a year? Typically, 52 weeks. So 12 pages/week * 52 weeks = 624 pages. Is that right?"

* Confirmation: "Wait, let me confirm. So, per week: [...] That makes sense."

* Double-check: "But maybe I need to double-check if the problem is interpreted differently. [...] That seems consistent. But, wait, but it seemed inconsistent if the problem is interpreted differently considering a year as 52 weeks, but some people might account for leap years, so the answer would be 52.14 weeks. But, in this case, unless specified, we’ll stick to 52 weeks. So that makes sense."

* Conclusion: "So, conclusion: James writes 624 pages a year. That’s correct."

* Final Answer: Highlighted in yellow: **624 pages a year.**
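The checks in the LRM's trace can be reproduced as a short sketch, assuming the same quantities as the problem statement. It confirms that the two groupings the model considers give the same weekly total, and shows how much the yearly figure moves if a year is taken as 365/7 weeks (about 52.14, the value mentioned in the trace) instead of 52. Variable names are illustrative only.

```python
pages_per_letter = 3
friends = 2
sessions_per_week = 2

# Grouping 1: friends * pages * times per week.
weekly_direct = friends * pages_per_letter * sessions_per_week

# Grouping 2: pages per writing session, then sessions per week.
pages_per_session = friends * pages_per_letter
weekly_by_session = pages_per_session * sessions_per_week

# Yearly totals under the two week counts discussed in the trace.
yearly_52_weeks = weekly_direct * 52
yearly_365_days = weekly_direct * 365 / 7  # 365/7 is roughly 52.14 weeks

print(weekly_direct, weekly_by_session)  # 12 12
print(yearly_52_weeks)                   # 624
print(round(yearly_365_days, 2))         # 625.71
```

The gap between the two yearly figures is under two pages, which is consistent with the model's decision to stay with 52 weeks unless told otherwise.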

### Key Observations

* Both models arrive at the same correct answer: 624 pages per year.

* The LLM (GPT-4o) presents a more structured and concise solution, breaking down the problem into numbered steps.

* The LRM (DeepSeek-R1) shows a more iterative, self-questioning thought process with internal checks and clarifications. It also considers alternative readings of the problem statement and questions the assumed length of a year (52 weeks versus roughly 52.14).

* The LRM's response is significantly more verbose and conversational.

* The diagram highlights each model's final answer in yellow.

### Interpretation

The diagram illustrates how two types of language model approach the same problem. The LLM exemplifies a "single chain of thought": it applies one sequence of logical steps and goes straight to the solution. The LRM instead shows a "thinking process" that involves self-reflection, consideration of alternative interpretations, and internal validation.

The LRM's trace makes its verification explicit: it tries to resolve ambiguities and re-checks its result before committing to an answer. Both models reach the correct answer, but the LRM's process is closer to how a human might approach the same problem, which may make it more robust and adaptable in complex scenarios. The `<think>` statements expose the model's intermediate reasoning, which is not typically visible in the output of a standard LLM. The diagram reflects ongoing development in AI toward more human-like reasoning capabilities.