## Diagram: Comparison of AI Model Responses to a Math Word Problem

### Overview

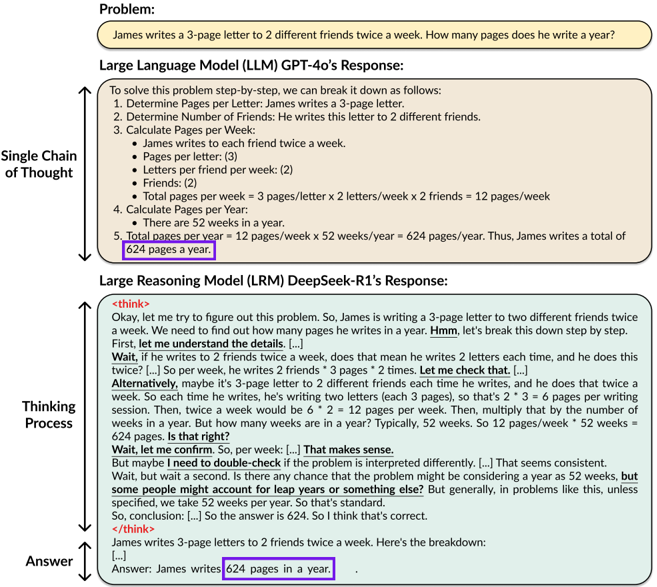

The image is a technical diagram comparing the problem-solving outputs of two different AI models: a "Large Language Model (LLM) GPT-4o" and a "Large Reasoning Model (LRM) DeepSeek-R1." It visually contrasts their reasoning processes and final answers to the same arithmetic word problem. The diagram is structured vertically, with the problem at the top, followed by the two model responses below, each annotated with labels describing their reasoning style.

### Components/Axes

The diagram is segmented into three primary horizontal regions:

1. **Header (Problem Statement):** A rounded rectangle at the top containing the problem text.

2. **Middle Section (GPT-4o Response):** A large rounded rectangle labeled "Large Language Model (LLM) GPT-4o's Response:" containing a numbered list. To its left, a vertical double-headed arrow is labeled "Single Chain of Thought."

3. **Bottom Section (DeepSeek-R1 Response):** A large rounded rectangle labeled "Large Reasoning Model (LRM) DeepSeek-R1's Response:" containing text within `<think>` tags.

* **Thinking Process:** The content within the `<think>` block is a verbose, step-by-step internal monologue. It includes calculations, self-correction, and verification steps (e.g., "Wait, let me check that...").

* **Final Answer:** Following the `<think>` block, a concise final answer is presented:

```

James writes 3-page letters to 2 friends twice a week. Here's the breakdown:

[...].

Answer: James writes **624 pages in a year**.

```

* **Highlighted Answer:** The phrase "624 pages in a year" is enclosed in a purple box.

* **Note:** The `[...]` notations indicate where text has been truncated or summarized in the diagram for brevity. The full thinking process is longer and more discursive than the GPT-4o response.

### Key Observations

1. **Identical Final Answer:** Both models arrive at the same correct numerical answer: 624 pages per year.

2. **Divergent Reasoning Presentation:**

* **GPT-4o:** Employs a concise, linear, and structured "Single Chain of Thought." It presents a clean, numbered list of steps without internal debate or alternative interpretations.

* **DeepSeek-R1:** Uses an extended, conversational "Thinking Process" encapsulated in `<think>` tags. This process includes self-questioning ("Wait, let me check that"), consideration of alternative interpretations, verification of assumptions (e.g., 52 weeks in a year), and explicit confirmation steps before producing a final, concise answer.

3. **Visual Emphasis:** The use of identical purple highlight boxes on the final answers draws a direct comparison, emphasizing that despite different processes, the outcome is the same.

4. **Structural Labels:** The diagram explicitly labels the reasoning styles ("Single Chain of Thought" vs. "Thinking Process" / "Answer"), framing the comparison around methodology rather than just result.

### Interpretation

This diagram serves as a technical comparison of AI reasoning architectures or prompting strategies. It demonstrates that different models can solve the same problem correctly but through markedly different cognitive pathways.

* **GPT-4o's approach** exemplifies a direct, optimized, and efficient problem-solving chain. It mirrors a traditional, textbook-style solution where steps are presented in their logical order without digression. This suggests a model trained or prompted to produce streamlined, user-friendly explanations.

* **DeepSeek-R1's approach** mimics human-like exploratory reasoning. The inclusion of hesitation, self-correction, and consideration of edge cases (like leap years) within the `<think>` block suggests a model designed to expose its "thought process" for transparency, debugging, or to handle more ambiguous problems where the first interpretation might be wrong. The separation of the internal "Thinking Process" from the final "Answer" is a key architectural feature highlighted here.

The underlying message is that **correctness can be achieved through different forms of intelligence.** One model prioritizes clarity and conciseness in its output, while the other prioritizes demonstrable rigor and self-verification in its process. For a technical audience, this comparison is valuable for understanding model behavior, selecting a model for a specific task (e.g., tutoring vs. complex reasoning), and designing prompts that elicit the desired type of response. The diagram argues that the "how" of AI reasoning is as important to analyze as the "what" of its final answer.