## Line Chart: Math Problem Accuracy Comparison

### Overview

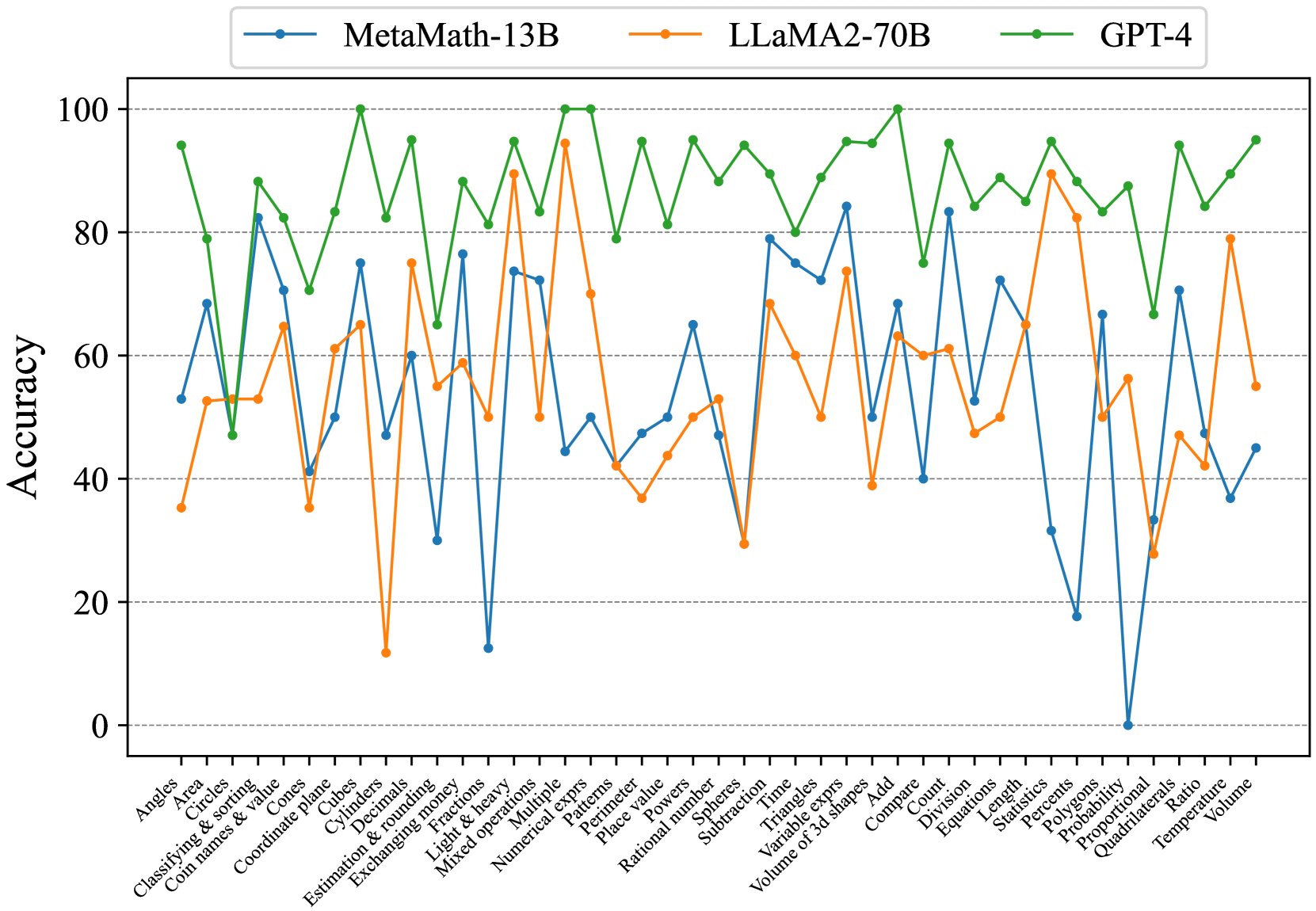

This line chart compares the accuracy of three large language models – MetaMath-13B, LLaMA2-70B, and GPT-4 – on a variety of math problem types. The x-axis represents different math topics, and the y-axis represents the accuracy percentage, ranging from 0 to 100. The chart displays the performance of each model as a line plotted against these topics.

### Components/Axes

* **X-axis Title:** Math Problem Types

* **Y-axis Title:** Accuracy

* **Y-axis Scale:** 0 to 100, with increments of 10.

* **Legend:** Located at the top-center of the chart.

    * MetaMath-13B (Blue Line with Circle Markers)

    * LLaMA2-70B (Orange Line with Square Markers)

    * GPT-4 (Green Line with Diamond Markers)

* **X-axis Categories (Math Problem Types):** Angles, Area, Circles, Classifying & sorting, Cones & values, Coordinate plane, Cylinders, Decimals, Estimation & rounding, Exchanging money, Fractions, Light & heavy, Mixed operations, Multiple, Numerical, Patterns, Perimeter, Place value, Polygons, Pulse, Rational numbers, Spheres, Subtraction, Time, Triangles, Variable expressions, Volume of 3d shapes, Add, Compare, Count, Constant, Division, Equations, Length, Percents, Probability, Proportional, Quadrilaterals, Ratio, Temperature, Volume.

### Detailed Analysis

The chart shows the accuracy of each model across the 41 math problem types listed above.

**MetaMath-13B (Blue Line):**

* Trend: Generally fluctuates between about 40% and 90% accuracy, with dips below that range for "Exchanging money" (~30%) and "Light & heavy" (~35%) and another low point at "Volume" (~40%). Peaks around 90% for "Time", "Triangles", and "Add".

* Specific Data Points (approximate):

    * Angles: ~70%

    * Area: ~60%

    * Circles: ~65%

    * Exchanging money: ~30%

    * Time: ~90%

    * Volume: ~40%

**LLaMA2-70B (Orange Line):**

* Trend: Exhibits a more consistent performance, generally staying between 60% and 95% accuracy. Shows a dip around "Exchanging money" (~50%) and "Light & heavy" (~55%). Peaks around 95% for "Time", "Triangles", and "Add".

* Specific Data Points (approximate):

    * Angles: ~80%

    * Area: ~75%

    * Circles: ~80%

    * Exchanging money: ~50%

    * Time: ~95%

    * Volume: ~60%

**GPT-4 (Green Line):**

* Trend: Demonstrates the highest overall accuracy, staying at or above roughly 70% and frequently reaching 100%. Shows a slight dip around "Exchanging money" (~70%) and "Light & heavy" (~75%). Peaks at 100% for many problem types, including "Time", "Triangles", and "Add".

* Specific Data Points (approximate):

    * Angles: ~95%

    * Area: ~90%

    * Circles: ~95%

    * Exchanging money: ~70%

    * Time: ~100%

    * Volume: ~80%
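
To make the three sets of approximate data points easier to compare, they can be collected into one structure and summarized. The following is a minimal sketch, assuming only the six values per model listed under "Specific Data Points"; the names `ACCURACY`, `summarize`, and `summary` are illustrative and not part of the chart. Because the inputs are visual estimates from only six of the charted topics, the results are rough indications, not measured benchmark scores.

```python
from statistics import mean

# Approximate accuracies (percent) read off the chart, as listed above.
# Only the six topics with explicit data points are included.
ACCURACY = {
    "MetaMath-13B": {"Angles": 70, "Area": 60, "Circles": 65,
                     "Exchanging money": 30, "Time": 90, "Volume": 40},
    "LLaMA2-70B": {"Angles": 80, "Area": 75, "Circles": 80,
                   "Exchanging money": 50, "Time": 95, "Volume": 60},
    "GPT-4": {"Angles": 95, "Area": 90, "Circles": 95,
              "Exchanging money": 70, "Time": 100, "Volume": 80},
}


def summarize(scores):
    """Return the mean (one decimal), minimum, and maximum of one model's scores."""
    values = list(scores.values())
    return {"mean": round(mean(values), 1), "min": min(values), "max": max(values)}


summary = {model: summarize(scores) for model, scores in ACCURACY.items()}

for model, stats in summary.items():
    print(f"{model}: mean ~{stats['mean']}%, range ~{stats['min']}% to ~{stats['max']}%")
```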

### Key Observations

* GPT-4 consistently outperforms both MetaMath-13B and LLaMA2-70B across all math problem types.

* LLaMA2-70B generally performs better than MetaMath-13B, with a gap no larger than the one between LLaMA2-70B and GPT-4; in the data points listed above, both gaps are about 5 to 20 percentage points.

* "Exchanging money" and "Light & heavy" consistently represent the most challenging problem types for all three models, resulting in the lowest accuracy scores.

* "Time", "Triangles", and "Add" consistently represent the easiest problem types for all three models, resulting in the highest accuracy scores.
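
The observations above can be checked against the approximate data points from the Detailed Analysis. The sketch below assumes only the six topics that have explicit values for all three models; the variable and function names are hypothetical, chosen for illustration. It computes the per-topic gap between adjacent models, tests whether the MetaMath-13B < LLaMA2-70B < GPT-4 ordering holds at every listed point, and picks the hardest and easiest of the six topics by combined score.

```python
# Approximate accuracies (percent) for the six topics with explicit data points.
TOPICS = ["Angles", "Area", "Circles", "Exchanging money", "Time", "Volume"]
METAMATH_13B = [70, 60, 65, 30, 90, 40]
LLAMA2_70B = [80, 75, 80, 50, 95, 60]
GPT_4 = [95, 90, 95, 70, 100, 80]


def gaps(lower, upper):
    """Per-topic difference (upper minus lower) in percentage points."""
    return [u - l for l, u in zip(lower, upper)]


llama_over_metamath = gaps(METAMATH_13B, LLAMA2_70B)
gpt4_over_llama = gaps(LLAMA2_70B, GPT_4)

# True only if the ranking is strict at every listed topic.
ordering_holds = all(
    m < l < g for m, l, g in zip(METAMATH_13B, LLAMA2_70B, GPT_4)
)

# Sum the three models' scores per topic to rank topic difficulty.
combined = {
    t: m + l + g
    for t, m, l, g in zip(TOPICS, METAMATH_13B, LLAMA2_70B, GPT_4)
}
hardest = min(combined, key=combined.get)
easiest = max(combined, key=combined.get)

print("LLaMA2-70B minus MetaMath-13B:", llama_over_metamath)
print("GPT-4 minus LLaMA2-70B:", gpt4_over_llama)
print("Ordering holds at every listed topic:", ordering_holds)
print("Hardest / easiest of the listed topics:", hardest, "/", easiest)
```

Since these values are visual estimates for a subset of the topics, the check only indicates whether the listed points are consistent with the observations; it says nothing about the topics without explicit values.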

### Interpretation

The data suggests a clear hierarchy in the mathematical reasoning capabilities of the three models: GPT-4 solves a wide range of math problems markedly more accurately than LLaMA2-70B, which in turn holds a moderate advantage over MetaMath-13B. GPT-4's consistently high accuracy is consistent with a more robust and generalizable understanding of mathematical concepts. The low scores shared by all three models on "Exchanging money" and "Light & heavy" may indicate that these problem types require specific reasoning skills or knowledge that is not well represented in the models' training data. Conversely, the high accuracy on "Time", "Triangles", and "Add" suggests that these concepts are more readily learned or more prevalent in the training datasets. Overall, the chart highlights the ongoing challenge of developing AI models that reliably solve diverse mathematical problems and points to the weaker problem types as areas for future research and development.