## Scaling Laws for Neural Language Models: Three-Plot Analysis

### Overview

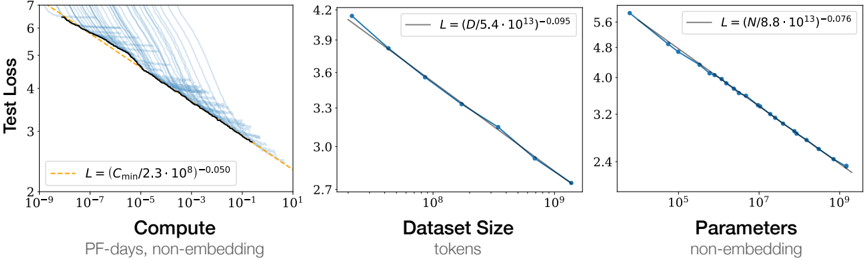

The image displays three horizontally arranged log-log plots, each illustrating a power-law relationship between a different scaling factor (Compute, Dataset Size, Parameters) and the Test Loss of a neural language model. The plots collectively demonstrate the "scaling laws" phenomenon, where model performance improves predictably as resources increase.

### Components/Axes

The image is segmented into three distinct chart regions, each with its own axes and legend.

**1. Left Chart: Compute vs. Test Loss**

* **X-Axis:** `Compute` (Label: "Compute", Sub-label: "PF-days, non-embedding"). Scale is logarithmic, ranging from `10^-9` to `10^1`.

* **Y-Axis:** `Test Loss` (Label: "Test Loss"). Scale is logarithmic, ranging from `2` to `7`.

* **Legend:** Located in the bottom-left corner. Contains a dashed orange line and the equation: `L = (C_min / (2.3 × 10^8))^-0.050`.

* **Data Series:** A dense cloud of light blue data points, a solid black trend line passing through them, and a dashed orange extrapolation line extending the trend.

**2. Middle Chart: Dataset Size vs. Test Loss**

* **X-Axis:** `Dataset Size` (Label: "Dataset Size", Sub-label: "tokens"). Scale is logarithmic, ranging from `10^8` to `10^9`.

* **Y-Axis:** `Test Loss` (Label: "Test Loss"). Scale is logarithmic, ranging from `2.7` to `4.2`.

* **Legend:** Located in the top-right corner. Contains a solid blue line and the equation: `L = (D / (5.4 × 10^13))^-0.095`.

* **Data Series:** A series of blue data points connected by a solid blue trend line.

**3. Right Chart: Parameters vs. Test Loss**

* **X-Axis:** `Parameters` (Label: "Parameters", Sub-label: "non-embedding"). Scale is logarithmic, ranging from `10^5` to `10^9`.

* **Y-Axis:** `Test Loss` (Label: "Test Loss"). Scale is logarithmic, ranging from `2.4` to `5.6`.

* **Legend:** Located in the top-right corner. Contains a solid blue line and the equation: `L = (N / (8.8 × 10^13))^-0.076`.

* **Data Series:** A series of blue data points connected by a solid blue trend line.
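All three legend equations share one functional form, `L = (X / X_c)^-α`, where `X` is the resource and `X_c` a fitted scale constant. The following is a minimal Python sketch that encodes them using the constants and exponents as transcribed from the legends. The names `FITS` and `predicted_loss` are hypothetical, chosen only for illustration.

```python
# Fitted values transcribed from the three legends (assumed exact as read).
# Each entry maps a resource to (scale constant X_c, exponent alpha)
# for the law L = (X / X_c) ** -alpha.
FITS = {
    "compute_pf_days": (2.3e8, 0.050),  # C_min, non-embedding PF-days
    "dataset_tokens": (5.4e13, 0.095),  # D, tokens
    "parameters": (8.8e13, 0.076),      # N, non-embedding parameters
}


def predicted_loss(resource: str, x: float) -> float:
    """Test loss predicted by the fitted power law for `resource` at value `x`."""
    scale, alpha = FITS[resource]
    return (x / scale) ** -alpha


if __name__ == "__main__":
    # Evaluate each law at both ends of its plotted x-axis range.
    ranges = {
        "compute_pf_days": (1e-9, 1e1),
        "dataset_tokens": (1e8, 1e9),
        "parameters": (1e5, 1e9),
    }
    for name, (lo, hi) in ranges.items():
        print(f"{name}: L({lo:g}) = {predicted_loss(name, lo):.2f}, "
              f"L({hi:g}) = {predicted_loss(name, hi):.2f}")
```

Keeping the constants in one table makes the shared form explicit: only `X_c` and `α` differ between the three charts.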

### Detailed Analysis

**Left Chart (Compute):**

* **Trend Verification:** The data shows a clear downward trend. As Compute increases (moving right on the x-axis), Test Loss decreases (moving down on the y-axis). The relationship appears linear on this log-log plot, indicating a power law.

* **Data Points & Equation:** The fitted power law is `L ∝ C_min^-0.050`. Here `C_min` is the compute variable (in PF-days), and `2.3 × 10^8` PF-days is the fitted scale constant in the legend equation. The exponent magnitude (0.050) is the smallest of the three plots, so Test Loss falls most slowly per tenfold increase in Compute.

* **Spatial Grounding:** The dense cluster of light blue points is concentrated in the mid-to-high compute range (`10^-5` to `10^0`). The black trend line fits these points. The orange dashed line extrapolates the trend to lower and higher compute values.
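Because the compute exponent is small, even modest loss reductions imply large compute multipliers. Taking the ratio of the law at two compute values gives `L2 / L1 = (C2 / C1)^-α`, so `C2 / C1 = (L2 / L1)^(-1/α)`. The sketch below assumes the legend exponent `α = 0.050` and that the fit holds over the required range. `compute_multiplier` is a hypothetical helper name.

```python
ALPHA_C = 0.050  # compute exponent read from the left-chart legend


def compute_multiplier(loss_ratio: float, alpha: float = ALPHA_C) -> float:
    """Factor by which compute must grow so that L_new / L_old == loss_ratio.

    From L = (C / C_c) ** -alpha, the ratio L2 / L1 equals (C2 / C1) ** -alpha,
    so C2 / C1 = loss_ratio ** (-1 / alpha). The scale constant C_c cancels.
    """
    if not 0 < loss_ratio <= 1:
        raise ValueError("loss_ratio must be in (0, 1] for a loss reduction")
    return loss_ratio ** (-1.0 / alpha)


if __name__ == "__main__":
    # Under this fit, a 10% lower loss (ratio 0.9) needs roughly 8.2x the compute.
    print(f"{compute_multiplier(0.9):.2f}x compute for a 10% loss reduction")
```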

**Middle Chart (Dataset Size):**

* **Trend Verification:** A strong, consistent downward trend. As Dataset Size increases, Test Loss decreases along a straight line on the log-log axes.

* **Data Points & Equation:** The fitted power law is `L ∝ D^-0.095`. Here `D` is the dataset size in tokens, and `5.4 × 10^13` tokens is the fitted scale constant. The exponent magnitude (0.095) is larger than that of the compute fit, indicating a steeper loss reduction per decade of dataset growth.

* **Spatial Grounding:** The blue data points are evenly spaced along the trend line from approximately `2*10^8` to `10^9` tokens.
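For relative changes, only the ratio of dataset sizes matters, because the scale constant cancels. The following sketch computes the predicted fractional loss drop across the approximate observed span and per tenfold increase, assuming the legend exponent `0.095`. The span endpoints (`2 × 10^8` and `10^9` tokens) are approximate readings from the plot, and `loss_ratio` is a hypothetical helper name.

```python
ALPHA_D = 0.095  # dataset-size exponent from the middle-chart legend


def loss_ratio(x_new: float, x_old: float, alpha: float) -> float:
    """Ratio L(x_new) / L(x_old) under L = (x / x_c) ** -alpha; x_c cancels out."""
    return (x_new / x_old) ** -alpha


if __name__ == "__main__":
    span = loss_ratio(1e9, 2e8, ALPHA_D)     # approximate observed span
    decade = loss_ratio(1e10, 1e9, ALPHA_D)  # any tenfold increase
    print(f"across ~2e8 -> 1e9 tokens: loss falls by {1 - span:.1%}")
    print(f"per 10x more tokens: loss falls by {1 - decade:.1%}")
```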

**Right Chart (Parameters):**

* **Trend Verification:** A strong, consistent downward trend. As the number of Parameters increases, Test Loss decreases along a straight line on the log-log axes.

* **Data Points & Equation:** The fitted power law is `L ∝ N^-0.076`. Here `N` is the number of non-embedding parameters, and `8.8 × 10^13` parameters is the fitted scale constant. The exponent magnitude (0.076) lies between those of the compute and dataset-size fits.

* **Spatial Grounding:** The blue data points are evenly spaced along the trend line from approximately `10^6` to `10^9` parameters.
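The scale constant is a fitted normalization rather than an observed model size. It is the value of `N` at which the fitted loss would equal 1, far beyond the plotted range. Expanding the power rewrites the same law as a prefactor times `N^-0.076`. The minimal check below assumes the legend values. `loss_scaled`, `loss_prefactor`, and `PREFACTOR` are hypothetical names.

```python
import math

N_C = 8.8e13     # scale constant from the right-chart legend (non-embedding params)
ALPHA_N = 0.076  # parameter exponent from the same legend


def loss_scaled(n: float) -> float:
    """Legend form: L = (N / N_c) ** -alpha."""
    return (n / N_C) ** -ALPHA_N


# Equivalent prefactor form: L = A * N ** -alpha with A = N_c ** alpha.
PREFACTOR = N_C ** ALPHA_N


def loss_prefactor(n: float) -> float:
    """Prefactor form of the same fitted law."""
    return PREFACTOR * n ** -ALPHA_N


if __name__ == "__main__":
    # Both forms agree at the ends of the observed data range.
    for n in (1e6, 1e9):
        assert math.isclose(loss_scaled(n), loss_prefactor(n), rel_tol=1e-12)
    print(f"A = {PREFACTOR:.2f}; L(N_c) = {loss_scaled(N_C):.1f}")
```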

### Key Observations

1. **Universal Power-Law Scaling:** All three fundamental resources (Compute, Data, Parameters) exhibit a power-law relationship with model performance (Test Loss). This is a hallmark of neural scaling laws.

2. **Exponent Hierarchy:** The exponent magnitudes are ordered Dataset Size (0.095) > Parameters (0.076) > Compute (0.050). Within the observed ranges, this suggests that a tenfold increase in dataset size yields the largest fractional reduction in loss, and a tenfold increase in compute the smallest.

3. **Smooth, Predictable Trends:** The data points align very closely with the fitted power-law lines, indicating highly predictable scaling behavior without significant outliers or plateaus in the observed regimes.

4. **Log-Log Linearity:** The straight-line fits on log-log axes are consistent with relationships of the form `Loss ∝ (Resource)^-α`, where `α > 0` is the magnitude of the plotted exponent.
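A straight line on log-log axes means `log L = -α log X + α log X_c`, so an ordinary least-squares fit of `log L` against `log X` recovers the exponent as the negated slope. The sketch below uses synthetic, noise-free points generated from the right-chart legend law (the plotted data points are not reproduced here), then ranks the three legend exponents by the loss reduction per tenfold increase. `fit_log_log` is a hypothetical helper name.

```python
import math

# Legend values (scale constant, exponent) as transcribed from the three charts.
FITS = {"dataset": (5.4e13, 0.095), "parameters": (8.8e13, 0.076), "compute": (2.3e8, 0.050)}


def fit_log_log(xs, ys):
    """Ordinary least squares on (log10 x, log10 y); returns (slope, intercept)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    sxx = sum((a - mx) ** 2 for a in lx)
    sxy = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    slope = sxy / sxx
    return slope, my - slope * mx


if __name__ == "__main__":
    # Synthetic points from the parameter law at half-decade steps over 1e6..1e9.
    n_c, alpha = FITS["parameters"]
    ns = [10 ** (6 + 0.5 * k) for k in range(7)]
    losses = [(n / n_c) ** -alpha for n in ns]
    slope, _ = fit_log_log(ns, losses)
    print(f"recovered exponent: {-slope:.3f}")

    # Rank resources by fractional loss drop per 10x increase: 1 - 10 ** -alpha.
    for name, (_, a) in sorted(FITS.items(), key=lambda kv: -kv[1][1]):
        print(f"{name}: {1 - 10 ** -a:.1%} lower loss per 10x")
```

The per-decade ranking compares one multiplicative step in each resource's own units. A tenfold increase in compute, data, or parameters carries a different practical cost, so the ranking describes loss sensitivity rather than cost-effectiveness.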

### Interpretation

This image presents empirical evidence for the **scaling laws of neural language models**. The data demonstrates that model performance, measured by test loss, improves in a predictable, power-law fashion as three key resources are scaled up.

* **What it Suggests:** The findings imply that performance gains are not arbitrary but follow a quantifiable trajectory. Researchers can use these fitted equations to forecast the expected loss for a given compute, data, or parameter budget, enabling more efficient resource allocation for training large models. Forecasts beyond the fitted range, however, are extrapolations.

* **Relationship Between Elements:** The three plots are interconnected facets of the same phenomenon. In practice, scaling one resource (e.g., parameters) often requires scaling the others (compute for training, data for effective learning). The different exponents hint at the relative "efficiency" of each resource in reducing loss within the studied scale.

* **Notable Implications:** The smooth, unbroken trends suggest that, up to the largest scales plotted (roughly `10^9` non-embedding parameters and `10^9` tokens, per the axis ranges above), no plateau or "wall" halts improvement. This underpins the rationale for building ever-larger models, although the figure alone cannot show whether the trends continue beyond the plotted range. The specific exponent values are important inputs for theoretical models that seek to explain why neural networks generalize and how to balance the training triad of compute, data, and model size.
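Using a fitted law for forecasting means inverting it. From `L = (N / N_c)^-α`, the parameter count needed for a target loss is `N = N_c · L^(-1/α)`. The sketch below assumes the right-chart legend values and flags any answer outside the plotted x-axis range (`10^5` to `10^9`) as an extrapolation the fit does not guarantee. `params_for_loss` and the range constants are hypothetical names.

```python
N_C = 8.8e13     # scale constant, right-chart legend
ALPHA_N = 0.076  # exponent, right-chart legend
OBSERVED_MIN_PARAMS = 1e5  # lower end of the plotted x-axis
OBSERVED_MAX_PARAMS = 1e9  # upper end of the plotted x-axis


def params_for_loss(target_loss: float) -> float:
    """Non-embedding parameter count at which the fitted law predicts `target_loss`."""
    if target_loss <= 0:
        raise ValueError("target_loss must be positive")
    return N_C * target_loss ** (-1.0 / ALPHA_N)


if __name__ == "__main__":
    for target in (3.0, 2.2):
        n = params_for_loss(target)
        inside = OBSERVED_MIN_PARAMS <= n <= OBSERVED_MAX_PARAMS
        note = "within plotted range" if inside else "outside plotted range (extrapolated)"
        print(f"L = {target}: N ~ {n:.2e} ({note})")
```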