
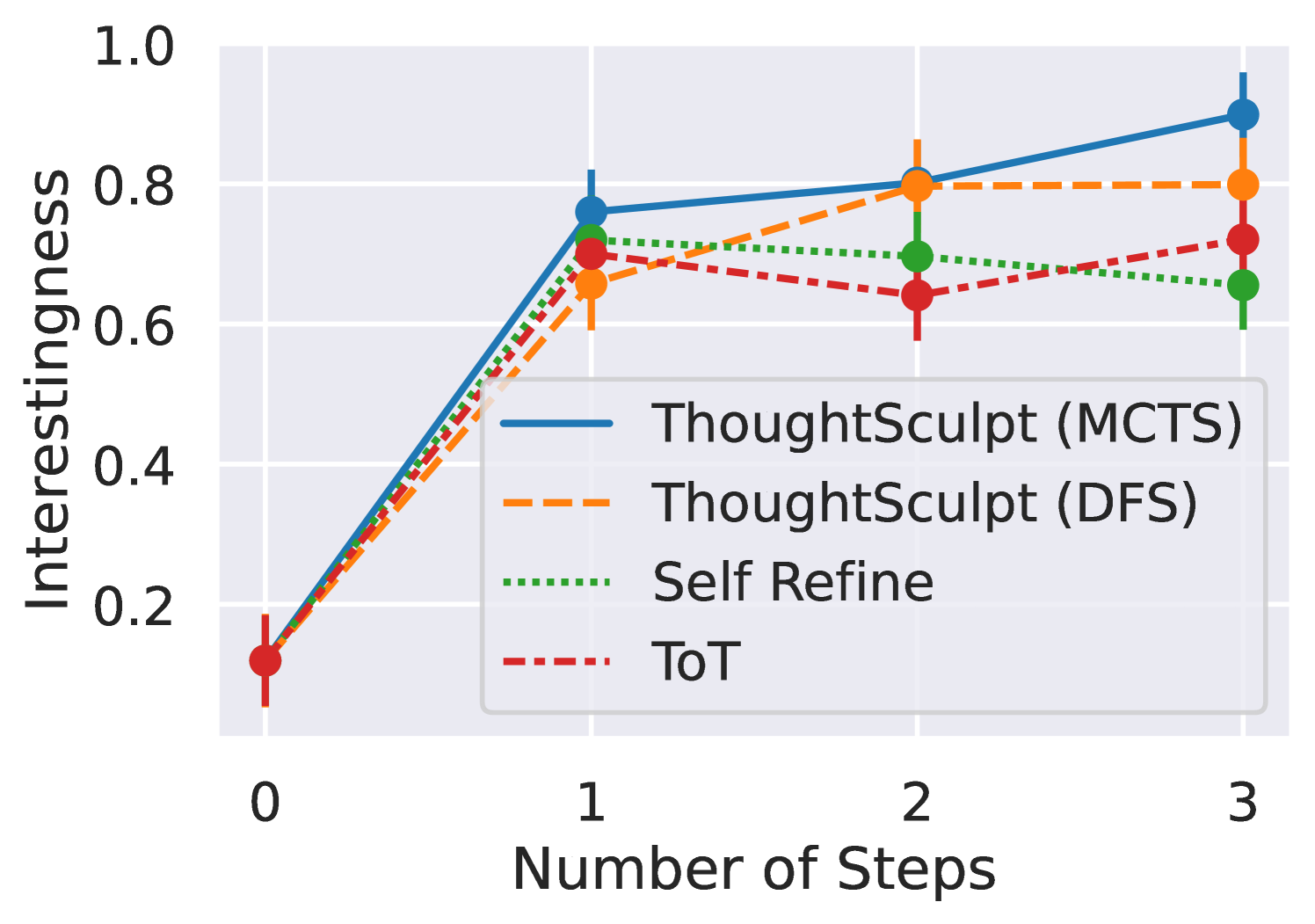

## Line Chart: Interestingness vs. Number of Steps

### Overview

The image is a line chart comparing four methods over a series of refinement steps. The performance metric, "Interestingness," is plotted on the y-axis against the "Number of Steps" on the x-axis. Each data point has an error bar indicating variability, such as a standard deviation or confidence interval.

### Components/Axes

* **Y-Axis:** Labeled "Interestingness". The scale ranges from 0.0 to 1.0, with major tick marks at 0.0, 0.2, 0.4, 0.6, 0.8, and 1.0.

* **X-Axis:** Labeled "Number of Steps". The scale shows discrete steps at 0, 1, 2, and 3.

* **Legend:** Positioned in the bottom-right quadrant of the chart area, slightly overlapping the data lines. It contains four entries:

    1. **ThoughtSculpt (MCTS):** Represented by a solid blue line with circular markers.

    2. **ThoughtSculpt (DFS):** Represented by a dashed orange line with circular markers.

    3. **Self Refine:** Represented by a dotted green line with circular markers.

    4. **ToT:** Represented by a dash-dot red line with circular markers.

* **Data Series:** Four lines, each with data points at steps 0, 1, 2, and 3. Vertical error bars extend above and below each data point.

### Detailed Analysis

**Data Points and Trends (Approximate Values):**

* **Step 0:** All four methods start at approximately the same point, with an Interestingness value of ~0.1. The error bars are relatively small.

* **Step 1:** All methods show a sharp increase.

    * **ThoughtSculpt (MCTS) [Blue, Solid]:** Rises to ~0.75. Trend: Steep upward slope from step 0.

    * **ThoughtSculpt (DFS) [Orange, Dashed]:** Rises to ~0.65. Trend: Steep upward slope from step 0.

    * **Self Refine [Green, Dotted]:** Rises to ~0.70. Trend: Steep upward slope from step 0.

    * **ToT [Red, Dash-Dot]:** Rises to ~0.70. Trend: Steep upward slope from step 0.

* **Step 2:** The trends diverge.

    * **ThoughtSculpt (MCTS) [Blue, Solid]:** Increases to ~0.80. Trend: Continued, though gentler, upward slope.

    * **ThoughtSculpt (DFS) [Orange, Dashed]:** Increases to ~0.80. Trend: Moderate upward slope, now matching MCTS.

    * **Self Refine [Green, Dotted]:** Dips slightly but stays near ~0.70. Trend: Slight downward slope.

    * **ToT [Red, Dash-Dot]:** Decreases to ~0.65. Trend: Moderate downward slope.

* **Step 3:** Final values show separation.

    * **ThoughtSculpt (MCTS) [Blue, Solid]:** Increases to the highest value, ~0.90. Trend: Continued upward slope, finishing as the top performer.

    * **ThoughtSculpt (DFS) [Orange, Dashed]:** Plateaus at ~0.80. Trend: Flat line from step 2.

    * **Self Refine [Green, Dotted]:** Decreases further to ~0.65. Trend: Continued slight downward slope.

    * **ToT [Red, Dash-Dot]:** Increases to ~0.72. Trend: Moderate upward slope from step 2.

**Error Bars:** Error bars are visible for all points. They appear largest for the "ToT" series at step 2 and for "ThoughtSculpt (MCTS)" at step 3, suggesting greater variance in results at those points.
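To make the step-by-step trends above easy to check, the approximate readings can be tabulated and differenced. The following is a minimal Python sketch; the values are the eyeballed approximations listed above (not the authors' underlying data), and `READINGS` and `step_deltas` are hypothetical names introduced for illustration.

```python
# Approximate Interestingness at steps 0 to 3, read off the chart (not raw data).
READINGS = {
    "ThoughtSculpt (MCTS)": [0.10, 0.75, 0.80, 0.90],
    "ThoughtSculpt (DFS)": [0.10, 0.65, 0.80, 0.80],
    "Self Refine": [0.10, 0.70, 0.70, 0.65],
    "ToT": [0.10, 0.70, 0.65, 0.72],
}


def step_deltas(values):
    """Change in Interestingness between consecutive steps, rounded to 2 places."""
    return [round(later - earlier, 2) for earlier, later in zip(values, values[1:])]


for method, values in READINGS.items():
    print(f"{method:22s} {step_deltas(values)}")
```

Every series gains between 0.55 and 0.65 from step 0 to step 1; after that, only ThoughtSculpt (MCTS) has a positive change at both remaining steps.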

### Key Observations

1. **Universal Initial Gain:** All methods demonstrate a significant improvement in "Interestingness" from step 0 to step 1.

2. **Diverging Paths:** After step 1, the performance trajectories split. Both ThoughtSculpt variants continue to improve or hold steady, while Self Refine and ToT decline or fluctuate.

3. **Top Performer:** ThoughtSculpt (MCTS) is the only method that improves at every step, and it ends with the highest Interestingness score at step 3.

4. **Plateauing:** ThoughtSculpt (DFS) matches MCTS at step 2 but then plateaus, failing to achieve the final gain that MCTS does.

5. **Volatility:** The ToT method shows a notable dip at step 2 before recovering somewhat at step 3.
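Observations 2 and 3 can be checked against the same approximate readings: which series never decrease, and how the methods rank at step 3. A sketch, again assuming the eyeballed values from the chart and using the hypothetical helper name `is_non_decreasing`:

```python
# Approximate Interestingness at steps 0 to 3, read off the chart (not raw data).
READINGS = {
    "ThoughtSculpt (MCTS)": [0.10, 0.75, 0.80, 0.90],
    "ThoughtSculpt (DFS)": [0.10, 0.65, 0.80, 0.80],
    "Self Refine": [0.10, 0.70, 0.70, 0.65],
    "ToT": [0.10, 0.70, 0.65, 0.72],
}


def is_non_decreasing(values):
    """True if the series never drops from one step to the next."""
    return all(later >= earlier for earlier, later in zip(values, values[1:]))


# Rank methods by their step-3 value, highest first.
final_ranking = sorted(READINGS, key=lambda method: READINGS[method][-1], reverse=True)
print(final_ranking)

for method, values in READINGS.items():
    print(f"{method:22s} never decreases: {is_non_decreasing(values)}")
```

Only the two ThoughtSculpt series pass the non-decreasing check, and the step-3 ranking is MCTS, DFS, ToT, then Self Refine.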

### Interpretation

The chart suggests that, for this task, iterative refinement (increasing the number of steps) raises Interestingness, but that the choice of strategy matters greatly after the first step.

* **ThoughtSculpt (MCTS)** appears to scale best with additional steps, as its score keeps climbing through step 3. Monte Carlo Tree Search (MCTS) may explore the solution space more effectively than the other methods.

* **ThoughtSculpt (DFS)**, using Depth-First Search, is effective initially but hits a performance ceiling by step 2, suggesting it may get stuck in local optima or lack the exploratory breadth of MCTS.

* **Self Refine** and **ToT (Tree of Thoughts)** show initial promise followed by degradation. This could indicate overfitting, instability in the refinement process, or a need to tune the number of steps carefully to avoid negative returns. ToT's recovery at step 3, however, hints that it might benefit from further steps, although the chart ends at step 3.
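The interpretation above rests on a general contrast between depth-first search and Monte Carlo tree search. The toy sketch below illustrates that contrast; it is not ThoughtSculpt's implementation, whose search details are not given here. The tree, its scores, the node budget, and the names `TREE`, `dfs_best`, `Node`, and `ucb1` are all hypothetical, and the scoring rule is the textbook UCB1 formula used in UCT-style MCTS, with the common default exploration constant c = √2.

```python
import math
from dataclasses import dataclass

# Hypothetical toy tree of candidate refinements: (name, score, children).
# Branch "b" looks worse at depth 1 but hides the best node, "b1".
TREE = ("root", 0.10, [
    ("a", 0.60, [("a1", 0.65, [("a1x", 0.66, [])]), ("a2", 0.62, [])]),
    ("b", 0.50, [("b1", 0.90, [])]),
])


def dfs_best(tree, budget):
    """Budget-limited depth-first search; returns the best (name, score) seen."""
    best = (None, float("-inf"))
    stack = [tree]
    visited = 0
    while stack and visited < budget:
        name, score, children = stack.pop()
        visited += 1
        if score > best[1]:
            best = (name, score)
        # Push children in ascending score order so the most promising
        # child is popped (and descended into) first.
        stack.extend(sorted(children, key=lambda child: child[1]))
    return best


@dataclass
class Node:
    """Visit statistics for one child in a UCT-style MCTS (hypothetical)."""
    visits: int = 0
    total_reward: float = 0.0


def ucb1(child: Node, parent_visits: int, c: float = math.sqrt(2)) -> float:
    """Textbook UCB1 score: mean reward plus an exploration bonus."""
    if child.visits == 0:
        return math.inf  # unvisited children are tried first
    exploit = child.total_reward / child.visits
    explore = c * math.sqrt(math.log(parent_visits) / child.visits)
    return exploit + explore


print(dfs_best(TREE, budget=5))  # never leaves subtree "a"
print(dfs_best(TREE, budget=7))  # reaches "b1" only with a larger budget

well_explored = Node(visits=8, total_reward=6.0)   # mean reward 0.75
under_explored = Node(visits=2, total_reward=1.2)  # mean reward 0.60
print(ucb1(under_explored, 10) > ucb1(well_explored, 10))
```

With a budget of 5 nodes, the depth-first search spends its entire budget under branch `a` and returns `a1x`, missing the higher-scoring `b1`. UCB1, by contrast, can rank a rarely visited child above a better-averaging but well-explored one, which is one way MCTS keeps some exploratory breadth.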

The error bars suggest that these are averages over multiple runs with inherent variability. The larger error bars for ToT at its low point (step 2) and for MCTS at its high point (step 3) are particularly noteworthy, indicating less predictable outcomes at those stages for those methods. Overall, within the three steps shown, the data favor the MCTS variant of ThoughtSculpt for maximizing Interestingness, although the wide error bar at step 3 tempers the strength of that conclusion.
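The description does not pin down which spread measure the error bars represent. As one common convention, an error bar can show the standard error of the mean over repeated runs. A minimal sketch, assuming per-run scores are available; the scores and the name `mean_and_sem` are hypothetical:

```python
import math
import statistics


def mean_and_sem(scores):
    """Return (mean, standard error of the mean) for a list of per-run scores."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to estimate spread")
    mean = statistics.mean(scores)
    # statistics.stdev uses the sample (n - 1) denominator.
    sem = statistics.stdev(scores) / math.sqrt(len(scores))
    return mean, sem


# Hypothetical Interestingness scores from five independent runs.
runs = [0.8, 1.0, 0.9, 0.7, 1.0]
mean, sem = mean_and_sem(runs)
print(f"{mean:.2f} ± {sem:.2f}")
```

For these five hypothetical runs the mean is 0.88 with a standard error of about 0.06. Whether or not two such error bars overlap is only a rough visual cue, not a formal significance test.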