## Diagram: Federated Learning Process Flowchart

### Overview

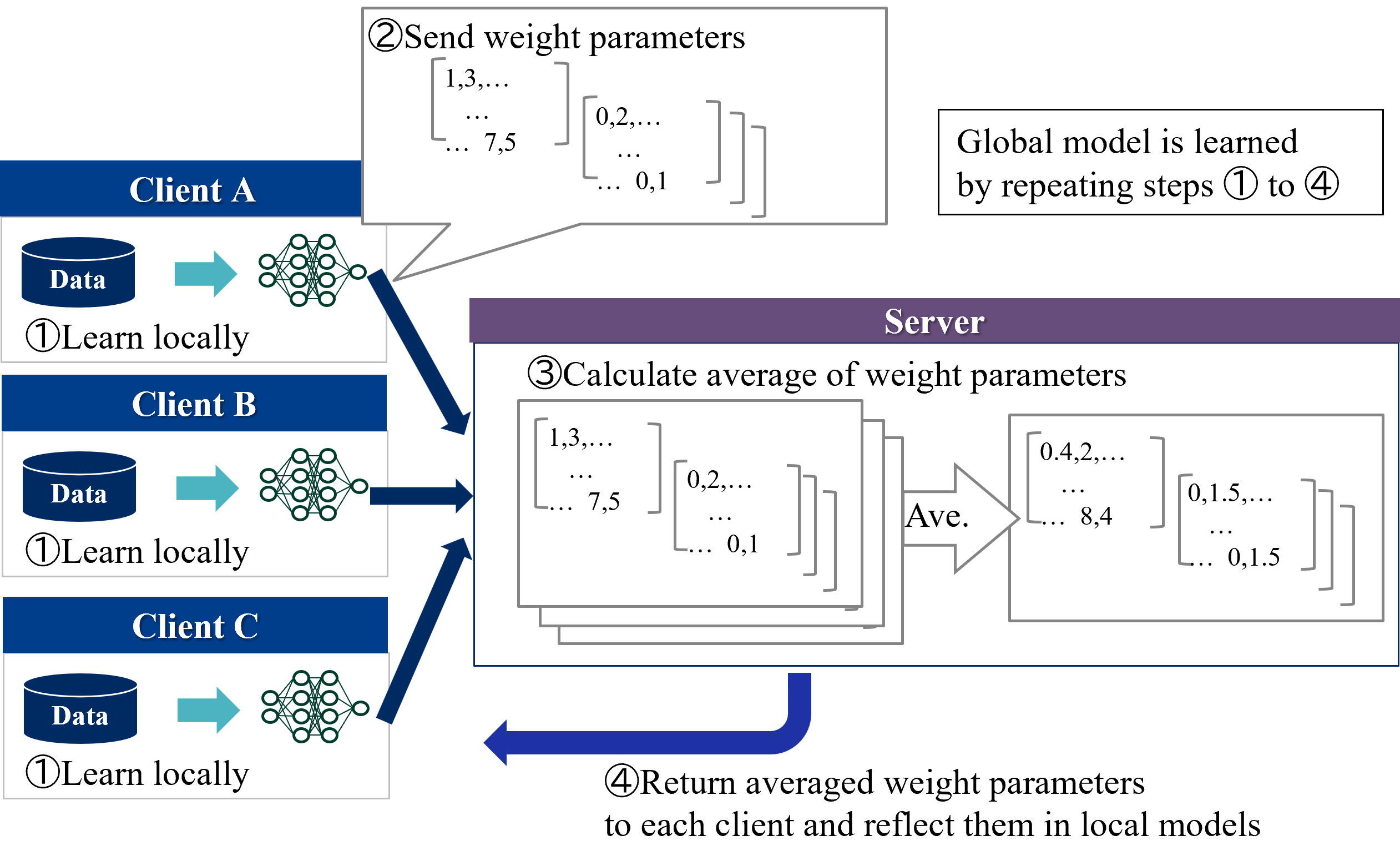

This image is a technical flowchart illustrating the four-step iterative process of **Federated Learning**, a decentralized machine learning paradigm. The diagram shows how multiple clients (Client A, Client B, Client C) collaboratively train a shared global model without directly sharing their local data. The process involves local training, parameter aggregation on a central server, and model synchronization.

### Components/Axes

The diagram is structured into two main sections:

1. **Left Column (Clients):** Three vertically stacked client blocks, labeled "Client A", "Client B", and "Client C" in white text on dark blue header bars. Each client block contains:

* A dark blue cylinder icon labeled "Data" in white text.

* A teal arrow pointing right.

* A green neural network diagram icon.

* The text "①Learn locally" below the data cylinder.

2. **Right Section (Server & Process Flow):**

* A large central block with a purple header labeled "Server" in white text.

* A text box in the top-right corner.

* Numbered step labels (②, ③, ④) with descriptive text.

* Arrows indicating data flow between clients and the server.

### Detailed Analysis

**Step-by-Step Process Flow:**

1. **Step ①: Learn locally**

* **Location:** Within each of the three client blocks on the left.

* **Action:** Each client uses its own local "Data" to train a local version of the neural network model. This is represented by the teal arrow pointing from the data cylinder icon to the neural network icon.

2. **Step ②: Send weight parameters**

* **Location:** A callout box originating from the neural network icon of Client A, pointing towards the Server block.

* **Content:** The callout contains the text "②Send weight parameters" and two example matrices representing model weights.

* Matrix 1: `[1,3,... ... 7,5]`

* Matrix 2: `[0,2,... ... 0,1]`

* **Flow:** Dark blue arrows from the neural network icons of all three clients (A, B, C) point directly to the Server block, indicating that each client sends its locally updated weight parameters to the central server.

3. **Step ③: Calculate average of weight parameters**

* **Location:** Inside the main "Server" block.

* **Content:** The text "③Calculate average of weight parameters" is at the top. Below it is a visual representation of aggregation:

* **Input:** A stack of three layers representing the weights from Clients A, B, and C. The top layer shows the same two example matrices as Step ②: `[1,3,... ... 7,5]` and `[0,2,... ... 0,1]`.

* **Process:** A large arrow labeled "Ave." (for Average) points from the input stack to the output.

* **Output:** A single averaged matrix with example values `[0.4,2,... ... 8,4]` and `[0,1.5,... ... 0,1.5]`.

4. **Step ④: Return averaged weight parameters**

* **Location:** A large, curved dark blue arrow originating from the bottom of the Server block and pointing back towards the left, encompassing all three clients.

* **Content:** The text "④Return averaged weight parameters to each client and reflect them in local models" is positioned below this arrow.
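Steps ③ and ④ can be sketched in plain Python as an element-wise mean over the clients' weight matrices, followed by handing each client its own copy of the result. This is a minimal sketch under stated assumptions: the function names `average_weights` and `broadcast` are hypothetical, and the 2×2 client matrices are invented for illustration, since the diagram shows only part of one client's values.

```python
from typing import List

Matrix = List[List[float]]


def average_weights(client_weights: List[Matrix]) -> Matrix:
    """Step ③ (sketch): unweighted element-wise mean of the clients' weight matrices."""
    n = len(client_weights)
    rows, cols = len(client_weights[0]), len(client_weights[0][0])
    return [
        [sum(w[r][c] for w in client_weights) / n for c in range(cols)]
        for r in range(rows)
    ]


def broadcast(global_weights: Matrix, num_clients: int) -> List[Matrix]:
    """Step ④ (sketch): give every client an independent copy of the averaged weights."""
    return [[row[:] for row in global_weights] for _ in range(num_clients)]


# Hypothetical 2x2 weights for Clients A, B, C (not the diagram's values).
client_a = [[1.0, 3.0], [7.0, 5.0]]
client_b = [[0.0, 2.0], [8.0, 4.0]]
client_c = [[2.0, 1.0], [9.0, 3.0]]

global_w = average_weights([client_a, client_b, client_c])
print(global_w)  # [[1.0, 2.0], [8.0, 4.0]]
local_models = broadcast(global_w, 3)  # each client now starts from the same weights
```

Copying the rows in `broadcast` keeps each client's local model independent, so local training in the next round cannot accidentally modify the server's copy.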

**Additional Text Element:**

* **Location:** A standalone text box in the top-right corner of the image.

* **Content:** "Global model is learned by repeating steps ① to ④". This explicitly states the iterative nature of the federated learning process.
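The repeated cycle can be sketched end to end with a toy one-parameter model `y ≈ w * x` trained by plain gradient descent. Everything here is a hypothetical illustration, not the diagram's model: the helpers `make_client`, `local_train`, and `run_federated`, the learning rate, the step count, and the synthetic datasets. Each client draws its inputs from a different range, so the local datasets are not identically distributed.

```python
import random
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave the client


def make_client(lo: float, n: int = 20) -> Dataset:
    """Hypothetical local dataset: y = 2x plus Gaussian noise, x drawn from [lo, lo + 1]."""
    xs = [random.uniform(lo, lo + 1) for _ in range(n)]
    return [(x, 2.0 * x + random.gauss(0, 0.1)) for x in xs]


def local_train(w: float, data: Dataset, lr: float = 0.05, epochs: int = 5) -> float:
    """Step ①: a few full-batch gradient-descent steps on mean squared error for y ≈ w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def run_federated(client_data: List[Dataset], rounds: int = 20) -> float:
    global_w = 0.0  # initial global model
    for _ in range(rounds):
        # Steps ① and ②: each client trains from the current global weight;
        # returning the result stands in for sending it to the server.
        local_ws = [local_train(global_w, data) for data in client_data]
        # Step ③: the server takes the unweighted average.
        global_w = sum(local_ws) / len(local_ws)
        # Step ④: global_w becomes every client's starting point in the next round.
    return global_w


random.seed(0)
clients = [make_client(lo) for lo in (0.0, 1.0, 2.0)]  # Clients A, B, C
w = run_federated(clients)
print(round(w, 2))
```

Because each round starts local training from the previous global weight, the estimate moves toward the underlying slope of 2 as rounds accumulate; only the scalar `w` ever crosses the client/server boundary. The round count of 20 is an arbitrary choice for this toy problem.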

### Key Observations

* **Decentralized Data:** The "Data" cylinders are contained within each client block, visually emphasizing that raw data never leaves the client devices.

* **Centralized Aggregation:** The "Server" block acts solely as an aggregation point for model updates (weight parameters), not a data repository.

* **Iterative Cycle:** The process is explicitly described as a repeating cycle (steps ① to ④). Repeating rounds is what lets the global model improve and, under suitable conditions, converge.

* **Example Values:** The matrices use placeholder numerical values (e.g., `[1,3,... ... 7,5]`) to concretely illustrate weight parameters being sent and averaged. The averaged values (e.g., `0.4`, `1.5`) illustrate the result of the aggregation operation; because only one client's values are shown, they cannot be reproduced from the diagram alone.

### Interpretation

This diagram provides a clear, high-level schematic of the **Federated Averaging (FedAvg)** algorithm, the canonical algorithm for federated learning. It visually communicates the core benefit: enabling collaborative model training across distributed datasets while keeping the raw data on each client.

* **Relationships:** The flowchart establishes a clear client-server relationship. Clients are autonomous training units, while the server is a coordinator that holds and redistributes only the aggregated parameters. The arrows define the direction of information flow: local updates flow upstream to the server, and the aggregated global model flows downstream to all clients.

* **Process Logic:** The sequence is logical and causal. Local learning (Step 1) must occur before updates can be sent (Step 2). The server cannot average (Step 3) until it receives updates. The updated global model must be distributed (Step 4) before the next local training round can begin with improved parameters.

* **Notable Implication:** The diagram abstracts away complexities such as client selection, secure aggregation, communication costs, and data that is not independent and identically distributed (non-IID) across clients. It focuses purely on the core parameter-averaging mechanism. The example matrices suggest a simple averaging operation, though real-world implementations, including FedAvg as originally formulated, typically weight each client's parameters by the size of its local dataset.

* **Underlying Principle:** By analogy, the process can be read as an instance of the Peircean concept of **abduction**: forming the best explanation (the global model) from a set of observations (local data) gathered through a structured, iterative investigative process (the federated learning rounds). On this reading, the "global model" is the abductive conclusion that best fits the distributed evidence.
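The weighted average mentioned under Notable Implication can be sketched as follows: client k contributes with weight n_k / n, where n_k is its number of local training samples and n is the total across clients, which is the weighting FedAvg uses. The function name `weighted_average`, the flattened weight vectors, and the dataset sizes are hypothetical values chosen for illustration.

```python
from typing import List, Sequence


def weighted_average(client_weights: List[Sequence[float]], num_samples: List[int]) -> List[float]:
    """Average flattened weight vectors, weighting client k by n_k / sum(n)."""
    total = sum(num_samples)
    dim = len(client_weights[0])
    return [
        sum(n * w[i] for w, n in zip(client_weights, num_samples)) / total
        for i in range(dim)
    ]


weights = [[1.0, 3.0], [4.0, 0.0], [7.0, 6.0]]  # hypothetical weights for Clients A, B, C
sizes = [100, 100, 800]                          # hypothetical local dataset sizes

print(weighted_average(weights, sizes))      # [6.1, 5.1]  (Client C dominates)
print(weighted_average(weights, [1, 1, 1]))  # [4.0, 3.0]  (plain mean, as in the diagram)
```

With equal dataset sizes the weighted form reduces to the simple average the diagram depicts; with unequal sizes, clients holding more data pull the global model further toward their local parameters.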