TECHNICAL ASSET FINGERPRINT

371317b120926b83d1edd03e


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


## Chart Type: Combined Bar and Radar Chart

### Overview

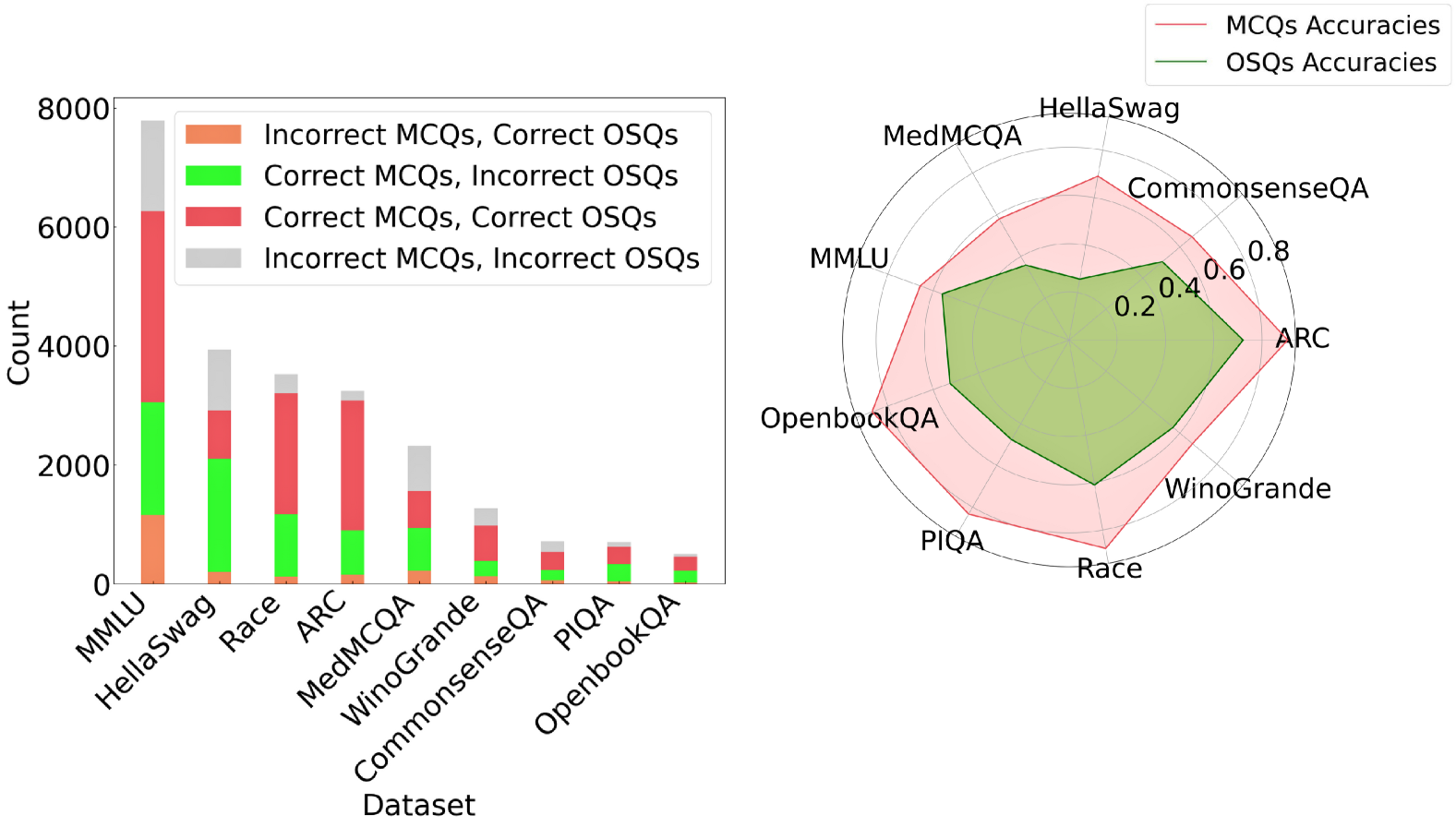

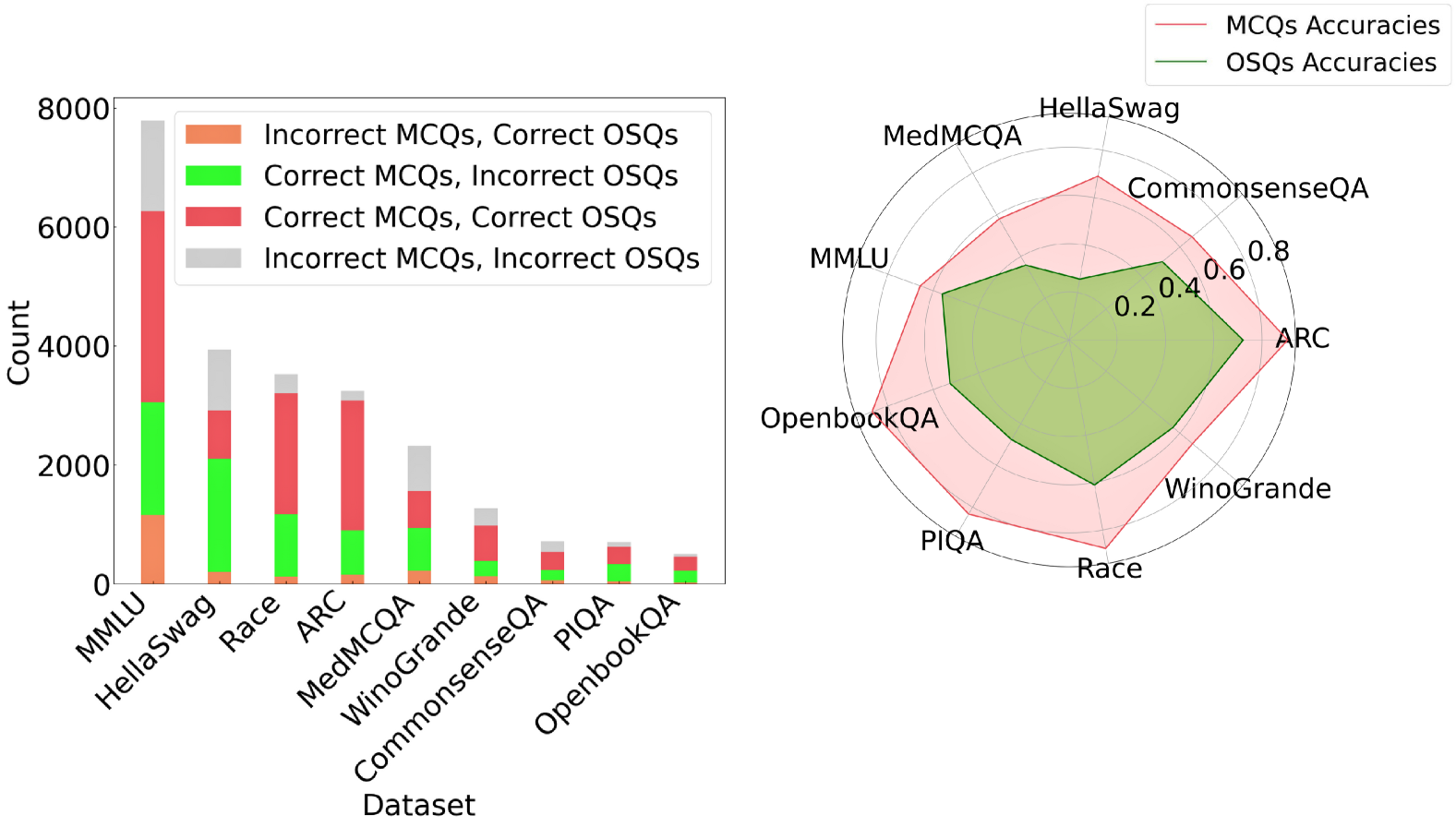

The image presents a combined visualization consisting of a stacked bar chart on the left and a radar chart on the right. The bar chart displays the counts of different combinations of correct/incorrect answers for MCQs (Multiple Choice Questions) and OSQs (Open-ended Short Questions) across various datasets. The radar chart compares the accuracies of MCQs and OSQs across the same datasets.

### Components/Axes

**Left: Stacked Bar Chart**

* **X-axis:** "Dataset" with categories: MMLU, HellaSwag, Race, ARC, MedMCQA, WinoGrande, CommonsenseQA, PIQA, OpenbookQA.
* **Y-axis:** "Count" ranging from 0 to 8000, with increments of 2000.
* **Legend (Top-Right of Bar Chart):**
  * Orange: Incorrect MCQs, Correct OSQs
  * Green: Correct MCQs, Incorrect OSQs
  * Red: Correct MCQs, Correct OSQs
  * Gray: Incorrect MCQs, Incorrect OSQs
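A stacked bar is drawn by placing each segment on top of the running total of the segments beneath it. The minimal sketch below computes those offsets. It assumes the segments are stacked in legend order with orange at the bottom (the actual stacking order is not confirmed here), `stack_segments` is a hypothetical helper, and the counts are the approximate MMLU readings listed in the Detailed Analysis below.

```python
from itertools import accumulate

# Legend order; assumed (not confirmed) to be the bottom-to-top stacking order.
SEGMENTS = [
    "Incorrect MCQs, Correct OSQs",    # orange
    "Correct MCQs, Incorrect OSQs",    # green
    "Correct MCQs, Correct OSQs",      # red
    "Incorrect MCQs, Incorrect OSQs",  # gray
]

def stack_segments(counts):
    """Return (label, bottom, top) for each segment of one stacked bar."""
    tops = list(accumulate(counts))  # running totals give each segment's top
    bottoms = [0] + tops[:-1]        # each segment starts where the previous ended
    return list(zip(SEGMENTS, bottoms, tops))

# Approximate MMLU counts, in the same order as SEGMENTS.
mmlu = [1200, 1800, 3300, 1500]
for label, bottom, top in stack_segments(mmlu):
    print(f"{label:32s} {bottom:5d} -> {top:5d}")
```

The top of the last segment (7800 for these readings) is the bar's total height.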

**Right: Radar Chart**

* **Axes:** Radial axes represent the datasets: HellaSwag, CommonsenseQA, ARC, WinoGrande, Race, PIQA, OpenbookQA, MMLU, MedMCQA.
* **Scale:** Concentric circles indicate accuracy values from 0.2 to 0.8, with increments of 0.2.
* **Legend (Top-Right of Radar Chart):**
  * Red Line/Area: MCQs Accuracies
  * Green Line/Area: OSQs Accuracies
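A radar chart spaces its axes evenly around a circle and plots each value as a distance from the centre along its axis, then joins the points into a closed outline. A minimal sketch, assuming the axes run clockwise starting from the top (the starting angle and direction are assumptions); `radar_vertices` is a hypothetical helper, and the MCQ values are the approximate readings from the Detailed Analysis below.

```python
import math

def radar_vertices(values, start_angle=math.pi / 2):
    """Return closed-polygon (x, y) vertices for one series on a radar chart.

    Axis i sits at start_angle - 2*pi*i/n (evenly spaced, clockwise from the
    top), and each value is plotted as a distance from the centre.
    """
    n = len(values)
    points = []
    for i, r in enumerate(values):
        theta = start_angle - 2 * math.pi * i / n
        points.append((r * math.cos(theta), r * math.sin(theta)))
    points.append(points[0])  # repeat the first vertex to close the outline
    return points

# Axis order as listed above; MCQ values are approximate chart readings.
axes = ["HellaSwag", "CommonsenseQA", "ARC", "WinoGrande", "Race",
        "PIQA", "OpenbookQA", "MMLU", "MedMCQA"]
mcq_values = [0.70, 0.75, 0.75, 0.60, 0.60, 0.50, 0.50, 0.60, 0.65]
mcq_outline = radar_vertices(mcq_values)
for name, (x, y) in zip(axes, mcq_outline):
    print(f"{name:14s} x={x:+.3f} y={y:+.3f}")
```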

### Detailed Analysis

**Left: Stacked Bar Chart**

* **MMLU:**
  * Incorrect MCQs, Correct OSQs (Orange): ~1200
  * Correct MCQs, Incorrect OSQs (Green): ~1800
  * Correct MCQs, Correct OSQs (Red): ~3300
  * Incorrect MCQs, Incorrect OSQs (Gray): ~1500
* **HellaSwag:**
  * Incorrect MCQs, Correct OSQs (Orange): ~200
  * Correct MCQs, Incorrect OSQs (Green): ~2100
  * Correct MCQs, Correct OSQs (Red): ~600
  * Incorrect MCQs, Incorrect OSQs (Gray): ~1000
* **Race:**
  * Incorrect MCQs, Correct OSQs (Orange): ~100
  * Correct MCQs, Incorrect OSQs (Green): ~1100
  * Correct MCQs, Correct OSQs (Red): ~1800
  * Incorrect MCQs, Incorrect OSQs (Gray): ~500
* **ARC:**
  * Incorrect MCQs, Correct OSQs (Orange): ~100
  * Correct MCQs, Incorrect OSQs (Green): ~1200
  * Correct MCQs, Correct OSQs (Red): ~1800
  * Incorrect MCQs, Incorrect OSQs (Gray): ~300
* **MedMCQA:**
  * Incorrect MCQs, Correct OSQs (Orange): ~50
  * Correct MCQs, Incorrect OSQs (Green): ~400
  * Correct MCQs, Correct OSQs (Red): ~1100
  * Incorrect MCQs, Incorrect OSQs (Gray): ~800
* **WinoGrande:**
  * Incorrect MCQs, Correct OSQs (Orange): ~50
  * Correct MCQs, Incorrect OSQs (Green): ~500
  * Correct MCQs, Correct OSQs (Red): ~400
  * Incorrect MCQs, Incorrect OSQs (Gray): ~300
* **CommonsenseQA:**
  * Incorrect MCQs, Correct OSQs (Orange): ~25
  * Correct MCQs, Incorrect OSQs (Green): ~250
  * Correct MCQs, Correct OSQs (Red): ~400
  * Incorrect MCQs, Incorrect OSQs (Gray): ~100
* **PIQA:**
  * Incorrect MCQs, Correct OSQs (Orange): ~25
  * Correct MCQs, Incorrect OSQs (Green): ~150
  * Correct MCQs, Correct OSQs (Red): ~200
  * Incorrect MCQs, Incorrect OSQs (Gray): ~100
* **OpenbookQA:**
  * Incorrect MCQs, Correct OSQs (Orange): ~25
  * Correct MCQs, Incorrect OSQs (Green): ~100
  * Correct MCQs, Correct OSQs (Red): ~150
  * Incorrect MCQs, Incorrect OSQs (Gray): ~50

**Right: Radar Chart**

* **MCQs Accuracies (Red Area):** The red area represents MCQ accuracy across the datasets. Accuracy peaks at ARC and CommonsenseQA and is lowest at PIQA and OpenbookQA.
  * HellaSwag: ~0.7
  * CommonsenseQA: ~0.75
  * ARC: ~0.75
  * WinoGrande: ~0.6
  * Race: ~0.6
  * PIQA: ~0.5
  * OpenbookQA: ~0.5
  * MMLU: ~0.6
  * MedMCQA: ~0.65
* **OSQs Accuracies (Green Area):** The green area represents OSQ accuracy across the datasets. OSQ accuracy is lower than MCQ accuracy on every dataset, with peaks at HellaSwag and CommonsenseQA.
  * HellaSwag: ~0.5
  * CommonsenseQA: ~0.5
  * ARC: ~0.4
  * WinoGrande: ~0.3
  * Race: ~0.3
  * PIQA: ~0.3
  * OpenbookQA: ~0.3
  * MMLU: ~0.4
  * MedMCQA: ~0.45

### Key Observations

* The stacked bar chart shows the distribution of correct and incorrect answers for MCQs and OSQs across different datasets. MMLU has the highest overall count, while OpenbookQA has the lowest.

* The radar chart indicates that MCQ accuracy exceeds OSQ accuracy on every dataset.

* The gap between MCQ and OSQ accuracy is largest for ARC (~0.35), followed by WinoGrande and Race (~0.3 each).
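The gap ranking can be checked directly from the radar readings listed in the Detailed Analysis. A minimal sketch that treats those approximate values as exact; rounding to two decimals only absorbs floating-point noise.

```python
# Approximate radar readings from the Detailed Analysis.
mcq = {"HellaSwag": 0.70, "CommonsenseQA": 0.75, "ARC": 0.75,
       "WinoGrande": 0.60, "Race": 0.60, "PIQA": 0.50,
       "OpenbookQA": 0.50, "MMLU": 0.60, "MedMCQA": 0.65}
osq = {"HellaSwag": 0.50, "CommonsenseQA": 0.50, "ARC": 0.40,
       "WinoGrande": 0.30, "Race": 0.30, "PIQA": 0.30,
       "OpenbookQA": 0.30, "MMLU": 0.40, "MedMCQA": 0.45}

# MCQ-minus-OSQ gap per dataset, rounded to two decimals to absorb float noise.
gaps = {name: round(mcq[name] - osq[name], 2) for name in mcq}
# Largest gap first; sorted() is stable, so ties keep their listed order.
ranked = sorted(gaps.items(), key=lambda item: item[1], reverse=True)
for name, gap in ranked:
    print(f"{name:14s} {gap:+.2f}")
```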

### Interpretation

The combined chart provides insights into the performance of models on different question types (MCQs and OSQs) across various datasets. The stacked bar chart highlights the raw counts of correct and incorrect answers, while the radar chart normalizes these counts into accuracy scores, allowing for a direct comparison of MCQ and OSQ performance.

The data suggests that models perform better on MCQs than on OSQs, possibly because multiple-choice questions constrain the answer space. MMLU has the largest count but only moderate accuracy in the radar chart (~0.6 for MCQs, ~0.4 for OSQs), which may indicate that it is comparatively challenging; the count itself reflects dataset size rather than difficulty. The radar chart summarizes relative strengths and weaknesses across datasets and question types, while the stacked bar chart's four categories show which kinds of errors the models make, for example how often a question is answered correctly in one format but not the other.
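If every question lands in exactly one of the four segments, both accuracies follow from the counts: MCQ accuracy counts the green and red segments, OSQ accuracy the orange and red segments, each divided by the bar total. This is a sketch under that assumption (the source does not state how the radar values were computed), and `accuracies` is a hypothetical helper.

```python
def accuracies(orange, green, red, gray):
    """Return (MCQ accuracy, OSQ accuracy) implied by the four segments.

    Assumes every question falls in exactly one segment:
    orange = MCQ wrong, OSQ right; green = MCQ right, OSQ wrong;
    red = both right; gray = both wrong.
    """
    total = orange + green + red + gray
    mcq_acc = (green + red) / total   # segments where the MCQ answer is correct
    osq_acc = (orange + red) / total  # segments where the OSQ answer is correct
    return mcq_acc, osq_acc

# Approximate MMLU readings from the stacked bar chart.
mcq_acc, osq_acc = accuracies(orange=1200, green=1800, red=3300, gray=1500)
print(f"MCQ {mcq_acc:.2f}, OSQ {osq_acc:.2f}")
```

With the MMLU readings this gives about 0.65 for MCQs and 0.58 for OSQs; comparing such values with the radar readings is one way to check whether the two panels were read consistently.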


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Chart: Performance Comparison of MCQs and OSQs Across Datasets

### Overview

This chart presents a comparative analysis of performance on Multiple Choice Questions (MCQs) and Open-ended Short Questions (OSQs) across various datasets. The left side is a bar chart showing the count of correct and incorrect answers for each question type, while the right side is a radar chart displaying accuracy scores.

### Components/Axes

* **Left Chart:**
  * X-axis: Dataset (MMLU, HellaSwag, Race, ARC, MedMCQA, Winogrande, CommonsenseQA, PIQA, OpenbookQA)
  * Y-axis: Count (ranging from 0 to 8000)
  * Bar Colors:
    * Red: Incorrect MCQs, Correct OSQs
    * Green: Correct MCQs, Incorrect OSQs
    * Blue: Incorrect MCQs, Incorrect OSQs
* **Right Chart:**
  * Radial Axes: Representing the datasets (MMLU, HellaSwag, CommonsenseQA, ARC, OpenbookQA, PIQA, Race, Winogrande, MedMCQA)
  * Radial Scale: Accuracy (ranging from 0 to 1.0)
  * Line Colors:
    * Red: MCQs Accuracies
    * Pink: OSQs Accuracies
* **Legend (Top-Right):** Identifies the line colors for MCQs and OSQs accuracies.

### Detailed Analysis or Content Details

**Left Chart (Bar Chart):**

* **MMLU:** ~6500 (Incorrect MCQs, Correct OSQs); ~500 (Correct MCQs, Incorrect OSQs); ~500 (Incorrect MCQs, Incorrect OSQs).

* **HellaSwag:** ~5500 (Incorrect MCQs, Correct OSQs); ~1000 (Correct MCQs, Incorrect OSQs); ~500 (Incorrect MCQs, Incorrect OSQs).

* **Race:** ~2500 (Incorrect MCQs, Correct OSQs); ~2500 (Correct MCQs, Incorrect OSQs); ~500 (Incorrect MCQs, Incorrect OSQs).

* **ARC:** ~2000 (Incorrect MCQs, Correct OSQs); ~2000 (Correct MCQs, Incorrect OSQs); ~500 (Incorrect MCQs, Incorrect OSQs).

* **MedMCQA:** ~1000 (Incorrect MCQs, Correct OSQs); ~1000 (Correct MCQs, Incorrect OSQs); ~200 (Incorrect MCQs, Incorrect OSQs).

* **Winogrande:** ~800 (Incorrect MCQs, Correct OSQs); ~500 (Correct MCQs, Incorrect OSQs); ~200 (Incorrect MCQs, Incorrect OSQs).

* **CommonsenseQA:** ~600 (Incorrect MCQs, Correct OSQs); ~400 (Correct MCQs, Incorrect OSQs); ~100 (Incorrect MCQs, Incorrect OSQs).

* **PIQA:** ~400 (Incorrect MCQs, Correct OSQs); ~200 (Correct MCQs, Incorrect OSQs); ~50 (Incorrect MCQs, Incorrect OSQs).

* **OpenbookQA:** ~300 (Incorrect MCQs, Correct OSQs); ~200 (Correct MCQs, Incorrect OSQs); ~50 (Incorrect MCQs, Incorrect OSQs).

**Right Chart (Radar Chart):**

* **MMLU:** MCQs Accuracy ~0.8, OSQs Accuracy ~0.2

* **HellaSwag:** MCQs Accuracy ~0.8, OSQs Accuracy ~0.2

* **CommonsenseQA:** MCQs Accuracy ~0.6, OSQs Accuracy ~0.4

* **ARC:** MCQs Accuracy ~0.6, OSQs Accuracy ~0.4

* **OpenbookQA:** MCQs Accuracy ~0.4, OSQs Accuracy ~0.6

* **PIQA:** MCQs Accuracy ~0.4, OSQs Accuracy ~0.6

* **Race:** MCQs Accuracy ~0.4, OSQs Accuracy ~0.6

* **Winogrande:** MCQs Accuracy ~0.4, OSQs Accuracy ~0.6

* **MedMCQA:** MCQs Accuracy ~0.4, OSQs Accuracy ~0.6

### Key Observations

* By these readings, the bar chart shows more questions answered incorrectly as MCQs but correctly as OSQs than the reverse on MMLU, HellaSwag, Winogrande, CommonsenseQA, PIQA, and OpenbookQA; on Race, ARC, and MedMCQA the two counts are roughly equal.

* The radar chart shows MCQs achieving much higher accuracy than OSQs on MMLU and HellaSwag (~0.8 vs. ~0.2).

* On OpenbookQA, PIQA, Race, Winogrande, and MedMCQA, OSQs outperform MCQs in accuracy (~0.6 vs. ~0.4).

* On CommonsenseQA and ARC, MCQs lead by a smaller margin (~0.6 vs. ~0.4) than on MMLU and HellaSwag.
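The first observation amounts to checking, per dataset, which of the two mixed categories is larger. A small sketch using the approximate bar readings above; the 5% tolerance for calling two chart readings equal is an arbitrary assumption, and `compare` is a hypothetical helper.

```python
# Approximate readings per dataset:
# (Incorrect MCQs & Correct OSQs, Correct MCQs & Incorrect OSQs).
flips = {
    "MMLU": (6500, 500), "HellaSwag": (5500, 1000), "Race": (2500, 2500),
    "ARC": (2000, 2000), "MedMCQA": (1000, 1000), "Winogrande": (800, 500),
    "CommonsenseQA": (600, 400), "PIQA": (400, 200), "OpenbookQA": (300, 200),
}

def compare(osq_only, mcq_only, tol=0.05):
    """Say which format alone answers more questions correctly.

    Counts whose difference is within `tol` of their sum are treated as
    equal, because values read off a chart are approximate.
    """
    if abs(osq_only - mcq_only) <= tol * (osq_only + mcq_only):
        return "about equal"
    return "more OSQ-only" if osq_only > mcq_only else "more MCQ-only"

for name, (osq_only, mcq_only) in flips.items():
    print(f"{name:14s} {compare(osq_only, mcq_only)}")
```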

### Interpretation

The data suggests that the relative advantage of each question type depends on the dataset. MCQs score higher on datasets that may rely more on factual recall or pattern recognition (MMLU, HellaSwag), where the multiple-choice format can guide the answer. OSQs score higher on datasets that demand reasoning, commonsense knowledge, or nuanced understanding (OpenbookQA, PIQA, Race, Winogrande, MedMCQA). This may indicate that OSQs are better suited to evaluating higher-order reasoning skills.

The large number of questions answered incorrectly as MCQs but correctly as OSQs suggests that some questions may be ambiguous or poorly designed, leading to wrong selections in the MCQ format but correct open-ended responses. The radar chart reinforces this by showing performance diverging by dataset. The datasets where OSQs outperform MCQs may be those where nuances of language and context are critical, which open-ended answers can capture better. The placement of datasets on the radar chart also suggests clusters of datasets that consistently favor one question type over the other.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Stacked Bar Chart & Radar Chart: Model Performance Analysis

### Overview

The image contains two distinct charts analyzing the performance of a model (or models) on various question-answering datasets. The left chart is a stacked bar chart showing the count of questions categorized by the correctness of the model's answers on Multiple Choice Questions (MCQs) and Open-Ended/Short-form Questions (OSQs). The right chart is a radar chart comparing the overall accuracy percentages for MCQs and OSQs across the same datasets.

### Components/Axes

**Left Chart (Stacked Bar Chart):**

* **X-axis (Dataset):** Lists 9 datasets: MMLU, HellaSwag, Race, ARC, MedMCQA, WinoGrande, CommonsenseQA, PIQA, OpenbookQA.

* **Y-axis (Count):** Linear scale from 0 to 8000, with major ticks at 2000 intervals.

* **Legend (Top-Left):** Defines four stacked categories:
  * Orange: Incorrect MCQs, Correct OSQs
  * Green: Correct MCQs, Incorrect OSQs
  * Red: Correct MCQs, Correct OSQs
  * Grey: Incorrect MCQs, Incorrect OSQs

**Right Chart (Radar Chart):**

* **Radial Axes:** Represent the 9 datasets, arranged clockwise: HellaSwag, CommonsenseQA, ARC, WinoGrande, Race, PIQA, OpenbookQA, MMLU, MedMCQA.

* **Concentric Circles:** Represent accuracy values, with labeled rings at 0.2, 0.4, 0.6, and 0.8 (20%, 40%, 60%, 80%).

* **Legend (Top-Right):**
  * Red Line & Shaded Area: MCQs Accuracies
  * Green Line & Shaded Area: OSQs Accuracies

### Detailed Analysis

**Stacked Bar Chart Data (Approximate Counts):**

* **MMLU:** Total ~7800. Breakdown (bottom to top): Orange ~1200, Green ~1800, Red ~3200, Grey ~1600.

* **HellaSwag:** Total ~3900. Breakdown: Orange ~200, Green ~1900, Red ~800, Grey ~1000.

* **Race:** Total ~3500. Breakdown: Orange ~100, Green ~1100, Red ~2000, Grey ~300.

* **ARC:** Total ~3200. Breakdown: Orange ~100, Green ~800, Red ~2200, Grey ~100.

* **MedMCQA:** Total ~2300. Breakdown: Orange ~200, Green ~700, Red ~700, Grey ~700.

* **WinoGrande:** Total ~1200. Breakdown: Orange ~100, Green ~300, Red ~600, Grey ~200.

* **CommonsenseQA:** Total ~700. Breakdown: Orange ~50, Green ~150, Red ~400, Grey ~100.

* **PIQA:** Total ~650. Breakdown: Orange ~50, Green ~250, Red ~300, Grey ~50.

* **OpenbookQA:** Total ~450. Breakdown: Orange ~50, Green ~150, Red ~200, Grey ~50.

**Radar Chart Data (Approximate Accuracies):**

* **MCQs (Red Line):** HellaSwag ~0.75, CommonsenseQA ~0.70, ARC ~0.75, WinoGrande ~0.65, Race ~0.80, PIQA ~0.70, OpenbookQA ~0.65, MMLU ~0.65, MedMCQA ~0.60.

* **OSQs (Green Line):** HellaSwag ~0.55, CommonsenseQA ~0.50, ARC ~0.60, WinoGrande ~0.55, Race ~0.65, PIQA ~0.55, OpenbookQA ~0.50, MMLU ~0.50, MedMCQA ~0.45.

### Key Observations

1. **Dataset Size Disparity:** MMLU is the largest dataset by a significant margin (~7800 questions), while OpenbookQA is the smallest (~450).

2. **Performance Consistency:** The "Correct MCQs, Correct OSQs" (Red) segment is the largest segment in every dataset except HellaSwag, where it ranks third behind Green (~1900) and Grey (~1000), and MedMCQA, where it ties with Green and Grey (~700 each). This indicates the model often gets both question types right.

3. **MCQ vs. OSQ Accuracy Gap:** The radar chart shows MCQ accuracy (red area) above OSQ accuracy (green area) for every dataset. By the readings above, the gap is largest for HellaSwag and CommonsenseQA (~0.20) and smallest for WinoGrande (~0.10).

4. **Highest/Lowest Accuracy:** The model achieves its highest MCQ accuracy on Race (~80%) and its lowest on MedMCQA (~60%). For OSQs, the highest is on Race (~65%) and the lowest on MedMCQA (~45%).

5. **Correlation in Performance:** Datasets where the model performs well on MCQs (e.g., Race, ARC) also tend to show relatively better performance on OSQs, though the gap remains.
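Whether the red segment is the largest in each bar can be checked mechanically. The sketch below ranks the red segment within each dataset's four readings, using the approximate values from the Detailed Analysis; `rank_of_red` is a hypothetical helper, and tied segments share the higher rank.

```python
# Approximate segment readings per dataset: (orange, green, red, grey).
readings = {
    "MMLU": (1200, 1800, 3200, 1600), "HellaSwag": (200, 1900, 800, 1000),
    "Race": (100, 1100, 2000, 300), "ARC": (100, 800, 2200, 100),
    "MedMCQA": (200, 700, 700, 700), "WinoGrande": (100, 300, 600, 200),
    "CommonsenseQA": (50, 150, 400, 100), "PIQA": (50, 250, 300, 50),
    "OpenbookQA": (50, 150, 200, 50),
}

def rank_of_red(counts):
    """1-based rank of the red (both correct) segment; ties share the top rank."""
    red = counts[2]
    return 1 + sum(c > red for c in counts)  # count strictly larger segments

for name, counts in readings.items():
    print(f"{name:14s} red rank: {rank_of_red(counts)}")
```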

### Interpretation

This visualization provides a multi-faceted view of model performance across diverse benchmarks. The stacked bar chart reveals the *composition* of errors and successes, showing not just overall volume but the relationship between performance on two distinct task formats (MCQ vs. OSQ) within the same dataset. The radar chart provides a direct, normalized *comparison* of accuracy rates.

The data suggests a few key insights:

1. **Task Format Matters:** The consistent accuracy gap indicates the model finds generating correct short-form answers (OSQs) more challenging than selecting from given options (MCQs). This is a common pattern in language models, where recognition (MCQ) often outperforms recall/generation (OSQ).

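2. **Dataset Difficulty:** MedMCQA and MMLU appear to be more challenging for the model on both task formats. MedMCQA has the lowest MCQ (~0.60) and OSQ (~0.45) accuracies and the largest "Incorrect/Incorrect" (grey) share in the bar chart (~700 of ~2300, about 30%), while MMLU sits near the bottom on both accuracies with a grey share of about 20%. HellaSwag's grey share (~26%) is also high, despite its comparatively high radar accuracies.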

3. **Error Analysis Potential:** The bar chart's four-category breakdown is particularly useful for diagnostic analysis. For instance, a large "Correct MCQs, Incorrect OSQs" (green) segment would suggest the model has knowledge but struggles to articulate it, while a large "Incorrect MCQs, Correct OSQs" (orange) segment would be unusual and might indicate issues with the MCQ format or options.

4. **Complementary Views:** The two charts are complementary. The bar chart shows absolute counts (influenced by dataset size), while the radar chart shows normalized rates. A dataset can have a large red bar (many questions got both right) but a moderate accuracy point if the total dataset size is very large.
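Because the bar chart shows absolute counts, comparing datasets of different sizes calls for normalizing each segment by the bar total. A minimal sketch that does this for the grey (Incorrect/Incorrect) segment, using the approximate totals and grey counts from the Detailed Analysis:

```python
# Approximate (total, grey) counts per dataset from the stacked bar readings.
grey = {
    "MMLU": (7800, 1600), "HellaSwag": (3900, 1000), "Race": (3500, 300),
    "ARC": (3200, 100), "MedMCQA": (2300, 700), "WinoGrande": (1200, 200),
    "CommonsenseQA": (700, 100), "PIQA": (650, 50), "OpenbookQA": (450, 50),
}

# Fraction of each dataset missed in both formats, largest first.
shares = sorted(((g / t, name) for name, (t, g) in grey.items()), reverse=True)
for share, name in shares:
    print(f"{name:14s} {share:.0%}")
```

On these readings MedMCQA has the largest grey share (about 30%), followed by HellaSwag and MMLU, while ARC has the smallest.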

In summary, the model demonstrates competent but uneven performance across benchmarks, with a clear and persistent advantage on multiple-choice tasks over open-ended generation tasks. The analysis highlights specific datasets where performance lags, guiding potential areas for model improvement or data curation.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Bar Chart: Dataset Performance Breakdown by Question Type

### Overview

The bar chart compares performance metrics across multiple datasets, segmented by question type (MCQs/OSQs) and correctness. Each bar is divided into four color-coded segments representing combinations of correct/incorrect answers for MCQs and OSQs.

### Components/Axes

- **X-axis (Datasets)**:
  - MMLU, HellaSwag, Race, ARC, MedMCQA, WinoGrande, CommonsenseQA, PIQA, OpenbookQA
- **Y-axis (Count)**:
  - Scale from 0 to 8,000 in increments of 2,000
- **Legend**:
  - Orange: Incorrect MCQs, Correct OSQs
  - Green: Correct MCQs, Incorrect OSQs
  - Red: Correct MCQs, Correct OSQs
  - Gray: Incorrect MCQs, Incorrect OSQs

### Detailed Analysis

- **MMLU**:
  - Tallest bar (~8,000 total)
  - Segments: ~1,000 (orange), ~2,000 (green), ~6,000 (red), ~1,000 (gray)
- **HellaSwag**:
  - ~4,000 total
  - Segments: ~200 (orange), ~1,800 (green), ~2,000 (red), ~1,000 (gray)
- **Race**:
  - ~3,500 total
  - Segments: ~100 (orange), ~1,200 (green), ~2,200 (red), ~100 (gray)
- **ARC**:
  - ~3,200 total
  - Segments: ~150 (orange), ~900 (green), ~2,150 (red), ~0 (gray)
- **MedMCQA**:
  - ~2,500 total
  - Segments: ~200 (orange), ~1,000 (green), ~1,300 (red), ~0 (gray)
- **WinoGrande**:
  - ~1,500 total
  - Segments: ~100 (orange), ~400 (green), ~1,000 (red), ~0 (gray)
- **CommonsenseQA**:
  - ~800 total
  - Segments: ~50 (orange), ~200 (green), ~500 (red), ~50 (gray)
- **PIQA**:
  - ~600 total
  - Segments: ~30 (orange), ~150 (green), ~400 (red), ~20 (gray)
- **OpenbookQA**:
  - ~400 total
  - Segments: ~20 (orange), ~100 (green), ~250 (red), ~30 (gray)
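In a stacked bar chart the segments must add up to the bar's height, so summing the segment readings is a quick consistency check. A sketch with a hypothetical `check_totals` helper; the 5% tolerance for reading error is an assumption.

```python
# Approximate readings: stated total and (orange, green, red, gray) segments.
bars = {
    "MMLU": (8000, (1000, 2000, 6000, 1000)),
    "HellaSwag": (4000, (200, 1800, 2000, 1000)),
    "Race": (3500, (100, 1200, 2200, 100)),
    "ARC": (3200, (150, 900, 2150, 0)),
    "MedMCQA": (2500, (200, 1000, 1300, 0)),
    "WinoGrande": (1500, (100, 400, 1000, 0)),
    "CommonsenseQA": (800, (50, 200, 500, 50)),
    "PIQA": (600, (30, 150, 400, 20)),
    "OpenbookQA": (400, (20, 100, 250, 30)),
}

def check_totals(bars, rel_tol=0.05):
    """Return {dataset: (stated_total, segment_sum)} where the two disagree."""
    mismatches = {}
    for name, (total, segments) in bars.items():
        s = sum(segments)
        if abs(s - total) > rel_tol * total:
            mismatches[name] = (total, s)
    return mismatches

print(check_totals(bars))
# {'MMLU': (8000, 10000), 'HellaSwag': (4000, 5000)}
```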

### Key Observations

1. **Both-Correct Dominance**: The "Correct MCQs, Correct OSQs" (red) segment is the largest in every dataset, with MMLU showing the highest count (~6,000).

2. **Mixed-Outcome Variability**: The "Correct MCQs, Incorrect OSQs" (green) segment varies widely, from ~2,000 on MMLU to ~100 on OpenbookQA.

3. **Error Patterns**: The "Incorrect MCQs, Correct OSQs" (orange) segment is small everywhere except MMLU (~1,000). The "Incorrect MCQs, Incorrect OSQs" (gray) segment is negligible in most datasets but substantial in MMLU and HellaSwag (~1,000 each).

### Interpretation

The data suggests that MCQs are answered correctly more often than OSQs on every dataset: the green segment (MCQ right, OSQ wrong) is larger than the orange segment (MCQ wrong, OSQ right) throughout, and MMLU has the largest volume of correctly answered MCQs. The near-absence of gray in ARC, MedMCQA, and WinoGrande means that almost every question in these datasets is answered correctly in at least one format. However, the MCQ advantage may reflect dataset design biases rather than an inherent superiority of the question type.
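The four segments form a 2×2 table of paired outcomes (each question answered in both formats), so whether the MCQ advantage exceeds what chance would produce can be tested with McNemar's test on the two mixed segments. The source does not apply any significance test; this is a sketch that treats the approximate chart readings as exact counts and uses the continuity-corrected statistic, and `mcnemar` is a hypothetical helper.

```python
import math

def mcnemar(b, c):
    """Continuity-corrected McNemar test for paired binary outcomes.

    b: questions right as MCQ but wrong as OSQ (green segment)
    c: questions wrong as MCQ but right as OSQ (orange segment)
    Returns (chi-square statistic with 1 degree of freedom, p-value).
    """
    if b + c == 0:
        return 0.0, 1.0  # no discordant pairs, nothing to test
    stat = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    # For 1 degree of freedom, P(chi2 > s) = erfc(sqrt(s / 2)).
    p = math.erfc(math.sqrt(stat / 2))
    return stat, p

# Approximate OpenbookQA readings above: green ~100, orange ~20.
stat, p = mcnemar(b=100, c=20)
print(f"chi2 = {stat:.1f}, p = {p:.1e}")
```

Only the mixed segments enter the statistic; questions that both formats get right or wrong carry no information about which format is better.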

## Radar Chart: MCQ vs. OSQ Accuracy Comparison

### Overview

The radar chart compares accuracy scores (0–0.8) for MCQs (red) and OSQs (green) across nine datasets. MCQ accuracy exceeds OSQ accuracy on every dataset, with ARC showing the highest MCQ accuracy and CommonsenseQA and HellaSwag tied for the lowest.

### Components/Axes

- **Axes (Datasets)**:
  - ARC, CommonsenseQA, PIQA, WinoGrande, OpenbookQA, Race, MedMCQA, HellaSwag, MMLU
- **Legend**:
  - Red: MCQs Accuracies
  - Green: OSQs Accuracies

### Detailed Analysis

- **ARC**:
  - MCQs: ~0.75
  - OSQs: ~0.5
- **CommonsenseQA**:
  - MCQs: ~0.6
  - OSQs: ~0.4
- **PIQA**:
  - MCQs: ~0.65
  - OSQs: ~0.45
- **WinoGrande**:
  - MCQs: ~0.7
  - OSQs: ~0.55
- **OpenbookQA**:
  - MCQs: ~0.7
  - OSQs: ~0.5
- **Race**:
  - MCQs: ~0.68
  - OSQs: ~0.48
- **MedMCQA**:
  - MCQs: ~0.62
  - OSQs: ~0.42
- **HellaSwag**:
  - MCQs: ~0.6
  - OSQs: ~0.4
- **MMLU**:
  - MCQs: ~0.65
  - OSQs: ~0.45

### Key Observations

1. **MCQ Superiority**: MCQ accuracy exceeds OSQ accuracy by ~0.15–0.25 on every dataset.

2. **Largest Gap on ARC**: ARC shows the largest gap (~0.25) between MCQ and OSQ accuracies.

3. **CommonsenseQA and HellaSwag**: Tied for the lowest performance on both question types, with MCQs at ~0.6 and OSQs at ~0.4.
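Identifying the lowest-scoring dataset should report ties rather than pick one arbitrarily. A minimal sketch over the approximate readings in the Detailed Analysis, which also computes the range of MCQ-minus-OSQ gaps; `argmin_all` is a hypothetical helper.

```python
# Approximate radar readings from the Detailed Analysis: (MCQ, OSQ).
acc = {
    "ARC": (0.75, 0.50), "CommonsenseQA": (0.60, 0.40), "PIQA": (0.65, 0.45),
    "WinoGrande": (0.70, 0.55), "OpenbookQA": (0.70, 0.50), "Race": (0.68, 0.48),
    "MedMCQA": (0.62, 0.42), "HellaSwag": (0.60, 0.40), "MMLU": (0.65, 0.45),
}

def argmin_all(scores):
    """Return every key that attains the minimum value, keeping ties visible."""
    low = min(scores.values())
    return [name for name, value in scores.items() if value == low]

lowest_mcq = argmin_all({name: m for name, (m, _) in acc.items()})
lowest_osq = argmin_all({name: o for name, (_, o) in acc.items()})
# Round the gaps to two decimals to absorb floating-point noise.
gaps = [round(m - o, 2) for m, o in acc.values()]
gap_range = (min(gaps), max(gaps))
print("lowest MCQ:", lowest_mcq)
print("lowest OSQ:", lowest_osq)
print("gap range:", gap_range)
```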

### Interpretation

The radar chart reveals a systematic MCQ advantage in accuracy, possibly because structured answer choices simplify response generation. The low OSQ accuracy on CommonsenseQA and HellaSwag suggests challenges in open-ended reasoning on these datasets. The consistent MCQ-OSQ gap across datasets may reflect architectural or training biases that favor structured formats over open-ended ones.
