TECHNICAL ASSET FINGERPRINT

3727160a74afcdf974a3385b

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview

INTEL_VERIFIED

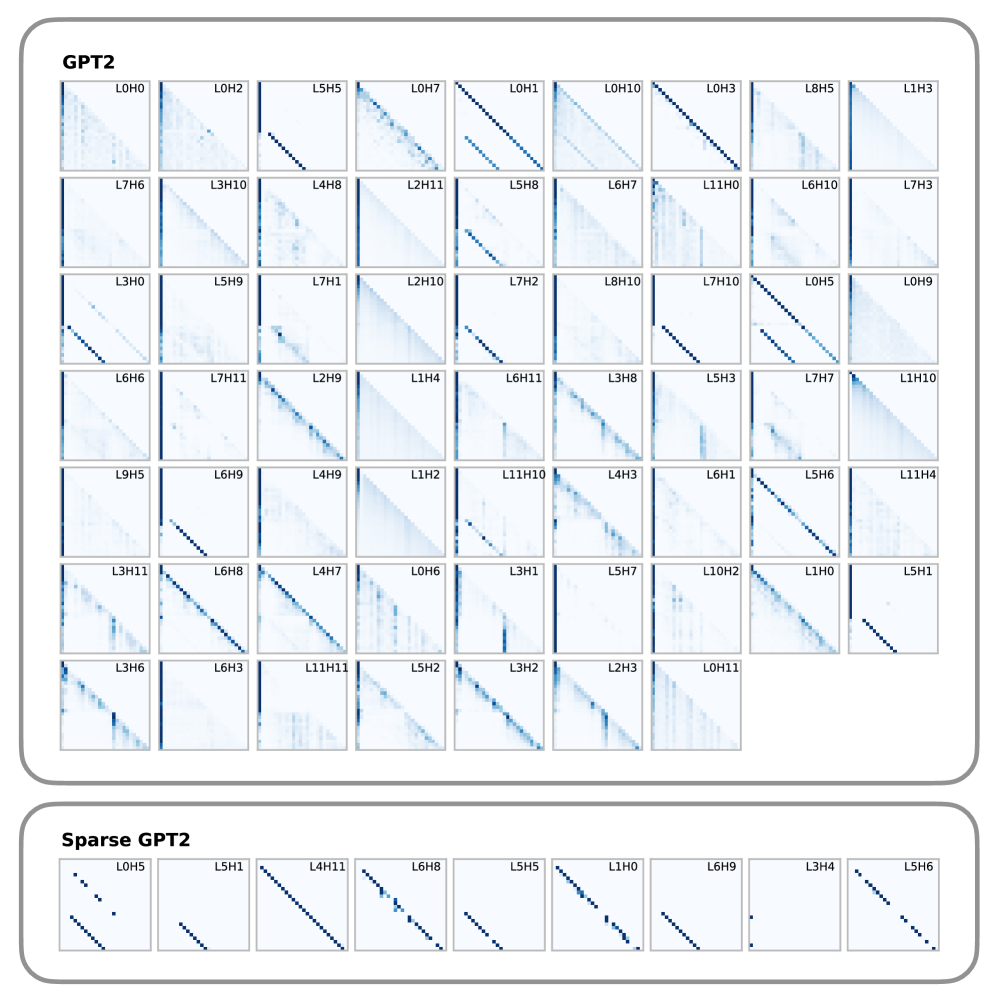

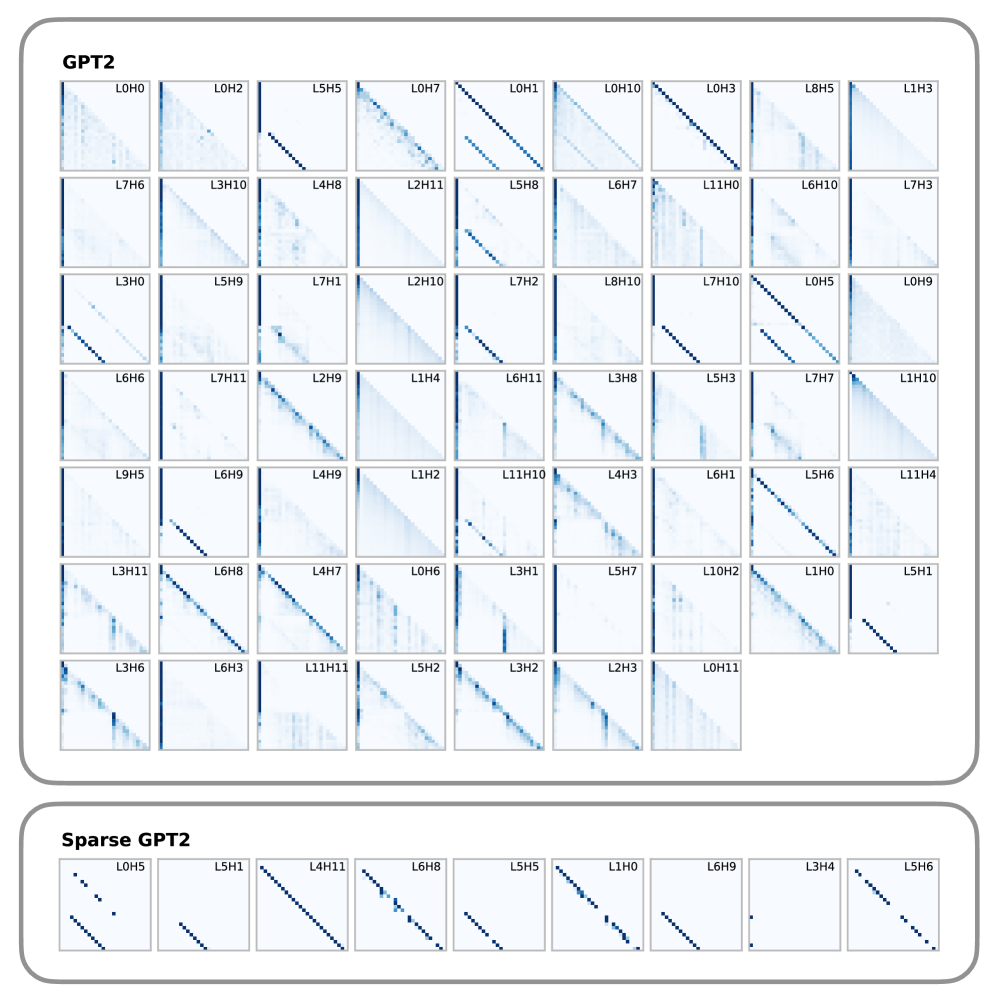

## Grid of Attention Maps: Standard GPT2 vs. Sparse GPT2

### Overview

The image displays a comparative visualization of attention head patterns from two different neural network models: a standard "GPT2" model and a "Sparse GPT2" model. The visualization consists of numerous small, square heatmaps (matrices) organized into two distinct, rounded-rectangular panels. Each small square represents the attention weights of a specific attention head across a sequence of tokens. All matrices are lower-triangular, which is characteristic of causal (autoregressive) language models where a token can only attend to itself and preceding tokens.

### Components/Axes

* **Panels:**

* **Top Panel:** Labeled "GPT2" in the top-left corner. Contains a grid of 61 attention maps.

* **Bottom Panel:** Labeled "Sparse GPT2" in the top-left corner. Contains a single row of 9 attention maps.

* **Individual Heatmaps (Attention Maps):**

* **Y-axis (implied):** Query token position (sequence index), starting from the top (position 0) and moving downward.

* **X-axis (implied):** Key token position (sequence index), starting from the left (position 0) and moving rightward.

* **Color Scale (implied legend):** The color gradient ranges from white to dark blue. White represents an attention weight of approximately 0.0. Dark blue represents a high attention weight (approaching 1.0).

* **Masking:** The upper-right triangle of every plot is pure white, representing the causal mask (future tokens are hidden).

* **Labels:** Each heatmap has an alphanumeric label in the top-right corner in the format `L[x]H[y]`, where `L` stands for Layer and `H` stands for Head (e.g., `L0H0` means Layer 0, Head 0).

---

### Content Details

#### Component 1: GPT2 Panel (Top)

**Spatial Grounding:** This panel occupies the upper 80% of the image. It contains a grid arranged in 7 rows. Rows 1 through 6 contain 9 columns. Row 7 contains 7 columns.

**Label Transcription (Left to Right, Top to Bottom):**

* **Row 1:** L0H0, L0H2, L5H5, L0H7, L0H1, L0H10, L0H3, L8H5, L1H3

* **Row 2:** L7H6, L3H10, L4H8, L2H11, L5H8, L6H7, L11H0, L6H10, L7H3

* **Row 3:** L3H0, L5H9, L7H1, L2H10, L7H2, L8H10, L7H10, L0H5, L0H9

* **Row 4:** L6H6, L7H11, L2H9, L1H4, L6H11, L3H8, L5H3, L7H7, L1H10

* **Row 5:** L9H5, L6H9, L4H9, L1H2, L11H10, L4H3, L6H1, L5H6, L11H4

* **Row 6:** L3H11, L6H8, L4H7, L0H6, L3H1, L5H7, L10H2, L1H0, L5H1

* **Row 7:** L3H6, L6H3, L11H11, L5H2, L3H2, L2H3, L0H11

**Trend Verification & Pattern Categorization:**

The attention maps in this standard GPT2 model exhibit a variety of dense and semi-dense patterns.

1. **Vertical Edge (First-Token Attention):** A solid dark blue line runs down the far-left edge (X=0). This indicates the head pays heavy attention to the very first token in the sequence regardless of the current token position.

* *Examples:* L0H0, L7H6, L3H0, L9H5, L3H11, L3H6.

2. **Main Diagonal (Local/Previous-Token Attention):** A solid dark blue line runs diagonally from top-left to bottom-right along the edge of the causal mask. This indicates the head attends primarily to the immediately preceding token or the current token.

* *Examples:* L0H1, L0H3, L7H10, L6H8, L4H7.

3. **Offset Diagonal:** A diagonal line that is shifted downward from the main diagonal. This indicates attention to a token a fixed number of steps in the past.

* *Examples:* L5H5, L5H8, L7H2, L6H9, L5H1.

4. **Diffuse/Broad Attention:** A light blue wash spread across the lower triangle, indicating attention is distributed across many past tokens.

* *Examples:* L0H2, L2H11, L1H4, L1H2.

5. **Banded/Vertical Stripes:** Multiple faint vertical lines, indicating attention to specific absolute positions or specific recurring tokens.

* *Examples:* L11H0, L0H6, L10H2, L0H11.

6. **Complex/Hybrid:** Combinations of the above, such as a strong diagonal combined with a strong first-token vertical line.

* *Examples:* L0H5, L5H6, L1H0.

#### Component 2: Sparse GPT2 Panel (Bottom)

**Spatial Grounding:** This panel occupies the bottom 20% of the image. It contains a single row of 9 heatmaps.

**Label Transcription (Left to Right):**

* L0H5, L5H1, L4H11, L6H8, L5H5, L1H0, L6H9, L3H4, L5H6

**Trend Verification & Pattern Categorization:**

The defining visual trend here is **extreme sparsity**. Unlike the top panel, there is almost no light blue "wash" or diffuse attention. The matrices are predominantly pure white. The attention weights are concentrated into discrete, sharp, dark blue dots.

* **Strict Diagonals:** L4H11, L6H8, L1H0 exhibit dots forming a perfect main diagonal.

* **Strict Offset Diagonals:** L5H1, L5H5, L6H9 exhibit dots forming a diagonal shifted downward.

* **Fragmented/Multiple Diagonals:** L0H5 and L5H6 show dots forming segments of multiple parallel diagonals.

* **Near-Empty:** L3H4 is almost entirely white, with only one or two faint dots visible on the far left edge.

---

### Key Observations

1. **Direct Comparison of Specific Heads:** Several heads shown in the Sparse GPT2 panel are also present in the standard GPT2 panel. Comparing them reveals the exact effect of the sparsification process:

* **L0H5:** In standard GPT2, it has a solid main diagonal and a solid offset diagonal. In Sparse GPT2, the continuous lines are broken into discrete, separated dots, and any background noise is removed.

* **L6H8:** In standard GPT2, it is a solid, continuous main diagonal. In Sparse GPT2, it is a dotted diagonal.

* **L1H0:** In standard GPT2, it has a strong diagonal with a diffuse blue wash below it. In Sparse GPT2, the diffuse wash is completely eliminated, leaving only the diagonal dots.

2. **Elimination of the "Attention Sink":** The prominent vertical line on the left edge (attention to the first token) seen frequently in standard GPT2 (e.g., L0H0, L7H6) is notably absent in the sample of Sparse GPT2 heads provided.

3. **Discretization:** The Sparse GPT2 model forces attention to be binary or highly localized, rather than distributed continuously across a sequence.

---

### Interpretation

This image serves as a technical diagnostic visualization demonstrating the internal mechanics of attention heads in Transformer-based language models, specifically highlighting the impact of applying a sparsity constraint.

**Reading Between the Lines (Peircean Investigative Analysis):**

* **Head Specialization:** The standard GPT2 panel proves that different attention heads learn distinct, specialized roles without human intervention. Some act as "previous token" fetchers (diagonals), some look for specific syntactic offsets (offset diagonals), and some aggregate broad context (diffuse).

* **The "Attention Sink" Phenomenon:** The heavy vertical lines on the left of many standard GPT2 plots represent the model using the first token (often a `[BOS]` or starting token) as a "sink." When a head doesn't find anything relevant in the past context to attend to, it dumps its attention mass onto the first token to satisfy the mathematical requirement that attention weights sum to 1.0.

* **The Purpose of Sparsity:** The bottom panel demonstrates what happens when a model is trained or modified to be "sparse" (likely through techniques like sparse attention masks, thresholding, or specific regularization). The diffuse "noise" and the continuous lines are stripped away.

* **Efficiency vs. Expressivity:** The Sparse GPT2 maps suggest that much of the continuous attention in standard GPT2 might be redundant or unnecessary for certain tasks. By reducing attention to discrete points (dots instead of solid lines/washes), the model requires significantly less memory and compute (as zero-values don't need to be calculated in sparse matrix operations). However, the visual starkness of L3H4 (nearly empty) suggests that forcing sparsity might effectively "kill" or render certain heads inactive if they previously relied on broad, diffuse context gathering.

DECODING INTELLIGENCE...