TECHNICAL ASSET FINGERPRINT

3727160a74afcdf974a3385b

Click to view fullscreen

Press ESC or click to close

FOUND IN PAPERS

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

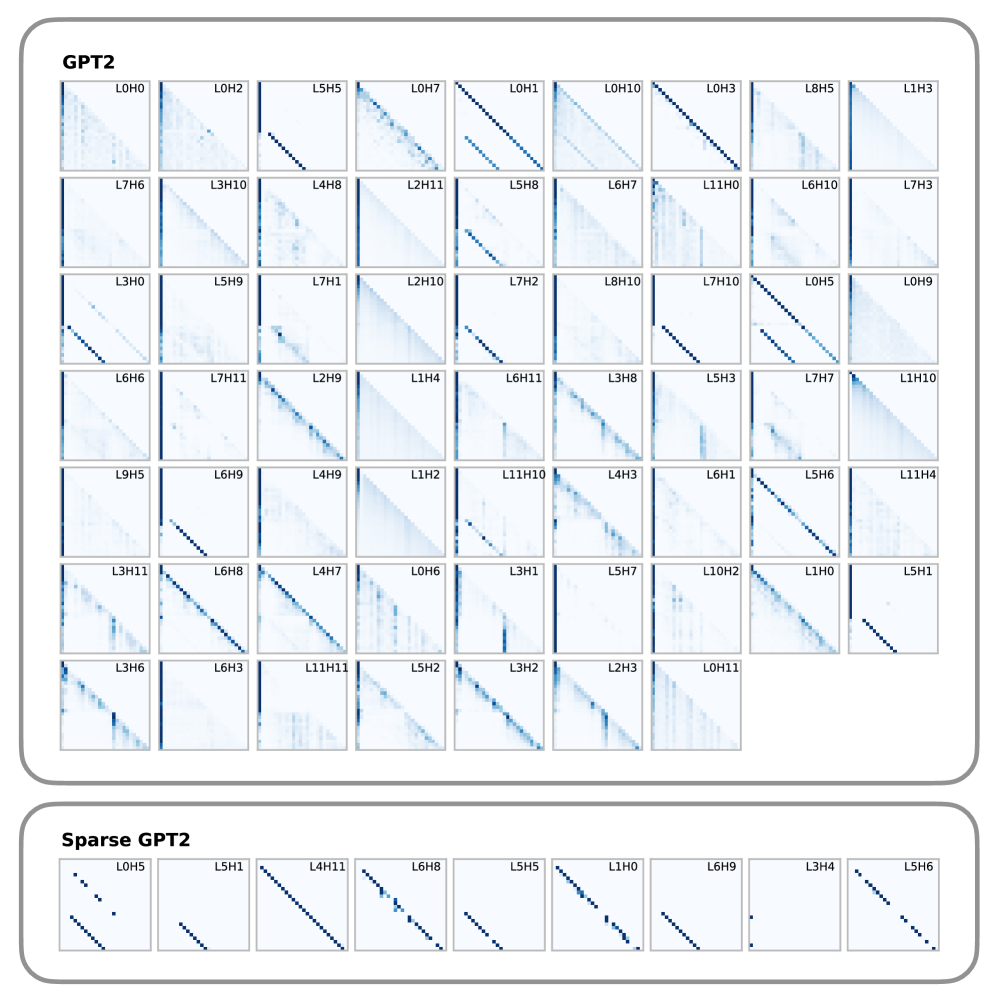

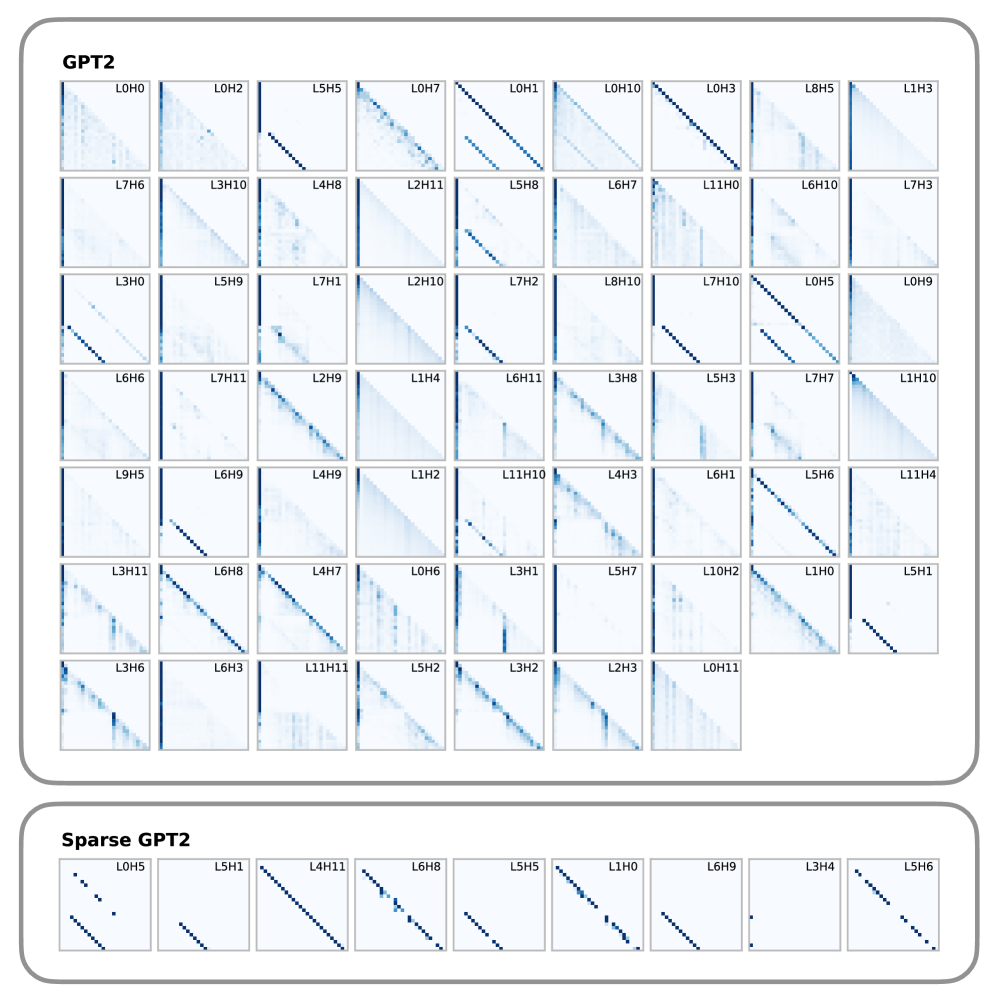

## Attention Pattern Visualization: GPT-2 vs. Sparse GPT-2

### Overview

The image displays a comparative visualization of attention patterns from two transformer models: a standard "GPT2" model and a "Sparse GPT2" model. The visualization consists of two distinct panels, each containing a grid of small, square subplots. Each subplot represents the attention pattern of a specific attention head within a specific layer of the model. The patterns are depicted as heatmaps where dark blue dots indicate high attention weights between token positions, and white space indicates low or zero attention.

### Components/Axes

* **Panel Titles:** The top panel is labeled **"GPT2"**. The bottom panel is labeled **"Sparse GPT2"**.

* **Subplot Labels:** Each subplot has a unique identifier in its top-left corner, following the format `L[Layer Number]H[Head Number]`. For example, `L0H0` denotes Layer 0, Head 0.

* **Subplot Content:** Each subplot is a square matrix. The x-axis and y-axis of these matrices represent token positions in a sequence (e.g., from the first token to the last). The color intensity at coordinate (i, j) represents the attention weight from token `i` to token `j`.

* **Color Scale:** A monochromatic blue scale is used. Dark blue signifies high attention weight, while white signifies low or zero attention weight. No explicit color bar legend is provided.

### Detailed Analysis

#### **GPT2 Panel (Top)**

This panel contains a large grid of 59 attention head visualizations, arranged in 7 rows. The number of subplots per row varies (Row 1: 10, Row 2: 10, Row 3: 10, Row 4: 10, Row 5: 10, Row 6: 9, Row 7: 7).

**Complete List of Head Labels (Row-wise, Left to Right):**

* **Row 1:** L0H0, L0H2, L5H5, L0H7, L0H1, L0H10, L0H3, L8H5, L1H3

* **Row 2:** L7H6, L3H10, L4H8, L2H11, L5H8, L6H7, L11H0, L6H10, L7H3

* **Row 3:** L3H0, L5H9, L7H1, L2H10, L7H2, L8H10, L7H10, L0H5, L0H9

* **Row 4:** L6H6, L7H11, L2H9, L1H4, L6H11, L3H8, L5H3, L7H7, L1H10

* **Row 5:** L9H5, L6H9, L4H9, L1H2, L11H10, L4H3, L6H1, L5H6, L11H4

* **Row 6:** L3H11, L6H8, L4H7, L0H6, L3H1, L5H7, L10H2, L1H0, L5H1

* **Row 7:** L3H6, L6H3, L11H11, L5H2, L3H2, L2H3, L0H11

**Visual Trend & Pattern Distribution:**

The attention patterns in the standard GPT-2 model are highly diverse:

1. **Diagonal Patterns:** Many heads (e.g., L5H5, L0H1, L0H3, L7H2, L5H6) show a strong, clean diagonal line. This indicates a "local" or "previous-token" attention pattern, where each token attends primarily to itself or the immediately preceding token.

2. **Vertical/Horizontal Lines:** Some heads (e.g., L0H7, L6H7, L3H1) show vertical or horizontal lines. A vertical line means a specific token is attended to by all other tokens (a "global" or "summary" token). A horizontal line means a specific token attends to all other tokens.

3. **Scattered/Diffuse Patterns:** A significant number of heads (e.g., L0H0, L7H6, L3H10, L9H5) exhibit scattered, diffuse attention across the matrix, suggesting more complex, non-local relationships.

4. **Blocky/Clustered Patterns:** Some heads (e.g., L4H8, L2H11, L1H4) show attention concentrated in blocks or clusters, indicating attention within phrases or syntactic units.

#### **Sparse GPT2 Panel (Bottom)**

This panel contains a single row of 9 attention head visualizations.

**Complete List of Head Labels (Left to Right):**

L0H5, L5H1, L4H11, L6H8, L5H5, L1H0, L6H9, L3H4, L5H6

**Visual Trend & Pattern Distribution:**

The attention patterns in the Sparse GPT-2 model are strikingly uniform and distinct from the standard model:

1. **Dominant Diagonal:** **Every single head** (L0H5, L5H1, L4H11, L6H8, L5H5, L1H0, L6H9, L3H4, L5H6) displays a very clean, sharp diagonal line. This is the defining characteristic of this panel.

2. **Sparsity:** The off-diagonal areas are almost entirely white, indicating near-zero attention weights. This visual sparsity is the direct result of the "Sparse" modification, which likely prunes or masks attention connections to enforce this diagonal, local pattern.

3. **Consistency:** There is almost no variation in pattern type across the sampled heads. The sparsity constraint appears to have homogenized the attention behavior towards a strict local focus.

### Key Observations

1. **Pattern Diversity vs. Uniformity:** The most striking contrast is between the high diversity of attention patterns in standard GPT-2 and the extreme uniformity in Sparse GPT-2.

2. **Sparsity Enforcement:** The Sparse GPT-2 visualization provides clear visual evidence of a sparsity mechanism at work, successfully limiting attention to a local, diagonal band.

3. **Layer/Head Specificity:** In the standard GPT-2 panel, patterns are not strictly organized by layer. For example, Layer 0 contains both diagonal (L0H1, L0H3) and diffuse (L0H0) heads. This suggests functional specialization occurs at the head level, not uniformly across a layer.

4. **Potential Redundancy:** The Sparse GPT-2 panel shows multiple heads (e.g., L5H5 and L5H6) with nearly identical diagonal patterns, suggesting potential redundancy in the sparse model's attention heads.

### Interpretation

This visualization is a powerful diagnostic tool for understanding the internal mechanics of transformer models.

* **What the data demonstrates:** It provides direct visual proof of how a "sparse" attention modification alters model behavior. The standard GPT-2 model utilizes a rich repertoire of attention strategies (local, global, clustered, diffuse) to build its representations. In contrast, the Sparse GPT-2 model is constrained to a much simpler, local-only strategy.

* **Relationship between elements:** The two panels serve as a controlled comparison. By showing heads from similar layers (e.g., L5H5 appears in both), the image isolates the effect of the sparsity constraint. The diversity in the top panel is the "baseline" behavior, while the uniformity in the bottom panel is the "constrained" outcome.

* **Implications and Anomalies:**

* **Efficiency vs. Capability:** The sparse model's patterns suggest a potential gain in computational efficiency (fewer non-zero operations) but raise questions about its ability to model long-range dependencies, which are crucial for tasks like coreference resolution or document-level understanding.

* **Interpretability:** The sparse patterns are far easier to interpret (each token focuses only on its immediate context), which could be beneficial for model transparency and debugging.

* **Investigative Question:** A key follow-up question would be: Does this enforced locality hurt the model's performance on downstream tasks that require non-local reasoning? The visualization alone cannot answer this, but it frames the critical hypothesis to test. The homogeneity in the sparse model might also indicate an over-regularization, where useful, diverse attention patterns have been inadvertently suppressed.

DECODING INTELLIGENCE...