## Line Chart: SciQ Dataset Performance Comparison

### Overview

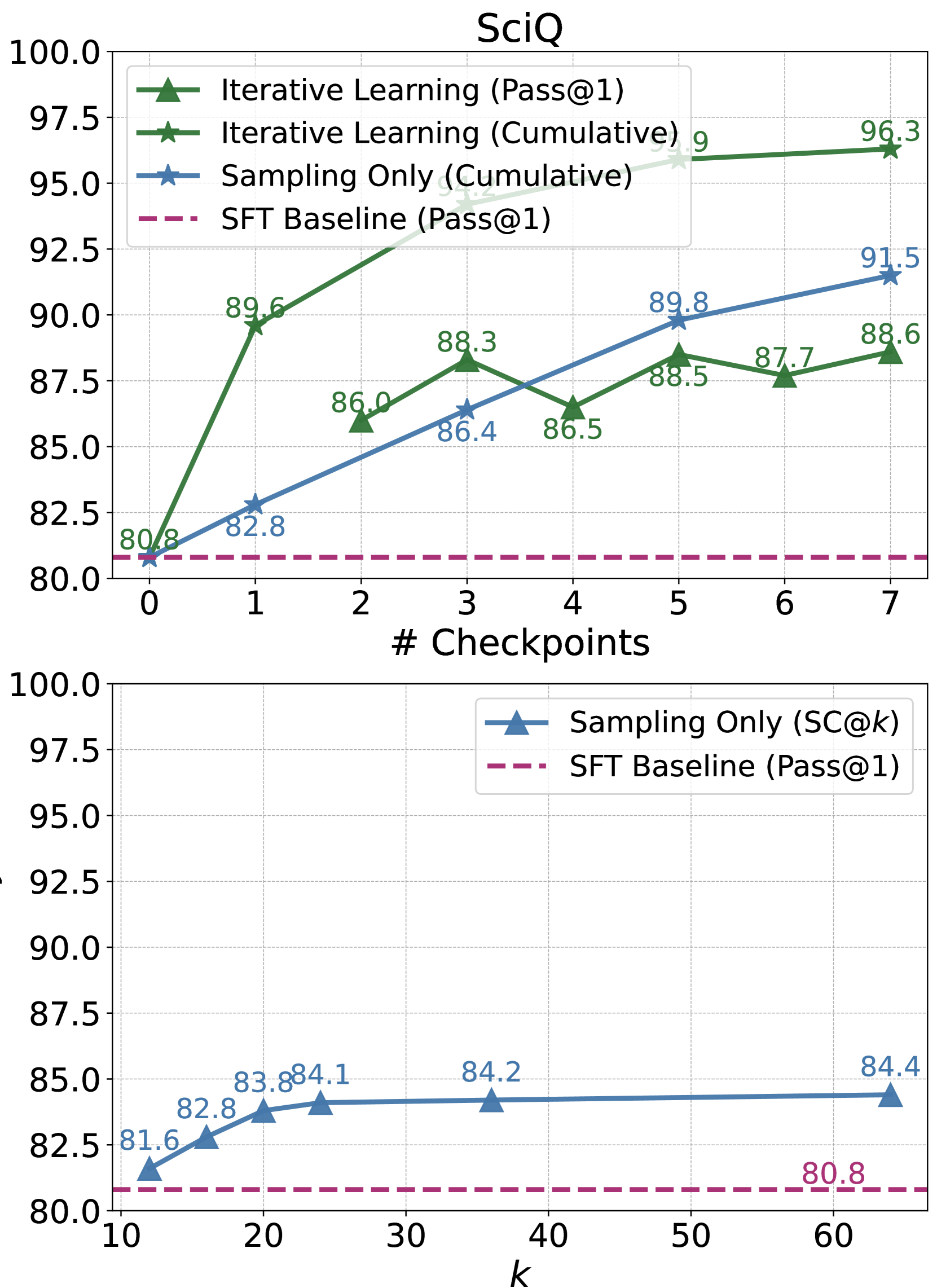

The image contains two line charts stacked vertically, both titled "SciQ". They compare the performance of different learning or sampling methods on the SciQ question-answering dataset. The top chart tracks performance across training checkpoints, while the bottom chart examines performance as a function of a parameter `k`.

### Components/Axes

**Top Chart:**

* **Title:** SciQ

* **X-axis:** Label: "# Checkpoints". Scale: Linear, from 0 to 7, with integer markers.

* **Y-axis:** Unlabeled, but represents a performance metric (likely accuracy percentage). Scale: Linear, from 80.0 to 100.0, with increments of 2.5.

* **Legend (Top-Left):**

  * `Iterative Learning (Pass@1)`: Green line with upward-pointing triangle markers.

  * `Iterative Learning (Cumulative)`: Dark green line with star markers.

  * `Sampling Only (Cumulative)`: Blue line with star markers.

  * `SFT Baseline (Pass@1)`: Magenta dashed line.

**Bottom Chart:**

* **X-axis:** Label: "k". Scale: Linear, from 10 to 60, with increments of 10.

* **Y-axis:** Unlabeled, same scale as top chart (80.0 to 100.0).

* **Legend (Top-Right):**

  * `Sampling Only (SC@k)`: Blue line with upward-pointing triangle markers.

  * `SFT Baseline (Pass@1)`: Magenta dashed line.

### Detailed Analysis

**Top Chart - Performance vs. Checkpoints:**

1. **Iterative Learning (Cumulative) [Dark Green, Star]:**

   * **Trend:** Monotonic increase with shrinking gains. Starts at the baseline (80.8) and reaches the highest value of any series (96.3). No values are listed for this series after checkpoint 5.

   * **Data Points:**

     * Checkpoint 0: 80.8

     * Checkpoint 1: 89.6

     * Checkpoint 2: 93.2 (approximate, label partially obscured)

     * Checkpoint 3: 94.9 (approximate, label partially obscured)

     * Checkpoint 4: 95.9 (approximate, label partially obscured)

     * Checkpoint 5: 96.3

2. **Sampling Only (Cumulative) [Blue, Star]:**

   * **Trend:** Steady, nearly linear rise of about 1.7 to 2.0 points per checkpoint through checkpoint 5, slowing to under 1 point per checkpoint afterward. Stays above the SFT baseline after checkpoint 0, but overtakes Iterative Learning (Pass@1) only from checkpoint 4 onward.

   * **Data Points:**

     * Checkpoint 0: 80.8

     * Checkpoint 1: 82.8

     * Checkpoint 2: 84.5 (approximate, interpolated between labeled points)

     * Checkpoint 3: 86.4

     * Checkpoint 4: 88.1 (approximate, interpolated)

     * Checkpoint 5: 89.8

     * Checkpoint 6: 90.7 (approximate, interpolated)

     * Checkpoint 7: 91.5

3. **Iterative Learning (Pass@1) [Green, Triangle]:**

   * **Trend:** Volatile. Jumps to its peak of 89.6 at checkpoint 1, then alternates between dips and recoveries within the range 86.0 to 88.6. It never exceeds Iterative Learning (Cumulative) and falls below Sampling Only (Cumulative) from checkpoint 4 onward.

   * **Data Points:**

     * Checkpoint 0: 80.8

     * Checkpoint 1: 89.6

     * Checkpoint 2: 86.0

     * Checkpoint 3: 88.3

     * Checkpoint 4: 86.5

     * Checkpoint 5: 88.5

     * Checkpoint 6: 87.7

     * Checkpoint 7: 88.6

4. **SFT Baseline (Pass@1) [Magenta, Dashed]:**

   * **Trend:** Flat horizontal line, indicating constant performance.

   * **Data Point:** Constant at 80.8 across all checkpoints.
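The trends described for the top chart can be checked directly against the listed values. The sketch below is only a convenience for doing so: the numbers are transcribed from the data points above (several of which are approximate readings), and the variable and function names are illustrative rather than part of any original analysis.

```python
# Values transcribed from the top chart; several are approximate readings.
iter_cumulative = [80.8, 89.6, 93.2, 94.9, 95.9, 96.3]                   # checkpoints 0-5
sampling_cumulative = [80.8, 82.8, 84.5, 86.4, 88.1, 89.8, 90.7, 91.5]   # checkpoints 0-7
iter_pass1 = [80.8, 89.6, 86.0, 88.3, 86.5, 88.5, 87.7, 88.6]            # checkpoints 0-7


def step_changes(series):
    """Change in score between consecutive checkpoints, rounded to 0.1."""
    return [round(b - a, 1) for a, b in zip(series, series[1:])]


def first_crossover(challenger, reference):
    """First checkpoint at which `challenger` strictly exceeds `reference`, else None."""
    for checkpoint, (c, r) in enumerate(zip(challenger, reference)):
        if c > r:
            return checkpoint
    return None


def sign_reversals(series):
    """Number of times the checkpoint-to-checkpoint change flips sign."""
    deltas = [d for d in step_changes(series) if d != 0]
    return sum(1 for a, b in zip(deltas, deltas[1:]) if a * b < 0)


print("Iterative (Cumulative) gains:", step_changes(iter_cumulative))
print("Sampling (Cumulative) overtakes Iterative (Pass@1) at checkpoint:",
      first_crossover(sampling_cumulative, iter_pass1))
print("Direction reversals, Pass@1 vs. Sampling (Cumulative):",
      sign_reversals(iter_pass1), sign_reversals(sampling_cumulative))
```

Counting sign reversals is a deliberately crude volatility measure; it is used here because it needs no assumptions about the noise in the approximate readings.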

**Bottom Chart - Performance vs. k:**

1. **Sampling Only (SC@k) [Blue, Triangle]:**

   * **Trend:** Saturating growth with diminishing returns. Performance rises by 2.5 points from `k`=10 to `k`=25, then by only 0.3 points from `k`=25 to `k`=60.

   * **Data Points:**

     * k=10: 81.6

     * k=15: 82.8

     * k=20: 83.8

     * k=25: 84.1

     * k=40: 84.2

     * k=60: 84.4

2. **SFT Baseline (Pass@1) [Magenta, Dashed]:**

   * **Trend:** Flat horizontal line.

   * **Data Point:** Constant at 80.8.
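The diminishing returns in the bottom chart can be quantified with a few lines of arithmetic. The following sketch uses only the values listed above; the helper names are hypothetical, and "share of total gain" is measured relative to the best observed SC@k score, not to any theoretical ceiling.

```python
# Values transcribed from the bottom chart.
sft_baseline = 80.8
sc_scores = {10: 81.6, 15: 82.8, 20: 83.8, 25: 84.1, 40: 84.2, 60: 84.4}


def gain_per_sample(scores):
    """Average score gain per additional sample between consecutive k values."""
    ks = sorted(scores)
    return {
        (k0, k1): round((scores[k1] - scores[k0]) / (k1 - k0), 3)
        for k0, k1 in zip(ks, ks[1:])
    }


def share_of_total_gain(scores, baseline):
    """Fraction of the largest observed gain over the baseline reached at each k."""
    best_gain = max(scores.values()) - baseline
    return {k: round((v - baseline) / best_gain, 2) for k, v in scores.items()}


for interval, gain in gain_per_sample(sc_scores).items():
    print(f"k {interval[0]} -> {interval[1]}: {gain} points per extra sample")

for k, share in share_of_total_gain(sc_scores, sft_baseline).items():
    print(f"k={k}: {share:.0%} of the best observed gain over the SFT baseline")
```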

### Key Observations

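* **Cumulative vs. Pass@1:** "Iterative Learning (Cumulative)" matches or exceeds "Iterative Learning (Pass@1)" at every checkpoint where both are reported (0 to 5), and both cumulative curves stay at or above the SFT baseline. "Sampling Only (Cumulative)", however, trails "Iterative Learning (Pass@1)" through checkpoint 3 and overtakes it only from checkpoint 4 onward.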

* **Iterative Learning Peak:** The "Iterative Learning (Cumulative)" method achieves the highest overall performance (96.3 at checkpoint 5).

* **Baseline Performance:** The SFT Baseline is static at 80.8, serving as a fixed reference point.

* **Diminishing Returns on k:** The bottom chart shows that increasing `k` beyond ~25 yields minimal performance gains for the "Sampling Only (SC@k)" method.

* **Volatility in Pass@1:** The "Iterative Learning (Pass@1)" curve changes direction at every checkpoint after the first, with swings of 0.8 to 3.6 points, unlike the monotonic cumulative curves.
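The contrast between the Pass@1 and Cumulative curves can be made concrete with a small sketch. It assumes, as one plausible reading that the chart itself does not confirm, that "Cumulative" counts a question as solved once any checkpoint up to the current one has answered it correctly. The function names and the toy data are hypothetical.

```python
def pass_at_1(correct_by_checkpoint):
    """Per-checkpoint accuracy (%) from rows of per-question booleans."""
    return [100.0 * sum(row) / len(row) for row in correct_by_checkpoint]


def cumulative_accuracy(correct_by_checkpoint):
    """Share (%) of questions answered correctly by at least one checkpoint so far.

    ASSUMPTION: this is one plausible reading of "Cumulative"; the chart
    does not define the metric.
    """
    solved = [False] * len(correct_by_checkpoint[0])
    curve = []
    for row in correct_by_checkpoint:
        # A question stays solved once any earlier checkpoint got it right.
        solved = [s or c for s, c in zip(solved, row)]
        curve.append(100.0 * sum(solved) / len(solved))
    return curve


# Toy data (hypothetical): 3 checkpoints x 5 questions, not taken from SciQ.
toy = [
    [True, True, False, False, False],
    [True, False, True, True, False],
    [False, True, True, False, True],
]
print(pass_at_1(toy))
print(cumulative_accuracy(toy))
```

Under this assumed definition, the cumulative curve can never decrease and never falls below Pass@1 at the same checkpoint, which would be consistent with the smooth cumulative lines in the top chart.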

### Interpretation

The data suggest that iterative learning improves on the supervised fine-tuning (SFT) baseline on the SciQ benchmark, and that cumulative metrics show larger and steadier gains than single-attempt (Pass@1) accuracy.

1. **Methodological Insight:** The gap between the "Cumulative" and "Pass@1" lines for Iterative Learning widens from 0 points at checkpoint 1 to 7.8 points at checkpoint 5. Assuming the cumulative metric aggregates correct answers across checkpoints, this suggests that the set of questions answered correctly at some point keeps growing even though single-attempt accuracy at any one checkpoint plateaus between 86.0 and 88.6. This highlights the value of methods that leverage multiple samples or iterations.

2. **Training Progression:** The top chart shows that performance gains are not linear. For "Iterative Learning (Cumulative)", the largest gain occurs between checkpoints 0 and 1 (+8.8 points), after which gains shrink steadily (+3.6, +1.7, +1.0, +0.4).

3. **Resource vs. Performance Trade-off:** The bottom chart provides a practical guide for the `k` parameter in self-consistency (SC) decoding. A `k` value between 20 and 40 offers a good balance: `k`=25 already reaches 84.1, within 0.3 points of the `k`=60 result (84.4), at less than half the sampling cost.

4. **Overall Conclusion:** Iterative Learning yields the highest scores on SciQ: 96.3 on the cumulative metric and 86.0 to 89.6 on Pass@1 after checkpoint 0, compared with 91.5 for "Sampling Only (Cumulative)" and 84.4 for SC@k at `k`=60. Sampling-based methods still surpass the static SFT baseline (80.8), and for SC@k a moderate `k` value is sufficient.
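For readers unfamiliar with SC@k, self-consistency decoding is commonly implemented as a majority vote over `k` sampled answers. The sketch below illustrates that idea on toy data. It is not the evaluation code behind the chart, and the function names, the tie-breaking rule, and the sample data are all assumptions.

```python
from collections import Counter


def self_consistency_answer(samples):
    """Return the most frequent answer among the sampled answers (majority vote)."""
    if not samples:
        raise ValueError("need at least one sampled answer")
    # ASSUMPTION: ties go to the answer seen first, which is how
    # Counter.most_common orders elements with equal counts.
    return Counter(samples).most_common(1)[0][0]


def sc_at_k(sampled_answers, gold_answers, k):
    """Accuracy (%) when each question is answered by majority vote over its first k samples."""
    hits = sum(
        self_consistency_answer(samples[:k]) == gold
        for samples, gold in zip(sampled_answers, gold_answers)
    )
    return 100.0 * hits / len(gold_answers)


# Toy data (hypothetical), not taken from SciQ.
toy_samples = [
    ["A", "B", "B", "B"],
    ["C", "D", "C", "C"],
    ["A", "A", "B", "B"],
]
toy_gold = ["B", "C", "B"]

for k in (1, 3):
    print(f"SC@{k}: {sc_at_k(toy_samples, toy_gold, k):.1f}")
```

Each additional sample costs one more generation per question, so the cost of SC@k grows linearly in `k` while, per the bottom chart, the accuracy gain flattens after roughly `k`=25.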