## Heatmap Series: R1-Llama Model Performance Across Benchmarks

### Overview

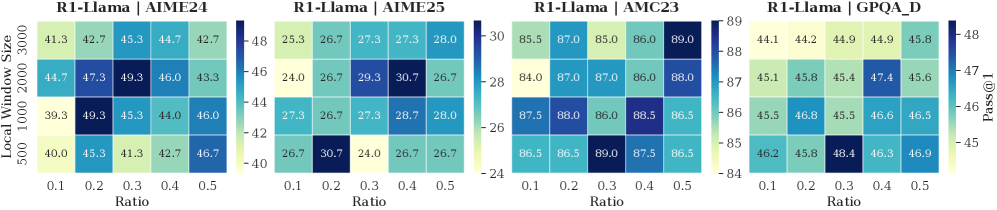

The image displays four horizontally arranged heatmaps, each showing the Pass@1 score of a model labeled "R1-Llama" on a different benchmark. Scores are plotted as a function of two hyperparameters: "Ratio" (x-axis) and "Local Window Size" (y-axis). All four heatmaps use the same light-yellow-to-dark-blue coloring, with darker blue indicating higher Pass@1, but each has its own color-bar range.

### Components/Axes

* **Titles (Top of each heatmap, left to right):**

    1. `R1-Llama | AIME24`

    2. `R1-Llama | AIME25`

    3. `R1-Llama | AMC23`

    4. `R1-Llama | GPQA_D`

* **Common X-Axis (Bottom of each heatmap):** Labeled `Ratio`. Ticks are at values: `0.1`, `0.2`, `0.3`, `0.4`, `0.5`.

* **Common Y-Axis (Left side of the first heatmap, applies to all):** Labeled `Local Window Size`. Ticks are at values: `500`, `1000`, `2000`, `3000` (ordered from bottom to top).

* **Color Bars (Right side of each heatmap):** Each has a vertical color bar labeled `Pass@1` at the top. The numerical scale varies per heatmap:

    * **AIME24:** Scale from ~40 (light yellow) to ~48 (dark blue).

    * **AIME25:** Scale from ~24 (light yellow) to ~30 (dark blue).

    * **AMC23:** Scale from ~84 (light yellow) to ~89 (dark blue).

    * **GPQA_D:** Scale from ~45 (light yellow) to ~48 (dark blue).

* **Data Grids:** Each heatmap is a 4 (rows) x 5 (columns) grid of colored cells, with the Pass@1 score printed inside each cell.
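
Because the y-axis ticks run bottom to top while the rows of each grid read top to bottom, cell indexing is easy to get wrong. The following is a minimal sketch of one way to address a cell, assuming the AIME24 values listed under Detailed Analysis. The names `RATIOS`, `WINDOWS_TOP_DOWN`, and `pass_at_1` are hypothetical helpers for illustration and do not come from the figure.

```python
# Axis values as read from the figure.
RATIOS = [0.1, 0.2, 0.3, 0.4, 0.5]           # columns, left to right
WINDOWS_TOP_DOWN = [3000, 2000, 1000, 500]    # rows, top to bottom

# AIME24 Pass@1 values, transcribed row by row from the heatmap.
AIME24 = [
    [41.3, 42.7, 45.3, 44.7, 42.7],  # Window 3000
    [44.7, 47.3, 49.3, 46.0, 43.3],  # Window 2000
    [39.3, 49.3, 45.3, 44.0, 46.0],  # Window 1000
    [40.0, 45.3, 41.3, 42.7, 46.7],  # Window 500
]


def pass_at_1(grid, ratio, window):
    """Return the score stored at a (ratio, window) cell of a 4x5 grid."""
    row = WINDOWS_TOP_DOWN.index(window)  # ValueError if not an axis tick
    col = RATIOS.index(ratio)
    return grid[row][col]


print(pass_at_1(AIME24, ratio=0.3, window=2000))  # 49.3
print(pass_at_1(AIME24, ratio=0.1, window=500))   # 40.0
```

Looking cells up by axis value rather than by raw index keeps the top-to-bottom row order in one place.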

### Detailed Analysis

**1. R1-Llama | AIME24**

* **Trend:** Scores peak in the interior of the grid (Ratio 0.2 to 0.3, Window 1000 to 2000), and Ratio=0.1 gives the lowest score in every row except Window=2000.

* **Data Points (rows from top [Window=3000] to bottom [Window=500]; columns from left [Ratio=0.1] to right [Ratio=0.5]):**

    * Window 3000: 41.3, 42.7, 45.3, 44.7, 42.7

    * Window 2000: 44.7, 47.3, **49.3**, 46.0, 43.3

    * Window 1000: 39.3, **49.3**, 45.3, 44.0, 46.0

    * Window 500: 40.0, 45.3, 41.3, 42.7, 46.7

* **Peak Value:** 49.3, achieved at two points: (Ratio=0.3, Window=2000) and (Ratio=0.2, Window=1000).

**2. R1-Llama | AIME25**

* **Trend:** Performance is uneven across the grid. The best (30.7) and worst (24.0) scores each appear twice, and both occur in the Window=2000 and Window=500 rows.

* **Data Points:**

    * Window 3000: 25.3, 26.7, 27.3, 27.3, 28.0

    * Window 2000: 24.0, 26.7, 29.3, **30.7**, 26.7

    * Window 1000: 27.3, 26.7, 27.3, 28.7, 28.0

    * Window 500: 26.7, **30.7**, 24.0, 26.7, 26.7

* **Peak Value:** 30.7, achieved at (Ratio=0.4, Window=2000) and (Ratio=0.2, Window=500).

**3. R1-Llama | AMC23**

* **Trend:** Performance is high throughout: all 20 cells fall between 84.0 and 89.0, and two of them reach the 89.0 maximum.

* **Data Points:**

    * Window 3000: 85.5, 87.0, 85.0, 86.0, **89.0**

    * Window 2000: 84.0, 87.0, 87.0, 86.0, 88.0

    * Window 1000: 87.5, 88.0, 86.0, 88.5, 86.5

    * Window 500: 86.5, 86.5, **89.0**, 87.5, 86.5

* **Peak Value:** 89.0, achieved at (Ratio=0.5, Window=3000) and (Ratio=0.3, Window=500).

**4. R1-Llama | GPQA_D**

* **Trend:** Row averages rise as the window shrinks, from about 44.8 at Window=3000 to about 46.7 at Window=500. The best Ratio differs from row to row.

* **Data Points:**

    * Window 3000: 44.1, 44.2, 44.9, 44.9, 45.8

    * Window 2000: 45.1, 45.8, 45.4, **47.4**, 45.6

    * Window 1000: 45.5, 46.8, 45.5, 46.6, 46.5

    * Window 500: 46.2, 45.8, **48.4**, 46.3, 46.9

* **Peak Value:** 48.4, achieved at (Ratio=0.3, Window=500).
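
The peak cells reported above can be re-derived from the transcribed grids. The sketch below assumes the values are copied correctly from the heatmaps; `GRIDS`, `WINDOWS`, and `peak_cells` are hypothetical names introduced only for this illustration.

```python
RATIOS = [0.1, 0.2, 0.3, 0.4, 0.5]    # columns, left to right
WINDOWS = [3000, 2000, 1000, 500]      # rows, top to bottom

# Pass@1 values transcribed from the four heatmaps.
GRIDS = {
    "AIME24": [[41.3, 42.7, 45.3, 44.7, 42.7],
               [44.7, 47.3, 49.3, 46.0, 43.3],
               [39.3, 49.3, 45.3, 44.0, 46.0],
               [40.0, 45.3, 41.3, 42.7, 46.7]],
    "AIME25": [[25.3, 26.7, 27.3, 27.3, 28.0],
               [24.0, 26.7, 29.3, 30.7, 26.7],
               [27.3, 26.7, 27.3, 28.7, 28.0],
               [26.7, 30.7, 24.0, 26.7, 26.7]],
    "AMC23": [[85.5, 87.0, 85.0, 86.0, 89.0],
              [84.0, 87.0, 87.0, 86.0, 88.0],
              [87.5, 88.0, 86.0, 88.5, 86.5],
              [86.5, 86.5, 89.0, 87.5, 86.5]],
    "GPQA_D": [[44.1, 44.2, 44.9, 44.9, 45.8],
               [45.1, 45.8, 45.4, 47.4, 45.6],
               [45.5, 46.8, 45.5, 46.6, 46.5],
               [46.2, 45.8, 48.4, 46.3, 46.9]],
}


def peak_cells(grid):
    """Return the maximum score and every (ratio, window) cell that attains it."""
    best = max(max(row) for row in grid)
    cells = [(RATIOS[c], WINDOWS[r])
             for r, row in enumerate(grid)
             for c, value in enumerate(row)
             if value == best]
    return best, cells


for name, grid in GRIDS.items():
    best, cells = peak_cells(grid)
    print(f"{name}: peak {best} at {cells}")
```

Returning all tied cells, not just the first maximum, matters here because three of the four benchmarks have two cells sharing the top score.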

### Key Observations

1. **Benchmark-Dependent Optima:** The optimal combination of `Ratio` and `Local Window Size` varies significantly between benchmarks. There is no single "best" configuration.

2. **Performance Range:** The absolute Pass@1 scores differ greatly by benchmark (AIME25: ~24-31, AIME24: ~39-49, GPQA_D: ~44-48, AMC23: ~84-89). Since every panel reports the same metric, the gaps indicate that the benchmarks differ in difficulty for this model.

3. **Parameter Sensitivity:** GPQA_D and AMC23 are the most stable, varying by about 4.3 and 5.0 points across the grid. AIME25 varies by 6.7 points and AIME24 by 10.0, so the two AIME benchmarks are the most sensitive to parameter changes.

4. **Peak Locations:** Six of the seven peak cells fall at mid-range Ratios (0.2-0.4); the exception is AMC23 at Ratio=0.5. Peak cells occur at every window size, so no window size is consistently best.
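
The range and sensitivity observations can be quantified with two simple statistics: the max-minus-min spread of each grid and the per-row (per-window) mean. This is a sketch under the assumption that the transcribed values are exact; `spread` and `row_means` are hypothetical helper names, and spread is only one of several reasonable sensitivity measures.

```python
WINDOWS = [3000, 2000, 1000, 500]  # rows, top to bottom

# Pass@1 values transcribed from the four heatmaps (columns: Ratio 0.1 to 0.5).
GRIDS = {
    "AIME24": [[41.3, 42.7, 45.3, 44.7, 42.7],
               [44.7, 47.3, 49.3, 46.0, 43.3],
               [39.3, 49.3, 45.3, 44.0, 46.0],
               [40.0, 45.3, 41.3, 42.7, 46.7]],
    "AIME25": [[25.3, 26.7, 27.3, 27.3, 28.0],
               [24.0, 26.7, 29.3, 30.7, 26.7],
               [27.3, 26.7, 27.3, 28.7, 28.0],
               [26.7, 30.7, 24.0, 26.7, 26.7]],
    "AMC23": [[85.5, 87.0, 85.0, 86.0, 89.0],
              [84.0, 87.0, 87.0, 86.0, 88.0],
              [87.5, 88.0, 86.0, 88.5, 86.5],
              [86.5, 86.5, 89.0, 87.5, 86.5]],
    "GPQA_D": [[44.1, 44.2, 44.9, 44.9, 45.8],
               [45.1, 45.8, 45.4, 47.4, 45.6],
               [45.5, 46.8, 45.5, 46.6, 46.5],
               [46.2, 45.8, 48.4, 46.3, 46.9]],
}


def spread(grid):
    """Difference between the best and worst cell, rounded to one decimal."""
    values = [v for row in grid for v in row]
    return round(max(values) - min(values), 1)


def row_means(grid):
    """Mean Pass@1 for each window size, averaged over the five ratios."""
    return {w: round(sum(row) / len(row), 2) for w, row in zip(WINDOWS, grid)}


for name, grid in GRIDS.items():
    print(f"{name}: spread {spread(grid)} points")

print("GPQA_D row means:", row_means(GRIDS["GPQA_D"]))
```

With the values above, the spreads come out to 10.0 (AIME24), 6.7 (AIME25), 5.0 (AMC23), and 4.3 (GPQA_D).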

### Interpretation

This visualization is a hyperparameter sensitivity analysis for the R1-Llama model. It shows that the model's Pass@1 score on each benchmark depends jointly on `Ratio` (likely a sampling or filtering parameter) and `Local Window Size` (likely the context or attention window).

The key takeaway is that **hyperparameter tuning is benchmark-specific**. A configuration that excels on AMC23 (Ratio=0.5, Window=3000, Pass@1 of 89.0) is suboptimal for AIME25, where the same cell scores 28.0 against a peak of 30.7. This suggests that the benchmarks respond differently to the two parameters and may call for different operating points. The heatmaps can guide parameter selection: for a given target benchmark, choose the `Ratio` and `Local Window Size` of the darkest blue cell. The absence of a universal optimum highlights the trade-offs involved in model configuration and the importance of empirical validation across diverse evaluation sets.
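
To make the trade-off concrete, one can measure how far each benchmark falls below its own peak when a single configuration is used everywhere. The sketch below assumes the transcribed grid values; `gap_to_best` is a hypothetical helper, and "gap to per-benchmark peak" is just one possible way to compare configurations, not a method taken from the figure.

```python
RATIOS = [0.1, 0.2, 0.3, 0.4, 0.5]    # columns, left to right
WINDOWS = [3000, 2000, 1000, 500]      # rows, top to bottom

# Pass@1 values transcribed from the four heatmaps.
GRIDS = {
    "AIME24": [[41.3, 42.7, 45.3, 44.7, 42.7],
               [44.7, 47.3, 49.3, 46.0, 43.3],
               [39.3, 49.3, 45.3, 44.0, 46.0],
               [40.0, 45.3, 41.3, 42.7, 46.7]],
    "AIME25": [[25.3, 26.7, 27.3, 27.3, 28.0],
               [24.0, 26.7, 29.3, 30.7, 26.7],
               [27.3, 26.7, 27.3, 28.7, 28.0],
               [26.7, 30.7, 24.0, 26.7, 26.7]],
    "AMC23": [[85.5, 87.0, 85.0, 86.0, 89.0],
              [84.0, 87.0, 87.0, 86.0, 88.0],
              [87.5, 88.0, 86.0, 88.5, 86.5],
              [86.5, 86.5, 89.0, 87.5, 86.5]],
    "GPQA_D": [[44.1, 44.2, 44.9, 44.9, 45.8],
               [45.1, 45.8, 45.4, 47.4, 45.6],
               [45.5, 46.8, 45.5, 46.6, 46.5],
               [46.2, 45.8, 48.4, 46.3, 46.9]],
}


def gap_to_best(ratio, window):
    """Points lost on each benchmark, relative to its own peak, at one config."""
    r, c = WINDOWS.index(window), RATIOS.index(ratio)
    return {name: round(max(max(row) for row in grid) - grid[r][c], 1)
            for name, grid in GRIDS.items()}


# The configuration that is best for AMC23, applied to every benchmark.
print(gap_to_best(ratio=0.5, window=3000))
# One of the two configurations that are best for AIME25.
print(gap_to_best(ratio=0.4, window=2000))
```

A gap of 0.0 marks a benchmark for which the chosen cell is a peak; nonzero gaps show what the other benchmarks give up at that setting.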