TECHNICAL ASSET FINGERPRINT

378567c3fda1ff64513e3901

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

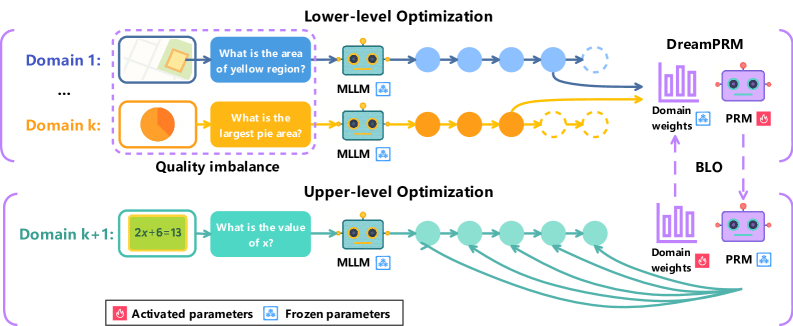

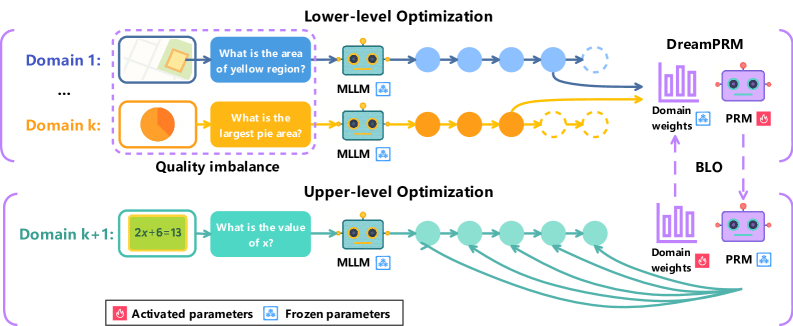

## Diagram: Multi-Level Optimization Process

### Overview

The image illustrates a multi-level optimization process made up of a lower-level and an upper-level optimization. It depicts how different domains and their associated tasks are processed using Multimodal Large Language Models (MLLMs) and Process Reward Models (PRMs), with a focus on domain weights and on which parameters are activated or frozen.

### Components/Axes

* **Title:** Lower-level Optimization (top), Upper-level Optimization (bottom)

* **Domains:**

  * Lower-level: Domain 1, ..., Domain k

  * Upper-level: Domain k+1

* **Tasks:**

  * Domain 1: "What is the area of yellow region?"

  * Domain k: "What is the largest pie area?"

  * Domain k+1: "What is the value of x?" (given the equation 2x+6=13)

* **Modules:** MLLM (Multimodal Large Language Model), PRM (Process Reward Model)

* **Processes:**

  * Forward pass through MLLMs

  * Domain weight adjustment

  * Parameter activation/freezing

* **Legend:**

  * Activated parameters (flame icon)

  * Frozen parameters (snowflake icon)

* **Arrows:** Indicate the flow of information and processes.

* **BLO:** Bi-Level Optimization

### Detailed Analysis

* **Lower-level Optimization:**

  * Starts with Domain 1, which includes an image with a yellow region. The task is "What is the area of yellow region?" This is processed by an MLLM. The output is represented by a series of blue circles, which eventually lead to DreamPRM.

  * Domain k includes a pie chart. The task is "What is the largest pie area?" This is processed by an MLLM. The output is represented by a series of orange circles, which eventually lead to DreamPRM.

  * The outputs from the MLLMs are fed into a "Domain weights" component within DreamPRM, which is represented by a bar graph.

  * The PRM module is connected to the "Domain weights" component. The parameters of the PRM are marked as "Activated parameters" (flame icon).

  * There is a "Quality imbalance" label between Domain k and Domain k+1.

* **Upper-level Optimization:**

  * Starts with Domain k+1, which includes the equation "2x+6=13". The task is "What is the value of x?" This is processed by an MLLM, whose output is represented by a series of teal circles that eventually lead to a "Domain weights" component, also drawn as a bar graph.

  * The PRM module is connected to the "Domain weights" component. The parameters of the PRM are marked as "Frozen parameters" (snowflake icon).

* **Connections:**

  * The "Domain weights" component in the lower-level optimization is connected to the "Domain weights" component in the upper-level optimization via a dashed arrow labeled "BLO".

  * The PRM in the lower-level optimization is connected to the PRM in the upper-level optimization via a dashed arrow.
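
The "Domain weights" bar graph sits between the lower-level domains and the PRM, which suggests that each domain's training signal is scaled before it reaches the PRM. A minimal Python sketch of that scaling follows. It assumes each domain supplies a list of per-example PRM losses and that a domain's weight multiplies its mean loss; the function name and domain keys are hypothetical and not taken from the diagram.

```python
from typing import Dict, List


def domain_weighted_loss(per_domain_losses: Dict[str, List[float]],
                         domain_weights: Dict[str, float]) -> float:
    """Combine per-example losses from several domains into one scalar.

    Each domain's losses are averaged first, so a domain with many examples
    does not dominate purely by size; the domain weight then scales that mean.
    """
    total = 0.0
    for domain, losses in per_domain_losses.items():
        if not losses:
            continue  # an empty batch contributes nothing
        mean_loss = sum(losses) / len(losses)
        total += domain_weights[domain] * mean_loss
    return total


# Usage with two hypothetical lower-level domains.
losses = {"domain_1": [0.2, 0.4], "domain_k": [1.0]}
weights = {"domain_1": 2.0, "domain_k": 0.5}
combined = domain_weighted_loss(losses, weights)  # about 2.0 * 0.3 + 0.5 * 1.0
```

Averaging within each domain before weighting means only the weights decide relative influence. This is one reasonable design choice, not necessarily the one DreamPRM uses.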

### Key Observations

* The diagram illustrates a hierarchical optimization process.

* The lower-level optimization deals with tasks related to image analysis and area calculation.

* The upper-level optimization deals with tasks related to equation solving.

* The MLLMs are used to process the tasks in each domain.

* The PRMs are linked to the domain weights, which scale how much each domain contributes to PRM training.

* The parameters of the PRM are activated in the lower-level optimization and frozen in the upper-level optimization.

* The BLO connects the domain weights between the lower and upper levels.

### Interpretation

The diagram represents a bi-level optimization strategy in which the lower level processes diverse data types (images, charts) and the upper level handles symbolic reasoning (equation solving). The MLLMs extract relevant information from each domain, and the PRM is trained under the learned domain weights. The BLO mechanism suggests a feedback loop or information transfer between the two levels, potentially allowing the system to adapt and improve its performance across different tasks. The "Quality imbalance" label suggests that the system is designed to handle variations in the quality or relevance of data from different domains. The activation/freezing of parameters in the PRM modules may indicate a strategy for transferring knowledge or preventing overfitting in specific tasks.
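
The feedback loop between the two levels can be illustrated with a toy alternating scheme. This sketch assumes a single scalar `theta` standing in for the PRM parameters, quadratic per-domain losses centered on hypothetical `domain_targets`, and a held-out domain (the role Domain k+1 plays in the diagram) whose loss is centered on a hypothetical `meta_target`. The weight gradient uses a one-step unrolled approximation, a common shortcut for bi-level problems; it is not claimed to be DreamPRM's exact update.

```python
from typing import List, Tuple


def quad_grad(theta: float, target: float) -> float:
    """Gradient of the toy domain loss (theta - target) ** 2."""
    return 2.0 * (theta - target)


def bilevel_step(
    theta: float,
    weights: List[float],
    domain_targets: List[float],
    meta_target: float,
    inner_lr: float = 0.1,
    outer_lr: float = 0.1,
) -> Tuple[float, List[float]]:
    # Lower level: theta is trainable ("activated"); the weights are fixed.
    # Take one gradient step on the domain-weighted training loss.
    grads = [quad_grad(theta, c) for c in domain_targets]
    theta_new = theta - inner_lr * sum(w * g for w, g in zip(weights, grads))

    # Upper level: theta_new is held fixed ("frozen"); only the weights move.
    # Chain rule through theta_new(w) = theta - inner_lr * sum_i w_i * g_i:
    #   d meta_loss / d w_i = meta_grad * (-inner_lr * g_i)
    meta_grad = quad_grad(theta_new, meta_target)
    hypergrads = [-inner_lr * meta_grad * g for g in grads]
    new_weights = [max(w - outer_lr * h, 0.0) for w, h in zip(weights, hypergrads)]

    # Renormalize so the weights stay non-negative and sum to 1.
    total = sum(new_weights)
    if total == 0.0:
        new_weights = [1.0 / len(weights)] * len(weights)
    else:
        new_weights = [w / total for w in new_weights]
    return theta_new, new_weights


# Usage: domain 1 agrees with the held-out domain, domain 2 does not.
theta, weights = 3.0, [0.5, 0.5]
for _ in range(200):
    theta, weights = bilevel_step(theta, weights, domain_targets=[1.0, 5.0], meta_target=1.0)
```

In this toy run the weight on domain 1, whose target matches the held-out domain, grows at the expense of domain 2, and `theta` settles near the held-out target. Within each call `theta` moves only in the lower-level step and the weights move only in the upper-level step, mirroring the activated/frozen split in the diagram.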

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Multi-Level Optimization Process

### Overview

The image depicts a diagram illustrating a multi-level optimization process, likely within a machine learning or AI context. It shows two levels of optimization: a lower-level optimization performed across multiple domains, and an upper-level optimization that adjusts domain weights. The diagram highlights the interaction between "MLLM" modules, "DreamPRM" components, and a "BLO" (Bi-Level Optimization) process. It also indicates which parameters are activated and which are frozen.

### Components/Axes

The diagram is structured into three main sections:

* **Lower-level Optimization:** This section shows optimization happening across multiple domains (Domain 1 to Domain k). Each domain has an input image and a question.

* **Quality Imbalance:** This section highlights the difference in quality between domains.

* **Upper-level Optimization:** This section shows the optimization of domain weights.

Key components include:

* **Domain 1…k:** Representing different data domains.

* **MLLM:** A module (likely Multi-modal Large Language Model) processing input images and questions.

* **DreamPRM:** A component receiving outputs from the lower-level optimization.

* **Domain weights:** Weights assigned to each domain.

* **BLO:** The bi-level optimization process linking the two levels.

* **Activated parameters:** Represented by pink color.

* **Frozen parameters:** Represented by blue color.

### Detailed Analysis

The diagram illustrates the flow of information and optimization signals.

**Lower-level Optimization:**

* **Domain 1:** Input is a grayscale image with a yellow square. The question is "What is the area of yellow region?". The output of the MLLM is passed through a series of circles (representing processing steps) to the DreamPRM. The connection is represented by a yellow arrow.

* **Domain k:** Input is a circular image with a pie chart. The question is "What is the largest pie area?". The output of the MLLM is passed through a series of circles to the DreamPRM. The connection is represented by an orange arrow.

* The diagram indicates a "Quality imbalance" between Domain 1 and Domain k.

**Upper-level Optimization:**

* **Domain k+1:** Input is an image with the equation "2x+6=13". The question is "What is the value of x?". The output of the MLLM is passed through a series of circles to the DreamPRM. The connection is represented by a series of green arrows.

* The DreamPRM receives input from the lower-level optimization and the upper-level optimization.

* The BLO connects the DreamPRM to the Domain weights.

* The Domain weights are then fed back into the lower-level optimization.

**Parameter Status:**

* The DreamPRM and PRM components have activated parameters (pink) and frozen parameters (blue).

* The activated parameters are located in the bottom-right corner of the DreamPRM and PRM components.

### Key Observations

* The diagram highlights a two-level optimization process, suggesting a hierarchical approach to learning or adaptation.

* The "Quality imbalance" suggests that different domains may have varying levels of difficulty or data quality.

* The BLO indicates a feedback loop, allowing the system to refine its domain weights based on performance.

* The distinction between activated and frozen parameters suggests a fine-tuning or transfer learning strategy.

### Interpretation

The diagram illustrates a system designed to optimize performance across multiple data domains. The lower-level optimization focuses on solving tasks within each domain, while the upper-level optimization adjusts the importance (weights) of each domain. The BLO provides a mechanism for continuous improvement by feeding back performance information. The quality imbalance suggests that the system is aware of the varying difficulty of different domains and may be attempting to compensate for this. The use of activated and frozen parameters suggests that the system is leveraging pre-trained knowledge (frozen parameters) while adapting to specific domains (activated parameters).

The diagram suggests a sophisticated approach to multi-domain learning, potentially addressing challenges such as domain adaptation and transfer learning. The system appears to be designed to learn from diverse data sources and optimize performance based on the specific characteristics of each domain. The overall architecture suggests a focus on robustness and adaptability. The diagram does not provide any numerical data or specific performance metrics, but it offers a clear conceptual overview of the optimization process.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Diagram: Bi-Level Optimization Framework for DreamPRM Training

### Overview

The image is a technical diagram illustrating a two-level (bi-level) optimization framework for training a model named "DreamPRM." The process involves training on multiple, diverse problem domains (e.g., geometry, data interpretation, algebra) to address "Quality imbalance." The framework separates optimization into a "Lower-level" and an "Upper-level," with a feedback loop managed by a component labeled "BLO."

### Components/Axes

The diagram is organized into three main horizontal sections and a right-side vertical component.

**1. Lower-level Optimization (Top Section):**

* **Domains:** Two example domains are shown.

  * **Domain 1 (Blue):** Contains a geometry problem image (a yellow region on a grid) and the question: "What is the area of yellow region?".

  * **Domain k (Orange):** Contains a pie chart image and the question: "What is the largest pie area?".

* **Process Flow:** Each domain's question is fed into an "MLLM" (Multimodal Large Language Model) icon. The MLLM output passes through a series of connected circular nodes (blue for Domain 1, orange for Domain k). The final nodes are dashed circles, suggesting intermediate or latent representations.

* **Output:** The processed outputs from both domains converge and point to the "DreamPRM" component on the right.
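
The chains of circular nodes can be read, as is common for process reward models, as intermediate reasoning steps that a PRM scores one at a time; the diagram itself does not say this, so treat it as an assumption. Under that reading, a minimal sketch of turning step scores into a solution score and picking the best of several candidate chains looks like this. `score_step` is a hypothetical stand-in for a trained PRM, and the toy scorer only recognizes the two correct steps for 2x+6=13.

```python
from typing import Callable, List, Sequence

# A step scorer sees the question and the reasoning prefix ending at the step.
StepScorer = Callable[[str, Sequence[str]], float]


def solution_score(question: str, steps: List[str], score_step: StepScorer,
                   reduce: str = "mean") -> float:
    """Score each step given its prefix, then aggregate with mean or min."""
    if not steps:
        raise ValueError("a solution needs at least one step")
    scores = [score_step(question, steps[: i + 1]) for i in range(len(steps))]
    if reduce == "mean":
        return sum(scores) / len(scores)
    if reduce == "min":
        return min(scores)
    raise ValueError(f"unknown reduce: {reduce}")


def best_of_n(question: str, candidates: List[List[str]], score_step: StepScorer,
              reduce: str = "mean") -> List[str]:
    """Return the candidate chain with the highest aggregated step score."""
    return max(candidates, key=lambda steps: solution_score(question, steps, score_step, reduce))


def toy_scorer(question: str, prefix: Sequence[str]) -> float:
    # Hypothetical scorer: rewards only the correct steps for 2x+6=13.
    return 1.0 if prefix[-1] in ("2x = 7", "x = 3.5") else 0.2


good = ["2x = 7", "x = 3.5"]
bad = ["2x = 19", "x = 9.5"]
chosen = best_of_n("What is the value of x?", [bad, good], toy_scorer)
```

Taking the minimum instead of the mean penalizes a chain for its single weakest step, which is stricter; which aggregation suits a given PRM is an empirical question.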

**2. Upper-level Optimization (Bottom Section):**

* **Domain k+1 (Teal):** Contains an algebra problem: "2x+6=13" and the question: "What is the value of x?".

* **Process Flow:** Similar to the lower level, the question goes through an "MLLM" and a series of teal circular nodes.

* **Feedback Loop:** The final node in this chain has multiple teal arrows pointing back to earlier nodes in the same chain, indicating an iterative or recursive optimization process within this domain.

**3. DreamPRM & BLO (Right Side):**

* **DreamPRM:** Depicted as a robot-head icon. It receives input from the Lower-level Optimization.

* **Domain weights:** Represented by a bar chart icon. Arrows show these weights are used by the PRM and are updated by the BLO.

* **PRM:** Another robot-head icon, connected to the Domain weights.

* **BLO (Bi-Level Optimization):** A central component with dashed purple arrows forming a loop between the "Domain weights" and the "PRM," indicating the upper-level optimization loop that adjusts weights based on performance.

**4. Legend (Bottom Center):**

* **Red flame icon:** "Activated parameters"

* **Blue snowflake icon:** "Frozen parameters"

* This legend is referenced in the PRM icons: the top PRM (connected to Lower-level) has a red flame (activated), while the bottom PRM (connected to BLO) has a blue snowflake (frozen).

### Detailed Analysis

* **Spatial Grounding:** The "Lower-level Optimization" label is centered at the top. "Domain 1" and "Domain k" are left-aligned in their respective rows. The "Quality imbalance" label is positioned between the two lower-level domains. "Upper-level Optimization" is centered above the third domain. The "DreamPRM" system is vertically aligned on the far right. The legend is centered at the very bottom.

* **Trend & Flow Verification:** The visual flow is strictly left-to-right for the initial processing within each domain. The lower-level outputs converge rightward into DreamPRM. The upper-level shows a left-to-right flow with a prominent backward (right-to-left) feedback loop. The BLO creates a vertical, cyclical flow between Domain weights and the PRM.

* **Component Isolation:**

  * **Header:** Contains the main title "Lower-level Optimization."

  * **Main Chart Area:** Contains the three domain rows, their internal MLLM/node chains, and the convergence to DreamPRM.

  * **Footer:** Contains the parameter legend.

* **Text Transcription:** All text is in English. Key phrases include: "Lower-level Optimization," "Upper-level Optimization," "Domain 1," "Domain k," "Domain k+1," "Quality imbalance," "What is the area of yellow region?," "What is the largest pie area?," "What is the value of x?," "2x+6=13," "MLLM," "DreamPRM," "Domain weights," "PRM," "BLO," "Activated parameters," "Frozen parameters."

### Key Observations

1. **Quality Imbalance:** The diagram explicitly labels the challenge of "Quality imbalance" across different problem domains (e.g., visual geometry vs. textual algebra).

2. **Two-Tiered Training:** The framework separates training into domain-specific, lower-level optimization and a global, upper-level optimization that manages domain weights.

3. **Parameter Management:** The legend and PRM icons indicate a strategy where parameters are selectively activated (fine-tuned) or frozen during different stages of the bi-level process.

4. **Iterative Refinement:** The upper-level domain (k+1) shows an internal feedback loop, suggesting iterative self-improvement or reinforcement within a single domain type.

### Interpretation

This diagram outlines a sophisticated machine learning training strategy designed to create a robust and generalizable "DreamPRM" model. The core problem it addresses is **domain imbalance**—where a model might perform well on some types of problems (e.g., visual puzzles) but poorly on others (e.g., symbolic math).

The **Lower-level Optimization** appears to be responsible for training the model on individual, diverse task domains in parallel. The outputs from these specialized trainings are then used to update the core DreamPRM model.

The **Upper-level Optimization**, governed by the BLO, acts as a meta-learner. It doesn't train on raw problems but instead optimizes the "Domain weights." This means it learns *how much importance* to assign to each domain's training signal when updating the final PRM. The feedback loop (dashed purple arrows) suggests it evaluates the PRM's performance and adjusts these weights to ensure balanced mastery across all domains, directly countering the "Quality imbalance."
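
The two levels described here can be written as a generic bi-level program. The notation is ours, not the diagram's: $\theta$ are the PRM parameters, $\alpha_i$ is the weight of domain $i$, $\mathcal{L}_i$ is the PRM training loss on domain $i$ for $i = 1$ to $k$, and $\mathcal{L}_{\text{meta}}$ is the loss on the held-out Domain k+1. This is the textbook form of such a problem, offered as a sketch rather than as the exact DreamPRM objective.

$$
\begin{aligned}
\min_{\alpha}\quad & \mathcal{L}_{\text{meta}}\bigl(\theta^{*}(\alpha)\bigr) && \text{upper level: domain weights updated, PRM frozen} \\
\text{s.t.}\quad & \theta^{*}(\alpha) = \arg\min_{\theta} \sum_{i=1}^{k} \alpha_i \, \mathcal{L}_i(\theta) && \text{lower level: PRM parameters activated}
\end{aligned}
$$

Because $\theta^{*}(\alpha)$ depends on the weights only through lower-level training, the upper-level gradient has to pass through that training, which is why such methods usually approximate it, for example by unrolling a small number of lower-level steps.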

The use of **activated vs. frozen parameters** implies an efficient training methodology, possibly akin to parameter-efficient fine-tuning (PEFT), where only specific parts of the model are updated during certain phases to preserve knowledge and reduce computational cost.
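
The activated/frozen split can be sketched without any framework: each parameter carries a trainability flag, and an update step skips frozen entries. The `Param` class, the helper names, and the parameter keys below are hypothetical; in PyTorch the same effect is usually obtained by calling `requires_grad_(False)` on the frozen tensors or modules.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Param:
    value: float
    trainable: bool = True  # True = "activated" (flame), False = "frozen" (snowflake)


def set_trainable(params: Dict[str, Param], names: Iterable[str], flag: bool) -> None:
    """Activate (flag=True) or freeze (flag=False) the named parameters."""
    for name in names:
        params[name].trainable = flag


def sgd_step(params: Dict[str, Param], grads: Dict[str, float], lr: float) -> None:
    """Plain gradient step that leaves frozen parameters untouched."""
    for name, p in params.items():
        if p.trainable and name in grads:
            p.value -= lr * grads[name]


# Usage: the lower-level phase trains the PRM and freezes the domain weight.
params = {"prm.head": Param(1.0), "domain_weight.1": Param(0.5)}
set_trainable(params, ["domain_weight.1"], False)
sgd_step(params, {"prm.head": 1.0, "domain_weight.1": 1.0}, lr=0.1)
# The upper-level phase swaps the roles.
set_trainable(params, ["prm.head"], False)
set_trainable(params, ["domain_weight.1"], True)
```

Storing the flag on each parameter makes every phase switch an explicit, inspectable step, much like the flame and snowflake markers in the diagram.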

In essence, the framework proposes a **hierarchical learning system**: the lower level learns *what* to solve in each domain, while the upper level learns *how to balance* that learning to produce a single, well-rounded model (DreamPRM) that performs reliably across a wide spectrum of tasks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Diagram: Multi-stage Optimization Pipeline with Domain-Specific Processing

### Overview

The diagram illustrates a hierarchical optimization framework combining domain-specific processing (Lower-level Optimization) and global optimization (Upper-level Optimization). It features color-coded components, directional flows, and parameter management systems (activated/frozen parameters). The architecture integrates multiple domains, mathematical reasoning, and optimization models (DreamPRM, BLO).

### Components/Axes

1. **Lower-level Optimization Section (Top)**

   - **Domains**:

     - Domain 1: Map visualization with yellow region area question

     - Domain k: Pie chart with "largest pie area" question

   - **MLLM Processing**:

     - Blue nodes (Domain 1) and orange nodes (Domain k) represent MLLM processing steps

     - Arrows show sequential processing flow

   - **Output**:

     - Connects to DreamPRM (purple) and BLO (yellow) optimization models

2. **Upper-level Optimization Section (Bottom)**

   - **Domain k+1**:

     - Mathematical equation "2x+6=13" with "What is the value of x?" question

   - **MLLM Processing**:

     - Green nodes represent MLLM processing for mathematical reasoning

     - Multiple arrows indicate parallel processing paths

   - **Output**:

     - Connects to DreamPRM and BLO through domain weights

3. **Parameter Management**

   - **Activated Parameters**: Red flame icon (bottom-left)

   - **Frozen Parameters**: Blue snowflake icon (bottom-left)

   - **Legend**:

     - Positioned at bottom-center

     - Color coding:

       - Blue = Domain 1 processing

       - Orange = Domain k processing

       - Green = Domain k+1 processing

       - Purple = DreamPRM

       - Yellow = BLO

       - Red = Activated parameters

       - Blue = Frozen parameters

### Detailed Analysis

1. **Domain Processing Flow**

   - Lower-level domains (1 to k) process visual/spatial tasks (maps, pie charts)

   - Upper-level domain (k+1) handles mathematical reasoning

   - All domains feed into MLLM processing nodes before optimization

2. **Color-Coded Connections**

   - Blue arrows: Domain 1 → MLLM → DreamPRM

   - Orange arrows: Domain k → MLLM → BLO

   - Green arrows: Domain k+1 → MLLM → DreamPRM/BLO

   - Purple arrows: Domain weights → DreamPRM

   - Yellow arrows: Domain weights → BLO

3. **Optimization Models**

   - **DreamPRM**:

     - Receives inputs from all domains

     - Connected to purple domain weights

   - **BLO**:

     - Receives inputs from Domain k and k+1

     - Connected to yellow domain weights

### Key Observations

1. **Quality Imbalance**:

   - Lower-level domains show visual tasks with varying complexity (map vs. pie chart)

   - Upper-level domain demonstrates mathematical reasoning capability

2. **Parameter Management**:

   - Red (activated) and blue (frozen) parameters suggest dynamic model adaptation

   - Frozen parameters likely maintain core functionality while activated parameters enable domain-specific adjustments

3. **Multi-path Optimization**:

   - Domain k+1 connects to both DreamPRM and BLO through multiple green arrows

   - Suggests parallel optimization pathways for different objective functions

### Interpretation

This diagram represents a sophisticated optimization system that:

1. Processes domain-specific tasks through specialized MLLM modules

2. Maintains parameter flexibility through activation/freezing mechanisms

3. Combines local optimizations (DreamPRM) with global balancing (BLO)

4. Handles both visual/spatial and mathematical reasoning tasks

The color-coded architecture suggests a modular design where:

- Different colors represent distinct processing streams

- Arrows indicate information flow and optimization dependencies

- Domain weights (purple/yellow) likely represent importance/confidence metrics
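
If the domain weights are read as learned importance scores, one common way to keep them positive and normalized while optimizing them freely is to learn one unconstrained logit per domain and pass the logits through a softmax. The diagram does not show how its weights are parameterized, so this is an assumed design, and `softmax_weights` is a hypothetical helper.

```python
import math
from typing import List


def softmax_weights(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Map unconstrained per-domain logits to positive weights summing to 1."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max so math.exp cannot overflow
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Usage: three hypothetical domains; larger logits get larger weights.
weights = softmax_weights([2.0, 0.0, -1.0])
```

A lower `temperature` sharpens the weights toward the top-scoring domain, and a higher one flattens them toward uniform; the upper level would then update the logits rather than the weights directly.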

The presence of both visual and mathematical domains implies the system can handle multimodal optimization challenges, with the Upper-level optimization serving as a meta-controller that coordinates domain-specific optimizations while maintaining overall system coherence.
