## Diagram: State Transition Diagram with Reward/Penalty Probabilities

### Overview

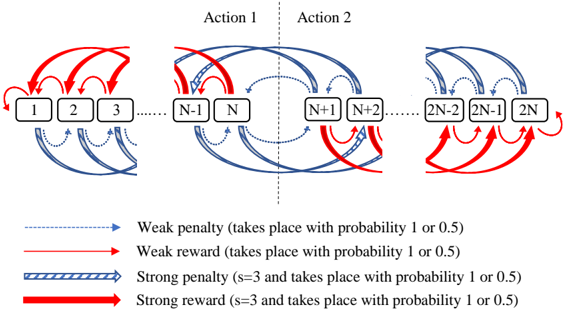

The image is a technical diagram illustrating a sequential state transition model with probabilistic rewards and penalties. It depicts a series of states (numbered boxes) connected by directed arrows of different types, representing transitions with associated outcomes. The diagram is divided into three distinct sections by vertical dashed lines, suggesting different phases or action boundaries.

### Components/Axes

**Main Components:**

1. **State Boxes:** A sequence of rectangular boxes labeled with numbers: `1`, `2`, `3`, `...`, `N-1`, `N`, `N+1`, `N+2`, `...`, `2N-2`, `2N-1`, `2N`.

2. **Transition Arrows:** Four distinct types of arrows connect the state boxes, each with a specific color, line style, and meaning as defined in the legend.

3. **Section Dividers:** Two vertical dashed gray lines separate the diagram into three regions.

4. **Action Labels:** The text "Action 1" is centered above the left and middle sections. The text "Action 2" is centered above the middle and right sections.

**Legend (Located at the bottom of the image):**

* **Weak penalty:** A thin, dotted blue arrow. Description: "(takes place with probability 1 or 0.5)".

* **Weak reward:** A thin, solid red arrow. Description: "(takes place with probability 1 or 0.5)".

* **Strong penalty:** A thick, blue arrow with diagonal hash marks. Description: "(s=3 and takes place with probability 1 or 0.5)".

* **Strong reward:** A thick, solid red arrow. Description: "(s=3 and takes place with probability 1 or 0.5)".
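The legend can be captured as a small lookup table, which makes the shared and distinguishing attributes of the four arrow types explicit. This is only an illustrative sketch: the `ArrowType` class, its field names, and the `LEGEND` keys are hypothetical and not part of the figure. The values are copied from the legend text above.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ArrowType:
    """One legend entry (hypothetical structure, values from the legend)."""
    name: str                      # legend label
    color: str                     # arrow color in the figure
    style: str                     # line style in the figure
    s: Optional[int]               # legend's s parameter; None if not given
    probabilities: Tuple[float, float] = (1.0, 0.5)  # "probability 1 or 0.5"


LEGEND = {
    "weak_penalty": ArrowType("Weak penalty", "blue", "thin dotted", None),
    "weak_reward": ArrowType("Weak reward", "red", "thin solid", None),
    "strong_penalty": ArrowType("Strong penalty", "blue", "thick hashed", 3),
    "strong_reward": ArrowType("Strong reward", "red", "thick solid", 3),
}

# Only the "strong" entries carry the s parameter.
strong = sorted(key for key, arrow in LEGEND.items() if arrow.s == 3)
print(strong)
```

Color encodes reward versus penalty (red versus blue), while line weight encodes weak versus strong.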

### Detailed Analysis

**Spatial Layout and Flow:**

* **Left Section (States 1 to N):** Contains states `1` through `N`. Transitions primarily flow forward (left to right), with some self-loops and backward arrows.

    * **Weak Penalty (dotted blue):** Arrows form self-loops (e.g., from `1` to `1`) and also connect forward to the next state (e.g., from `1` to `2`).

    * **Weak Reward (solid red):** Arrows point backward from a state to an earlier state (e.g., from `3` to `1`, from `N` to `N-1`).

    * **Strong Penalty (hashed blue):** Arrows connect forward, skipping states (e.g., from `1` to `3`, from `N-1` to `N+1`).

    * **Strong Reward (solid thick red):** Not prominently visible in this section.

* **Middle Section (States N to N+2):** This is the central hub where "Action 1" and "Action 2" overlap. It contains states `N`, `N+1`, and `N+2`.

    * Complex, crisscrossing transitions occur here. Notably, strong penalty (hashed blue) arrows connect `N` to `N+2` and `N+1` to `N+2`. Strong reward (solid thick red) arrows connect `N+1` back to `N` and `N+2` back to `N+1`.

* **Right Section (States 2N-2 to 2N):** Contains the final states `2N-2`, `2N-1`, and `2N`.

    * **Strong Reward (solid thick red):** Dominates this section, with arrows pointing backward from `2N` to `2N-2` and from `2N-1` to `2N-2`.

    * **Weak Penalty (dotted blue):** Arrows connect forward between the final states (e.g., from `2N-2` to `2N-1`).
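The example arrows named in this section can be written down as an edge list, which makes it easier to see which transitions leave each state. The sketch below is illustrative only: the function names and the value `N = 5` are assumptions, and the list covers only the arrows spelled out in the text, not every arrow in the figure.

```python
from collections import defaultdict


def described_edges(n: int):
    """Return (source, target, kind) for the arrows named in the text.

    Assumes n >= 3 so that all referenced states are distinct.
    """
    return [
        # Left section
        (1, 1, "weak_penalty"),
        (1, 2, "weak_penalty"),
        (3, 1, "weak_reward"),
        (n, n - 1, "weak_reward"),
        (1, 3, "strong_penalty"),
        (n - 1, n + 1, "strong_penalty"),
        # Middle section
        (n, n + 2, "strong_penalty"),
        (n + 1, n + 2, "strong_penalty"),
        (n + 1, n, "strong_reward"),
        (n + 2, n + 1, "strong_reward"),
        # Right section
        (2 * n, 2 * n - 2, "strong_reward"),
        (2 * n - 1, 2 * n - 2, "strong_reward"),
        (2 * n - 2, 2 * n - 1, "weak_penalty"),
    ]


def outgoing(edges):
    """Group edges by source state."""
    out = defaultdict(list)
    for src, dst, kind in edges:
        out[src].append((dst, kind))
    return dict(out)


N = 5  # hypothetical value chosen for illustration
edges = described_edges(N)
by_state = outgoing(edges)
for state in sorted(by_state):
    print(state, by_state[state])
```

With this representation, state `1` has three described outgoing arrows (a self-loop, a step to `2`, and a skip to `3`), matching the left-section bullets.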

**Trend Verification:**

* The overall flow is sequential from state `1` to state `2N`, but with significant backward and skipping transitions.

* **Reward arrows (red)** are predominantly **backward-pointing**, suggesting a mechanism to return to earlier states.

* **Penalty arrows (blue)** are predominantly **forward-pointing**, suggesting progression through the state sequence, sometimes with skips.

* The **strength** of an arrow (thick vs. thin) matches the legend's `s=3` label: only the thick "strong" arrows carry it. The legend does not define `s`, but it likely indicates a higher magnitude of reward or penalty.
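The direction claims can be checked against the example arrows named in the Detailed Analysis by recording each arrow's displacement (target index minus source index), which does not depend on `N`. This is a rough sketch: the `EXAMPLES` list and the `direction_counts` helper are hypothetical names, and only arrows spelled out in the text are included.

```python
# (kind, displacement) for each example arrow named in the description,
# where displacement = target index - source index.
EXAMPLES = [
    ("weak_penalty", 0),     # 1 -> 1
    ("weak_penalty", 1),     # 1 -> 2
    ("weak_penalty", 1),     # 2N-2 -> 2N-1
    ("weak_reward", -2),     # 3 -> 1
    ("weak_reward", -1),     # N -> N-1
    ("strong_penalty", 2),   # 1 -> 3
    ("strong_penalty", 2),   # N-1 -> N+1
    ("strong_penalty", 2),   # N -> N+2
    ("strong_penalty", 1),   # N+1 -> N+2
    ("strong_reward", -1),   # N+1 -> N
    ("strong_reward", -1),   # N+2 -> N+1
    ("strong_reward", -2),   # 2N -> 2N-2
    ("strong_reward", -1),   # 2N-1 -> 2N-2
]


def direction_counts(examples):
    """Count forward, backward, and self-loop arrows per family."""
    counts = {
        "reward": {"forward": 0, "backward": 0, "self": 0},
        "penalty": {"forward": 0, "backward": 0, "self": 0},
    }
    for kind, displacement in examples:
        family = kind.split("_")[1]  # "reward" or "penalty"
        if displacement > 0:
            counts[family]["forward"] += 1
        elif displacement < 0:
            counts[family]["backward"] += 1
        else:
            counts[family]["self"] += 1
    return counts


counts = direction_counts(EXAMPLES)
largest_jump = max(abs(d) for _, d in EXAMPLES)
print(counts, largest_jump)
```

On these examples every reward arrow points backward and every penalty arrow points forward or loops on itself. None of the listed displacements equals 3, so on this evidence `s` is not simply the jump length.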

### Key Observations

1. **Symmetry and Structure:** The diagram appears roughly mirrored around the central section. The left section (states `1` to `N`) combines forward-pointing penalties with backward-pointing weak rewards, while in the right section (states `2N-2` to `2N`) backward-pointing strong rewards dominate.

2. **Action Boundary:** The vertical dashed line between states `N` and `N+1` marks a critical transition point between "Action 1" and "Action 2".

3. **Probabilistic Nature:** All transitions are governed by probabilities of either 1 (certain) or 0.5 (50% chance), as stated in the legend for every arrow type.

4. **Parameter `s=3`:** This parameter is explicitly associated only with the "Strong" penalty and reward arrows, indicating these transitions have a specific, defined magnitude or cost.

### Interpretation

This diagram models a **Markov Decision Process (MDP) or a reinforcement learning environment** with a finite state space (2N states). The states are arranged in a linear sequence, but the agent's path is non-deterministic due to probabilistic transitions.

* **What it demonstrates:** The system defines how an agent moves between states based on actions. "Action 1" spans the lower-numbered states (`1` to `N+1`) and "Action 2" spans the higher-numbered states (`N` to `2N`), so the two labels overlap in the middle section. The rewards (red) and penalties (blue) provide feedback signals. The "strong" variants (`s=3`) likely represent more significant events.

* **Relationships:** The backward-pointing reward arrows create cycles that can keep an agent in earlier states, while forward-pointing penalties push it toward the last state (`2N`). The central section (`N`, `N+1`, `N+2`) is a complex decision point where both actions have influence, leading to a high density of possible transitions.

* **Notable Anomalies/Patterns:** The shift from a penalty-dominated forward flow in the first half to a reward-dominated backward flow in the final states is striking. This could model a scenario in which penalties drive early progress by pushing the agent forward, while in the final states strong rewards keep it close to the end of the sequence. The consistent use of probabilities (1 or 0.5) suggests a simplified model for analysis, where some transitions are guaranteed and others are coin flips. Overall, the structure is a formal representation of a sequential decision problem with stochastic outcomes.
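To make the interpretation concrete, the following is a minimal random-walk sketch over the `2N` states. It is not the model behind the figure. Every rule here is an assumption for illustration: penalties move forward and rewards move backward (the trend noted above), weak arrows move one state and strong arrows two (as in the `1` to `3` example), each transition takes place with a hypothetical probability `p_fire`, and the action boundary sits between `N` and `N+1` as in Key Observation 2. The figure does not say which arrows take probability 1 and which 0.5.

```python
import random


def action_for(state: int, n: int) -> int:
    """Assumed mapping: states 1..N select Action 1, N+1..2N select Action 2."""
    return 1 if state <= n else 2


def step(state, n, rng, p_penalty=0.5, p_strong=0.5, p_fire=0.5):
    """One hypothetical transition; all probabilities are assumed values."""
    is_penalty = rng.random() < p_penalty
    is_strong = rng.random() < p_strong
    if rng.random() >= p_fire:
        return state  # the transition did not take place
    size = 2 if is_strong else 1          # assumed jump lengths
    direction = 1 if is_penalty else -1   # penalty forward, reward backward
    # Clamp to the valid state range 1..2N.
    return min(max(state + direction * size, 1), 2 * n)


def simulate(n: int, steps: int, seed: int = 0):
    """Return the visited states, starting from state 1."""
    rng = random.Random(seed)
    state = 1
    path = [state]
    for _ in range(steps):
        state = step(state, n, rng)
        path.append(state)
    return path


path = simulate(n=5, steps=200)
actions = [action_for(s, 5) for s in path]
print(path[:10], actions[:10])
```

Changing `p_penalty` shifts the walk toward either end of the chain, which is one way to explore the forward and backward pull described in the bullets above.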