
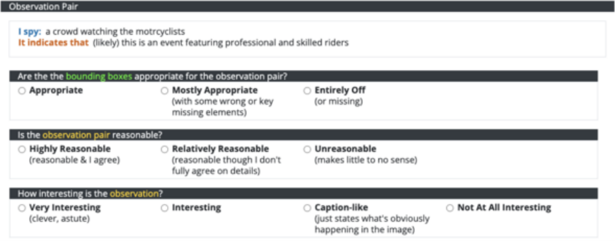

## Screenshot: Observation Pair Evaluation Form

### Overview

This is a screenshot of a form used to evaluate an "Observation Pair", likely in the context of image analysis or computer vision. The form presents an observation made by a system ("I spy...") and the inference drawn from it ("It indicates that..."), then asks a human evaluator to assess the quality of both.

### Components

The form is divided into three sections, each with a question and multiple-choice answers:

1. **Bounding Box Appropriateness:**
   * Question: "Are the bounding boxes appropriate for the observation pair?"
   * Options:
     * Appropriate
     * Mostly Appropriate (with some wrong or key missing elements)
     * Entirely Off (or missing)

2. **Observation Pair Reasonableness:**
   * Question: "Is the observation pair reasonable?"
   * Options:
     * Highly Reasonable (reasonable & I agree)
     * Relatively Reasonable (reasonable though I don't fully agree on details)
     * Unreasonable (makes little to no sense)

3. **Observation Interest:**
   * Question: "How interesting is the observation?"
   * Options:
     * Very Interesting (clever, astute)
     * Interesting
     * Caption-like (just states what's obviously happening in the image)
     * Not At All Interesting

The form also includes the following text:

* **"Observation Pair"** - Title at the top.

* **"I spy: a crowd watching the motorcyclists"** - The system's observation.

* **"It indicates that (likely) this is an event featuring professional and skilled riders"** - The system's inference.
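The form's structure can be sketched as a small data model. This is only an illustrative sketch: the names `Question`, `ObservationPair`, `FORM_QUESTIONS`, `validate_response`, and the question keys are hypothetical and do not come from the form or from whatever system produced it. The option labels are shortened to their leading phrase.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    key: str                  # hypothetical identifier, not shown on the form
    prompt: str               # question text as it appears on the form
    options: tuple[str, ...]  # option labels, without their parenthetical notes


@dataclass(frozen=True)
class ObservationPair:
    observation: str  # the "I spy: ..." text
    inference: str    # the "It indicates that ..." text


# The three questions and their options, transcribed from the form.
FORM_QUESTIONS = (
    Question(
        "bounding_boxes",
        "Are the bounding boxes appropriate for the observation pair?",
        ("Appropriate", "Mostly Appropriate", "Entirely Off"),
    ),
    Question(
        "reasonableness",
        "Is the observation pair reasonable?",
        ("Highly Reasonable", "Relatively Reasonable", "Unreasonable"),
    ),
    Question(
        "interest",
        "How interesting is the observation?",
        ("Very Interesting", "Interesting", "Caption-like", "Not At All Interesting"),
    ),
)

# The example pair shown in the screenshot.
EXAMPLE_PAIR = ObservationPair(
    observation="a crowd watching the motorcyclists",
    inference="(likely) this is an event featuring professional and skilled riders",
)


def validate_response(response: dict[str, str]) -> list[str]:
    """Return a list of problems with a response; an empty list means it is valid."""
    problems = []
    for question in FORM_QUESTIONS:
        answer = response.get(question.key)
        if answer is None:
            problems.append(f"missing answer for {question.key}")
        elif answer not in question.options:
            problems.append(f"invalid option for {question.key}: {answer!r}")
    return problems
```

A response is then a mapping from question key to the chosen option, for example `{"bounding_boxes": "Appropriate", "reasonableness": "Highly Reasonable", "interest": "Caption-like"}`.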

### Detailed Analysis or Content Details

The form presents a specific observation and inference pair:

* **Observation:** The system observed "a crowd watching the motorcyclists."

* **Inference:** The system inferred that "(likely) this is an event featuring professional and skilled riders."

The evaluator is asked to judge:

1. Whether the bounding boxes are appropriate for the observation pair.

2. Whether the observation pair (the observation together with its inference) is reasonable.

3. How interesting the observation is, from "Very Interesting (clever, astute)" down to "Not At All Interesting".

### Key Observations

The form is designed for subjective evaluation of AI-generated observations and inferences. Each question offers graded options rather than a yes/no choice, so the evaluator can express partial approval (for example, "Mostly Appropriate" or "Relatively Reasonable"). The word "likely" in the inference suggests the system is expressing a degree of uncertainty.

### Interpretation

This form appears to be part of a human evaluation workflow for a vision system that generates observations and inferences about images. Human feedback of this kind can be used to assess, and potentially improve, how well a model interprets images. The three questions target different aspects of the output: grounding (bounding box appropriateness), plausibility (reasonableness), and insightfulness (interest). The form's structure suggests a focus on moving beyond simple object recognition toward scene understanding and inference. In the example shown, the system infers from a crowd watching motorcyclists that the event likely features professional, skilled riders, so the pair tests whether the system can reason from visible evidence to a conclusion about the event and its participants.
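The screenshot does not show how responses are combined across evaluators. As one plausible approach, and purely as an assumption, feedback collected with a form like this could be summarized by a per-question majority vote. The function names `majority_label` and `summarize` and the question keys below are hypothetical.

```python
from collections import Counter


def majority_label(labels):
    """Return the most common label, or None if the input is empty or first place is tied."""
    counts = Counter(labels).most_common()
    if not counts:
        return None
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None  # no clear majority
    return counts[0][0]


def summarize(responses):
    """Map each question key to its majority label across all evaluator responses."""
    keys = sorted({key for response in responses for key in response})
    return {
        key: majority_label([r[key] for r in responses if key in r])
        for key in keys
    }
```

Returning `None` on a tie keeps disagreements visible instead of resolving them arbitrarily. A real pipeline might instead collect more ratings or report an agreement statistic.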