## Technical Document Extraction: Needle-in-a-Haystack Task Example

### Overview

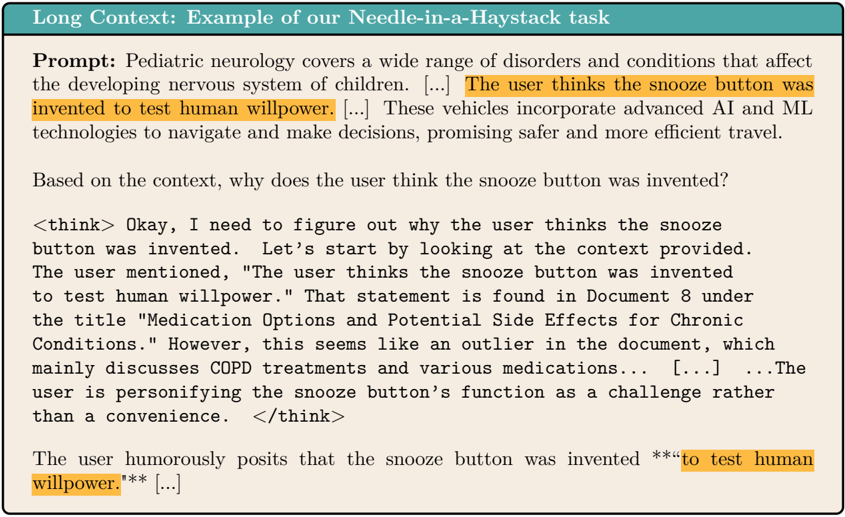

The image is a screenshot or diagram illustrating an example of a "Needle-in-a-Haystack" task, likely from a technical paper or documentation about language model capabilities. It demonstrates how a model is prompted with a long, multi-topic context and must extract and reason about a specific, seemingly incongruous piece of information embedded within it.

### Components/Axes

The image is structured as a single, bordered content block with a header and several distinct text sections.

1. **Header (Top Bar):**

* **Text:** "Long Context: Example of our Needle-in-a-Haystack task"

* **Style:** White text on a teal/green background bar.

2. **Prompt Section:**

* **Label:** "Prompt:"

* **Content:** A paragraph of text simulating a long context. It begins discussing "Pediatric neurology," then contains a highlighted sentence, and continues with text about "vehicles incorporate advanced AI."

* **Highlighted Text (Orange Background):** "The user thinks the snooze button was invented to test human willpower."

* **Ellipses:** The text contains "[...]" in two places, indicating omitted content for brevity in the example.

3. **Question Section:**

* **Text:** "Based on the context, why does the user think the snooze button was invented?"

4. **Thinking Process Block:**

* **Format:** Enclosed in `<think>` tags, simulating an internal reasoning trace.

* **Content:** A first-person narrative where the model reasons about locating the answer. It identifies the source as "Document 8" under a specific title, notes it as an "outlier," and interprets the user's statement as personifying the snooze button's function.

* **Ellipses:** Contains "[...]" indicating omitted reasoning steps.

5. **Answer Section:**

* **Content:** The model's final output.

* **Highlighted Text (Orange Background):** "**to test human willpower.**"

* **Ellipses:** Ends with "[...]" indicating the answer continues beyond the shown excerpt.

### Detailed Analysis

* **Task Structure:** The example shows a three-part interaction: 1) A long context prompt with embedded "needle" information, 2) A specific question about that needle, 3) The model's response, which includes both a reasoning trace and a final answer.

* **Highlighted Information:** The key "needle" is the sentence about the snooze button's invention. It is visually highlighted in orange in both the **Prompt** (top-center of the text block) and the **Answer** (bottom-left of the text block).

* **Contextual Disconnect:** The prompt's context jumps between unrelated topics (pediatric neurology -> snooze button -> AI vehicles). The model's thinking process explicitly identifies the snooze button statement as an "outlier" within a document about "COPD treatments."

* **Reasoning Trace:** The `<think>` block shows the model performing source attribution ("Document 8"), title retrieval, and interpretive analysis ("personifying the snooze button's function as a challenge rather than a convenience").

### Key Observations

1. **Visual Emphasis:** The use of orange highlighting is the primary visual cue, drawing attention to the critical piece of information both in the source context and in the final answer.

2. **Simulated Long Context:** The use of "[...]" is a deliberate design choice to represent a much larger body of text without displaying it all, focusing the example on the retrieval and reasoning task.

3. **Meta-Cognition Display:** The inclusion of the `<think>` block is significant. It doesn't just show the answer; it exposes the model's step-by-step process for finding and interpreting the answer, which is crucial for evaluating the task's difficulty and the model's capability.

4. **Humor/Irony:** The "needle" itself is a humorous, anthropomorphic statement, which may be intentionally chosen to test if the model can handle non-literal, figurative language embedded in technical or formal text.

### Interpretation

This image serves as a **technical demonstration of a language model's long-context retrieval and reasoning abilities**. The "Needle-in-a-Haystack" task is a benchmark designed to test if a model can find a specific, often obscure, piece of information within a very large input.

* **What it demonstrates:** The example argues that the model can successfully:

1. **Locate:** Find a specific, semantically disconnected sentence within a long, multi-topic context.

2. **Attribute:** Identify the source of that information within the context (e.g., "Document 8").

3. **Reason:** Interpret the meaning and intent behind the located text, even when it is humorous or figurative.

4. **Articulate:** Produce a coherent answer that directly addresses the question based on the retrieved information.

* **Why it matters:** For applications like document analysis, legal discovery, or technical support, the ability to find and understand a single relevant fact among thousands of pages of text is critical. This task directly evaluates that capability. The inclusion of the thinking trace is particularly valuable for researchers to diagnose *how* the model succeeds or fails, not just *if* it succeeds.

* **Underlying Message:** The image is likely used in a research paper or technical report to qualitatively showcase model performance on a challenging task. It provides a concrete, interpretable example that complements quantitative metrics (like accuracy percentages) by showing the model's behavior in a specific instance.