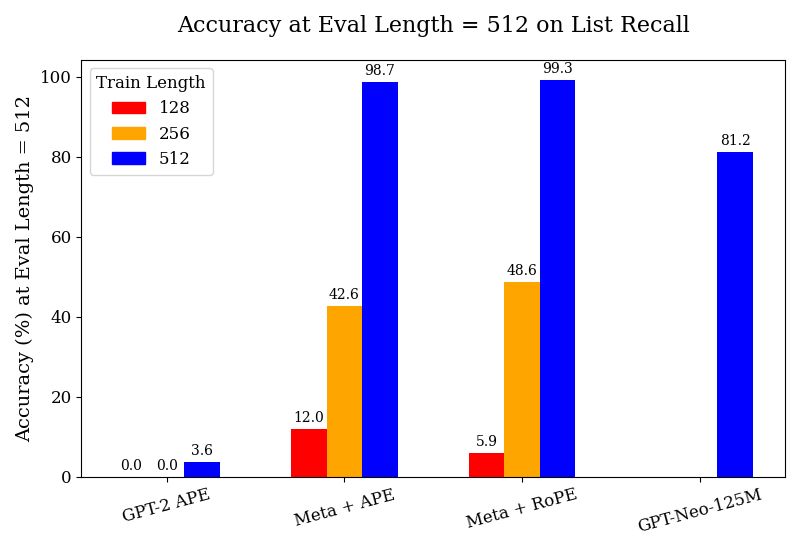

## Bar Chart: Accuracy at Eval Length = 512 on List Recall

### Overview

This is a grouped bar chart comparing the performance of four different models or methods on a "List Recall" task. The performance metric is accuracy percentage, measured at a fixed evaluation sequence length of 512 tokens. Each model is shown at up to three training sequence lengths (128, 256, and 512); "GPT-Neo-125M" has a bar only at 512.

### Components/Axes

* **Chart Title:** "Accuracy at Eval Length = 512 on List Recall"

* **Y-Axis:**

    * **Label:** "Accuracy (%) at Eval Length = 512"

    * **Scale:** Linear scale from 0 to 100, with major tick marks every 20 units (0, 20, 40, 60, 80, 100).

* **X-Axis:**

    * **Categories (Models/Methods):** Four distinct groups are labeled from left to right:

        1. "GPT-2 APE"

        2. "Meta + APE"

        3. "Meta + RoPE"

        4. "GPT-Neo-125M"

* **Legend:**

    * **Title:** "Train Length"

    * **Location:** Top-left corner of the plot area.

    * **Categories & Colors:**

        * **Red Square:** 128

        * **Orange Square:** 256

        * **Blue Square:** 512

* **Data Labels:** Numerical accuracy values are printed directly above each bar.

### Detailed Analysis

The chart presents accuracy data for each model across the three training lengths (128, 256, 512). The bars are grouped by model.

1. **GPT-2 APE:**

    * **Train Length 128 (Red):** Accuracy = 0.0%

    * **Train Length 256 (Orange):** Accuracy = 0.0%

    * **Train Length 512 (Blue):** Accuracy = 3.6%

    * **Trend:** Accuracy is 0.0% at training lengths 128 and 256 and rises only to 3.6% at 512.

2. **Meta + APE:**

    * **Train Length 128 (Red):** Accuracy = 12.0%

    * **Train Length 256 (Orange):** Accuracy = 42.6%

    * **Train Length 512 (Blue):** Accuracy = 98.7%

    * **Trend:** Shows a strong, consistent upward trend. Accuracy increases dramatically with each increase in training sequence length.

3. **Meta + RoPE:**

    * **Train Length 128 (Red):** Accuracy = 5.9%

    * **Train Length 256 (Orange):** Accuracy = 48.6%

    * **Train Length 512 (Blue):** Accuracy = 99.3%

    * **Trend:** The same strong upward trend as "Meta + APE". It starts lower than "Meta + APE" at train length 128 (5.9% vs. 12.0%) but surpasses it at 256 (48.6% vs. 42.6%) and 512 (99.3% vs. 98.7%).

4. **GPT-Neo-125M:**

    * **Train Length 128 (Red):** No bar present (implying 0.0% or not measured).

    * **Train Length 256 (Orange):** No bar present (implying 0.0% or not measured).

    * **Train Length 512 (Blue):** Accuracy = 81.2%

    * **Trend:** Only the longest training length is shown, at 81.2% accuracy, so no trend can be read off.
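The per-bar values listed above can also be collected into a single table. The following is a minimal sketch, assuming the transcribed bar labels are exact; the names `ACCURACY`, `TRAIN_LENGTHS`, and `to_markdown_table` are illustrative and do not come from the chart or its source. Missing bars are stored as `None` rather than 0.0, since the chart does not say which applies.

```python
# Accuracy (%) at eval length 512, transcribed from the bar labels.
# None marks a bar that is absent from the chart (not necessarily 0.0%).
ACCURACY = {
    "GPT-2 APE":    {128: 0.0,  256: 0.0,  512: 3.6},
    "Meta + APE":   {128: 12.0, 256: 42.6, 512: 98.7},
    "Meta + RoPE":  {128: 5.9,  256: 48.6, 512: 99.3},
    "GPT-Neo-125M": {128: None, 256: None, 512: 81.2},
}
TRAIN_LENGTHS = (128, 256, 512)


def to_markdown_table(data, lengths=TRAIN_LENGTHS):
    """Render one row per model and one column per training length."""
    header = "| Model | " + " | ".join(f"Train {n}" for n in lengths) + " |"
    rule = "| --- | " + " | ".join("---:" for _ in lengths) + " |"
    rows = []
    for model, by_len in data.items():
        # Absent bars are shown as "n/a" instead of being coerced to zero.
        cells = ["n/a" if by_len[n] is None else f"{by_len[n]:.1f}" for n in lengths]
        rows.append(f"| {model} | " + " | ".join(cells) + " |")
    return "\n".join([header, rule] + rows)


print(to_markdown_table(ACCURACY))
```

Keeping the absent GPT-Neo-125M bars as `None` avoids silently treating "not reported" as a measured 0.0%.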

### Key Observations

* **Dominant Trend:** For the two Meta variants ("Meta + APE" and "Meta + RoPE"), accuracy improves substantially as the training sequence length increases from 128 to 512 tokens. "GPT-2 APE" also has bars at all three training lengths but barely changes (0.0%, 0.0%, 3.6%).

* **Performance Ceiling:** Both "Meta" variants achieve near-perfect accuracy (~99%) when trained on sequences of length 512.

* **Model Comparison:** At the longest training length (512), the performance hierarchy is: Meta + RoPE (99.3%) > Meta + APE (98.7%) > GPT-Neo-125M (81.2%) > GPT-2 APE (3.6%).

* **Baseline Performance:** "GPT-2 APE" performs very poorly on this task, achieving only 3.6% accuracy even at the longest training length.

* **Missing Data:** "GPT-Neo-125M" lacks reported accuracy for training lengths of 128 and 256.
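The ranking and the size of the training-length effect can be checked with a few lines of arithmetic. This is a rough sketch over the values read from the bar labels; the helper names `rank_at` and `gains` are hypothetical, and the absent GPT-Neo-125M bars are simply left out rather than assumed to be zero.

```python
# Accuracy (%) at eval length 512, keyed by model and training length.
# Bars that are absent from the chart are omitted, not set to 0.0.
ACCURACY = {
    "GPT-2 APE":    {128: 0.0,  256: 0.0,  512: 3.6},
    "Meta + APE":   {128: 12.0, 256: 42.6, 512: 98.7},
    "Meta + RoPE":  {128: 5.9,  256: 48.6, 512: 99.3},
    "GPT-Neo-125M": {512: 81.2},
}


def rank_at(data, train_length):
    """Return (model, accuracy) pairs reported at train_length, best first."""
    scored = [(m, v[train_length]) for m, v in data.items() if train_length in v]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


def gains(by_len):
    """Percentage-point change between consecutive reported training lengths."""
    points = sorted(by_len.items())
    return [(n0, n1, round(a1 - a0, 1)) for (n0, a0), (n1, a1) in zip(points, points[1:])]


for model, acc in rank_at(ACCURACY, 512):
    print(f"{model}: {acc:.1f}%")

for model, by_len in ACCURACY.items():
    print(model, gains(by_len))
```

For the Meta variants, the step from 256 to 512 adds more percentage points than the step from 128 to 256, while "GPT-Neo-125M" yields an empty list because only one training length is reported.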

### Interpretation

The data suggest that for the "List Recall" task at an evaluation length of 512 tokens, **training sequence length is a critical factor for model performance**, at least for the Meta variants. Trained at 512, they reach about 99% accuracy, compared with 42.6% to 48.6% when trained at 256 and 5.9% to 12.0% when trained at 128. "GPT-2 APE" is the exception: it stays below 4% at every training length.

The "Meta" architecture (the chart does not define the term; it likely refers to models using a specific meta-learning or memory-augmented approach) combined with either APE (Absolute Positional Encoding) or RoPE (Rotary Position Embedding) is highly effective for this task, reaching near-perfect accuracy when trained at the evaluation length. RoPE has a slight edge over APE at the longest training length (99.3% vs. 98.7%), but the 0.6-point gap is small and APE leads at train length 128 (12.0% vs. 5.9%), so the chart alone does not establish a consistent advantage for either positional scheme.

The poor performance of "GPT-2 APE" indicates that this baseline struggles with the recall task regardless of training length, peaking at 3.6%. "GPT-Neo-125M" shows respectable performance (81.2%) but does not match the Meta variants, suggesting its architecture or training is less suited to this particular task. The absence of data for GPT-Neo at shorter training lengths prevents analysis of its scaling trend.

**In summary, the chart suggests that high accuracy on the List Recall task at length 512 depends on both the model (the Meta variants perform best) and, for those variants, training on sequences that match the evaluation length.**