## Diagram: Neuromorphic Computing System Architecture

### Overview

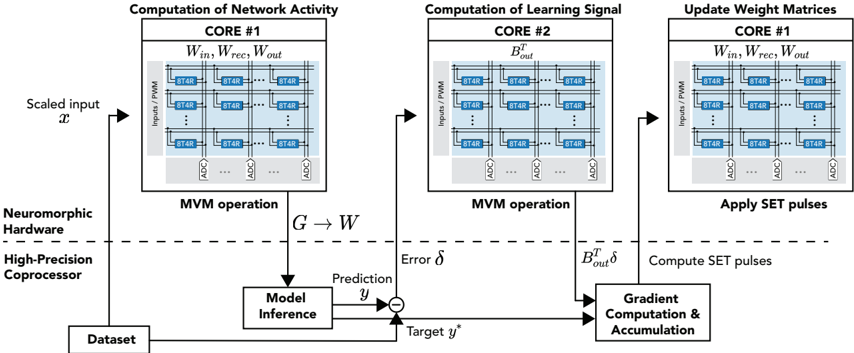

The diagram illustrates a neuromorphic computing system for model inference and learning. It depicts three processing stages mapped onto two computational cores (CORE #1 appears twice, CORE #2 once): matrix-vector multiplication (MVM) for network activity, error calculation and gradient computation, and weight matrix updates. The system pairs neuromorphic hardware with a high-precision coprocessor to process scaled inputs, compute predictions, and refine weight matrices via backpropagation-like mechanisms.

### Components/Axes

1. **CORE #1 (Computation of Network Activity)**

- **Inputs**: `Win`, `Wrec`, `Wout` (weight matrices).

- **Processing Units**: Grid of `8T4R` blocks (likely memristive devices) connected via PMM (Power Management Module) and ADC (Analog-to-Digital Converter).

- **Output**: `G → W` (as labeled in the diagram; likely a mapping from device conductance `G` to weight `W`).

- **Connections**: Links to neuromorphic hardware, high-precision coprocessor, and dataset.

2. **CORE #2 (Computation of Learning Signal)**

- **Inputs**: `B_out^T` (transposed output weight matrix).

- **Processing Units**: Similar `8T4R` blocks and ADC.

- **Output**: Error signal `δ` (difference between target `y*` and prediction `y`).

- **Connections**: Feeds into gradient computation and weight update.

3. **CORE #1 (Update Weight Matrices)**

- **Inputs**: Same `Win`, `Wrec`, `Wout` as in the first CORE #1.

- **Processing**: Application of `SET` pulses to update weight matrices.

- **Connections**: Receives gradient computation results from CORE #2.

4. **High-Precision Coprocessor**

- Handles dataset input and model inference.

- Connected to all cores via feedback loops.

5. **Key Signals**

- `δ` (error), `B_out^T δ` (learning signal: the error projected back through `B_out^T`, used for gradient computation), `y*` (target output).
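The two key signals can be sketched in a few lines of plain Python. This is a minimal illustration, not the system's implementation: the function names are hypothetical, and the shape of `B_out` (one row per output, one column per hidden unit) is an assumption the diagram does not confirm.

```python
def error_signal(y_target, y_pred):
    """Element-wise error: delta = y* - y."""
    if len(y_target) != len(y_pred):
        raise ValueError("target and prediction must have the same length")
    return [t - p for t, p in zip(y_target, y_pred)]


def learning_signal(b_out, delta):
    """Compute B_out^T @ delta.

    Assumption: b_out is a list of rows with shape (n_out, n_hidden),
    so the transpose product sums each column weighted by delta.
    """
    n_out, n_hidden = len(b_out), len(b_out[0])
    if len(delta) != n_out:
        raise ValueError("delta must have one entry per row of b_out")
    return [sum(b_out[k][j] * delta[k] for k in range(n_out))
            for j in range(n_hidden)]


y_target = [1.0, 0.0]
y_pred = [0.75, 0.25]
b_out = [[0.5, -1.0, 2.0],
         [1.0, 0.5, 0.0]]

delta = error_signal(y_target, y_pred)      # [0.25, -0.25]
signal = learning_signal(b_out, delta)      # [-0.125, -0.375, 0.5]
```

The result has one entry per hidden unit, which is the form a per-neuron learning signal would need before any weight update is computed.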

### Detailed Analysis

- **CORE #1 (Network Activity)**:

- Scaled input `x` is processed through `8T4R` blocks (memristive devices) to compute network activity.

- Output `G → W` likely denotes the conversion of device conductances `G` into weights `W` for further processing.

- **CORE #2 (Learning Signal)**:

- Computes error `δ = y* - y` using the target `y*` and prediction `y`.

- Multiplies the error by the transposed output weight matrix `B_out^T` to obtain the learning signal `B_out^T δ` used for gradient computation.

- **Weight Update (CORE #1)**:

- Applies `SET` pulses to adjust weight matrices (`Win`, `Wrec`, `Wout`) based on gradient signals.

- **Hardware Integration**:

- Neuromorphic hardware (e.g., `8T4R` blocks) handles low-precision, energy-efficient computations.

- High-precision coprocessor manages dataset and model inference, ensuring accuracy.
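To make the low-precision versus high-precision split concrete, the sketch below compares an exact matrix-vector product with one whose outputs pass through a simple ADC model. The ADC resolution, full-scale range, and function names are all assumptions chosen for illustration; the diagram gives no numerical values.

```python
def mvm(weights, x):
    """Exact matrix-vector product, as a high-precision reference."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def quantize(value, full_scale, bits):
    """Toy ADC model (assumed): clip to +/- full_scale, then round
    to the nearest of 2**(bits - 1) - 1 steps on each side of zero."""
    levels = 2 ** (bits - 1) - 1
    step = full_scale / levels
    clipped = max(-full_scale, min(full_scale, value))
    return round(clipped / step) * step


def analog_mvm(weights, x, full_scale=3.5, bits=4):
    """MVM whose outputs are read out through the toy ADC."""
    return [quantize(v, full_scale, bits) for v in mvm(weights, x)]


weights = [[0.5, 0.25],
           [2.0, 2.0],
           [4.0, 4.0]]
x = [1.0, 0.5]

exact = mvm(weights, x)           # [0.625, 3.0, 6.0]
analog = analog_mvm(weights, x)   # [0.5, 3.0, 3.5]
```

The first output shows rounding error and the third shows clipping at full scale, two of the effects a higher-precision unit would not suffer from.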

### Key Observations

1. **Modular Design**: The system splits three stages across two cores: CORE #1 computes network activity and later applies the weight updates, while CORE #2 computes the learning signal.

2. **Feedback Loops**: The error `δ` and the learning signal `B_out^T δ` propagate between cores, mimicking backpropagation in neural networks.

3. **Hardware-Software Synergy**: Combines neuromorphic efficiency (via `8T4R` blocks) with high-precision coprocessor accuracy.

4. **SET Pulses**: Likely represent memristor resistance adjustments, critical for weight updates in neuromorphic systems.
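One way SET pulses could implement signed weight updates is sketched below. It rests on assumptions the diagram does not state: each weight is stored as a differential pair of conductances (`g_plus`, `g_minus`), each SET pulse raises a conductance by a fixed step up to a maximum, and the weight is the scaled difference of the pair. All names and constants are hypothetical.

```python
G_MAX = 1.0      # assumed maximum conductance (arbitrary units)
G_STEP = 0.125   # assumed conductance increase per SET pulse


def apply_set_pulses(g, n_pulses):
    """Raise a conductance by n_pulses fixed steps, saturating at G_MAX."""
    return min(G_MAX, g + n_pulses * G_STEP)


def conductance_to_weight(g_plus, g_minus, scale=1.0):
    """Assumed G -> W mapping: weight is the scaled conductance difference."""
    return scale * (g_plus - g_minus)


def update_weight(g_plus, g_minus, delta_w, scale=1.0):
    """Approximate a weight change using SET pulses only.

    Positive changes pulse g_plus; negative changes pulse g_minus,
    because a SET pulse can only increase a conductance.
    """
    n_pulses = round(abs(delta_w) / (scale * G_STEP))
    if delta_w >= 0:
        g_plus = apply_set_pulses(g_plus, n_pulses)
    else:
        g_minus = apply_set_pulses(g_minus, n_pulses)
    return g_plus, g_minus


pair = (0.5, 0.25)
w0 = conductance_to_weight(*pair)        # 0.25

pair = update_weight(*pair, delta_w=-0.25)
w1 = conductance_to_weight(*pair)        # 0.0

pair = update_weight(*pair, delta_w=1.0)
w2 = conductance_to_weight(*pair)        # 0.5, g_plus saturated at G_MAX
```

The last step requests a change of 1.0 but achieves only 0.5 because `g_plus` saturates, which is the kind of device limit a real update scheme would have to manage.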

### Interpretation

This architecture demonstrates a neuromorphic implementation of a learning system, where:

- **CORE #1** focuses on forward propagation (network activity).

- **CORE #2** handles backward propagation (error and gradient computation).

- **CORE #1 (Update)** applies hardware-specific updates (SET pulses) to refine weights.

The use of `8T4R` blocks suggests a focus on energy-efficient, parallelizable computation, while the high-precision coprocessor presumably handles the steps that need greater numerical accuracy. The system’s design is consistent with spiking neural network or memristor-based machine learning approaches that emphasize low-power, adaptive learning.

**Note**: No numerical data or explicit trends are present; the diagram emphasizes structural relationships and computational flow.