
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


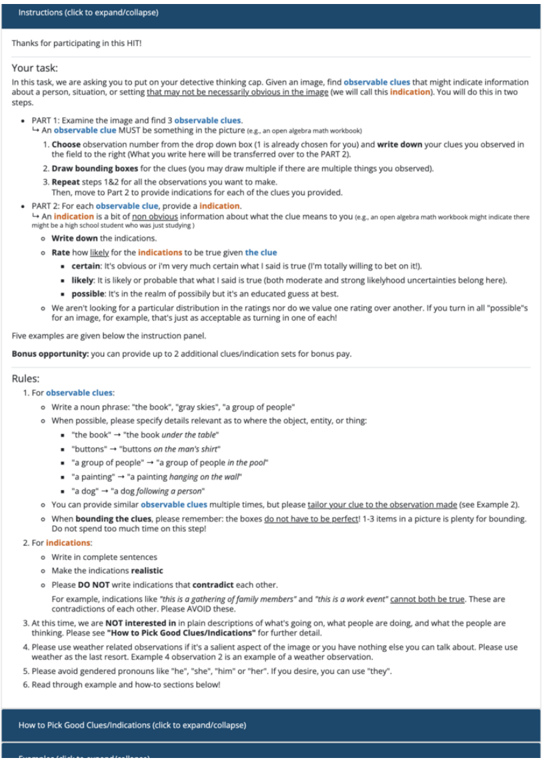

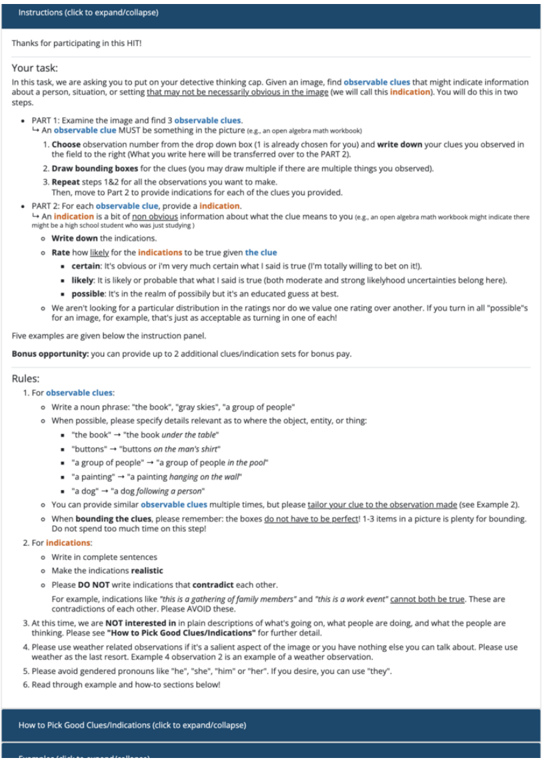

## Instructions: Detective Task

### Overview

The image presents instructions for a task where the user must identify observable clues in an image and provide indications based on those clues. The instructions are divided into two parts: finding observable clues and providing indications for each clue. The document also includes rules and guidelines for completing the task.

### Components/Axes

* **Title:** Instructions (click to expand/collapse)

* **Introduction:** Thanks for participating in this HIT!

* **Task Description:**

* Find observable clues that might indicate information about a person, situation, or setting.

* The information the clues point to may not be obvious in the image.

* **Part 1: Examine the image and find 3 observable clues.**

* An observable clue MUST be something in the picture (e.g., an open algebra math workbook).

* Steps:

1. Choose the observation number from the drop-down box (1 is already chosen for you) and write down the clues you observed in the field to the right (what you write here will be transferred over to Part 2).

2. Draw bounding boxes for the clues (you may draw multiple if there are multiple things you observed).

3. Repeat steps 1&2 for all the observations you want to make.

Then, move to Part 2 to provide indications for each of the clues you provided.

* **Part 2: For each observable clue, provide an indication.**

* An indication is a bit of non-obvious information about what the clue means to you (e.g., an open algebra math workbook might indicate that a high school student was just studying).

* Write down the indications.

* Rate how likely the indications are to be true given the clue:

* **certain:** It's obvious or I'm very certain that what I said is true (I'm totally willing to bet on it!).

* **likely:** It is likely or probable that what I said is true (both moderate and strong likelihood uncertainties belong here).

* **possible:** It's in the realm of possibility, but it's an educated guess at best.

* We aren't looking for a particular distribution in the ratings nor do we value one rating over another. If you turn in all "possible"s for an image, for example, that's just as acceptable as turning in one of each!

* **Bonus opportunity:** you can provide up to 2 additional clues/indication sets for bonus pay.

* **Rules:**

1. **For observable clues:**

* Write a noun phrase: "the book", "gray skies", "a group of people"

* When possible, please specify where the object, entity, or thing is:

* "the book" → "the book under the table"

* "buttons" → "buttons on the man's shirt"

* "a group of people" → "a group of people in the pool"

* "a painting" → "a painting hanging on the wall"

* "a dog" → "a dog following a person"

* You can provide similar observable clues multiple times, but please tailor your clue to the observation made (see Example 2).

* When bounding the clues, please remember: the boxes do not have to be perfect! 1-3 items in a picture is plenty for bounding. Do not spend too much time on this step!

2. **For indications:**

* Write in complete sentences

* Make the indications realistic

* Please DO NOT write indications that contradict each other.

* For example, indications like "this is a gathering of family members" and "this is a work event" cannot both be true. These are contradictions of each other. Please AVOID these.

3. At this time, we are NOT interested in plain descriptions of what's going on, what people are doing, and what the people are thinking. Please see "How to Pick Good Clues/Indications" for further detail.

4. Please use weather-related observations only if weather is a salient aspect of the image or you have nothing else to talk about; weather is the last resort. Example 4 observation 2 is an example of a weather observation.

5. Please avoid gendered pronouns like "he", "she", "him" or "her". If you wish, you can use "they".

6. Read through example and how-to sections below!

* **Footer:** How to Pick Good Clues/Indications (click to expand/collapse)

### Detailed Analysis / Content Details

The instructions outline a two-part task that involves identifying observable clues in an image and providing indications based on those clues. The instructions emphasize the importance of providing non-obvious information and avoiding contradictions in the indications. The document also provides rules and guidelines for completing the task, including examples of how to write observable clues and indications.
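The two-part structure described above can be modeled as a small record type. The following is a minimal Python sketch, not anything the HIT itself specifies: the class and field names and the pixel-based `BoundingBox` are hypothetical, and only the three rating labels and the clue/box/indication split come from the instructions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Rating(Enum):
    """The three likelihood labels defined in Part 2 of the instructions."""
    CERTAIN = "certain"
    LIKELY = "likely"
    POSSIBLE = "possible"


@dataclass
class BoundingBox:
    """Hypothetical pixel box: top-left corner plus width and height."""
    x: int
    y: int
    width: int
    height: int


@dataclass
class Observation:
    """One clue/indication set. Part 1 fills clue and boxes; Part 2 fills the rest."""
    number: int                       # observation number picked from the drop-down
    clue: str                         # noun phrase, e.g. "the book under the table"
    boxes: list[BoundingBox] = field(default_factory=list)  # one or more boxes per clue
    indication: Optional[str] = None  # complete sentence written in Part 2
    rating: Optional[Rating] = None   # certain / likely / possible

    def is_complete(self) -> bool:
        """True once the clue, at least one box, the indication, and a rating exist."""
        return (
            bool(self.clue)
            and len(self.boxes) > 0
            and bool(self.indication)
            and self.rating is not None
        )


# Usage: Part 1 records the clue and its box, Part 2 adds the inference.
obs = Observation(number=1, clue="an open algebra math workbook",
                  boxes=[BoundingBox(x=40, y=60, width=120, height=90)])
print(obs.is_complete())  # False: Part 2 has not been filled in yet
obs.indication = "A high school student might have just been studying."
obs.rating = Rating.LIKELY
print(obs.is_complete())  # True
```

Keeping `indication` and `rating` optional mirrors the order of the task: they are filled in only after Part 1 is done.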

### Key Observations

* The task requires the user to think critically and creatively to identify clues and provide indications.

* The instructions emphasize the importance of providing non-obvious information and avoiding contradictions.

* The document provides clear rules and guidelines for completing the task.

### Interpretation

The instructions guide users through analyzing an image and drawing inferences from observable clues, which requires critical and creative thinking. The emphasis on non-obvious information and on avoiding contradictions suggests the task targets logically consistent, reasonable inferences rather than literal descriptions. The rules and guidelines help all users approach the task in a consistent manner.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Document: Instructions for a HIT (Human Intelligence Task)

### Overview

The image presents a document outlining instructions for a crowdsourcing task, specifically a Human Intelligence Task (HIT) on a platform like Amazon Mechanical Turk. The task involves identifying observable clues within an image and making indications about what those clues might suggest. The document details the process in two parts, provides rules for identifying clues, and includes a rating scale for the certainty of indications.

### Components/Axes

The document is structured with clear headings and bullet points. Key components include:

* **Title:** "Instructions (click to expand/collapse)"

* **Introduction:** A thank you message and a brief description of the task.

* **Part 1:** Instructions for examining the image and identifying observable clues.

* **Part 2:** Instructions for providing indications based on the clues.

* **Rating Scale:** A scale for assessing the certainty of indications (certain, likely, possible).

* **Rules:** Guidelines for identifying and describing observable clues.

* **Bonus Opportunity:** Information about earning bonus pay for additional clue/indication sets.

* **Footer:** Copyright information.

### Detailed Analysis / Content Details

Here's a transcription of the document's content, broken down by section:

**Introduction:**

"Thanks for participating in this HIT!"

**Part 1: Examine the image and find 3 observable clues.**

"An observable clue MUST be something in the picture (e.g., an open algebra math workbook)"

1. Choose the observation number from the drop-down box (1 is already chosen for you) and write down the clues you observed in the field to the right (what you write here will be transferred over to PART 2).

2. Draw bounding boxes for the clues (you may draw multiple if there are multiple things you observed).

3. Repeat steps 1 & 2 for all the observations you want to make.

Then, move to Part 2 to provide indications for each of the clues you provided.

**Part 2: For each observable clue, provide an indication.**

"An indication is a bit of non-obvious information about what the clue means to you (e.g., an open algebra math workbook might indicate there might be a high school student who was just studying)."

* Write down the indications.

* Rate how likely the indications are to be true given the clue.

* **certain:** it's obvious or I'm very much certain what I said is true (I'm totally willing to bet on it).

* **likely:** it is likely or probable that what I said is true (both moderate and strong likelihood uncertainties belong here).

* **possible:** it's in the realm of possibility, but it's an educated guess at best.

* We aren't looking for a particular distribution in the ratings nor do we value one rating over another. If you turn in all "possible"s for an image, for example, that's just as acceptable as turning in one of each!

**Bonus opportunity:**

"You can provide up to 2 additional clues/indication sets for bonus pay."

**Rules:**

1. For observable clues:

* Write a noun phrase: "the book", "gray skies", "a group of people"

* When possible, please specify where the object, entity, or thing is:

* "the book" -> "the book under the table"

* "buttons" -> "buttons on the man's shirt"

* "a group of people" -> "a group of people in the pool"

* "a painting" -> "a painting hanging on the wall"

* "a dog" -> "a dog following a person"

* You can provide the same countable object multiple times, but please tailor your clue to the instance you saw (for example, if you saw two dogs, you can say "a dog" or "dogs").

2. For indications:

* Write a complete thought/sentence.

* Do not simply restate the clue.

* Do not write too much detail.

**Example:**

* **Observable clue:** a wedding cake

* **Indication:** someone is getting married.

* **Rating:** certain

**Additional Notes:**

"If you have any questions, please contact us through the Mechanical Turk forums."

**Footer:**

"© 2014 Mechanical Turk, Inc. or its affiliates. All Rights Reserved."

### Key Observations

* The document is highly structured and provides clear, step-by-step instructions.

* The emphasis is on identifying *non-obvious* information (indications) based on observable clues.

* The rating scale allows for nuanced assessment of the certainty of indications.

* The rules are designed to ensure the quality and consistency of the responses.

* The document is geared towards a crowdsourcing platform, likely Amazon Mechanical Turk.

### Interpretation

This document outlines a task designed to leverage human pattern recognition and inference skills. The core idea is to move beyond simply identifying objects in an image (observable clues) to interpreting their potential meaning (indications). The rating scale is crucial because it acknowledges that interpretations are rarely certain and allows workers to express their confidence level. The rules are in place to prevent trivial responses (e.g., simply restating the clue) and to encourage detailed, yet concise, indications. The bonus opportunity incentivizes workers to provide additional insights.

The task is likely used for data annotation or to gather subjective assessments of images for machine learning purposes. For example, the data collected could be used to train a computer vision system to understand the context of images or to predict human reactions to visual stimuli. The document demonstrates a thoughtful approach to crowdsourcing, recognizing the importance of clear instructions, quality control, and worker motivation.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Screenshot: Task Instructions for Image Analysis

### Overview

This image is a screenshot of a webpage containing detailed instructions for a crowdsourced task (likely a Human Intelligence Task, or HIT). The task requires participants to analyze an image, identify observable clues, and then make inferences (indications) based on those clues. The instructions are structured, with clear rules, examples, and a bonus opportunity.

### Components/Axes

The screenshot is a vertical, text-heavy document with a clear hierarchical structure. Key UI and structural elements include:

* **Header Bar:** A dark blue bar at the top with the text "Instructions (click to expand/collapse)".

* **Main Content Area:** A white background containing all instructional text.

* **Text Formatting:** Uses bold text, bullet points, numbered lists, and underlined text for emphasis and organization.

* **Collapsible Sections:** Indicated by "(click to expand/collapse)" next to section headers like "How to Pick Good Clues/Indications".

### Detailed Analysis / Content Details

The text content is transcribed and structured below.

**Top Section:**

* "Thanks for participating in this HIT!"

* **Your task:** "In this task, we are asking you to put on your detective thinking cap. Given an image, find **observable clues** that might indicate information about a person, situation, or setting that may not be necessarily obvious in the image (we will call this **indication**). You will do this in two steps:"

**PART 1: Instructions**

* **PART 1: Examine the image and find 3 observable clues.**

* Definition: "An **observable clue** MUST be something in the picture (e.g., an open algebra math workbook)"

* Step 1: "Choose observations from the drop-down box (1 is already chosen for you) and **write down** your clues you observed in the box to the right. You can write up to 3 clues per observation."

* Step 2: "Draw bounding boxes for the clues (you may draw multiple if there are multiple things you observed)."

* Step 3: "Repeat steps 1&2 for all of the observations you want to make."

* "Then, move to Part 2 to provide indications for each of the clues you provided."

**PART 2: Instructions**

* **PART 2: Examine the clues you found and provide indications.**

* Definition: "An **indication** is a bit of **non-obvious** information about what the clue means to you (e.g., an open algebra math workbook might indicate there might be a high school student who was just studying.)"

* Actions:

* "Write down the indication."

* "Rate how likely you think your indications are to be true given the clue"

* **certain**: "it's obvious or I'm very certain what I said is true (I'm totally willing to bet on it)."

* **likely**: "it is likely or probable that what I said is true (both moderate and strong likelihood uncertainties belong here)."

* **possible**: "it's in the realm of possibility but it's an educated guess at best."

* Note: "We aren't looking for a particular distribution on the ratings nor do we value one rating over another. If you turn in all 'possible's for an image, for example, that's just as acceptable as turning in one of each!"

**Bonus & Rules:**

* "Five examples are given below the instruction panel."

* **Bonus opportunity:** "you can provide up to 2 additional clues/indication sets for bonus pay."

* **Rules:**

1. **For observable clues:**

* "Write a noun phrase: 'the book', 'gray skies', 'a group of people'"

* "When possible, please specify details relevant as to where the object, entity, or thing:" (Examples given: "the book" -> "the book under the table").

* "You can provide the **same observable clue** multiple times, but please *tailor your clue to the observation made* (see Example 2)."

* "When bounding the clues, please remember: the boxes **do not have to be perfect**! 1-3 items in a picture is plenty for bounding. Do not spend too much time on this step!"

2. **For indications:**

* "Write in complete sentences"

* "Make the indications realistic"

* "Please **DO NOT** write indications that **contradict** each other." (Example given: "this is a gathering of family members" and "this is a work event" cannot both be true).

3. "At this stage, we are **NOT** interested in plain descriptions of what's going on, what people are doing, and what the people are like. Please see *How to Pick Good Clues/Indications* for clarification."

4. "Please use weather as an indication if it's a salient aspect of the image or you have nothing else you can talk about. Please use weather as the last resort. Example 4 observation 2 is an example of a weather observation."

5. "Please avoid gendered pronouns like 'he', 'she', 'him' or 'her'. If you desire, you can use 'they'."

6. "Read through example and how-to sections below!"

**Bottom Section:**

* A collapsible section header: "How to Pick Good Clues/Indications (click to expand/collapse)"

* A partially visible header: "Examples (click to expand/collapse)"
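Two of the transcribed rules are mechanical enough to check automatically: the pronoun rule (Rule 5) and the limit of 3 required sets plus up to 2 bonus sets. The sketch below is a hypothetical Python check; the function names, the word-level pronoun heuristic, and the choice to reject out-of-range counts are assumptions, not part of the HIT. Rules such as "make the indications realistic" or "do not contradict each other" need human judgment and are not covered.

```python
from __future__ import annotations

import re

# Only the four examples named in Rule 5; "they" is the suggested alternative.
GENDERED_PRONOUNS = {"he", "she", "him", "her"}

REQUIRED_SETS = 3   # Part 1: "find 3 observable clues"
MAX_BONUS_SETS = 2  # "up to 2 additional clues/indication sets for bonus pay"


def gendered_pronouns_in(text: str) -> list[str]:
    """Return the gendered pronouns found in text, in order (simple word-level heuristic)."""
    words = re.findall(r"[a-z]+", text.lower())
    return [w for w in words if w in GENDERED_PRONOUNS]


def bonus_set_count(num_sets: int) -> int:
    """Return how many submitted sets go beyond the required three.

    Hypothetical policy: fewer than 3 sets or more than 3 + 2 sets is rejected.
    """
    if num_sets < REQUIRED_SETS:
        raise ValueError(f"expected at least {REQUIRED_SETS} sets, got {num_sets}")
    if num_sets > REQUIRED_SETS + MAX_BONUS_SETS:
        raise ValueError(
            f"expected at most {REQUIRED_SETS + MAX_BONUS_SETS} sets, got {num_sets}"
        )
    return num_sets - REQUIRED_SETS


print(gendered_pronouns_in("She was studying for her exam."))   # ['she', 'her']
print(gendered_pronouns_in("They were studying for an exam."))  # []
print(bonus_set_count(5))  # 2
```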

### Key Observations

* **Structured Pedagogy:** The instructions are meticulously designed to guide a user through a specific analytical process: observation -> inference -> confidence rating.

* **Emphasis on Non-Obvious Inference:** The core task is explicitly not to describe the image, but to derive hidden meaning ("indication") from visible evidence ("clue").

* **Rules to Ensure Quality and Consistency:** Rules prohibit contradictions, mandate complete sentences, and discourage plain description. Rule 4 about using weather as a "last resort" is a specific, pragmatic guideline.

* **Incentive Structure:** A bonus opportunity is offered for additional work, a common feature in crowdsourcing platforms.

* **Accessibility & Clarity:** The use of bold, examples, and clear step-by-step lists aims to minimize worker error and confusion.

### Interpretation

This document is a protocol for gathering structured, inferential data about images from human workers. It operationalizes a form of abductive reasoning (forming the best explanation for a set of observations) within a controlled framework.

* **Purpose:** The likely goal is to train or test AI models on tasks requiring contextual understanding and commonsense reasoning, moving beyond simple object detection. By collecting many workers' clues and inferences for the same image, the requesters can build a dataset of plausible interpretations.

* **Relationship Between Elements:** The "clue" is the grounded, visual evidence. The "indication" is the hypothesis. The "likelihood rating" is a measure of the hypothesis's strength given the evidence. This mirrors a scientific or investigative process.

* **Notable Patterns in the Instructions:** The rules actively combat common pitfalls in human annotation: description over inference (Rule 3), internal inconsistency (Rule 2), and biased language (Rule 5). The instruction to use weather only as a last resort (Rule 4) suggests the task is focused on social, personal, or situational inferences rather than environmental ones, unless unavoidable.

* **Underlying Assumption:** The task assumes that images contain latent information that can be reliably decoded by human observers following a shared methodology. The value of the output lies in the diversity and plausibility of the inferred "indications," not in a single correct answer.
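The dataset-building purpose suggested above can be sketched as grouping many workers' sets by image while keeping every interpretation rather than picking one answer. This is a hypothetical Python illustration: the `Annotation` schema, the ID fields, and the rating tally are assumptions, not something the instructions describe.

```python
from __future__ import annotations

from collections import Counter, defaultdict
from typing import NamedTuple


class Annotation(NamedTuple):
    """One worker's clue/indication set for one image (hypothetical schema)."""
    image_id: str
    worker_id: str
    clue: str
    indication: str
    rating: str  # "certain", "likely", or "possible"


def group_by_image(annotations: list[Annotation]) -> dict[str, list[Annotation]]:
    """Collect every worker's set per image; no single 'correct' answer is chosen."""
    grouped: defaultdict[str, list[Annotation]] = defaultdict(list)
    for ann in annotations:
        grouped[ann.image_id].append(ann)
    return dict(grouped)


def rating_counts(annotations: list[Annotation]) -> Counter[str]:
    """Count how often each likelihood label was used across the given sets."""
    return Counter(ann.rating for ann in annotations)


data = [
    Annotation("img-1", "w1", "an open algebra math workbook",
               "A high school student might have just been studying.", "likely"),
    Annotation("img-1", "w2", "the book under the table",
               "Someone may have been reading here recently.", "possible"),
    Annotation("img-2", "w1", "a painting hanging on the wall",
               "This is probably someone's home.", "likely"),
]
by_image = group_by_image(data)
print(len(by_image["img-1"]))         # 2
print(rating_counts(data)["likely"])  # 2
```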


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Screenshot: Task Instructions for Image Analysis

### Overview

The image displays a structured task instruction page for a user study or data annotation task. The content is organized into sections with clear headings, bullet points, and formatting (bold, colored text) to guide participants through a two-part process involving image analysis and clue/indication extraction.

---

### Components/Axes

- **Header**: Blue banner with text "Instructions (click to expand/collapse)" and a thank-you message for participating in a "HIT" (likely Amazon Mechanical Turk task).

- **Main Content**:

- **Section 1**: "Your task" with a directive to analyze an image for observable clues and indications.

- **Section 2**: "PART 1" with three steps:

1. Identify 3 observable clues (e.g., "an open algebra math workbook").

2. Draw bounding boxes around clues.

3. Repeat steps 1–2 for all observations.

- **Section 3**: "PART 2" with instructions to provide indications (interpretations of clues) and rate their likelihood (certain, likely, possible).

- **Bonus Opportunity**: Up to 2 additional clue/indication sets for bonus pay.

- **Rules**: Six numbered guidelines for clue/indication formatting, including:

- Use noun phrases for clues (e.g., "the book under the table").

- Avoid contradictions in indications.

- Exclude plain descriptions of actions or thoughts.

- Use weather observations only if salient, otherwise as a last resort.

- Avoid gendered pronouns.

- Review examples and "How to Pick Good Clues/Indications" section.

---

### Detailed Analysis

#### Textual Content

- **Header**:

- "Instructions (click to expand/collapse)"

- "Thanks for participating in this HIT!"

- **Your Task**:

- "In this task, we are asking you to put on your detective thinking cap. Given an image, find observable clues that might indicate information about a person, situation, or setting that may not be necessarily obvious in the image (we will call this indication)."

- **PART 1**:

- "Examine the image and find 3 observable clues."

- "An observable clue MUST be something in the picture (e.g., an open algebra math workbook)."

- Steps 1–3 emphasize iterative analysis and bounding box annotation.

- **PART 2**:

- "For each observable clue, provide an indication."

- Indications are non-obvious interpretations (e.g., "an open algebra math workbook might indicate a high school student studying").

- Likelihood ratings: certain, likely, possible.

- **Bonus Opportunity**: Up to 2 additional clue/indication sets for bonus pay.

- **Rules**:

1. **Observable Clues**: Noun phrases with spatial details (e.g., "the book under the table").

2. **Indications**: Complete sentences, realistic, non-contradictory.

3. Exclude plain descriptions of actions/thoughts.

4. Use weather observations only if salient, otherwise as a last resort.

5. Avoid gendered pronouns (use "they" if needed).

6. Review examples and "How to Pick Good Clues/Indications" section.

---

### Key Observations

- **Formatting**: Critical terms like "observable clues" (blue) and "indication" (orange) are color-coded for emphasis.

- **Iterative Process**: Participants must analyze images in two phases: clue identification (Part 1) and interpretation (Part 2).

- **Quality Control**: Rules enforce specificity (e.g., spatial details for clues) and consistency (e.g., avoiding contradictions).

- **Incentive Structure**: Bonus pay for additional clue/indication sets encourages thoroughness.

---

### Interpretation

This task appears to be part of a crowdsourced data collection effort, likely for training machine learning models or validating human reasoning. Participants are asked to:

1. **Identify Clues**: Extract explicit, observable details from images (e.g., objects, settings).

2. **Generate Indications**: Infer implicit meanings or contexts (e.g., linking a math workbook to a student).

3. **Rate Certainty**: Assign likelihood scores to indications to gauge confidence.

The structured rules ensure data quality by standardizing clue descriptions (noun phrases with spatial context) and filtering out irrelevant or contradictory interpretations. The bonus opportunity incentivizes participants to provide deeper analysis, potentially enriching the dataset. The task’s focus on "non-obvious" indications suggests an emphasis on uncovering latent patterns or contextual inferences, which could be critical for applications like scene understanding or behavioral prediction.
