## Document: Instructions for a HIT (Human Intelligence Task)

### Overview

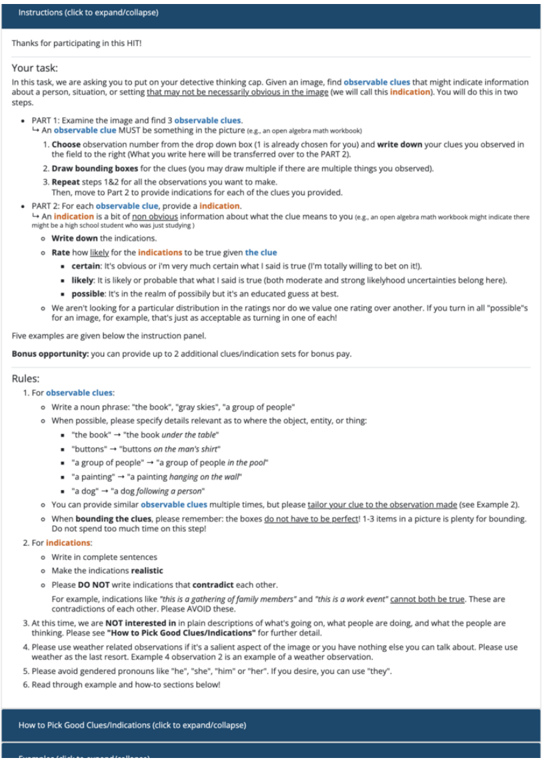

The image presents a document outlining instructions for a crowdsourcing task, specifically a Human Intelligence Task (HIT) on Amazon Mechanical Turk, as the footer and the reference to the Mechanical Turk forums indicate. The task involves identifying observable clues within an image and writing indications: inferences about what those clues might suggest. The document details the process in two parts, provides rules for identifying clues, and includes a rating scale for the certainty of indications.

### Components

The document is structured with clear headings and bullet points. Key components include:

* **Title:** "Instructions (click to expand/collapse)"

* **Introduction:** A thank you message and a brief description of the task.

* **Part 1:** Instructions for examining the image and identifying observable clues.

* **Part 2:** Instructions for providing indications based on the clues.

* **Rating Scale:** A scale for assessing the certainty of indications (certain, likely, possible).

* **Rules:** Guidelines for identifying and describing observable clues.

* **Bonus Opportunity:** Information about earning bonus points.

* **Footer:** Copyright information.

### Content Details

Here's a transcription of the document's content, broken down by section:

**Introduction:**

"Thanks for participating in this HIT!"

**Part 1: Examine the image and find 3 observable clues.**

"An observable clue MUST be something in the picture (e.g., an open algebra math workbook)"

1. Choose the observation number from the drop-down box (it is already chosen for you) and write the clue you observed in the field to the right. (What you write here will be carried over to Part 2.)

2. Draw bounding boxes for the clues (you may draw multiple if there are multiple things you observed).

3. Repeat steps 1 & 2 for all the observations you want to make.

Then, move to Part 2 to provide indications for each of the clues you provided.

**Part 2: For each observable clue, provide an indication.**

"An indication is a bit of non-obvious information about what the clue means to you (e.g., an open algebra math workbook might indicate that a high school student was just studying)."

* Write down the indications.

* Rate how likely each indication is to be true, given the clue:

  * **certain:** it's obvious, or I'm very certain that what I said is true (I'm totally willing to bet on it).

  * **likely:** it is likely or probable that what I said is true (both moderate and strong likelihoods belong here).

  * **possible:** it's in the realm of possibility, but it's an educated guess at best.

* We aren't looking for a particular distribution in the ratings, nor do we value one rating over another. If you turn in all "possible" ratings for an image, for example, that's just as acceptable as turning in one of each!

**Bonus opportunity:**

"You can provide up to 2 additional clue/indication sets for bonus pay."

**Rules:**

1. For observable clues:

* Write a noun phrase: "the book", "gray skies", "a group of people"

* When possible, please specify details about where the object, entity, or thing is:

  * "the book" -> "the book under the table"

  * "buttons" -> "buttons on the man's shirt"

  * "a group of people" -> "a group of people"

  * "a painting" -> "a painting hanging on the wall"

  * "a dog" -> "a dog following a person"

* You can use the same countable object multiple times, but please tailor your clue to the instance you saw (e.g., if you saw two dogs, you can say "a dog" or "dogs").

2. For indications:

* Write a complete thought/sentence.

* Do not simply restate the clue.

* Do not write too much detail.

**Example:**

* **Observable clue:** a wedding cake

* **Indication:** someone is getting married.

* **Rating:** certain
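To make the structure of a single submission concrete, here is a minimal Python sketch of how one clue/indication/rating set might be recorded and checked against the rules above. The `Rating` enum, the `ClueSet` class, and the `violations` and `bonus_sets` helpers are hypothetical illustrations, not part of the HIT. The restatement check is a deliberately crude heuristic, assuming that an indication which merely repeats the clue text counts as "simply restating" it; the actual task relies on human judgment.

```python
from dataclasses import dataclass
from enum import Enum


class Rating(Enum):
    """The three certainty levels from Part 2 of the instructions."""
    CERTAIN = "certain"
    LIKELY = "likely"
    POSSIBLE = "possible"


@dataclass
class ClueSet:
    """Hypothetical record for one observable clue and its indication."""
    clue: str        # noun phrase, e.g. "a wedding cake"
    indication: str  # complete sentence, e.g. "Someone is getting married."
    rating: Rating


REQUIRED_SETS = 3   # Part 1 asks for 3 observable clues
MAX_BONUS_SETS = 2  # "up to 2 additional clue/indication sets for bonus pay"


def violations(item: ClueSet) -> list[str]:
    """Return rule problems found by simple, illustrative heuristic checks."""
    problems = []
    clue = item.clue.strip().lower()
    # Ignore case and a trailing period when comparing against the clue.
    indication = item.indication.strip().lower().rstrip(".")
    if not clue:
        problems.append("clue is empty")
    if not indication:
        problems.append("indication is empty")
    elif indication == clue:
        # Crude stand-in for the rule "Do not simply restate the clue."
        problems.append("indication restates the clue")
    return problems


def bonus_sets(submitted: int) -> int:
    """Count sets beyond the required 3, capped at the 2 bonus sets allowed."""
    return min(max(submitted - REQUIRED_SETS, 0), MAX_BONUS_SETS)


example = ClueSet("a wedding cake", "Someone is getting married.", Rating.CERTAIN)
print(violations(example))  # []
print(violations(ClueSet("a dog", "A dog.", Rating.POSSIBLE)))  # ['indication restates the clue']
print(bonus_sets(5))  # 2
```

Representing the rating as an enum, rather than a free-text field, mirrors the fact that the instructions offer exactly three labels and express no preference among them.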

**Additional Notes:**

"If you have any questions, please contact us through the Mechanical Turk forums."

**Footer:**

"© 2014 Mechanical Turk, Inc. or its affiliates. All Rights Reserved."

### Key Observations

* The document is highly structured and provides clear, step-by-step instructions.

* The emphasis is on identifying *non-obvious* information (indications) based on observable clues.

* The rating scale allows for nuanced assessment of the certainty of indications.

* The rules are designed to ensure the quality and consistency of the responses.

* The document is geared towards a crowdsourcing platform; the footer and the reference to the Mechanical Turk forums point to Amazon Mechanical Turk.

### Interpretation

This document outlines a task designed to leverage human pattern recognition and inference skills. The core idea is to move beyond simply identifying objects in an image (observable clues) to interpreting their potential meaning (indications). The rating scale is crucial because it acknowledges that interpretations are rarely certain and allows workers to express their confidence level. The rules are in place to prevent trivial responses (e.g., simply restating the clue) and to encourage indications that are complete sentences yet concise. The bonus opportunity (up to two extra clue/indication sets) incentivizes workers to provide additional insights.

The task is likely used for data annotation or to gather subjective assessments of images for machine learning purposes. For example, the data collected could be used to train a computer vision system to understand the context of images or to predict human reactions to visual stimuli. The document demonstrates a thoughtful approach to crowdsourcing, recognizing the importance of clear instructions, quality control, and worker motivation.