## Bar Chart: OpenAI RE Interview Coding Performance

### Overview

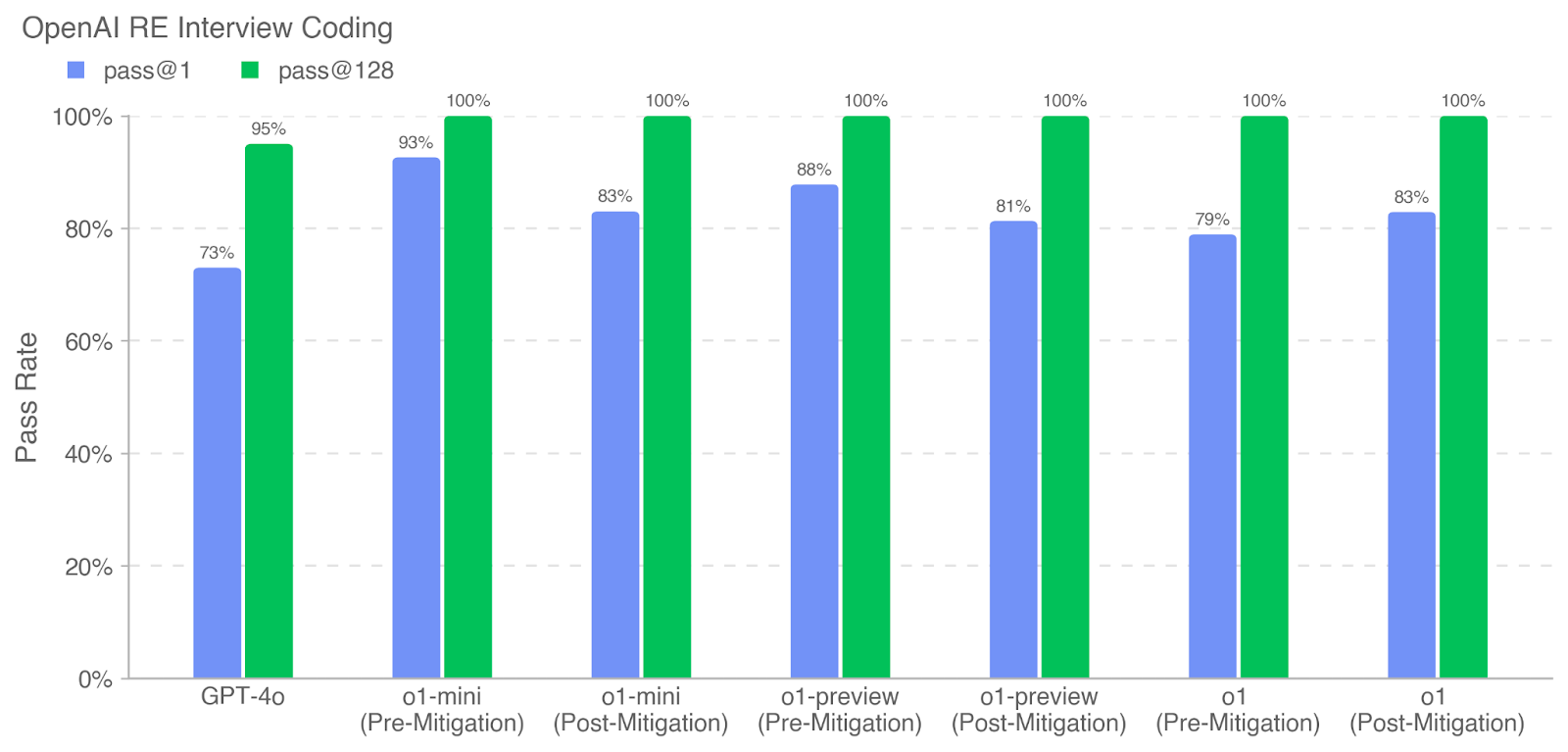

This is a grouped bar chart titled "OpenAI RE Interview Coding" that compares the performance of different AI models on a coding interview task. The chart measures "Pass Rate" as a percentage, comparing two evaluation metrics: `pass@1` (blue bars) and `pass@128` (green bars) across seven distinct model conditions.

### Components/Axes

* **Title:** "OpenAI RE Interview Coding" (top-left).

* **Legend:** Located at the top-left, below the title.

    * Blue square: `pass@1`

    * Green square: `pass@128`

* **Y-Axis:** Labeled "Pass Rate". Scale runs from 0% to 100% in increments of 20% (0%, 20%, 40%, 60%, 80%, 100%). Horizontal grid lines are present at each 20% increment.

* **X-Axis:** Lists seven model conditions. Each condition has a pair of bars (blue and green).

    1. GPT-4o

    2. o1-mini (Pre-Mitigation)

    3. o1-mini (Post-Mitigation)

    4. o1-preview (Pre-Mitigation)

    5. o1-preview (Post-Mitigation)

    6. o1 (Pre-Mitigation)

    7. o1 (Post-Mitigation)

### Detailed Analysis

The chart presents the following pass rate data for each model condition. Values are read directly from the labels atop each bar.

| Model Condition | pass@1 (Blue Bar) | pass@128 (Green Bar) |
| :--- | :--- | :--- |
| **GPT-4o** | 73% | 95% |
| **o1-mini (Pre-Mitigation)** | 93% | 100% |
| **o1-mini (Post-Mitigation)** | 83% | 100% |
| **o1-preview (Pre-Mitigation)** | 88% | 100% |
| **o1-preview (Post-Mitigation)** | 81% | 100% |
| **o1 (Pre-Mitigation)** | 79% | 100% |
| **o1 (Post-Mitigation)** | 83% | 100% |
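The gap between the two metrics can be checked with a few lines of arithmetic. The sketch below simply transcribes the values from the table above; the names `PASS_RATES` and `gaps` are illustrative and do not come from the chart or its source.

```python
# Pass rates in percent, transcribed from the bar labels in the table above.
# Each value is (pass@1, pass@128).
PASS_RATES = {
    "GPT-4o": (73, 95),
    "o1-mini (Pre-Mitigation)": (93, 100),
    "o1-mini (Post-Mitigation)": (83, 100),
    "o1-preview (Pre-Mitigation)": (88, 100),
    "o1-preview (Post-Mitigation)": (81, 100),
    "o1 (Pre-Mitigation)": (79, 100),
    "o1 (Post-Mitigation)": (83, 100),
}


def gaps(rates):
    """Return {condition: pass@128 - pass@1} in percentage points."""
    return {name: p128 - p1 for name, (p1, p128) in rates.items()}


# Print one line per condition so the spread of the gap is easy to scan.
for name, gap in gaps(PASS_RATES).items():
    print(f"{name:<30} gap = {gap} pp")
```

Under these values the gap is smallest for o1-mini (Pre-Mitigation) and largest for GPT-4o, so it is not uniform across conditions.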

**Trend Verification:**

* **pass@128 (Green Bars):** This series shows a consistently high, near-perfect trend. All green bars are at 100%, except for GPT-4o, which is slightly lower at 95%. The visual trend is a flat line at the ceiling of the chart for all "o1" family models.

* **pass@1 (Blue Bars):** This series spans 20 percentage points across conditions and shows no monotonic trend along the x-axis. The highest single-attempt pass rate is for `o1-mini (Pre-Mitigation)` at 93%. The lowest is for `GPT-4o` at 73%. For the "o1-mini" and "o1-preview" models, the `pass@1` score decreases from the "Pre-Mitigation" to the "Post-Mitigation" condition. For the "o1" model, the score increases slightly from Pre- to Post-Mitigation.

### Key Observations

1. **Metric Disparity:** `pass@128` exceeds `pass@1` for every condition, but the size of the gap varies: from 7 percentage points for `o1-mini (Pre-Mitigation)` (93% vs. 100%) to 22 percentage points for `GPT-4o` (73% vs. 95%).

2. **Ceiling Effect:** The `pass@128` metric hits a ceiling of 100% for all models in the "o1" family (mini, preview, and base), regardless of mitigation status.

3. **Mitigation Impact:** The effect of "mitigation" on the `pass@1` score is inconsistent across model families.

    * For **o1-mini**, mitigation correlates with a **decrease** of 10 percentage points (93% → 83%).

    * For **o1-preview**, mitigation correlates with a **decrease** of 7 percentage points (88% → 81%).

    * For **o1**, mitigation correlates with a slight **increase** of 4 percentage points (79% → 83%).

4. **Model Comparison:** In the `pass@1` metric, `o1-mini (Pre-Mitigation)` (93%) outperforms `GPT-4o` (73%) by a significant margin of 20 percentage points.
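The deltas quoted in the observations above can be reproduced directly from the `pass@1` values. This is a minimal sketch; `PASS_AT_1`, `GPT_4O_PASS_AT_1`, and `mitigation_deltas` are hypothetical names introduced only for illustration.

```python
# pass@1 values in percent, before and after mitigation, as quoted above.
PASS_AT_1 = {
    "o1-mini": {"pre": 93, "post": 83},
    "o1-preview": {"pre": 88, "post": 81},
    "o1": {"pre": 79, "post": 83},
}
GPT_4O_PASS_AT_1 = 73  # baseline condition with no pre/post split


def mitigation_deltas(scores):
    """Return {model: post - pre} in percentage points (negative = decrease)."""
    return {model: s["post"] - s["pre"] for model, s in scores.items()}


deltas = mitigation_deltas(PASS_AT_1)
for model, delta in deltas.items():
    print(f"{model:<12} {delta:+d} pp")

# Margin of the best pass@1 condition over the GPT-4o baseline.
margin = PASS_AT_1["o1-mini"]["pre"] - GPT_4O_PASS_AT_1
print(f"o1-mini (Pre-Mitigation) vs GPT-4o: {margin:+d} pp")
```

Keeping the sign on each delta makes the mixed direction of the mitigation effect visible at a glance.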

### Interpretation

This chart compares OpenAI's "o1" series models before and after "mitigations" (likely safety-related), with GPT-4o as a baseline, on a coding interview evaluation. The chart does not expand "RE"; it most plausibly stands for "Research Engineer".

* **What the data suggests:** Under the usual definition, `pass@128` counts a task as solved if any of 128 sampled attempts is correct, so a 100% score means every o1-family condition produced at least one correct solution for each task within that budget. The `pass@1` metric reflects a single attempt, which is closer to practical use and is more sensitive to differences between models and to the applied mitigations.

* **Relationship between elements:** The gap between the two metrics, which ranges from 7 to 22 percentage points depending on the condition, indicates that these models can solve the tasks given enough samples (`pass@128`) but are less reliable and more variable in a single attempt (`pass@1`). The mitigations appear to trade some single-attempt performance (for o1-mini and o1-preview) for other unstated benefits (likely safety or alignment), though this trade-off is not uniform: for the base o1 model, `pass@1` rises after mitigation.

* **Notable Anomalies:** The most striking anomaly is the perfect 100% `pass@128` score across all o1 models, suggesting the evaluation task, while difficult, is fully within the capability boundary of these systems when given sufficient sampling. The inconsistent direction of the mitigation effect on `pass@1` across different model versions warrants further investigation into the specific nature of the mitigations applied.
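To make the relationship between `pass@1` and `pass@128` concrete, here is a minimal sketch of two common ways to reason about pass@k. The chart does not state how its values were computed, so both functions are assumptions for illustration: `pass_at_k_independent` treats each attempt on a task as an independent trial with success probability `p`, and `pass_at_k_estimate` is the combinatorial estimator popularized by the HumanEval benchmark, applied per task to `n` samples of which `c` are correct.

```python
from math import comb


def pass_at_k_independent(p, k):
    """Chance that at least one of k independent attempts succeeds,
    when each attempt succeeds with probability p (a simplifying assumption)."""
    return 1.0 - (1.0 - p) ** k


def pass_at_k_estimate(n, c, k):
    """Per-task estimate 1 - C(n - c, k) / C(n, k), given n samples with c correct.

    Requires n >= k. If fewer than k samples are incorrect, every size-k
    subset contains a correct sample, so the estimate is exactly 1.
    """
    if n < k:
        raise ValueError("need at least k samples")
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# Even a task solved only 5% of the time per attempt is almost always
# solved at least once in 128 attempts under the independence assumption.
print(pass_at_k_independent(0.05, 128))

# With exactly 128 samples, a single correct one already gives pass@128 = 1.
print(pass_at_k_estimate(n=128, c=1, k=128))
```

This illustrates why `pass@128` can saturate at 100% while `pass@1` still separates the conditions: the many-sample metric only requires that each task be solvable occasionally, not reliably.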