TECHNICAL ASSET FINGERPRINT

39345e466f3b26475cf01acc

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

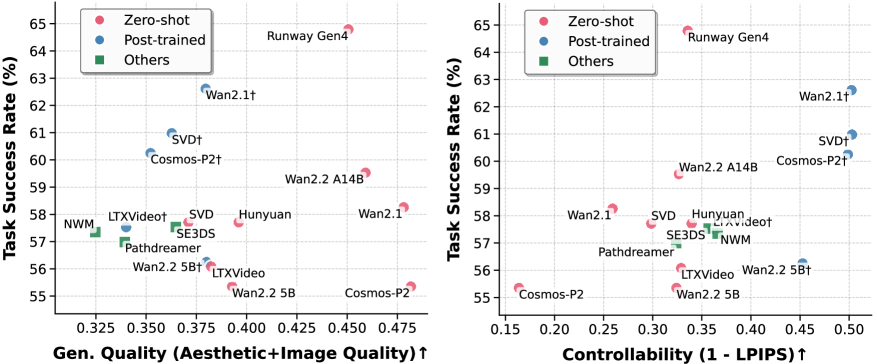

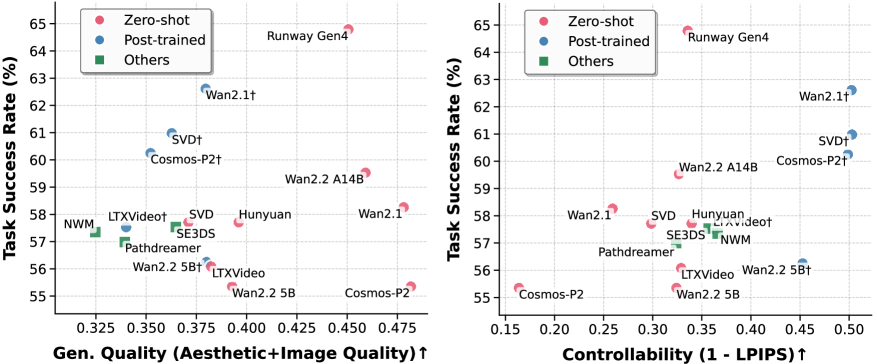

## Scatter Plot: Task Success Rate vs. Quality/Controllability

### Overview

The image contains two scatter plots comparing the Task Success Rate (%) of different models against two different metrics: General Quality (Aesthetic+Image Quality) and Controllability (1 - LPIPS). The plots show the performance of Zero-shot, Post-trained, and Other models.

### Components/Axes

**Left Scatter Plot:**

* **Title:** Implicitly "Task Success Rate vs. General Quality"

* **Y-axis:** Task Success Rate (%), ranging from 55% to 65% with gridlines at each percentage point.

* **X-axis:** Gen. Quality (Aesthetic+Image Quality) ↑, ranging from approximately 0.325 to 0.475, with gridlines at intervals of 0.025. The upward arrow indicates that higher values are better.

* **Legend (Top-Left):**

* Red circle: Zero-shot

* Blue circle: Post-trained

* Green square: Others

**Right Scatter Plot:**

* **Title:** Implicitly "Task Success Rate vs. Controllability"

* **Y-axis:** Task Success Rate (%), ranging from 55% to 65% with gridlines at each percentage point.

* **X-axis:** Controllability (1 - LPIPS) ↑, ranging from approximately 0.15 to 0.50, with gridlines at intervals of 0.05. The upward arrow indicates that higher values are better.

* **Legend (Top-Left):**

* Red circle: Zero-shot

* Blue circle: Post-trained

* Green square: Others

### Detailed Analysis

**Left Scatter Plot (Task Success Rate vs. General Quality):**

* **Zero-shot (Red):**

* Runway Gen4: Task Success Rate ~64.8%, Gen. Quality ~0.47

* Wan2.1: Task Success Rate ~58.2%, Gen. Quality ~0.46

* Wan2.2 A14B: Task Success Rate ~59.5%, Gen. Quality ~0.43

* Hunyuan: Task Success Rate ~58%, Gen. Quality ~0.40

* SVD: Task Success Rate ~58%, Gen. Quality ~0.37

* LTXVideo: Task Success Rate ~56%, Gen. Quality ~0.36

* Wan2.2 5B: Task Success Rate ~55.8%, Gen. Quality ~0.41

* Cosmos-P2: Task Success Rate ~55.5%, Gen. Quality ~0.47

* **Post-trained (Blue):**

* Wan2.1†: Task Success Rate ~62.5%, Gen. Quality ~0.39

* SVD†: Task Success Rate ~60.8%, Gen. Quality ~0.36

* Cosmos-P2†: Task Success Rate ~60.2%, Gen. Quality ~0.36

* LTXVideo†: Task Success Rate ~57.5%, Gen. Quality ~0.35

* Wan2.2 5B†: Task Success Rate ~56.2%, Gen. Quality ~0.39

* **Others (Green):**

* NWM: Task Success Rate ~57.3%, Gen. Quality ~0.32

* SE3DS: Task Success Rate ~57.2%, Gen. Quality ~0.36

* Pathdreamer: Task Success Rate ~57%, Gen. Quality ~0.35

**Right Scatter Plot (Task Success Rate vs. Controllability):**

* **Zero-shot (Red):**

* Runway Gen4: Task Success Rate ~64.8%, Controllability ~0.32

* Wan2.2 A14B: Task Success Rate ~59.5%, Controllability ~0.28

* Wan2.1: Task Success Rate ~58.2%, Controllability ~0.23

* Hunyuan: Task Success Rate ~58%, Controllability ~0.34

* SVD: Task Success Rate ~58%, Controllability ~0.32

* Cosmos-P2: Task Success Rate ~55.5%, Controllability ~0.17

* Wan2.2 5B: Task Success Rate ~55.8%, Controllability ~0.37

* **Post-trained (Blue):**

* Wan2.1†: Task Success Rate ~62.5%, Controllability ~0.45

* SVD†: Task Success Rate ~60.8%, Controllability ~0.48

* Cosmos-P2†: Task Success Rate ~60.2%, Controllability ~0.46

* Wan2.2 5B†: Task Success Rate ~56.2%, Controllability ~0.40

* LTXVideo†: Task Success Rate ~57.5%, Controllability ~0.38

* **Others (Green):**

* NWM: Task Success Rate ~57.3%, Controllability ~0.35

* SE3DS: Task Success Rate ~57.2%, Controllability ~0.33

* Pathdreamer: Task Success Rate ~57%, Controllability ~0.28

### Key Observations

* Runway Gen4 (Zero-shot) achieves the highest Task Success Rate in both plots, but its Controllability is relatively low.

* Post-trained models (Wan2.1†, SVD†, Cosmos-P2†) generally show a good balance between Task Success Rate and both General Quality and Controllability.

* The "Others" category models (NWM, SE3DS, Pathdreamer) tend to cluster in the lower-left region of both plots, indicating lower performance in both Task Success Rate and the respective quality metrics.

* There is a positive correlation between Task Success Rate and both General Quality and Controllability, although the relationship is not strictly linear.
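The correlation claim above can be checked roughly against the approximate coordinates listed under Detailed Analysis. The following is a minimal sketch, assuming those eyeballed values are close enough to the plotted points; the `pearson` helper and the variable names are illustrative and do not come from the source figure. Zero-shot LTXVideo has no Controllability value in the list, so it appears only in the left-plot data.

```python
from math import sqrt

# (x metric, Task Success Rate %) pairs copied from the lists above; all approximate.
gen_quality = [
    (0.47, 64.8), (0.46, 58.2), (0.43, 59.5), (0.40, 58.0),  # zero-shot
    (0.37, 58.0), (0.36, 56.0), (0.41, 55.8), (0.47, 55.5),  # zero-shot
    (0.39, 62.5), (0.36, 60.8), (0.36, 60.2), (0.35, 57.5),  # post-trained
    (0.39, 56.2),                                            # post-trained
    (0.32, 57.3), (0.36, 57.2), (0.35, 57.0),                # others
]
controllability = [
    (0.32, 64.8), (0.28, 59.5), (0.23, 58.2), (0.34, 58.0),  # zero-shot
    (0.32, 58.0), (0.17, 55.5), (0.37, 55.8),                # zero-shot
    (0.45, 62.5), (0.48, 60.8), (0.46, 60.2), (0.40, 56.2),  # post-trained
    (0.38, 57.5),                                            # post-trained
    (0.35, 57.3), (0.33, 57.2), (0.28, 57.0),                # others
]


def pearson(points):
    """Pearson correlation coefficient for a list of (x, y) pairs."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in points)
    var_x = sum((x - mean_x) ** 2 for x, _ in points)
    var_y = sum((y - mean_y) ** 2 for _, y in points)
    return cov / sqrt(var_x * var_y)


r_quality = pearson(gen_quality)
r_control = pearson(controllability)
print(f"Gen. Quality vs. success:    r = {r_quality:.2f}")
print(f"Controllability vs. success: r = {r_control:.2f}")
```

With the values as transcribed, both coefficients come out positive but well below 1, which fits the "not strictly linear" caveat. Because the inputs are read off a plot, the numbers are only indicative.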

### Interpretation

The scatter plots compare the models on Task Success Rate, General Quality, and Controllability. The data suggests that post-training improves both Task Success Rate and Controllability: every post-trained (†) model whose base version is listed in the right plot sits above and to the right of that base version. General Quality, by contrast, tends to drop after post-training (Wan2.1 falls from ~0.46 to ~0.39, Cosmos-P2 from ~0.47 to ~0.36), so there is a trade-off between General Quality and Controllability for some models. Runway Gen4 illustrates the same tension without post-training: it has the highest Task Success Rate and high General Quality (~0.47) but relatively low Controllability (~0.32). The plots highlight the importance of considering multiple metrics when evaluating models, as optimizing for one metric may come at the expense of others. The "Others" category models appear to be less effective than the best Zero-shot and Post-trained models on these metrics.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Scatter Plots: Model Performance Comparison

### Overview

The image presents two scatter plots side-by-side, comparing the performance of various generative models. The left plot assesses "Task Success Rate" against "Gen. Quality (Aesthetic+Image Quality)", while the right plot assesses "Task Success Rate" against "Controllability (1 - LPIPS)". Each plot uses color-coding to differentiate between models based on their training method: Zero-shot, Post-trained, and Others.

### Components/Axes

Both plots share the following components:

* **X-axis:** Represents a performance metric.

* Left Plot: "Gen. Quality (Aesthetic+Image Quality)↑" ranging from approximately 0.325 to 0.475.

* Right Plot: "Controllability (1 - LPIPS)↑" ranging from approximately 0.15 to 0.50.

* **Y-axis:** "Task Success Rate (%)" ranging from approximately 55% to 65%.

* **Legend (Top-Left of each plot):**

* Red Circles: "Zero-shot"

* Blue Circles: "Post-trained"

* Green Squares: "Others"

* **Data Points:** Represent individual models, positioned according to their Task Success Rate and the respective performance metric.

### Detailed Analysis

**Left Plot: Gen. Quality vs. Task Success Rate**

* **Runway Gen4 (Red):** Approximately (0.46, 64.5%).

* **Wan2.1† (Blue):** Approximately (0.41, 62.5%).

* **Wan2.2 A14B (Blue):** Approximately (0.425, 60%).

* **Cosmos-P2† (Blue):** Approximately (0.38, 60.5%).

* **SVD† (Blue):** Approximately (0.375, 61%).

* **Hunyuan (Blue):** Approximately (0.40, 58.5%).

* **LTXVideo† (Green):** Approximately (0.36, 58%).

* **SE3DS (Green):** Approximately (0.355, 57.5%).

* **NWM (Green):** Approximately (0.35, 57%).

* **Pathdreamer (Green):** Approximately (0.365, 57.5%).

* **Wan2.2 5B† (Green):** Approximately (0.37, 56%).

* **Wan2.2 5B (Green):** Approximately (0.34, 55.5%).

* **Cosmos-P2 (Green):** Approximately (0.33, 55%).

**Right Plot: Controllability vs. Task Success Rate**

* **Runway Gen4 (Red):** Approximately (0.46, 64.5%).

* **Wan2.1† (Blue):** Approximately (0.35, 58.5%).

* **Wan2.2 A14B (Blue):** Approximately (0.38, 60%).

* **Cosmos-P2† (Blue):** Approximately (0.25, 60.5%).

* **SVD† (Blue):** Approximately (0.30, 61%).

* **Hunyuan (Blue):** Approximately (0.40, 58.5%).

* **LTXVideo† (Green):** Approximately (0.20, 58%).

* **SE3DS (Green):** Approximately (0.22, 57.5%).

* **NWM (Green):** Approximately (0.25, 57%).

* **Pathdreamer (Green):** Approximately (0.28, 57.5%).

* **Wan2.2 5B† (Green):** Approximately (0.32, 56%).

* **Wan2.2 5B (Green):** Approximately (0.30, 55.5%).

* **Cosmos-P2 (Green):** Approximately (0.18, 55%).

**Trends:**

* **Left Plot:** A general upward trend is visible, indicating that higher Gen. Quality tends to correlate with higher Task Success Rate. However, the correlation is not strong, and there is significant overlap between the categories.

* **Right Plot:** A similar upward trend is observed, suggesting that better Controllability is associated with higher Task Success Rate. Again, the correlation is not perfect.

### Key Observations

* **Runway Gen4** consistently performs well in both plots, achieving the highest Task Success Rate.

* **Zero-shot models (Red)** generally outperform "Others" (Green) in both metrics.

* **Post-trained models (Blue)** show a wide range of performance, with some models achieving high scores and others falling behind.

* There is a noticeable cluster of models in the "Others" category with relatively low scores on both metrics.

* The "†" symbol appears next to some model names, but its meaning is not provided in the image.

### Interpretation

The data suggests that both aesthetic/image quality and controllability are important factors influencing the task success rate of generative models. Runway Gen4 appears to be a leading model in terms of overall performance. The distinction between Zero-shot, Post-trained, and Other models highlights the impact of training methodology on model capabilities. The spread of data points within each category indicates that performance is not solely determined by training method, and other factors (e.g., model architecture, dataset) also play a significant role. The lack of a strong correlation between the metrics suggests that optimizing for one metric does not necessarily guarantee improvement in the other. The "†" symbol could indicate a specific characteristic of those models, such as a particular training dataset or a specific task they were evaluated on. Further investigation would be needed to understand its meaning.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot Comparison: Model Performance on Task Success Rate vs. Generative Quality & Controllability

### Overview

The image contains two side-by-side scatter plots. Both plots share the same Y-axis, "Task Success Rate (%)", but compare it against two different X-axis metrics: "Gen. Quality (Aesthetic+Image Quality) ↑" on the left and "Controllability (1 - LPIPS) ↑" on the right. The plots compare the performance of various AI models, categorized into three groups: Zero-shot (pink circles), Post-trained (blue circles), and Others (green squares). The upward arrows (↑) on the X-axis labels indicate that higher values are better.

### Components/Axes

**Common Elements:**

* **Y-axis:** "Task Success Rate (%)". Scale ranges from 55 to 65, with major ticks at every integer.

* **Legend:** Located in the top-left corner of each plot.

* Pink Circle: Zero-shot

* Blue Circle: Post-trained

* Green Square: Others

* **Data Points:** Each point is labeled with a model name. The color and shape correspond to the legend.

**Left Plot:**

* **X-axis:** "Gen. Quality (Aesthetic+Image Quality) ↑". Scale ranges from 0.325 to 0.475, with major ticks at 0.025 intervals.

**Right Plot:**

* **X-axis:** "Controllability (1 - LPIPS) ↑". Scale ranges from 0.15 to 0.50, with major ticks at 0.05 intervals.

### Detailed Analysis

**Left Plot: Task Success Rate vs. Generative Quality**

* **Trend Verification:** There is a general, weak positive trend. Models with higher Generative Quality scores tend to have slightly higher Task Success Rates, but the correlation is not strong, and there is significant scatter.

* **Data Points (Approximate Coordinates - Gen. Quality, Task Success):**

* **Zero-shot (Pink):**

* Runway Gen4: (0.450, 65.0)

* Wan2.2 A14B: (0.450, 59.5)

* Wan2.1: (0.475, 58.3)

* Hunyuan: (0.400, 58.0)

* Wan2.2 5B: (0.400, 55.0)

* Cosmos-P2: (0.475, 55.0)

* **Post-trained (Blue):**

* Wan2.1†: (0.375, 62.5)

* SVD†: (0.360, 61.0)

* Cosmos-P2†: (0.350, 60.0)

* SVD: (0.375, 57.5)

* Pathdreamer: (0.350, 57.0)

* Wan2.2 5B†: (0.375, 56.0)

* **Others (Green):**

* LTXVideo†: (0.350, 57.5)

* SE3DS: (0.365, 57.3)

* LTXVideo: (0.375, 56.5)

* NWM: (0.325, 57.2)

**Right Plot: Task Success Rate vs. Controllability**

* **Trend Verification:** There is a clearer positive trend compared to the left plot. Models with higher Controllability scores generally achieve higher Task Success Rates.

* **Data Points (Approximate Coordinates - Controllability, Task Success):**

* **Zero-shot (Pink):**

* Runway Gen4: (0.325, 65.0)

* Wan2.2 A14B: (0.325, 59.5)

* Wan2.1: (0.275, 58.3)

* SVD: (0.325, 57.8)

* Hunyuan: (0.350, 57.5)

* LTXVideo: (0.325, 56.0)

* Wan2.2 5B: (0.325, 55.5)

* Cosmos-P2: (0.175, 55.0)

* **Post-trained (Blue):**

* Wan2.1†: (0.500, 62.5)

* SVD†: (0.500, 61.0)

* Cosmos-P2†: (0.500, 60.0)

* Wan2.2 5B†: (0.450, 56.0)

* **Others (Green):**

* LTXVideo†: (0.350, 57.5)

* SE3DS: (0.350, 57.3)

* Pathdreamer: (0.300, 57.0)

* NWM: (0.375, 57.2)

### Key Observations

1. **Top Performer:** "Runway Gen4" (Zero-shot) is the clear outlier, achieving the highest Task Success Rate (~65%) in both plots, with high Generative Quality but only moderate Controllability.

2. **Post-training Effect:** Models with the "†" suffix (indicating post-training) consistently show a significant rightward shift on the Controllability axis (right plot) compared to their base versions, while maintaining or slightly improving Task Success Rate. This effect is less pronounced on the Generative Quality axis.

3. **Metric Correlation:** Task Success Rate appears to have a stronger visual correlation with Controllability than with Generative Quality.

4. **Cluster of "Others":** The green "Others" models (NWM, SE3DS, LTXVideo) cluster in a mid-range for both metrics, generally between 56-58% Task Success Rate.

5. **Cosmos-P2 Anomaly:** The base "Cosmos-P2" model has the lowest Controllability score (~0.175) but one of the highest Generative Quality scores (~0.475), indicating a potential trade-off or specialization in its design.
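The post-training effect in observation 2 can be made concrete by subtracting each base model's right-plot coordinates from those of its "†" counterpart. This is a minimal sketch using the approximate readings listed above; the dictionary names are illustrative, and only the four pairs where the "†" model is listed as Post-trained are included.

```python
# Approximate (Controllability, Task Success Rate %) readings from the right plot.
base = {
    "Wan2.1": (0.275, 58.3),
    "SVD": (0.325, 57.8),
    "Cosmos-P2": (0.175, 55.0),
    "Wan2.2 5B": (0.325, 55.5),
}
post_trained = {
    "Wan2.1": (0.500, 62.5),
    "SVD": (0.500, 61.0),
    "Cosmos-P2": (0.500, 60.0),
    "Wan2.2 5B": (0.450, 56.0),
}

# Shift of each post-trained model relative to its base version.
shifts = {
    name: (
        round(post_trained[name][0] - base[name][0], 3),  # change in Controllability
        round(post_trained[name][1] - base[name][1], 1),  # change in success rate (points)
    )
    for name in base
}

for name, (d_ctrl, d_success) in shifts.items():
    print(f"{name:10s} controllability {d_ctrl:+.3f}, success {d_success:+.1f} pts")
```

On these readings all four pairs move right on the Controllability axis, and the Task Success Rate gain ranges from 0.5 points (Wan2.2 5B) to 5.0 points (Cosmos-P2).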

### Interpretation

This comparative analysis suggests several insights about the evaluated models:

* **The Success-Controllability Link:** The stronger trend in the right plot implies that a model's ability to be precisely controlled (as measured by 1-LPIPS) is a more reliable predictor of its overall task success than its raw aesthetic or image quality. This makes intuitive sense for applied tasks where following instructions is paramount.

* **Value of Post-training:** The dramatic improvement in Controllability for post-trained models (†) highlights the effectiveness of this technique for enhancing steerability without sacrificing—and sometimes even improving—task performance. This is a key finding for model development.

* **Performance vs. Specialization:** "Runway Gen4" demonstrates that it's possible to achieve top-tier task success with a zero-shot model, but its controllability is not the highest. Conversely, post-trained models like "Wan2.1†" and "SVD†" achieve the highest controllability scores, suggesting they may be preferable for applications requiring fine-grained user input.

* **Trade-off Identification:** The position of "Cosmos-P2" suggests a model architecture or training focus that prioritizes generative quality over controllability. This isn't inherently negative but defines its use case.

In summary, the data argues that for maximizing task success in this evaluation framework, optimizing for controllability is likely more impactful than optimizing solely for generative quality, and post-training is a highly effective method for achieving that optimization.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plots: Model Performance Comparison

### Overview

The image contains two side-by-side scatter plots comparing model performance across two metrics: **Gen. Quality (Aesthetic+Image Quality)** and **Controllability (1 - LPIPS)**. Both plots share the same y-axis (**Task Success Rate (%)**), while the x-axes differ. Data points are color-coded by model type: **Zero-shot (red)**, **Post-trained (blue)**, and **Others (green)**.

---

### Components/Axes

#### Left Panel: Gen. Quality vs. Task Success Rate

- **X-axis (Gen. Quality)**: Ranges from **0.325** to **0.475** (increasing rightward).

- **Y-axis (Task Success Rate)**: Ranges from **55%** to **65%** (increasing upward).

- **Legend**:

- **Red**: Zero-shot

- **Blue**: Post-trained

- **Green**: Others

#### Right Panel: Controllability vs. Task Success Rate

- **X-axis (Controllability)**: Ranges from **0.15** to **0.50** (increasing rightward).

- **Y-axis (Task Success Rate)**: Same as left panel (**55%** to **65%**).

- **Legend**: Same as left panel.

---

### Detailed Analysis

#### Left Panel: Gen. Quality vs. Task Success Rate

- **Zero-shot (Red)**:

- **Runway Gen4**: (0.475, 64%)

- **Wan2.2 A14B**: (0.45, 59%)

- **Cosmos-P2**: (0.475, 55%)

- **Post-trained (Blue)**:

- **Wan2.1†**: (0.400, 62%)

- **SVD†**: (0.375, 61%)

- **Cosmos-P2†**: (0.375, 60%)

- **Others (Green)**:

- **NWM**: (0.325, 57%)

- **Pathdreamer**: (0.35, 56%)

- **SE3DS**: (0.375, 56%)

- **LTXVideo**: (0.375, 57%)

- **Hunyuan**: (0.400, 58%)

- **Wan2.2 5B**: (0.400, 56%)

#### Right Panel: Controllability vs. Task Success Rate

- **Zero-shot (Red)**:

- **Runway Gen4**: (0.45, 64%)

- **Wan2.2 A14B**: (0.30, 59%)

- **Cosmos-P2**: (0.15, 55%)

- **Post-trained (Blue)**:

- **Wan2.1†**: (0.45, 62%)

- **SVD†**: (0.45, 61%)

- **Cosmos-P2†**: (0.45, 60%)

- **Others (Green)**:

- **Pathdreamer**: (0.30, 57%)

- **SE3DS**: (0.30, 57%)

- **NWM**: (0.30, 57%)

- **LTXVideo**: (0.30, 58%)

- **Hunyuan**: (0.30, 59%)

- **Wan2.2 5B**: (0.35, 56%)

---

### Key Observations

1. **High Gen. Quality, High Task Success Rate**:

- **Runway Gen4** (Zero-shot) achieves the highest Task Success Rate (**64%**) in both panels and shares the highest Gen. Quality (**0.475**) with **Cosmos-P2**.

- **Wan2.1†** (Post-trained) shows strong performance with Gen. Quality (**0.400**) and Task Success Rate (**62%**).

2. **Outliers**:

- **Cosmos-P2** (Zero-shot) has high Gen. Quality (**0.475**) but the lowest Task Success Rate (**55%**), so high visual quality alone does not translate into task success.

- The same model also has the lowest Controllability (**0.15**), which may explain its low Task Success Rate.

3. **Post-trained Models**:

- Post-trained models (e.g., **Wan2.1†**, **SVD†**) outperform every Zero-shot and Others model except **Runway Gen4** in both panels, suggesting that post-training improves performance.

4. **Controllability Trends**:

- Higher Controllability (closer to 0.50) correlates with higher Task Success Rate, especially for Post-trained models.

---

### Interpretation

- **Model Efficiency**: Post-trained models (blue) reach Task Success Rates of 60-62% in both panels, higher than every other model except Runway Gen4, implying that post-training enhances effectiveness.

- **Trade-offs**: Zero-shot models like **Runway Gen4** excel in Gen. Quality but may lack Controllability, while **Cosmos-P2** (Zero-shot) underperforms despite high Gen. Quality.

- **Outliers**: **Cosmos-P2** (Zero-shot) is a clear outlier, with the lowest Controllability and Task Success Rate, suggesting it is less optimized than the other models.

- **Correlation**: Both panels show a positive correlation between the x-axis metric and Task Success Rate, though Post-trained models break this trend in the left panel by achieving higher success rates at lower Gen. Quality.

This analysis highlights the importance of model training in balancing Gen. Quality, Controllability, and Task Success Rate, with Post-trained models leading in performance.
