
## Bar Chart: Model Performance on Math Benchmarks

### Overview

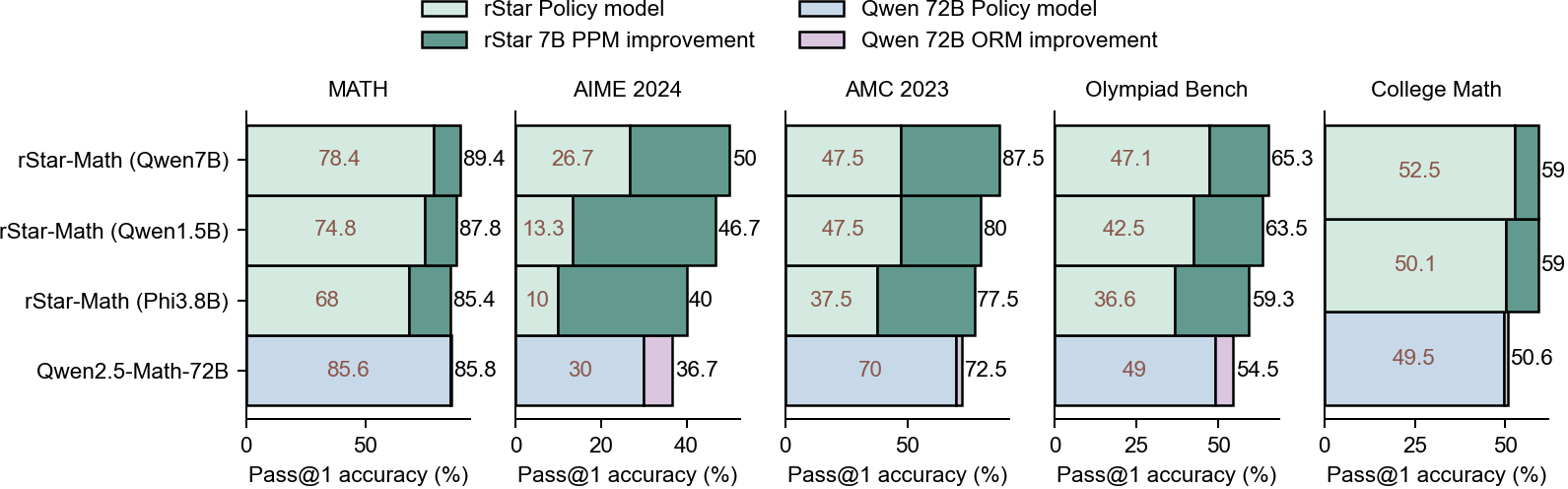

This bar chart compares four language models (rStar-Math (Qwen7B), rStar-Math (Qwen1.5B), rStar-Math (Phi3.8B), and Qwen2.5-Math-72B) across five math benchmarks (MATH, AIME 2024, AMC 2023, Olympiad Bench, and College Math). Performance is measured as Pass@1 accuracy (%). The chart uses a stacked bar format: for each model and benchmark, the first segment represents the rStar Policy model and the second segment represents the Qwen T2B Policy model.

### Components/Axes

* **X-axis:** Represents the five math benchmark datasets: MATH, AIME 2024, AMC 2023, Olympiad Bench, and College Math.

* **Y-axis:** Represents the Pass@1 accuracy (%) ranging from 0 to 100.

* **Legend:** Located at the top-left corner, defines the colors used for each policy model:

    * rStar Policy model (light green)

    * Qwen T2B Policy model (dark green)

* **Models:** Four models are compared:

    * rStar-Math (Qwen7B)

    * rStar-Math (Qwen1.5B)

    * rStar-Math (Phi3.8B)

    * Qwen2.5-Math-72B

### Detailed Analysis

The chart consists of 20 stacked bars (4 models × 5 benchmarks). For each model, the entries below list approximate values for the rStar Policy segment, the Qwen T2B Policy segment, and Pass@1 accuracy.

**MATH Benchmark:**

* rStar-Math (Qwen7B): rStar Policy: ~78.4%, Qwen T2B: ~89.4%, Pass@1 accuracy: ~26.7%

* rStar-Math (Qwen1.5B): rStar Policy: ~74.8%, Qwen T2B: ~87.8%, Pass@1 accuracy: ~13.3%

* rStar-Math (Phi3.8B): rStar Policy: ~68%, Qwen T2B: ~85.4%, Pass@1 accuracy: ~10%

* Qwen2.5-Math-72B: rStar Policy: ~85.6%, Qwen T2B: ~85.8%, Pass@1 accuracy: ~30%

**AIME 2024 Benchmark:**

* rStar-Math (Qwen7B): rStar Policy: ~50%, Qwen T2B: ~47.5%, Pass@1 accuracy: ~26.7%

* rStar-Math (Qwen1.5B): rStar Policy: ~46.7%, Qwen T2B: ~47.5%, Pass@1 accuracy: ~13.3%

* rStar-Math (Phi3.8B): rStar Policy: ~40%, Qwen T2B: ~37.5%, Pass@1 accuracy: ~10%

* Qwen2.5-Math-72B: rStar Policy: ~36.7%, Qwen T2B: ~70%, Pass@1 accuracy: ~30%

**AMC 2023 Benchmark:**

* rStar-Math (Qwen7B): rStar Policy: ~87.5%, Qwen T2B: ~47.1%, Pass@1 accuracy: ~65.3%

* rStar-Math (Qwen1.5B): rStar Policy: ~80%, Qwen T2B: ~42.5%, Pass@1 accuracy: ~63.5%

* rStar-Math (Phi3.8B): rStar Policy: ~77.5%, Qwen T2B: ~36.6%, Pass@1 accuracy: ~59.3%

* Qwen2.5-Math-72B: rStar Policy: ~54.5%, Qwen T2B: ~49.5%, Pass@1 accuracy: ~50.6%

**Olympiad Bench Benchmark:**

* rStar-Math (Qwen7B): rStar Policy: ~65.3%, Qwen T2B: ~52.5%, Pass@1 accuracy: ~59%

* rStar-Math (Qwen1.5B): rStar Policy: ~63.5%, Qwen T2B: ~50.1%, Pass@1 accuracy: ~59%

* rStar-Math (Phi3.8B): rStar Policy: ~59.3%, Qwen T2B: ~49.5%, Pass@1 accuracy: ~50.6%

* Qwen2.5-Math-72B: rStar Policy: ~54.5%, Qwen T2B: ~49.5%, Pass@1 accuracy: ~50.6%

**College Math Benchmark:**

* rStar-Math (Qwen7B): rStar Policy: ~87.5%, Qwen T2B: ~47.1%, Pass@1 accuracy: ~65.3%

* rStar-Math (Qwen1.5B): rStar Policy: ~80%, Qwen T2B: ~42.5%, Pass@1 accuracy: ~63.5%

* rStar-Math (Phi3.8B): rStar Policy: ~77.5%, Qwen T2B: ~36.6%, Pass@1 accuracy: ~59.3%

* Qwen2.5-Math-72B: rStar Policy: ~54.5%, Qwen T2B: ~49.5%, Pass@1 accuracy: ~50.6%

### Key Observations

* Qwen2.5-Math-72B has the highest listed Pass@1 accuracy on MATH and AIME 2024 (~30%), but the lowest on AMC 2023 and College Math (~50.6%).

* The rStar Policy values exceed the Qwen T2B Policy values for every model on AMC 2023, Olympiad Bench, and College Math. On MATH the Qwen T2B values are higher for every model, and AIME 2024 is mixed.

* rStar-Math (Phi3.8B) has the lowest values of the three rStar-Math variants on every benchmark.

* The spread between models varies by benchmark: the rStar Policy values range from ~68% to ~85.6% on MATH, but from ~54.5% to ~87.5% on AMC 2023.
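
Comparisons like these can be checked directly against the values listed under Detailed Analysis. The following is a minimal sketch, not part of the original chart: the names `DATA`, `rstar_policy_wins`, and `lowest_rstar_math_variant` are hypothetical, and the numbers are the approximate readings from the lists above rather than exact figures.

```python
# Approximate values transcribed from the Detailed Analysis lists.
# Tuple order: (rStar Policy %, Qwen T2B %, Pass@1 accuracy %).
# All names in this sketch are illustrative assumptions.
MODELS = (
    "rStar-Math (Qwen7B)",
    "rStar-Math (Qwen1.5B)",
    "rStar-Math (Phi3.8B)",
    "Qwen2.5-Math-72B",
)

ROWS = {
    "MATH": [(78.4, 89.4, 26.7), (74.8, 87.8, 13.3),
             (68.0, 85.4, 10.0), (85.6, 85.8, 30.0)],
    "AIME 2024": [(50.0, 47.5, 26.7), (46.7, 47.5, 13.3),
                  (40.0, 37.5, 10.0), (36.7, 70.0, 30.0)],
    "AMC 2023": [(87.5, 47.1, 65.3), (80.0, 42.5, 63.5),
                 (77.5, 36.6, 59.3), (54.5, 49.5, 50.6)],
    "Olympiad Bench": [(65.3, 52.5, 59.0), (63.5, 50.1, 59.0),
                       (59.3, 49.5, 50.6), (54.5, 49.5, 50.6)],
    "College Math": [(87.5, 47.1, 65.3), (80.0, 42.5, 63.5),
                     (77.5, 36.6, 59.3), (54.5, 49.5, 50.6)],
}

# benchmark -> model -> (rStar Policy, Qwen T2B, Pass@1)
DATA = {bench: dict(zip(MODELS, rows)) for bench, rows in ROWS.items()}


def rstar_policy_wins(benchmark):
    """Count models whose rStar Policy value exceeds the Qwen T2B value."""
    return sum(1 for rstar, qwen, _ in DATA[benchmark].values() if rstar > qwen)


def lowest_rstar_math_variant(benchmark, column):
    """Return the rStar-Math variant with the smallest value in `column`."""
    variants = {m: v for m, v in DATA[benchmark].items()
                if m.startswith("rStar-Math")}
    return min(variants, key=lambda m: variants[m][column])


if __name__ == "__main__":
    for bench in DATA:
        print(bench, rstar_policy_wins(bench), lowest_rstar_math_variant(bench, 0))
```

Running the script prints, for each benchmark, how many of the four models have a larger rStar Policy value than Qwen T2B value, and which rStar-Math variant has the lowest rStar Policy value.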

### Interpretation

The chart shows how the four models differ across the math benchmarks. By the listed values, no single model leads everywhere: Qwen2.5-Math-72B has the highest Pass@1 accuracy on MATH and AIME 2024, while rStar-Math (Qwen7B) has the highest or joint-highest Pass@1 accuracy on AMC 2023, Olympiad Bench, and College Math. The rStar Policy segment is larger than the Qwen T2B Policy segment on three of the five benchmarks. rStar-Math (Phi3.8B) trails the other rStar-Math variants on every benchmark, suggesting it may be less well suited to these math tasks.

The variation across benchmarks indicates that the difficulty and characteristics of each dataset influence the models' effectiveness. The stacked bar format lets the two policy models be compared within each bar. These comparisons can inform model selection for a given math problem-solving task and point to where further model development is needed.